mirror of

https://github.com/huggingface/trl.git

synced 2025-10-20 18:43:52 +08:00

Compare commits

209 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

| 6ff477e5be | |||

| 8e9cae8072 | |||

| 654543a8cf | |||

| c273b18c1c | |||

| 6c6ff24926 | |||

| 6ff0fac2c1 | |||

| 951ca1841f | |||

| cc1de9820a | |||

| a64a522fcc | |||

| 5b32372b71 | |||

| d759004e52 | |||

| cbc6c9bb3e | |||

| f3cd86578b | |||

| b763432eaf | |||

| 2bbd594ec5 | |||

| b89b712dbf | |||

| ec9e76623e | |||

| d192244f54 | |||

| 051d5a1f61 | |||

| 2068fdcd93 | |||

| 02f5c1d8ce | |||

| 7de7db6765 | |||

| 4e7d5b5abe | |||

| a90e13321b | |||

| 5b2aeca6c0 | |||

| 1f3314fd2f | |||

| 304ee70eef | |||

| 0a5aee7d99 | |||

| db592a2eb6 | |||

| 122edc8f5d | |||

| f91fb2bda2 | |||

| 01e4ad0009 | |||

| 1e56ff0f16 | |||

| c4ed3274be | |||

| 14b6bc6691 | |||

| eb4d2f381a | |||

| 78e08bd658 | |||

| 96d4854455 | |||

| 3ef21a24e7 | |||

| f7707fd4c6 | |||

| dd9b8f4189 | |||

| ddd318865b | |||

| 8aa12d3c95 | |||

| 95aea7c072 | |||

| eda1f36c57 | |||

| ac0d5b726d | |||

| 6826d592ae | |||

| c058ee6f05 | |||

| fbeb146eea | |||

| 98845b9282 | |||

| 9f6326e65a | |||

| 7dcc71b1a6 | |||

| 6b73adc900 | |||

| 249d3e3259 | |||

| ad8d50e30d | |||

| d608fea0d1 | |||

| 92b03f5fdc | |||

| 7877e92991 | |||

| 1d7e3c2ae2 | |||

| eb6aa20401 | |||

| b8f0c4cf12 | |||

| e11a45c5d8 | |||

| 08cfc4179b | |||

| d603e7c527 | |||

| 5d30cd4d30 | |||

| 46975236be | |||

| 9a8d52cc5a | |||

| 0a6c42c12c | |||

| 221be13d26 | |||

| a922af6927 | |||

| 42e7a0a824 | |||

| 15d52e759b | |||

| 24e914a0ab | |||

| 637612d95f | |||

| 35694baef2 | |||

| d2f27df50a | |||

| 5cee9a0478 | |||

| 3f7710aed7 | |||

| ca0af3944d | |||

| e4f9a483d9 | |||

| 80890b17be | |||

| cf9d2a7133 | |||

| c02ce6d3f5 | |||

| 9141aa42ba | |||

| 05723c0b88 | |||

| b87ec2d5a0 | |||

| 27df071ad8 | |||

| 67452ef213 | |||

| 22a90198e5 | |||

| 4f81e7736d | |||

| 14292b08af | |||

| 453c4eca14 | |||

| decc832d3e | |||

| 1111295776 | |||

| c04074e248 | |||

| d484dc2a93 | |||

| 34e6948d45 | |||

| 9f69f06a1c | |||

| 5bb46687c5 | |||

| 25d6700c5e | |||

| 4d31d0c4f8 | |||

| 0ff39d2a87 | |||

| b4899b29d2 | |||

| 6aae9e75f3 | |||

| 79b90e19ba | |||

| 7f636c9ed7 | |||

| 98d8cc509d | |||

| 9d09b3e107 | |||

| 336d63eb80 | |||

| 7fc970983c | |||

| d3bbee3ab8 | |||

| eb5465df7e | |||

| 1c272240ac | |||

| b095245830 | |||

| c115453fba | |||

| 16f214c58d | |||

| e9a437992e | |||

| c837fbe5b9 | |||

| 01c4a35928 | |||

| 1aca98fbcf | |||

| 029f961b7c | |||

| 8ec912ffa6 | |||

| f360c37466 | |||

| 217313014b | |||

| b946e875b1 | |||

| 6dd50b45d8 | |||

| 98120d6aeb | |||

| 3b2c820db6 | |||

| 25fd6f2313 | |||

| 3f1477cdc0 | |||

| 2cff1e4385 | |||

| d7d7902938 | |||

| 77b0cc1707 | |||

| 17f22c1c20 | |||

| e448bb69f0 | |||

| 9aa4e3ce2b | |||

| ca8a508913 | |||

| a00ab445ba | |||

| 431f0c9a2f | |||

| 64bc9bc9e6 | |||

| 5a1e1bf06e | |||

| e8dd8102d8 | |||

| 1b46c61d43 | |||

| 3b0a1b5f8c | |||

| 31658b4263 | |||

| f7227fb296 | |||

| b3c2e73e70 | |||

| d78d917880 | |||

| cdde7f71d7 | |||

| 51d5f08d88 | |||

| 8762507d3a | |||

| 1bd852aa8f | |||

| 170d58ffce | |||

| 84c9209037 | |||

| d0fe348a0a | |||

| 5857d0acc6 | |||

| fd50e063e1 | |||

| bcff7c2dab | |||

| 0e8d9f8504 | |||

| 7f297b38c6 | |||

| 84393f3b94 | |||

| 388bdc03ac | |||

| 5c7bfbc8d9 | |||

| 36b77ae81d | |||

| 2049d03e82 | |||

| 31b98aa5a6 | |||

| d06b131097 | |||

| f3230902b1 | |||

| bbc7eeb29c | |||

| 163dae5579 | |||

| 64c8db2f9a | |||

| 25d4d81801 | |||

| 685620ac6c | |||

| 2b531b9223 | |||

| 4f7f73dd09 | |||

| c60c41688e | |||

| cbb98dabb1 | |||

| a86eaab8e8 | |||

| aa9770c6bd | |||

| 0fe603eca1 | |||

| 843c14574f | |||

| 009b82412f | |||

| 82c8f20601 | |||

| b56e8b3277 | |||

| 0161a8e602 | |||

| 6e34c5932b | |||

| e1531aa526 | |||

| cb6c45474a | |||

| fe55b440e7 | |||

| 431456732c | |||

| 9679d87012 | |||

| 099f0bf42b | |||

| 33f88ead0b | |||

| 7705daa672 | |||

| fe49697e66 | |||

| d1ad5405cb | |||

| 1e88b84ab9 | |||

| c39207460f | |||

| 61af5f26b6 | |||

| 7a89a43c3f | |||

| fead2c8c77 | |||

| b4bb12992e | |||

| b21baddc5c | |||

| 216c119fa9 | |||

| a2747acc0f | |||

| b61a4b95a0 | |||

| 5c5d7687d8 | |||

| 096f5e9da5 | |||

| 2a0ed3a596 |

107

.github/workflows/benchmark.yml

vendored

Normal file

@ -0,0 +1,107 @@
name: "Benchmark on Comment"

# https://docs.github.com/en/actions/using-workflows/events-that-trigger-workflows
on:
  issue_comment:
    types: [created]

jobs:
  Benchmark:
    strategy:
      fail-fast: true
      matrix:
        python-version: [3.9]
        os: [self-hosted]

    name: Benchmark
    # Only run if it's a PR, the comment starts with /benchmark-trl-experiments, and the commenter is on the allow-list
    if: github.event.issue.pull_request && startsWith(github.event.comment.body, '/benchmark-trl-experiments') && contains(FromJSON('["vwxyzjn", "younesbelkada", "lvwerra", "lewtun"]'), github.actor)
    runs-on: ${{ matrix.os }}

    steps:
      - name: Get branch of PR
        uses: xt0rted/pull-request-comment-branch@v1
        id: comment-branch
      - name: Set latest commit status as pending
        uses: myrotvorets/set-commit-status-action@master
        with:
          sha: ${{ steps.comment-branch.outputs.head_sha }}
          token: ${{ secrets.GITHUB_TOKEN }}
          status: pending
      - name: Checkout `main` branch
        uses: actions/checkout@v3
      - name: Checkout PR branch
        run: gh pr checkout $PR_NUMBER
        env:
          GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
          PR_NUMBER: ${{ github.event.issue.number }}
      - name: Set up Python ${{ matrix.python-version }}
        uses: actions/setup-python@v4
        with:
          python-version: ${{ matrix.python-version }}
      # - name: Cleanup pip packages (specific to self-hosted runners)
      #   run: |
      #     echo PATH is $PATH
      #     echo PYTHONPATH is $PYTHONPATH
      #     echo which python is $(which python)
      #     echo which pip is $(which pip)

      #     pip_list=$(pip list --format=freeze | grep -v "^pip==" | grep -v "^setuptools==")
      #     if [ ! -z "$pip_list" ]; then
      #       echo "$pip_list" | xargs pip uninstall -y
      #     fi
      - name: Print python dependencies
        run: pip list --format=freeze
      - name: Install dependencies
        run: |
          pip install .[test,benchmark]

      - name: Login
        run: wandb login ${{ secrets.WANDB_API_KEY }} && huggingface-cli login --token ${{ secrets.HUGGING_FACE_HUB_TOKEN }}
      - name: Run benchmark
        env:
          GITHUB_CONTEXT: ${{ toJson(github) }}
          PERSONAL_ACCESS_TOKEN_GITHUB: ${{ secrets.PERSONAL_ACCESS_TOKEN_GITHUB }}
        run: |
          COMMENT="${{ github.event.comment.body }}"
          if [[ "$COMMENT" == *"/benchmark-trl-experiments benchmark/benchmark_level1.sh"* ]]; then
            echo "Running benchmark/benchmark_level1.sh"
            BENCHMARK_SCRIPT="benchmark/benchmark_level1.sh" BENCHMARK_PLOT_SCRIPT="benchmark/benchmark_level1_plot.sh" bash benchmark/benchmark_and_report.sh
          elif [[ "$COMMENT" == *"/benchmark-trl-experiments benchmark/benchmark_level2.sh"* ]]; then
            echo "Running benchmark/benchmark_level2.sh"
            BENCHMARK_SCRIPT="benchmark/benchmark_level2.sh" BENCHMARK_PLOT_SCRIPT="benchmark/benchmark_level2_plot.sh" bash benchmark/benchmark_and_report.sh
          elif [[ "$COMMENT" == *"/benchmark-trl-experiments benchmark/benchmark_level3.sh"* ]]; then
            echo "Running benchmark/benchmark_level3.sh"
            BENCHMARK_SCRIPT="benchmark/benchmark_level3.sh" BENCHMARK_PLOT_SCRIPT="benchmark/benchmark_level3_plot.sh" bash benchmark/benchmark_and_report.sh
          else
            echo "Invalid command in comment. Skipping execution."
          fi

      # send message to PR
      - name: Setup Node.js 16
        uses: actions/setup-node@v3
        with:
          node-version: 16
      - name: Add workflow result as comment on PR
        uses: actions/github-script@v6
        if: always()
        with:
          script: |
            const name = '${{ github.workflow }}';
            const url = '${{ github.server_url }}/${{ github.repository }}/actions/runs/${{ github.run_id }}';
            const success = '${{ job.status }}' === 'success';
            const body = `${name}: ${success ? 'succeeded ✅' : 'failed ❌'}\n${url}`;

            await github.rest.issues.createComment({
              issue_number: context.issue.number,
              owner: context.repo.owner,
              repo: context.repo.repo,
              body: body
            })
      - name: Set latest commit status as ${{ job.status }}
        uses: myrotvorets/set-commit-status-action@master
        if: always()
        with:
          sha: ${{ steps.comment-branch.outputs.head_sha }}
          token: ${{ secrets.GITHUB_TOKEN }}
          status: ${{ job.status }}

3

.github/workflows/build_documentation.yml

vendored

@ -13,7 +13,6 @@ jobs:

|

||||

with:

|

||||

commit_sha: ${{ github.sha }}

|

||||

package: trl

|

||||

repo_owner: lvwerra

|

||||

version_tag_suffix: ""

|

||||

secrets:

|

||||

token: ${{ secrets.HUGGINGFACE_PUSH }}

|

||||

hf_token: ${{ secrets.HF_DOC_BUILD_PUSH }}

|

||||

|

||||

1

.github/workflows/build_pr_documentation.yml

vendored

@ -14,5 +14,4 @@ jobs:

|

||||

commit_sha: ${{ github.event.pull_request.head.sha }}

|

||||

pr_number: ${{ github.event.number }}

|

||||

package: trl

|

||||

repo_owner: lvwerra

|

||||

version_tag_suffix: ""

|

||||

14

.github/workflows/delete_doc_comment.yml

vendored

@ -1,13 +1,13 @@

|

||||

name: Delete dev documentation

|

||||

name: Delete doc comment

|

||||

|

||||

on:

|

||||

pull_request:

|

||||

types: [ closed ]

|

||||

|

||||

workflow_run:

|

||||

workflows: ["Delete doc comment trigger"]

|

||||

types:

|

||||

- completed

|

||||

|

||||

jobs:

|

||||

delete:

|

||||

uses: huggingface/doc-builder/.github/workflows/delete_doc_comment.yml@main

|

||||

with:

|

||||

pr_number: ${{ github.event.number }}

|

||||

package: trl

|

||||

secrets:

|

||||

comment_bot_token: ${{ secrets.COMMENT_BOT_TOKEN }}

|

||||

12

.github/workflows/delete_doc_comment_trigger.yml

vendored

Normal file

@ -0,0 +1,12 @@
name: Delete doc comment trigger

on:
  pull_request:
    types: [ closed ]


jobs:
  delete:
    uses: huggingface/doc-builder/.github/workflows/delete_doc_comment_trigger.yml@main
    with:
      pr_number: ${{ github.event.number }}

27

.github/workflows/stale.yml

vendored

Normal file

@ -0,0 +1,27 @@
name: Stale Bot

on:
  schedule:
    - cron: "0 15 * * *"

jobs:
  close_stale_issues:
    name: Close Stale Issues
    if: github.repository == 'huggingface/trl'
    runs-on: ubuntu-latest
    env:
      GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
    steps:
    - uses: actions/checkout@v3

    - name: Setup Python
      uses: actions/setup-python@v4
      with:
        python-version: 3.8

    - name: Install requirements
      run: |
        pip install PyGithub
    - name: Close stale issues
      run: |
        python scripts/stale.py

34

.github/workflows/tests.yml

vendored

@ -7,33 +7,31 @@ on:

|

||||

branches: [ main ]

|

||||

|

||||

jobs:

|

||||

|

||||

check_code_quality:

|

||||

runs-on: ubuntu-latest

|

||||

strategy:

|

||||

matrix:

|

||||

python-version: [3.9]

|

||||

|

||||

steps:

|

||||

- uses: actions/checkout@v3

|

||||

- name: Set up Python

|

||||

uses: actions/setup-python@v4

|

||||

- uses: actions/checkout@v2

|

||||

with:

|

||||

python-version: "3.8"

|

||||

cache: "pip"

|

||||

cache-dependency-path: |

|

||||

setup.py

|

||||

requirements.txt

|

||||

- name: Install dependencies

|

||||

run: |

|

||||

python -m pip install --upgrade pip

|

||||

pip install .[dev]

|

||||

- name: Check quality

|

||||

run: |

|

||||

make quality

|

||||

fetch-depth: 0

|

||||

submodules: recursive

|

||||

- name: Set up Python ${{ matrix.python-version }}

|

||||

uses: actions/setup-python@v2

|

||||

with:

|

||||

python-version: ${{ matrix.python-version }}

|

||||

- uses: pre-commit/action@v2.0.3

|

||||

with:

|

||||

extra_args: --all-files

|

||||

|

||||

tests:

|

||||

needs: check_code_quality

|

||||

strategy:

|

||||

matrix:

|

||||

python-version: [3.7, 3.8, 3.9]

|

||||

os: ['ubuntu-latest', 'macos-latest', 'windows-latest']

|

||||

python-version: ['3.8', '3.9', '3.10']

|

||||

os: ['ubuntu-latest', 'windows-latest']

|

||||

runs-on: ${{ matrix.os }}

|

||||

steps:

|

||||

- uses: actions/checkout@v3

|

||||

|

||||

16

.github/workflows/upload_pr_documentation.yml

vendored

Normal file

@ -0,0 +1,16 @@
name: Upload PR Documentation

on:
  workflow_run:
    workflows: ["Build PR Documentation"]
    types:
      - completed

jobs:
  build:
    uses: huggingface/doc-builder/.github/workflows/upload_pr_documentation.yml@main
    with:
      package_name: trl
    secrets:
      hf_token: ${{ secrets.HF_DOC_BUILD_PUSH }}
      comment_bot_token: ${{ secrets.COMMENT_BOT_TOKEN }}

1

.gitignore

vendored

@ -1,3 +1,4 @@

|

||||

benchmark/trl

|

||||

*.bak

|

||||

.gitattributes

|

||||

.last_checked

|

||||

|

||||

42

.pre-commit-config.yaml

Normal file

@ -0,0 +1,42 @@
repos:
  - repo: https://github.com/PyCQA/isort
    rev: 5.12.0
    hooks:
      - id: isort
        args:
          - --profile=black
          - --skip-glob=wandb/**/*
          - --thirdparty=wandb
  - repo: https://github.com/myint/autoflake
    rev: v1.4
    hooks:
      - id: autoflake
        args:
          - -r
          - --exclude=wandb,__init__.py
          - --in-place
          - --remove-unused-variables
          - --remove-all-unused-imports
  - repo: https://github.com/python/black
    rev: 22.3.0
    hooks:
      - id: black
        args:
          - --line-length=119
          - --target-version=py38
          - --exclude=wandb
  - repo: https://github.com/pycqa/flake8
    rev: 6.0.0
    hooks:
      - id: flake8
        args:
          - --ignore=E203,E501,W503,E128
          - --max-line-length=119

  # - repo: https://github.com/codespell-project/codespell
  #   rev: v2.1.0
  #   hooks:
  #     - id: codespell
  #       args:
  #         - --ignore-words-list=nd,reacher,thist,ths,magent,ba
  #         - --skip=docs/css/termynal.css,docs/js/termynal.js

@ -17,7 +17,7 @@ authors:

|

||||

family-names: Thrush

|

||||

- given-names: Nathan

|

||||

family-names: Lambert

|

||||

repository-code: 'https://github.com/lvwerra/trl'

|

||||

repository-code: 'https://github.com/huggingface/trl'

|

||||

abstract: "With trl you can train transformer language models with Proximal Policy Optimization (PPO). The library is built on top of the transformers library by \U0001F917 Hugging Face. Therefore, pre-trained language models can be directly loaded via transformers. At this point, most decoder and encoder-decoder architectures are supported."

|

||||

keywords:

|

||||

- rlhf

|

||||

|

||||

@ -36,10 +36,15 @@ First you want to make sure that all the tests pass:

|

||||

make test

|

||||

```

|

||||

|

||||

Then before submitting your PR make sure the code quality follows the standards. You can run the following command to format and test:

|

||||

Then before submitting your PR make sure the code quality follows the standards. You can run the following command to format:

|

||||

|

||||

```bash

|

||||

make style && make quality

|

||||

make precommit

|

||||

```

|

||||

|

||||

Make sure to install `pre-commit` before running the command:

|

||||

```bash

|

||||

pip install pre-commit

|

||||

```

|

||||

|

||||

## Do you want to contribute to the documentation?

|

||||

|

||||

16

Makefile

@ -1,15 +1,15 @@

|

||||

.PHONY: quality style test

|

||||

.PHONY: test precommit benchmark_core benchmark_aux

|

||||

|

||||

check_dirs := examples tests trl

|

||||

|

||||

test:

|

||||

python -m pytest -n auto --dist=loadfile -s -v ./tests/

|

||||

|

||||

quality:

|

||||

black --check --line-length 119 --target-version py38 $(check_dirs)

|

||||

isort --check-only $(check_dirs)

|

||||

flake8 $(check_dirs)

|

||||

precommit:

|

||||

pre-commit run --all-files

|

||||

|

||||

style:

|

||||

black --line-length 119 --target-version py38 $(check_dirs)

|

||||

isort $(check_dirs)

|

||||

benchmark_core:

|

||||

bash ./benchmark/benchmark_core.sh

|

||||

|

||||

benchmark_aux:

|

||||

bash ./benchmark/benchmark_aux.sh

|

||||

|

||||

101

README.md

@ -3,18 +3,38 @@

|

||||

</div>

|

||||

|

||||

# TRL - Transformer Reinforcement Learning

|

||||

> Train transformer language models with reinforcement learning.

|

||||

> Full stack transformer language models with reinforcement learning.

|

||||

|

||||

<p align="center">

|

||||

<a href="https://github.com/huggingface/trl/blob/main/LICENSE">

|

||||

<img alt="License" src="https://img.shields.io/github/license/huggingface/trl.svg?color=blue">

|

||||

</a>

|

||||

<a href="https://huggingface.co/docs/trl/index">

|

||||

<img alt="Documentation" src="https://img.shields.io/website/http/huggingface.co/docs/trl/index.svg?down_color=red&down_message=offline&up_message=online">

|

||||

</a>

|

||||

<a href="https://github.com/huggingface/trl/releases">

|

||||

<img alt="GitHub release" src="https://img.shields.io/github/release/huggingface/trl.svg">

|

||||

</a>

|

||||

</p>

|

||||

|

||||

|

||||

## What is it?

|

||||

With `trl` you can train transformer language models with Proximal Policy Optimization (PPO). The library is built on top of the [`transformers`](https://github.com/huggingface/transformers) library by 🤗 Hugging Face. Therefore, pre-trained language models can be directly loaded via `transformers`. At this point most of decoder architectures and encoder-decoder architectures are supported.

|

||||

|

||||

<div style="text-align: center">

|

||||

<img src="https://huggingface.co/datasets/trl-internal-testing/example-images/resolve/main/images/TRL-readme.png">

|

||||

</div>

|

||||

|

||||

`trl` is a full-stack library that provides a set of tools to train transformer language models and stable diffusion models with reinforcement learning, from the Supervised Fine-tuning (SFT) step and the Reward Modeling (RM) step to the Proximal Policy Optimization (PPO) step. The library is built on top of the [`transformers`](https://github.com/huggingface/transformers) library by 🤗 Hugging Face, so pre-trained language models can be loaded directly via `transformers`. At this point, most decoder and encoder-decoder architectures are supported. Refer to the documentation or the `examples/` folder for example code snippets and how to run these tools.

|

||||

|

||||

**Highlights:**

|

||||

- `PPOTrainer`: A PPO trainer for language models that just needs (query, response, reward) triplets to optimise the language model.

|

||||

- `AutoModelForCausalLMWithValueHead` & `AutoModelForSeq2SeqLMWithValueHead`: A transformer model with an additional scalar output for each token which can be used as a value function in reinforcement learning.

|

||||

- Example: Train GPT2 to generate positive movie reviews with a BERT sentiment classifier.

|

||||

|

||||

## How it works

|

||||

- [`SFTTrainer`](https://huggingface.co/docs/trl/sft_trainer): A light and friendly wrapper around `transformers` Trainer to easily fine-tune language models or adapters on a custom dataset.

|

||||

- [`RewardTrainer`](https://huggingface.co/docs/trl/reward_trainer): A light wrapper around `transformers` Trainer to easily fine-tune language models for human preferences (Reward Modeling).

|

||||

- [`PPOTrainer`](https://huggingface.co/docs/trl/trainer#trl.PPOTrainer): A PPO trainer for language models that just needs (query, response, reward) triplets to optimise the language model.

|

||||

- [`AutoModelForCausalLMWithValueHead`](https://huggingface.co/docs/trl/models#trl.AutoModelForCausalLMWithValueHead) & [`AutoModelForSeq2SeqLMWithValueHead`](https://huggingface.co/docs/trl/models#trl.AutoModelForSeq2SeqLMWithValueHead): A transformer model with an additional scalar output for each token which can be used as a value function in reinforcement learning.

|

||||

- [Examples](https://github.com/huggingface/trl/tree/main/examples): Train GPT2 to generate positive movie reviews with a BERT sentiment classifier, full RLHF using adapters only, train GPT-j to be less toxic, [Stack-Llama example](https://huggingface.co/blog/stackllama), etc.

|

||||

|

||||

## How PPO works

|

||||

Fine-tuning a language model via PPO consists of roughly three steps:

|

||||

|

||||

1. **Rollout**: The language model generates a response or continuation based on a query, which could be the start of a sentence.

|

||||

@ -40,7 +60,7 @@ pip install trl

|

||||

### From source

|

||||

If you want to run the examples in the repository a few additional libraries are required. Clone the repository and install it with pip:

|

||||

```bash

|

||||

git clone https://github.com/lvwerra/trl.git

|

||||

git clone https://github.com/huggingface/trl.git

|

||||

cd trl/

|

||||

pip install .

|

||||

```

|

||||

@ -52,8 +72,59 @@ pip install -e .

|

||||

|

||||

## How to use

|

||||

|

||||

### Example

|

||||

This is a basic example on how to use the library. Based on a query the language model creates a response which is then evaluated. The evaluation could be a human in the loop or another model's output.

|

||||

### `SFTTrainer`

|

||||

|

||||

This is a basic example of how to use the `SFTTrainer` from the library. The `SFTTrainer` is a light wrapper around the `transformers` Trainer that makes it easy to fine-tune language models or adapters on a custom dataset.

|

||||

|

||||

```python

|

||||

# imports

|

||||

from datasets import load_dataset

|

||||

from trl import SFTTrainer

|

||||

|

||||

# get dataset

|

||||

dataset = load_dataset("imdb", split="train")

|

||||

|

||||

# get trainer

|

||||

trainer = SFTTrainer(

|

||||

"facebook/opt-350m",

|

||||

train_dataset=dataset,

|

||||

dataset_text_field="text",

|

||||

max_seq_length=512,

|

||||

)

|

||||

|

||||

# train

|

||||

trainer.train()

|

||||

```

|

||||

|

||||

### `RewardTrainer`

|

||||

|

||||

This is a basic example of how to use the `RewardTrainer` from the library. The `RewardTrainer` is a wrapper around the `transformers` Trainer that makes it easy to fine-tune reward models or adapters on a custom preference dataset.

|

||||

|

||||

```python

|

||||

# imports

|

||||

from transformers import AutoModelForSequenceClassification, AutoTokenizer

|

||||

from trl import RewardTrainer

|

||||

|

||||

# load model and dataset - dataset needs to be in a specific format

|

||||

model = AutoModelForSequenceClassification.from_pretrained("gpt2", num_labels=1)

|

||||

tokenizer = AutoTokenizer.from_pretrained("gpt2")

|

||||

|

||||

...

|

||||

|

||||

# load trainer

|

||||

trainer = RewardTrainer(

|

||||

model=model,

|

||||

tokenizer=tokenizer,

|

||||

train_dataset=dataset,

|

||||

)

|

||||

|

||||

# train

|

||||

trainer.train()

|

||||

```
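As a rough illustration of what training on a preference dataset optimises: reward models are commonly trained with a pairwise logistic loss that pushes the score of the preferred response above the score of the rejected one. The sketch below uses plain Python and is an assumption for illustration only; it is not taken from the `RewardTrainer` source, and the function name `pairwise_preference_loss` is hypothetical.

```python
import math


def pairwise_preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Return -log(sigmoid(reward_chosen - reward_rejected)).

    Hypothetical helper for illustration; not part of trl.
    """
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(m)) equals log(1 + exp(-m)); branch on the sign of the
    # margin so that exp() never receives a large positive argument.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


if __name__ == "__main__":
    # The loss is small when the chosen response scores higher, and grows
    # as the rejected response overtakes it.
    print(pairwise_preference_loss(2.0, 0.0))
    print(pairwise_preference_loss(0.0, 2.0))
```

With equal scores the loss is `log(2)`, so a freshly initialised reward model should start near that value.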

|

||||

|

||||

### `PPOTrainer`

|

||||

|

||||

This is a basic example of how to use the `PPOTrainer` from the library. Based on a query, the language model creates a response, which is then evaluated. The evaluation could come from a human in the loop or from another model's output.

|

||||

|

||||

```python

|

||||

# imports

|

||||

@ -91,14 +162,6 @@ reward = [torch.tensor(1.0)]

|

||||

train_stats = ppo_trainer.step([query_tensor[0]], [response_tensor[0]], reward)

|

||||

```
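To give some intuition for what a PPO optimisation step does with each reward, here is a minimal sketch of the standard clipped surrogate objective for a single sample. It is a simplification under stated assumptions, not the `PPOTrainer` implementation: the function name `ppo_clipped_objective` and the default `clip_range=0.2` are illustrative, and the real trainer works on batched token-level tensors.

```python
def ppo_clipped_objective(ratio: float, advantage: float, clip_range: float = 0.2) -> float:
    """Clipped PPO surrogate for one sample (hypothetical helper, not trl API).

    ratio:     new-policy probability divided by old-policy probability
    advantage: estimated advantage of the sampled action
    """
    # Keep the probability ratio inside [1 - clip_range, 1 + clip_range].
    clipped_ratio = max(1.0 - clip_range, min(ratio, 1.0 + clip_range))
    # Taking the minimum makes the objective pessimistic: the policy gains
    # nothing from moving the ratio beyond the clipping interval.
    return min(ratio * advantage, clipped_ratio * advantage)


if __name__ == "__main__":
    # A large ratio with a positive advantage is capped by the clip.
    print(ppo_clipped_objective(1.5, 1.0))
    # A large ratio with a negative advantage is not capped, so it is penalised in full.
    print(ppo_clipped_objective(1.5, -1.0))
```

The objective is maximised during training; the clipping keeps each update close to the policy that generated the responses.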

|

||||

|

||||

### Advanced example: IMDB sentiment

|

||||

For a detailed example check out the example python script `examples/sentiment/scripts/gpt2-sentiment.py`, where GPT2 is fine-tuned to generate positive movie reviews. An few examples from the language models before and after optimisation are given below:

|

||||

|

||||

<div style="text-align: center">

|

||||

<img src="https://huggingface.co/datasets/trl-internal-testing/example-images/resolve/main/images/table_imdb_preview.png" width="800">

|

||||

<p style="text-align: center;"> <b>Figure:</b> A few review continuations before and after optimisation. </p>

|

||||

</div>

|

||||

|

||||

## References

|

||||

|

||||

### Proximal Policy Optimisation

|

||||

@ -111,11 +174,11 @@ The language models utilize the `transformers` library by 🤗 Hugging Face.

|

||||

|

||||

```bibtex

|

||||

@misc{vonwerra2022trl,

|

||||

author = {Leandro von Werra and Younes Belkada and Lewis Tunstall and Edward Beeching and Tristan Thrush and Nathan Lambert},

|

||||

author = {Leandro von Werra and Younes Belkada and Lewis Tunstall and Edward Beeching and Tristan Thrush and Nathan Lambert and Shengyi Huang},

|

||||

title = {TRL: Transformer Reinforcement Learning},

|

||||

year = {2020},

|

||||

publisher = {GitHub},

|

||||

journal = {GitHub repository},

|

||||

howpublished = {\url{https://github.com/lvwerra/trl}}

|

||||

howpublished = {\url{https://github.com/huggingface/trl}}

|

||||

}

|

||||

```

|

||||

|

||||

150

benchmark/benchmark.py

Normal file

@ -0,0 +1,150 @@
import argparse
import math
import os
import shlex
import subprocess
import uuid
from distutils.util import strtobool

import requests


def parse_args():
    # fmt: off
    parser = argparse.ArgumentParser()
    parser.add_argument("--command", type=str, default="",
        help="the command to run")
    parser.add_argument("--num-seeds", type=int, default=3,
        help="the number of random seeds")
    parser.add_argument("--start-seed", type=int, default=1,
        help="the number of the starting seed")
    parser.add_argument("--workers", type=int, default=0,
        help="the number of workers to run benchmark experiments")
    parser.add_argument("--auto-tag", type=lambda x: bool(strtobool(x)), default=True, nargs="?", const=True,
        help="if toggled, the runs will be tagged with git tags, commit, and pull request number if possible")
    parser.add_argument("--slurm-template-path", type=str, default=None,
        help="the path to the slurm template file (see docs for more details)")
    parser.add_argument("--slurm-gpus-per-task", type=int, default=1,
        help="the number of gpus per task to use for slurm jobs")
    parser.add_argument("--slurm-total-cpus", type=int, default=50,
        help="the total number of cpus to use for slurm jobs")
    parser.add_argument("--slurm-ntasks", type=int, default=1,
        help="the number of tasks to use for slurm jobs")
    parser.add_argument("--slurm-nodes", type=int, default=None,
        help="the number of nodes to use for slurm jobs")
    args = parser.parse_args()
    # fmt: on
    return args


def run_experiment(command: str):
    command_list = shlex.split(command)
    print(f"running {command}")

    # Use subprocess.PIPE to capture the output
    fd = subprocess.Popen(command_list, stdout=subprocess.PIPE, stderr=subprocess.PIPE)
    output, errors = fd.communicate()

    return_code = fd.returncode
    assert return_code == 0, f"Command failed with error: {errors.decode('utf-8')}"

    # Convert bytes to string and strip leading/trailing whitespaces
    return output.decode("utf-8").strip()


def autotag() -> str:
    wandb_tag = ""
    print("autotag feature is enabled")
    git_tag = ""
    try:
        git_tag = subprocess.check_output(["git", "describe", "--tags"]).decode("ascii").strip()
        print(f"identified git tag: {git_tag}")
    except subprocess.CalledProcessError as e:
        print(e)
    if len(git_tag) == 0:
        try:
            count = int(subprocess.check_output(["git", "rev-list", "--count", "HEAD"]).decode("ascii").strip())
            hash = subprocess.check_output(["git", "rev-parse", "--short", "HEAD"]).decode("ascii").strip()
            git_tag = f"no-tag-{count}-g{hash}"
            print(f"identified git tag: {git_tag}")
        except subprocess.CalledProcessError as e:
            print(e)
    wandb_tag = f"{git_tag}"

    git_commit = subprocess.check_output(["git", "rev-parse", "--verify", "HEAD"]).decode("ascii").strip()
    try:
        # try finding the pull request number on github
        prs = requests.get(f"https://api.github.com/search/issues?q=repo:huggingface/trl+is:pr+{git_commit}")
        if prs.status_code == 200:
            prs = prs.json()
            if len(prs["items"]) > 0:
                pr = prs["items"][0]
                pr_number = pr["number"]
                wandb_tag += f",pr-{pr_number}"
                print(f"identified github pull request: {pr_number}")
    except Exception as e:
        print(e)

    return wandb_tag


if __name__ == "__main__":
    args = parse_args()
    if args.auto_tag:
        existing_wandb_tag = os.environ.get("WANDB_TAGS", "")
        wandb_tag = autotag()
        if len(wandb_tag) > 0:
            if len(existing_wandb_tag) > 0:
                os.environ["WANDB_TAGS"] = ",".join([existing_wandb_tag, wandb_tag])
            else:
                os.environ["WANDB_TAGS"] = wandb_tag
    print("WANDB_TAGS: ", os.environ.get("WANDB_TAGS", ""))
    commands = []
    for seed in range(0, args.num_seeds):
        commands += [" ".join([args.command, "--seed", str(args.start_seed + seed)])]

    print("======= commands to run:")
    for command in commands:
        print(command)

    if args.workers > 0 and args.slurm_template_path is None:
        from concurrent.futures import ThreadPoolExecutor

        executor = ThreadPoolExecutor(max_workers=args.workers, thread_name_prefix="cleanrl-benchmark-worker-")
        for command in commands:
            executor.submit(run_experiment, command)
        executor.shutdown(wait=True)
    else:
        print("not running the experiments because --workers is set to 0; just printing the commands to run")

    # SLURM logic
    if args.slurm_template_path is not None:
        if not os.path.exists("slurm"):
            os.makedirs("slurm")
        if not os.path.exists("slurm/logs"):
            os.makedirs("slurm/logs")
        print("======= slurm commands to run:")
        with open(args.slurm_template_path) as f:
            slurm_template = f.read()
        slurm_template = slurm_template.replace("{{array}}", f"0-{len(commands) - 1}%{args.workers}")
        slurm_template = slurm_template.replace(
            "{{seeds}}", f"({' '.join([str(args.start_seed + int(seed)) for seed in range(args.num_seeds)])})"
        )
        slurm_template = slurm_template.replace("{{len_seeds}}", f"{args.num_seeds}")
        slurm_template = slurm_template.replace("{{command}}", args.command)
        slurm_template = slurm_template.replace("{{gpus_per_task}}", f"{args.slurm_gpus_per_task}")
        total_gpus = args.slurm_gpus_per_task * args.slurm_ntasks
        slurm_cpus_per_gpu = math.ceil(args.slurm_total_cpus / total_gpus)
        slurm_template = slurm_template.replace("{{cpus_per_gpu}}", f"{slurm_cpus_per_gpu}")
        slurm_template = slurm_template.replace("{{ntasks}}", f"{args.slurm_ntasks}")
        if args.slurm_nodes is not None:
            slurm_template = slurm_template.replace("{{nodes}}", f"#SBATCH --nodes={args.slurm_nodes}")
        else:
            slurm_template = slurm_template.replace("{{nodes}}", "")
        filename = str(uuid.uuid4())
        open(os.path.join("slurm", f"{filename}.slurm"), "w").write(slurm_template)
        slurm_path = os.path.join("slurm", f"{filename}.slurm")
        print(f"saving command in {slurm_path}")
        if args.workers > 0:
            job_id = run_experiment(f"sbatch --parsable {slurm_path}")

|

||||

print(f"Job ID: {job_id}")

|

||||

41

benchmark/benchmark_and_report.sh

Normal file

@ -0,0 +1,41 @@

|

||||

#### Step 1: create a work directory:

|

||||

# this is necessary because another github action job will remove

|

||||

# the entire directory, which slurm depends on.

|

||||

# https://stackoverflow.com/questions/4632028/how-to-create-a-temporary-directory

|

||||

MY_SLURM_TMP_DIR=/fsx/costa/slurm_tmpdir

|

||||

mkdir -p $MY_SLURM_TMP_DIR

|

||||

WORK_DIR=`mktemp -d -p "$MY_SLURM_TMP_DIR"`

|

||||

cp -r "$PWD" "$WORK_DIR"

|

||||

cd "$WORK_DIR/$(basename "$PWD")"

|

||||

echo WORK_DIR: $WORK_DIR

|

||||

|

||||

#### Step 2: actual work starts:

|

||||

echo PATH is $PATH

|

||||

echo PYTHONPATH is $PYTHONPATH

|

||||

echo which python is $(which python)

|

||||

|

||||

export WANDB_ENTITY=huggingface

|

||||

bash $BENCHMARK_SCRIPT > output.txt

|

||||

|

||||

# Extract Job IDs into an array

|

||||

job_ids=($(grep "Job ID:" output.txt | awk '{print $3}'))

|

||||

|

||||

# Extract WANDB_TAGS into an array

|

||||

WANDB_TAGS=($(grep "WANDB_TAGS:" output.txt | awk '{print $2}'))

|

||||

WANDB_TAGS=($(echo $WANDB_TAGS | tr "," "\n"))

|

||||

|

||||

# Print to verify

|

||||

echo "Job IDs: ${job_ids[@]}"

|

||||

echo "WANDB_TAGS: ${WANDB_TAGS[@]}"

|

||||

|

||||

TAGS_STRING="?tag=${WANDB_TAGS[0]}"

|

||||

FOLDER_STRING="${WANDB_TAGS[0]}"

|

||||

for tag in "${WANDB_TAGS[@]:1}"; do

|

||||

TAGS_STRING+="&tag=$tag"

|

||||

FOLDER_STRING+="_$tag"

|

||||

done

|

||||

|

||||

echo "TAGS_STRING: $TAGS_STRING"

|

||||

echo "FOLDER_STRING: $FOLDER_STRING"

|

||||

|

||||

TAGS_STRING=$TAGS_STRING FOLDER_STRING=$FOLDER_STRING BENCHMARK_PLOT_SCRIPT=$BENCHMARK_PLOT_SCRIPT sbatch --dependency=afterany:$job_ids benchmark/post_github_comment.sbatch
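The tag handling in the script above can be mirrored in a few lines of Python, which may make the resulting strings easier to see. This is only a sketch: the function name `build_tag_strings` and the example tag value are made up for illustration and are not part of the repository.

```python
def build_tag_strings(wandb_tags):
    """Turn a comma-separated WANDB_TAGS value into the two strings the script builds."""
    # Split on commas, as the `tr "," "\n"` step does, and drop empty entries.
    tags = [tag for tag in wandb_tags.split(",") if tag]
    # "?tag=<first>&tag=<second>..." is appended to the plotting filters.
    tags_string = "?tag=" + "&tag=".join(tags)
    # "<first>_<second>..." names the output folder.
    folder_string = "_".join(tags)
    return tags_string, folder_string


# Hypothetical tag value, shaped like the output of the auto-tagging step.
tags_string, folder_string = build_tag_strings("no-tag-1-gabc1234,pr-1")
print(tags_string)
print(folder_string)
```

With the hypothetical input above, the first string joins the tags as repeated `tag=` query parameters and the second joins them with underscores.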

|

||||

11

benchmark/benchmark_level1.sh

Normal file

@ -0,0 +1,11 @@

|

||||

# hello world experiment

|

||||

python benchmark/benchmark.py \

|

||||

--command "python examples/scripts/ppo.py --ppo_config.log_with wandb" \

|

||||

--num-seeds 3 \

|

||||

--start-seed 1 \

|

||||

--workers 10 \

|

||||

--slurm-nodes 1 \

|

||||

--slurm-gpus-per-task 1 \

|

||||

--slurm-ntasks 1 \

|

||||

--slurm-total-cpus 12 \

|

||||

--slurm-template-path benchmark/trl.slurm_template

|

||||

20

benchmark/benchmark_level1_plot.sh

Normal file

@ -0,0 +1,20 @@

|

||||

# pip install openrlbenchmark==0.2.1a5

|

||||

# see https://github.com/openrlbenchmark/openrlbenchmark#get-started for documentation

|

||||

echo "we deal with $TAGS_STRING"

|

||||

|

||||

python -m openrlbenchmark.rlops_multi_metrics \

|

||||

--filters '?we=huggingface&wpn=trl&xaxis=_step&ceik=trl_ppo_trainer_config.value.reward_model&cen=trl_ppo_trainer_config.value.exp_name&metrics=env/reward_mean&metrics=objective/kl' \

|

||||

"ppo$TAGS_STRING" \

|

||||

--env-ids sentiment-analysis:lvwerra/distilbert-imdb \

|

||||

--no-check-empty-runs \

|

||||

--pc.ncols 2 \

|

||||

--pc.ncols-legend 1 \

|

||||

--output-filename benchmark/trl/$FOLDER_STRING/hello_world \

|

||||

--scan-history

|

||||

|

||||

python benchmark/upload_benchmark.py \

|

||||

--folder_path="benchmark/trl/$FOLDER_STRING" \

|

||||

--path_in_repo="images/benchmark/$FOLDER_STRING" \

|

||||

--repo_id="trl-internal-testing/example-images" \

|

||||

--repo_type="dataset"

|

||||

|

||||

23

benchmark/benchmark_level2.sh

Normal file

@ -0,0 +1,23 @@

|

||||

# compound experiments: gpt2xl + grad_accu

|

||||

python benchmark/benchmark.py \

|

||||

--command "python examples/scripts/ppo.py --ppo_config.exp_name ppo_gpt2xl_grad_accu --ppo_config.model_name gpt2-xl --ppo_config.mini_batch_size 16 --ppo_config.gradient_accumulation_steps 8 --ppo_config.log_with wandb" \

|

||||

--num-seeds 3 \

|

||||

--start-seed 1 \

|

||||

--workers 10 \

|

||||

--slurm-nodes 1 \

|

||||

--slurm-gpus-per-task 1 \

|

||||

--slurm-ntasks 1 \

|

||||

--slurm-total-cpus 12 \

|

||||

--slurm-template-path benchmark/trl.slurm_template

|

||||

|

||||

# compound experiments: Cerebras-GPT-6.7B + deepspeed zero2 + grad_accu

|

||||

python benchmark/benchmark.py \

|

||||

--command "accelerate launch --config_file examples/accelerate_configs/deepspeed_zero2.yaml examples/scripts/ppo.py --ppo_config.exp_name ppo_Cerebras-GPT-6.7B_grad_accu_deepspeed_stage2 --ppo_config.batch_size 32 --ppo_config.mini_batch_size 32 --ppo_config.log_with wandb --ppo_config.model_name cerebras/Cerebras-GPT-6.7B --ppo_config.reward_model sentiment-analysis:cerebras/Cerebras-GPT-6.7B" \

|

||||

--num-seeds 3 \

|

||||

--start-seed 1 \

|

||||

--workers 10 \

|

||||

--slurm-nodes 1 \

|

||||

--slurm-gpus-per-task 8 \

|

||||

--slurm-ntasks 1 \

|

||||

--slurm-total-cpus 90 \

|

||||

--slurm-template-path benchmark/trl.slurm_template

|

||||

31

benchmark/benchmark_level2_plot.sh

Normal file

@ -0,0 +1,31 @@

|

||||

# pip install openrlbenchmark==0.2.1a5

|

||||

# see https://github.com/openrlbenchmark/openrlbenchmark#get-started for documentation

|

||||

echo "we deal with $TAGS_STRING"

|

||||

|

||||

python -m openrlbenchmark.rlops_multi_metrics \

|

||||

--filters '?we=huggingface&wpn=trl&xaxis=_step&ceik=trl_ppo_trainer_config.value.reward_model&cen=trl_ppo_trainer_config.value.exp_name&metrics=env/reward_mean&metrics=objective/kl' \

|

||||

"ppo$TAGS_STRING" \

|

||||

"ppo_gpt2xl_grad_accu$TAGS_STRING" \

|

||||

--env-ids sentiment-analysis:lvwerra/distilbert-imdb \

|

||||

--no-check-empty-runs \

|

||||

--pc.ncols 2 \

|

||||

--pc.ncols-legend 1 \

|

||||

--output-filename benchmark/trl/$FOLDER_STRING/different_models \

|

||||

--scan-history

|

||||

|

||||

python -m openrlbenchmark.rlops_multi_metrics \

|

||||

--filters '?we=huggingface&wpn=trl&xaxis=_step&ceik=trl_ppo_trainer_config.value.reward_model&cen=trl_ppo_trainer_config.value.exp_name&metrics=env/reward_mean&metrics=objective/kl' \

|

||||

"ppo_Cerebras-GPT-6.7B_grad_accu_deepspeed_stage2$TAGS_STRING" \

|

||||

--env-ids sentiment-analysis:cerebras/Cerebras-GPT-6.7B \

|

||||

--no-check-empty-runs \

|

||||

--pc.ncols 2 \

|

||||

--pc.ncols-legend 1 \

|

||||

--output-filename benchmark/trl/$FOLDER_STRING/deepspeed \

|

||||

--scan-history

|

||||

|

||||

python benchmark/upload_benchmark.py \

|

||||

--folder_path="benchmark/trl/$FOLDER_STRING" \

|

||||

--path_in_repo="images/benchmark/$FOLDER_STRING" \

|

||||

--repo_id="trl-internal-testing/example-images" \

|

||||

--repo_type="dataset"

|

||||

|

||||

46

benchmark/benchmark_level3.sh

Normal file

@ -0,0 +1,46 @@

|

||||

## w/ and w/o gradient accumulation

|

||||

python benchmark/benchmark.py \

|

||||

--command "python examples/scripts/ppo.py --ppo_config.exp_name ppo_step_grad_accu --ppo_config.mini_batch_size 1 --ppo_config.gradient_accumulation_steps 128 --ppo_config.log_with wandb" \

|

||||

--num-seeds 3 \

|

||||

--start-seed 1 \

|

||||

--workers 10 \

|

||||

--slurm-nodes 1 \

|

||||

--slurm-gpus-per-task 1 \

|

||||

--slurm-ntasks 1 \

|

||||

--slurm-total-cpus 12 \

|

||||

--slurm-template-path benchmark/trl.slurm_template

|

||||

|

||||

## w/ different models (gpt2, gpt2-xl, falcon, llama2)

|

||||

python benchmark/benchmark.py \

|

||||

--command "python examples/scripts/ppo.py --ppo_config.exp_name ppo_gpt2 --ppo_config.log_with wandb" \

|

||||

--num-seeds 3 \

|

||||

--start-seed 1 \

|

||||

--workers 10 \

|

||||

--slurm-nodes 1 \

|

||||

--slurm-gpus-per-task 1 \

|

||||

--slurm-ntasks 1 \

|

||||

--slurm-total-cpus 12 \

|

||||

--slurm-template-path benchmark/trl.slurm_template

|

||||

python benchmark/benchmark.py \

|

||||

--command "python examples/scripts/ppo.py --ppo_config.exp_name ppo_falcon_rw_1b --ppo_config.model_name tiiuae/falcon-rw-1b --ppo_config.log_with wandb" \

|

||||

--num-seeds 3 \

|

||||

--start-seed 1 \

|

||||

--workers 10 \

|

||||

--slurm-nodes 1 \

|

||||

--slurm-gpus-per-task 1 \

|

||||

--slurm-ntasks 1 \

|

||||

--slurm-total-cpus 12 \

|

||||

--slurm-template-path benchmark/trl.slurm_template

|

||||

|

||||

|

||||

## w/ and w/o PEFT

|

||||

python benchmark/benchmark.py \

|

||||

--command "python examples/scripts/ppo.py --ppo_config.exp_name ppo_peft --use_peft --ppo_config.log_with wandb" \

|

||||

--num-seeds 3 \

|

||||

--start-seed 1 \

|

||||

--workers 10 \

|

||||

--slurm-nodes 1 \

|

||||

--slurm-gpus-per-task 1 \

|

||||

--slurm-ntasks 1 \

|

||||

--slurm-total-cpus 12 \

|

||||

--slurm-template-path benchmark/trl.slurm_template

|

||||

56

benchmark/plot.sh

Normal file

@ -0,0 +1,56 @@

|

||||

# pip install openrlbenchmark==0.2.1a5

|

||||

# see https://github.com/openrlbenchmark/openrlbenchmark#get-started for documentation

|

||||

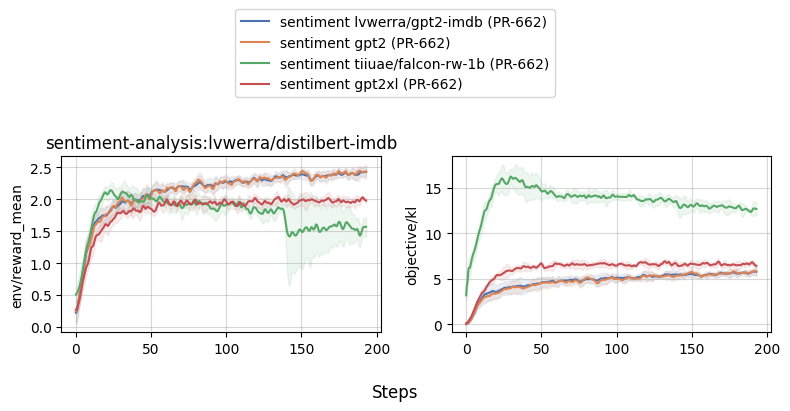

BASELINE_PR_TAG=v0.4.7-55-g110e672

|

||||

BASELINE_PR_NAME=PR-662

|

||||

|

||||

python -m openrlbenchmark.rlops_multi_metrics \

|

||||

--filters '?we=huggingface&wpn=trl&xaxis=_step&ceik=trl_ppo_trainer_config.value.reward_model&cen=trl_ppo_trainer_config.value.exp_name&metrics=env/reward_mean&metrics=objective/kl' \

|

||||

"sentiment_tuning?tag=$BASELINE_PR_TAG&cl=sentiment lvwerra/gpt2-imdb ($BASELINE_PR_NAME)" \

|

||||

--env-ids sentiment-analysis:lvwerra/distilbert-imdb \

|

||||

--no-check-empty-runs \

|

||||

--pc.ncols 2 \

|

||||

--pc.ncols-legend 1 \

|

||||

--output-filename benchmark/trl/$BASELINE_PR_TAG/sentiment \

|

||||

--scan-history

|

||||

|

||||

python -m openrlbenchmark.rlops_multi_metrics \

|

||||

--filters '?we=huggingface&wpn=trl&xaxis=_step&ceik=trl_ppo_trainer_config.value.reward_model&cen=trl_ppo_trainer_config.value.exp_name&metrics=env/reward_mean&metrics=objective/kl' \

|

||||

"sentiment_tuning?tag=$BASELINE_PR_TAG&cl=sentiment lvwerra/gpt2-imdb ($BASELINE_PR_NAME)" \

|

||||

"sentiment_tuning_step_grad_accu?tag=$BASELINE_PR_TAG&cl=sentiment lvwerra/gpt2-imdb gradient accumulation ($BASELINE_PR_NAME)" \

|

||||

--env-ids sentiment-analysis:lvwerra/distilbert-imdb \

|

||||

--no-check-empty-runs \

|

||||

--pc.ncols 2 \

|

||||

--pc.ncols-legend 1 \

|

||||

--output-filename benchmark/trl/$BASELINE_PR_TAG/gradient_accu \

|

||||

--scan-history

|

||||

|

||||

python -m openrlbenchmark.rlops_multi_metrics \

|

||||

--filters '?we=huggingface&wpn=trl&xaxis=_step&ceik=trl_ppo_trainer_config.value.reward_model&cen=trl_ppo_trainer_config.value.exp_name&metrics=env/reward_mean&metrics=objective/kl' \

|

||||

"sentiment_tuning?tag=$BASELINE_PR_TAG&cl=sentiment lvwerra/gpt2-imdb ($BASELINE_PR_NAME)" \

|

||||

"sentiment_tuning_gpt2?tag=$BASELINE_PR_TAG&cl=sentiment gpt2 ($BASELINE_PR_NAME)" \

|

||||

"sentiment_tuning_falcon_rw_1b?tag=$BASELINE_PR_TAG&cl=sentiment tiiuae/falcon-rw-1b ($BASELINE_PR_NAME)" \

|

||||

"sentiment_tuning_gpt2xl_grad_accu?tag=$BASELINE_PR_TAG&cl=sentiment gpt2xl ($BASELINE_PR_NAME)" \

|

||||

--env-ids sentiment-analysis:lvwerra/distilbert-imdb \

|

||||

--no-check-empty-runs \

|

||||

--pc.ncols 2 \

|

||||

--pc.ncols-legend 1 \

|

||||

--output-filename benchmark/trl/$BASELINE_PR_TAG/different_models \

|

||||

--scan-history

|

||||

|

||||

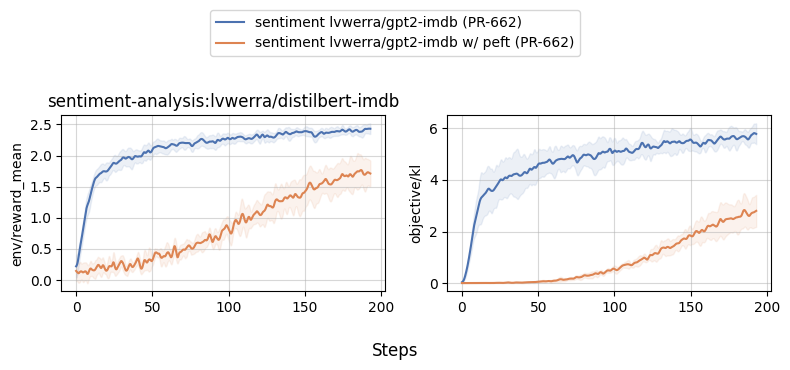

python -m openrlbenchmark.rlops_multi_metrics \

|

||||

--filters '?we=huggingface&wpn=trl&xaxis=_step&ceik=trl_ppo_trainer_config.value.reward_model&cen=trl_ppo_trainer_config.value.exp_name&metrics=env/reward_mean&metrics=objective/kl' \

|

||||

"sentiment_tuning?tag=$BASELINE_PR_TAG&cl=sentiment lvwerra/gpt2-imdb ($BASELINE_PR_NAME)" \

|

||||

"sentiment_tuning_peft?tag=$BASELINE_PR_TAG&cl=sentiment lvwerra/gpt2-imdb w/ peft ($BASELINE_PR_NAME)" \

|

||||

--env-ids sentiment-analysis:lvwerra/distilbert-imdb \

|

||||

--no-check-empty-runs \

|

||||

--pc.ncols 2 \

|

||||

--pc.ncols-legend 1 \

|

||||

--output-filename benchmark/trl/$BASELINE_PR_TAG/peft \

|

||||

--scan-history

|

||||

|

||||

|

||||

python benchmark/upload_benchmark.py \

|

||||

--folder_path="benchmark/trl/$BASELINE_PR_TAG" \

|

||||

--path_in_repo="images/benchmark/$BASELINE_PR_TAG" \

|

||||

--repo_id="trl-internal-testing/example-images" \

|

||||

--repo_type="dataset"

|

||||

26

benchmark/post_github_comment.py

Normal file

@ -0,0 +1,26 @@

|

||||

import json

|

||||

import os

|

||||

|

||||

from ghapi.all import GhApi

|

||||

|

||||

|

||||

FOLDER_STRING = os.environ.get("FOLDER_STRING", "")

|

||||

folder = f"benchmark/trl/{FOLDER_STRING}"

|

||||

host_url = f"https://huggingface.co/datasets/trl-internal-testing/example-images/resolve/main/images/benchmark/{FOLDER_STRING}"

|

||||

|

||||

# Create a GitHub API instance

|

||||

github_context = json.loads(os.environ["GITHUB_CONTEXT"])

|

||||

token = os.environ["PERSONAL_ACCESS_TOKEN_GITHUB"]  # this needs to be refreshed every 12 months

|

||||

status_message = "**[COSTA BENCHMARK BOT]**: Here are the results"

|

||||

body = status_message

|

||||

repo = github_context["repository"]

|

||||

owner, repo = repo.split("/")

|

||||

api = GhApi(owner=owner, repo=repo, token=token)

|

||||

|

||||

# for each `.png` file in the folder, add it to the comment

|

||||

for file in os.listdir(folder):

|

||||

if file.endswith(".png"):

|

||||

body += f"\n![{file}]({host_url}/{file})"

|

||||

|

||||

# Create a comment on the issue

|

||||

api.issues.create_comment(issue_number=github_context["event"]["issue"]["number"], body=body)

|

||||

9

benchmark/post_github_comment.sbatch

Normal file

@ -0,0 +1,9 @@

|

||||

#!/bin/bash

|

||||

#SBATCH --job-name=trl

|

||||

#SBATCH --partition=production-cluster

|

||||

#SBATCH --ntasks=1

|

||||

#SBATCH --output=slurm/logs/%x_%j.out

|

||||

|

||||

sleep 2m

|

||||

bash $BENCHMARK_PLOT_SCRIPT

|

||||

srun python benchmark/post_github_comment.py

|

||||

16

benchmark/trl.slurm_template

Normal file

@ -0,0 +1,16 @@

|

||||

#!/bin/bash

|

||||

#SBATCH --job-name=trl

|

||||

#SBATCH --partition=production-cluster

|

||||

#SBATCH --gpus-per-task={{gpus_per_task}}

|

||||

#SBATCH --cpus-per-gpu={{cpus_per_gpu}}

|

||||

#SBATCH --ntasks={{ntasks}}

|

||||

#SBATCH --output=slurm/logs/%x_%j.out

|

||||

#SBATCH --array={{array}}

|

||||

#SBATCH --exclude=ip-26-0-156-239,ip-26-0-148-151,ip-26-0-146-212,ip-26-0-145-137,ip-26-0-146-249,ip-26-0-146-149,ip-26-0-147-233,ip-26-0-145-154,ip-26-0-144-35,ip-26-0-144-189,ip-26-0-146-183,ip-26-0-147-120,ip-26-0-144-95,ip-26-0-145-193

|

||||

{{nodes}}

|

||||

|

||||

seeds={{seeds}}

|

||||

seed=${seeds[$SLURM_ARRAY_TASK_ID % {{len_seeds}}]}

|

||||

|

||||

echo "Running task $SLURM_ARRAY_TASK_ID with seed: $seed"

|

||||

srun {{command}} --ppo_config.seed $seed
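To make the placeholder substitution in this template concrete, the following Python sketch reproduces how `{{array}}` and `{{seeds}}` are rendered and how each array task picks its seed. The helper names are hypothetical; the formulas follow the substitution code in `benchmark.py` and the modulo expression in the template, and the example values match `benchmark_level1.sh` (3 seeds, start seed 1, 10 workers).

```python
def render_array_spec(num_commands, workers):
    """Value for `#SBATCH --array`: task indices 0..N-1, at most `workers` running at once."""
    return f"0-{num_commands - 1}%{workers}"


def render_seeds(start_seed, num_seeds):
    """Bash array literal substituted for `{{seeds}}`."""
    return "(" + " ".join(str(start_seed + i) for i in range(num_seeds)) + ")"


def seed_for_task(task_id, start_seed, num_seeds):
    """Mirror of `${seeds[$SLURM_ARRAY_TASK_ID % len_seeds]}` in the template."""
    seeds = [start_seed + i for i in range(num_seeds)]
    return seeds[task_id % num_seeds]


array_spec = render_array_spec(num_commands=3, workers=10)
seeds_literal = render_seeds(start_seed=1, num_seeds=3)
task_seeds = [seed_for_task(task_id, start_seed=1, num_seeds=3) for task_id in range(3)]
print(array_spec, seeds_literal, task_seeds)
```

Each array task therefore runs the same command with a different seed, and the `%` suffix in the array spec caps how many tasks Slurm runs concurrently.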

|

||||

23

benchmark/upload_benchmark.py

Normal file

@ -0,0 +1,23 @@

|

||||

from dataclasses import dataclass

|

||||

|

||||

import tyro

|

||||

from huggingface_hub import HfApi

|

||||

|

||||

|

||||

@dataclass

|

||||

class Args:

|

||||

folder_path: str = "benchmark/trl"

|

||||

path_in_repo: str = "images/benchmark"

|

||||

repo_id: str = "trl-internal-testing/example-images"

|

||||

repo_type: str = "dataset"

|

||||

|

||||

|

||||

args = tyro.cli(Args)

|

||||

api = HfApi()

|

||||

|

||||

api.upload_folder(

|

||||

folder_path=args.folder_path,

|

||||

path_in_repo=args.path_in_repo,

|

||||

repo_id=args.repo_id,

|

||||

repo_type=args.repo_type,

|

||||

)

|

||||

@ -1,14 +1,18 @@

|

||||

- sections:

|

||||

- sections:

|

||||

- local: index

|

||||

title: TRL

|

||||

- local: quickstart

|

||||

title: Quickstart

|

||||

- local: installation

|

||||

title: Installation

|

||||

- local: how_to_train

|

||||

title: PPO Training FAQ

|

||||

- local: use_model

|

||||

title: Use Trained Models

|

||||

- local: customization

|

||||

title: Customize your training

|

||||

title: Customize the Training

|

||||

- local: logging

|

||||

title: Understanding logs

|

||||

title: Understanding Logs

|

||||

title: Get started

|

||||

- sections:

|

||||

- local: models

|

||||

@ -16,19 +20,35 @@

|

||||

- local: trainer

|

||||

title: Trainer Classes

|

||||

- local: reward_trainer

|

||||

title: Training your own reward model

|

||||

title: Reward Model Training

|

||||

- local: sft_trainer

|

||||

title: Supervised fine-tuning

|

||||

title: Supervised Fine-Tuning

|

||||

- local: ppo_trainer

|

||||

title: PPO Trainer

|

||||

- local: best_of_n

|

||||

title: Best of N Sampling

|

||||

- local: dpo_trainer

|

||||

title: DPO Trainer

|

||||

- local: ddpo_trainer

|

||||

title: Denoising Diffusion Policy Optimization

|

||||

- local: iterative_sft_trainer

|

||||

title: Iterative Supervised Fine-Tuning

|

||||

- local: text_environments

|

||||

title: Text Environments

|

||||

title: API

|

||||

- sections:

|

||||

- sections:

|

||||

- local: example_overview

|

||||

title: Example Overview

|

||||

- local: sentiment_tuning

|

||||

title: Sentiment Tuning

|

||||

- local: lora_tuning_peft

|

||||

title: Peft support - Low rank adaption of 8 bit models

|

||||

- local: summarization_reward_tuning

|

||||

title: Summarization Reward Tuning

|

||||

title: Training with PEFT

|

||||

- local: detoxifying_a_lm

|

||||

title: Detoxifying a Language Model

|

||||

- local: using_llama_models

|

||||

title: Using LLaMA with TRL

|

||||

title: Training StackLlama

|

||||

- local: learning_tools

|

||||

title: Learning to Use Tools

|

||||

- local: multi_adapter_rl

|

||||

title: Multi Adapter RLHF

|

||||

title: Examples

|

||||

|

||||

72

docs/source/best_of_n.mdx

Normal file

@ -0,0 +1,72 @@

|

||||

# Best of N sampling: Alternative ways to get better model output without RL based fine-tuning

|

||||

|

||||

Within the extras module is the `best-of-n` sampler class that serves as an alternative method of generating better model output.

|

||||

To see how it fares against RL-based fine-tuning, please look in the `examples` directory for a comparison example.

|

||||

|

||||

## Usage

|

||||

|

||||

To get started quickly, create an instance of the class with a model, a length sampler, a tokenizer, and a callable that serves as a proxy reward pipeline and returns reward scores for input queries:

|

||||

|

||||

```python

|

||||

|

||||

from transformers import pipeline, AutoTokenizer

|

||||

from trl import AutoModelForCausalLMWithValueHead

|

||||

from trl.core import LengthSampler

|

||||

from trl.extras import BestOfNSampler

|

||||

|

||||

ref_model = AutoModelForCausalLMWithValueHead.from_pretrained(ref_model_name)

|

||||

reward_pipe = pipeline("sentiment-analysis", model=reward_model, device=device)

|

||||

tokenizer = AutoTokenizer.from_pretrained(ref_model_name)

|

||||

tokenizer.pad_token = tokenizer.eos_token

|

||||

|

||||

|

||||

# callable that takes a list of raw text and returns a list of corresponding reward scores

|

||||

def queries_to_scores(list_of_strings):

|

||||

return [output["score"] for output in reward_pipe(list_of_strings)]

|

||||

|

||||

best_of_n = BestOfNSampler(model, tokenizer, queries_to_scores, length_sampler=output_length_sampler)

|

||||

|

||||

|

||||

```

|

||||

|

||||

Assuming you have a list/tensor of tokenized queries, you can generate better output by calling the `generate` method:

|

||||

|

||||

```python

|

||||

|

||||

best_of_n.generate(query_tensors, device=device, **gen_kwargs)

|

||||

|

||||

```

|

||||

The default sample size is 4, but you can change it at the time of instance initialization like so:

|

||||

|

||||

```python

|

||||

|

||||

best_of_n = BestOfNSampler(model, tokenizer, queries_to_scores, length_sampler=output_length_sampler, sample_size=8)

|

||||

|

||||

```

|

||||

|

||||

The default output is the top-scored output for each query, but you can change it to the top 2 and so on by passing the `n_candidates` argument at the time of instance initialization:

|

||||

|

||||

```python

|

||||

|

||||

best_of_n = BestOfNSampler(model, tokenizer, queries_to_scores, length_sampler=output_length_sampler, n_candidates=2)

|

||||

|

||||

```

|

||||

|

||||

There is the option of setting the generation settings (like `temperature`, `pad_token_id`) at the time of instance creation as opposed to when calling the `generate` method.

|

||||

This is done by passing a `GenerationConfig` from the `transformers` library at the time of initialization:

|

||||

|

||||

```python

|

||||

|

||||

from transformers import GenerationConfig

|

||||

|

||||

generation_config = GenerationConfig(min_length=-1, top_k=0.0, top_p=1.0, do_sample=True, pad_token_id=tokenizer.eos_token_id)

|

||||

|

||||

best_of_n = BestOfNSampler(model, tokenizer, queries_to_scores, length_sampler=output_length_sampler, generation_config=generation_config)

|

||||

|

||||

best_of_n.generate(query_tensors, device=device)

|

||||

|

||||

```

|

||||

|

||||

Furthermore, at the time of initialization you can set the seed to control the repeatability of the generation process, as well as the number of samples to generate for each query.
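As a rough illustration of how the sample size, the number of returned candidates, and a seed interact, here is a minimal pure-Python sketch of best-of-n selection. It does not use TRL and is not the `BestOfNSampler` implementation: `best_of_n`, `toy_generate`, and `toy_score` are hypothetical stand-ins for the sampler, the language model, and the reward pipeline.

```python
import random


def best_of_n(query, generate, score, sample_size=4, n_candidates=1, seed=None):
    """Sample `sample_size` completions for `query` and keep the `n_candidates` best."""
    rng = random.Random(seed)  # a fixed seed makes the sampling repeatable
    samples = [generate(query, rng) for _ in range(sample_size)]
    scores = score(samples)
    # Rank by score, highest first; ties keep their sampling order.
    ranked = sorted(zip(scores, samples), key=lambda pair: pair[0], reverse=True)
    return [sample for _, sample in ranked[:n_candidates]]


# Hypothetical stand-ins for a language model and a reward pipeline.
WORDS = ["good", "bad", "great", "awful", "fine"]
POSITIVE = {"good", "great", "fine"}


def toy_generate(query, rng):
    """Append three randomly chosen words to the query."""
    return query + " " + " ".join(rng.choice(WORDS) for _ in range(3))


def toy_score(texts):
    """Score each text by the number of positive words it contains."""
    return [sum(word in POSITIVE for word in text.split()) for text in texts]


first = best_of_n("The movie was", toy_generate, toy_score, sample_size=8, n_candidates=2, seed=0)
second = best_of_n("The movie was", toy_generate, toy_score, sample_size=8, n_candidates=2, seed=0)
print(first)
print(first == second)
```

Running the function twice with the same seed returns the same candidates, while a larger `sample_size` gives the scorer more completions to choose from at the cost of more generation work.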

|

||||

|

||||

|

||||

@ -1,22 +1,50 @@

|

||||

# Training customization

|

||||

|

||||

At `trl` we provide the possibility to give enough modularity to users to be able to efficiently customize the training loop for their needs. Below are some examples on how you can apply and test different techniques.

|

||||

TRL is designed with modularity in mind so that users are able to efficiently customize the training loop for their needs. Below are some examples of how you can apply and test different techniques.

|

||||

|

||||

## Run on multiple GPUs / nodes

|

||||

## Train on multiple GPUs / nodes

|

||||

|

||||

We leverage `accelerate` to enable users to run their training on multiple GPUs or nodes. You should first create your accelerate config by simply running:

|

||||

The trainers in TRL use 🤗 Accelerate to enable distributed training across multiple GPUs or nodes. To do so, first create an 🤗 Accelerate config file by running

|

||||

|

||||

```bash

|

||||

accelerate config

|

||||

```

|

||||

|

||||

Then make sure you have selected multi-gpu / multi-node setup. You can then run your training by simply running:

|

||||

and answering the questions according to your multi-gpu / multi-node setup. You can then launch distributed training by running:

|

||||

|

||||

```bash

|

||||

accelerate launch your_script.py

|

||||

```

|

||||

|

||||

Refer to the [examples page](https://github.com/lvwerra/trl/tree/main/examples) for more details

|

||||

We also provide config files in the [examples folder](https://github.com/huggingface/trl/tree/main/examples/accelerate_configs) that can be used as templates. To use these templates, simply pass the path to the config file when launching a job, e.g.:

|

||||

|

||||

```shell

|

||||

accelerate launch --config_file=examples/accelerate_configs/multi_gpu.yaml --num_processes {NUM_GPUS} path_to_script.py --all_arguments_of_the_script

|

||||

```

|

||||

|

||||

Refer to the [examples page](https://github.com/huggingface/trl/tree/main/examples) for more details.

|

||||

|

||||

### Distributed training with DeepSpeed

|

||||

|

||||

All of the trainers in TRL can be run on multiple GPUs together with DeepSpeed ZeRO-{1,2,3} for efficient sharding of the optimizer states, gradients, and model weights. To do so, run:

|

||||

|

||||

```shell

|

||||

accelerate launch --config_file=examples/accelerate_configs/deepspeed_zero{1,2,3}.yaml --num_processes {NUM_GPUS} path_to_your_script.py --all_arguments_of_the_script

|

||||

```

|

||||

|

||||

Note that for ZeRO-3, a small tweak is needed to initialize your reward model on the correct device via the `zero3_init_context_manager()` context manager. In particular, this is needed to avoid DeepSpeed hanging after a fixed number of training steps. Here is a snippet of what is involved from the [`sentiment_tuning`](https://github.com/huggingface/trl/blob/main/examples/scripts/ppo.py) example:

|

||||

|

||||

```python

|

||||

ds_plugin = ppo_trainer.accelerator.state.deepspeed_plugin

|

||||

if ds_plugin is not None and ds_plugin.is_zero3_init_enabled():

|

||||