Mirror of https://github.com/huggingface/transformers.git, synced 2025-10-26 05:34:35 +08:00.

Compare commits: ssh_new_cl ... disable_mu (2 commits)

| Author | SHA1 | Date |
|---|---|---|
| | aa60ef9910 | |
| | 21814b8355 | |

@@ -34,44 +34,64 @@ jobs:
- run: echo 'export "GIT_COMMIT_MESSAGE=$(git show -s --format=%s)"' >> "$BASH_ENV" && source "$BASH_ENV"
- run: mkdir -p test_preparation
- run: python utils/tests_fetcher.py | tee tests_fetched_summary.txt
- store_artifacts:
path: ~/transformers/tests_fetched_summary.txt
- run: |
if [ -f test_list.txt ]; then
cp test_list.txt test_preparation/test_list.txt
else
touch test_preparation/test_list.txt
fi
- run: |
if [ -f examples_test_list.txt ]; then
mv examples_test_list.txt test_preparation/examples_test_list.txt
else
touch test_preparation/examples_test_list.txt
fi
- run: |
if [ -f filtered_test_list_cross_tests.txt ]; then
mv filtered_test_list_cross_tests.txt test_preparation/filtered_test_list_cross_tests.txt
else
touch test_preparation/filtered_test_list_cross_tests.txt
fi
- run: |
if [ -f doctest_list.txt ]; then
cp doctest_list.txt test_preparation/doctest_list.txt
else
touch test_preparation/doctest_list.txt
fi
- run: |
if [ -f test_repo_utils.txt ]; then
mv test_repo_utils.txt test_preparation/test_repo_utils.txt
else
touch test_preparation/test_repo_utils.txt
fi
- run: python utils/tests_fetcher.py --filter_tests
- run: |
if [ -f test_list.txt ]; then
mv test_list.txt test_preparation/filtered_test_list.txt
else
touch test_preparation/filtered_test_list.txt
fi
- store_artifacts:
path: test_preparation/test_list.txt
- store_artifacts:
path: test_preparation/doctest_list.txt
- store_artifacts:
path: ~/transformers/test_preparation/filtered_test_list.txt
- store_artifacts:
path: test_preparation/examples_test_list.txt
- run: export "GIT_COMMIT_MESSAGE=$(git show -s --format=%s)" && echo $GIT_COMMIT_MESSAGE && python .circleci/create_circleci_config.py --fetcher_folder test_preparation
- run: |
if [ ! -s test_preparation/generated_config.yml ]; then
echo "No tests to run, exiting early!"
circleci-agent step halt
fi

if [ ! -s test_preparation/generated_config.yml ]; then
echo "No tests to run, exiting early!"
circleci-agent step halt
fi
- store_artifacts:
path: test_preparation

- run:
name: "Retrieve Artifact Paths"
env:
CIRCLE_TOKEN: ${{ secrets.CI_ARTIFACT_TOKEN }}
command: |
project_slug="gh/${CIRCLE_PROJECT_USERNAME}/${CIRCLE_PROJECT_REPONAME}"
job_number=${CIRCLE_BUILD_NUM}
url="https://circleci.com/api/v2/project/${project_slug}/${job_number}/artifacts"
curl -o test_preparation/artifacts.json ${url}
- run:
name: "Prepare pipeline parameters"
command: |
python utils/process_test_artifacts.py

# To avoid too long generated_config.yaml on the continuation orb, we pass the links to the artifacts as parameters.
# Otherwise the list of tests was just too big. Explicit is good but for that it was a limitation.
# We used:

# https://circleci.com/docs/api/v2/index.html#operation/getJobArtifacts : to get the job artifacts
# We could not pass a nested dict, which is why we create the test_file_... parameters for every single job

path: test_preparation/generated_config.yml
- store_artifacts:
path: test_preparation/transformed_artifacts.json
- store_artifacts:
path: test_preparation/artifacts.json
path: test_preparation/filtered_test_list_cross_tests.txt
- continuation/continue:
parameters: test_preparation/transformed_artifacts.json
configuration_path: test_preparation/generated_config.yml

# To run all tests for the nightly build
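
The comments above explain why the per-job test lists are passed as artifact links rather than inlined into `generated_config.yml`: the job fetches its own artifact listing from the CircleCI v2 `getJobArtifacts` endpoint, and because nested dicts cannot be passed to the continuation, each job gets its own flat parameter. The sketch below illustrates only that flattening step and is not the contents of `utils/process_test_artifacts.py`. It assumes the endpoint returns `{"items": [{"path": ..., "url": ...}, ...]}` and that each list is stored as `<job_name>_test_list.txt`, matching the `<<pipeline.parameters.{job_name}_test_list>>` references used in `to_dict`; the function name `flatten_artifact_links` is hypothetical.

```python
import os


def flatten_artifact_links(artifacts: dict) -> dict:
    """Map every `<job>_test_list.txt` artifact to a flat `<job>_test_list` parameter.

    Hypothetical helper. Assumes the CircleCI v2 job-artifacts response shape
    {"items": [{"path": ..., "url": ...}, ...]}.
    """
    params = {}
    for item in artifacts.get("items", []):
        filename = os.path.basename(item["path"])
        if filename.endswith("_test_list.txt"):
            # e.g. "tests_torch_test_list.txt" -> parameter "tests_torch_test_list"
            params[filename[: -len(".txt")]] = item["url"]
    return params
```

The resulting flat dict could then be written with `json.dump` to `test_preparation/transformed_artifacts.json`, the file that the `continuation/continue` step passes as `parameters`.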

@@ -32,7 +32,7 @@ COMMON_ENV_VARIABLES = {
"RUN_PT_FLAX_CROSS_TESTS": False,
}
# Disable the use of {"s": None} as the output is way too long, causing the navigation on CircleCI impractical
COMMON_PYTEST_OPTIONS = {"max-worker-restart": 0, "dist": "loadfile", "vvv": None, "rsf":None}
COMMON_PYTEST_OPTIONS = {"max-worker-restart": 0, "dist": "loadfile", "v": None}
DEFAULT_DOCKER_IMAGE = [{"image": "cimg/python:3.8.12"}]
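
`to_dict` merges these options as `{**COMMON_PYTEST_OPTIONS, **self.pytest_options}` and converts them to command-line flags with a single comprehension. A `None` value produces a short flag (`-v`), and any other value produces `--key=value`. The sketch below restates that comprehension as a standalone function so the new `COMMON_PYTEST_OPTIONS` expansion can be checked in isolation. The name `build_pytest_flags` is hypothetical.

```python
from typing import Any, Dict, List

COMMON_PYTEST_OPTIONS = {"max-worker-restart": 0, "dist": "loadfile", "v": None}


def build_pytest_flags(options: Dict[str, Any]) -> List[str]:
    """Restates the pytest_flags comprehension from to_dict as a function."""
    return [
        # None -> short flag "-key"; any other value -> "--key=value".
        # The key "doctest-modules" always takes the "--key=value" branch, even with None.
        f"--{key}={value}" if (value is not None or key in ["doctest-modules"]) else f"-{key}"
        for key, value in options.items()
    ]
```

The flags are later joined with spaces into a shell command. As a result, a key such as `"k onnx"` (from `onnx_job`) becomes the single string `-k onnx`, which the shell then splits into two arguments. Similarly, the doc-test job's key `"-doctest-modules"` yields `--doctest-modules`.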

@@ -50,15 +50,16 @@ class EmptyJob:
class CircleCIJob:
name: str
additional_env: Dict[str, Any] = None
cache_name: str = None
cache_version: str = "0.8.2"
docker_image: List[Dict[str, str]] = None
install_steps: List[str] = None
marker: Optional[str] = None
parallelism: Optional[int] = 0
parallelism: Optional[int] = 1
pytest_num_workers: int = 12
pytest_options: Dict[str, Any] = None
resource_class: Optional[str] = "2xlarge"
tests_to_run: Optional[List[str]] = None
num_test_files_per_worker: Optional[int] = 10
# This should be only used for doctest job!
command_timeout: Optional[int] = None

@@ -66,6 +67,8 @@ class CircleCIJob:
# Deal with defaults for mutable attributes.
if self.additional_env is None:
self.additional_env = {}
if self.cache_name is None:
self.cache_name = self.name
if self.docker_image is None:
# Let's avoid changing the default list and make a copy.
self.docker_image = copy.deepcopy(DEFAULT_DOCKER_IMAGE)
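
`__post_init__` fills in defaults for mutable fields and deep-copies `DEFAULT_DOCKER_IMAGE`. The copy matters because `__post_init__` later rewrites `self.docker_image[0]["image"]` in place to append `:dev`, under a condition not shown in this diff. Without a deep copy, that write would leak into the module-level default and every job created afterwards. The sketch below is minimal and assumes `CircleCIJob` is a dataclass, as its annotated fields and `__post_init__` suggest. `Job` and `tag_dev` are hypothetical stand-ins.

```python
import copy
from dataclasses import dataclass
from typing import Dict, List, Optional

# Stand-in for the module-level default shown in the diff.
DEFAULT_DOCKER_IMAGE = [{"image": "cimg/python:3.8.12"}]


@dataclass
class Job:  # hypothetical, trimmed-down stand-in for CircleCIJob
    name: str
    docker_image: Optional[List[Dict[str, str]]] = None

    def __post_init__(self):
        if self.docker_image is None:
            # Deep copy: a shallow list copy would still share the inner dict.
            self.docker_image = copy.deepcopy(DEFAULT_DOCKER_IMAGE)


def tag_dev(job: Job) -> None:
    # Mirrors the in-place ":dev" suffix applied to docker_image[0]["image"].
    job.docker_image[0]["image"] = f"{job.docker_image[0]['image']}:dev"
```

With a shallow `list(DEFAULT_DOCKER_IMAGE)` instead, `tag_dev` would mutate the shared inner dict and the next job would start from `...:dev`.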

@@ -76,96 +79,156 @@ class CircleCIJob:
self.docker_image[0]["image"] = f"{self.docker_image[0]['image']}:dev"
print(f"Using {self.docker_image} docker image")
if self.install_steps is None:
self.install_steps = ["uv venv && uv pip install ."]
self.install_steps = []
if self.pytest_options is None:
self.pytest_options = {}
if isinstance(self.tests_to_run, str):
self.tests_to_run = [self.tests_to_run]
else:
test_file = os.path.join("test_preparation" , f"{self.job_name}_test_list.txt")
print("Looking for ", test_file)
if os.path.exists(test_file):
with open(test_file) as f:
expanded_tests = f.read().strip().split("\n")
self.tests_to_run = expanded_tests
print("Found:", expanded_tests)
else:
self.tests_to_run = []
print("not Found")
if self.parallelism is None:
self.parallelism = 1

def to_dict(self):
env = COMMON_ENV_VARIABLES.copy()
env.update(self.additional_env)

cache_branch_prefix = os.environ.get("CIRCLE_BRANCH", "pull")
if cache_branch_prefix != "main":
cache_branch_prefix = "pull"

job = {
"docker": self.docker_image,
"environment": env,
}
if self.resource_class is not None:
job["resource_class"] = self.resource_class
if self.parallelism is not None:
job["parallelism"] = self.parallelism
steps = [
"checkout",
{"attach_workspace": {"at": "test_preparation"}},
]
steps.extend([{"run": l} for l in self.install_steps])
steps.append({"run": {"name": "Show installed libraries and their size", "command": """du -h -d 1 "$(pip -V | cut -d ' ' -f 4 | sed 's/pip//g')" | grep -vE "dist-info|_distutils_hack|__pycache__" | sort -h | tee installed.txt || true"""}})
steps.append({"run": {"name": "Show installed libraries and their versions", "command": """pip list --format=freeze | tee installed.txt || true"""}})

steps.append({"run":{"name":"Show biggest libraries","command":"""dpkg-query --show --showformat='${Installed-Size}\t${Package}\n' | sort -rh | head -25 | sort -h | awk '{ package=$2; sub(".*/", "", package); printf("%.5f GB %s\n", $1/1024/1024, package)}' || true"""}})
steps.append({"store_artifacts": {"path": "installed.txt"}})

all_options = {**COMMON_PYTEST_OPTIONS, **self.pytest_options}
pytest_flags = [f"--{key}={value}" if (value is not None or key in ["doctest-modules"]) else f"-{key}" for key, value in all_options.items()]
pytest_flags.append(
f"--make-reports={self.name}" if "examples" in self.name else f"--make-reports=tests_{self.name}"
)
# Examples special case: we need to download NLTK files in advance to avoid cuncurrency issues
timeout_cmd = f"timeout {self.command_timeout} " if self.command_timeout else ""
marker_cmd = f"-m '{self.marker}'" if self.marker is not None else ""
additional_flags = f" -p no:warning -o junit_family=xunit1 --junitxml=test-results/junit.xml"
parallel = f' << pipeline.parameters.{self.job_name}_parallelism >> '
steps = [
"checkout",
{"attach_workspace": {"at": "test_preparation"}},
{"run": "apt-get update && apt-get install -y curl"},
{"run": " && ".join(self.install_steps)},
{"run": {"name": "Download NLTK files", "command": """python -c "import nltk; nltk.download('punkt', quiet=True)" """} if "example" in self.name else "echo Skipping"},
{"run": {
"name": "Show installed libraries and their size",
"command": """du -h -d 1 "$(pip -V | cut -d ' ' -f 4 | sed 's/pip//g')" | grep -vE "dist-info|_distutils_hack|__pycache__" | sort -h | tee installed.txt || true"""}
},
{"run": {
"name": "Show installed libraries and their versions",
"command": """pip list --format=freeze | tee installed.txt || true"""}
},
{"run": {
"name": "Show biggest libraries",
"command": """dpkg-query --show --showformat='${Installed-Size}\t${Package}\n' | sort -rh | head -25 | sort -h | awk '{ package=$2; sub(".*/", "", package); printf("%.5f GB %s\n", $1/1024/1024, package)}' || true"""}
},
{"run": {"name": "Create `test-results` directory", "command": "mkdir test-results"}},
{"run": {"name": "Get files to test", "command":f'curl -L -o {self.job_name}_test_list.txt <<pipeline.parameters.{self.job_name}_test_list>>' if self.name != "pr_documentation_tests" else 'echo "Skipped"'}},
{"run": {"name": "Split tests across parallel nodes: show current parallel tests",
"command": f"TESTS=$(circleci tests split --split-by=timings {self.job_name}_test_list.txt) && echo $TESTS > splitted_tests.txt && echo $TESTS | tr ' ' '\n'" if self.parallelism else f"awk '{{printf \"%s \", $0}}' {self.job_name}_test_list.txt > splitted_tests.txt"
}
},
{"run": {
"name": "Run tests",
"command": f"({timeout_cmd} python3 -m pytest {marker_cmd} -n {self.pytest_num_workers} {additional_flags} {' '.join(pytest_flags)} $(cat splitted_tests.txt) | tee tests_output.txt)"}
},
{"run": {"name": "Expand to show skipped tests", "when": "always", "command": f"python3 .circleci/parse_test_outputs.py --file tests_output.txt --skip"}},
{"run": {"name": "Failed tests: show reasons", "when": "always", "command": f"python3 .circleci/parse_test_outputs.py --file tests_output.txt --fail"}},
{"run": {"name": "Errors", "when": "always", "command": f"python3 .circleci/parse_test_outputs.py --file tests_output.txt --errors"}},
{"store_test_results": {"path": "test-results"}},
{"store_artifacts": {"path": "test-results/junit.xml"}},
{"store_artifacts": {"path": "reports"}},
{"store_artifacts": {"path": "tests.txt"}},
{"store_artifacts": {"path": "splitted_tests.txt"}},
{"store_artifacts": {"path": "installed.txt"}},
]
if self.parallelism:
job["parallelism"] = parallel

steps.append({"run": {"name": "Create `test-results` directory", "command": "mkdir test-results"}})

# Examples special case: we need to download NLTK files in advance to avoid cuncurrency issues
if "examples" in self.name:
steps.append({"run": {"name": "Download NLTK files", "command": """python -c "import nltk; nltk.download('punkt', quiet=True)" """}})

test_command = ""
if self.command_timeout:
test_command = f"timeout {self.command_timeout} "
# junit familiy xunit1 is necessary to support splitting on test name or class name with circleci split
test_command += f"python3 -m pytest -rsfE -p no:warnings --tb=short -o junit_family=xunit1 --junitxml=test-results/junit.xml -n {self.pytest_num_workers} " + " ".join(pytest_flags)

if self.parallelism == 1:
if self.tests_to_run is None:
test_command += " << pipeline.parameters.tests_to_run >>"
else:
test_command += " " + " ".join(self.tests_to_run)
else:
# We need explicit list instead of `pipeline.parameters.tests_to_run` (only available at job runtime)
tests = self.tests_to_run
if tests is None:
folder = os.environ["test_preparation_dir"]
test_file = os.path.join(folder, "filtered_test_list.txt")
if os.path.exists(test_file): # We take this job's tests from the filtered test_list.txt
with open(test_file) as f:
tests = f.read().split(" ")

# expand the test list
if tests == ["tests"]:
tests = [os.path.join("tests", x) for x in os.listdir("tests")]
expanded_tests = []
for test in tests:
if test.endswith(".py"):
expanded_tests.append(test)
elif test == "tests/models":
if "tokenization" in self.name:
expanded_tests.extend(glob.glob("tests/models/**/test_tokenization*.py", recursive=True))
elif self.name in ["flax","torch","tf"]:
name = self.name if self.name != "torch" else ""
if self.name == "torch":
all_tests = glob.glob(f"tests/models/**/test_modeling_{name}*.py", recursive=True)
filtered = [k for k in all_tests if ("_tf_") not in k and "_flax_" not in k]
expanded_tests.extend(filtered)
else:
expanded_tests.extend(glob.glob(f"tests/models/**/test_modeling_{name}*.py", recursive=True))
else:
expanded_tests.extend(glob.glob("tests/models/**/test_modeling*.py", recursive=True))
elif test == "tests/pipelines":
expanded_tests.extend(glob.glob("tests/models/**/test_modeling*.py", recursive=True))
else:
expanded_tests.append(test)
tests = " ".join(expanded_tests)

# Each executor to run ~10 tests
n_executors = max(len(expanded_tests) // 10, 1)
# Avoid empty test list on some executor(s) or launching too many executors
if n_executors > self.parallelism:
n_executors = self.parallelism
job["parallelism"] = n_executors

# Need to be newline separated for the command `circleci tests split` below
command = f'echo {tests} | tr " " "\\n" >> tests.txt'
steps.append({"run": {"name": "Get tests", "command": command}})

command = 'TESTS=$(circleci tests split tests.txt) && echo $TESTS > splitted_tests.txt'
steps.append({"run": {"name": "Split tests", "command": command}})

steps.append({"store_artifacts": {"path": "tests.txt"}})
steps.append({"store_artifacts": {"path": "splitted_tests.txt"}})

test_command += " $(cat splitted_tests.txt)"
if self.marker is not None:
test_command += f" -m {self.marker}"

if self.name == "pr_documentation_tests":
# can't use ` | tee tee tests_output.txt` as usual
test_command += " > tests_output.txt"
# Save the return code, so we can check if it is timeout in the next step.
test_command += '; touch "$?".txt'
# Never fail the test step for the doctest job. We will check the results in the next step, and fail that
# step instead if the actual test failures are found. This is to avoid the timeout being reported as test
# failure.
test_command = f"({test_command}) || true"
else:
test_command = f"({test_command} | tee tests_output.txt)"
steps.append({"run": {"name": "Run tests", "command": test_command}})

steps.append({"run": {"name": "Skipped tests", "when": "always", "command": f"python3 .circleci/parse_test_outputs.py --file tests_output.txt --skip"}})
steps.append({"run": {"name": "Failed tests", "when": "always", "command": f"python3 .circleci/parse_test_outputs.py --file tests_output.txt --fail"}})
steps.append({"run": {"name": "Errors", "when": "always", "command": f"python3 .circleci/parse_test_outputs.py --file tests_output.txt --errors"}})

steps.append({"store_test_results": {"path": "test-results"}})
steps.append({"store_artifacts": {"path": "tests_output.txt"}})
steps.append({"store_artifacts": {"path": "test-results/junit.xml"}})
steps.append({"store_artifacts": {"path": "reports"}})

job["steps"] = steps
return job

@property
def job_name(self):
return self.name if ("examples" in self.name or "pipeline" in self.name or "pr_documentation" in self.name) else f"tests_{self.name}"
return self.name if "examples" in self.name else f"tests_{self.name}"


# JOBS
torch_and_tf_job = CircleCIJob(
"torch_and_tf",
docker_image=[{"image":"huggingface/transformers-torch-tf-light"}],
install_steps=["uv venv && uv pip install ."],
additional_env={"RUN_PT_TF_CROSS_TESTS": True},
marker="is_pt_tf_cross_test",
pytest_options={"rA": None, "durations": 0},
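
The `n_executors` block in this hunk sizes a job at roughly one CircleCI executor per 10 test files. It uses a floor of 1 and caps the count at the job's `parallelism`, so no executor receives an empty split. The arithmetic is sketched below as a standalone function. `executor_count` and its `files_per_executor` parameter are hypothetical names.

```python
def executor_count(num_test_files: int, parallelism: int, files_per_executor: int = 10) -> int:
    """About one executor per `files_per_executor` test files, at least 1, at most `parallelism`."""
    n_executors = max(num_test_files // files_per_executor, 1)
    # Cap so we never launch more executors than the job's configured parallelism.
    if n_executors > parallelism:
        n_executors = parallelism
    return n_executors
```

For example, 35 test files with `parallelism=6` give 3 executors, while 200 files are capped at 6.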

@@ -176,6 +239,7 @@ torch_and_flax_job = CircleCIJob(
"torch_and_flax",
additional_env={"RUN_PT_FLAX_CROSS_TESTS": True},
docker_image=[{"image":"huggingface/transformers-torch-jax-light"}],
install_steps=["uv venv && uv pip install ."],
marker="is_pt_flax_cross_test",
pytest_options={"rA": None, "durations": 0},
)

@@ -183,46 +247,35 @@ torch_and_flax_job = CircleCIJob(
torch_job = CircleCIJob(
"torch",
docker_image=[{"image": "huggingface/transformers-torch-light"}],
marker="not generate",
install_steps=["uv venv && uv pip install ."],
parallelism=6,
pytest_num_workers=8
)

generate_job = CircleCIJob(
"generate",
docker_image=[{"image": "huggingface/transformers-torch-light"}],
marker="generate",
parallelism=6,
pytest_num_workers=8
pytest_num_workers=4
)

tokenization_job = CircleCIJob(
"tokenization",
docker_image=[{"image": "huggingface/transformers-torch-light"}],
parallelism=8,
pytest_num_workers=16
install_steps=["uv venv && uv pip install ."],
parallelism=6,
pytest_num_workers=4
)

processor_job = CircleCIJob(
"processors",
docker_image=[{"image": "huggingface/transformers-torch-light"}],
parallelism=8,
pytest_num_workers=6
)

tf_job = CircleCIJob(
"tf",
docker_image=[{"image":"huggingface/transformers-tf-light"}],
install_steps=["uv venv", "uv pip install -e."],
parallelism=6,
pytest_num_workers=16,
pytest_num_workers=4,
)


flax_job = CircleCIJob(
"flax",
docker_image=[{"image":"huggingface/transformers-jax-light"}],
install_steps=["uv venv && uv pip install ."],
parallelism=6,
pytest_num_workers=16
pytest_num_workers=4
)

@@ -230,8 +283,8 @@ pipelines_torch_job = CircleCIJob(
"pipelines_torch",
additional_env={"RUN_PIPELINE_TESTS": True},
docker_image=[{"image":"huggingface/transformers-torch-light"}],
install_steps=["uv venv && uv pip install ."],
marker="is_pipeline_test",
parallelism=4
)

@@ -239,8 +292,8 @@ pipelines_tf_job = CircleCIJob(
"pipelines_tf",
additional_env={"RUN_PIPELINE_TESTS": True},
docker_image=[{"image":"huggingface/transformers-tf-light"}],
install_steps=["uv venv && uv pip install ."],
marker="is_pipeline_test",
parallelism=4
)

@@ -248,24 +301,34 @@ custom_tokenizers_job = CircleCIJob(
"custom_tokenizers",
additional_env={"RUN_CUSTOM_TOKENIZERS": True},
docker_image=[{"image": "huggingface/transformers-custom-tokenizers"}],
install_steps=["uv venv","uv pip install -e ."],
parallelism=None,
resource_class=None,
tests_to_run=[
"./tests/models/bert_japanese/test_tokenization_bert_japanese.py",
"./tests/models/openai/test_tokenization_openai.py",
"./tests/models/clip/test_tokenization_clip.py",
],
)


examples_torch_job = CircleCIJob(
"examples_torch",
additional_env={"OMP_NUM_THREADS": 8},
cache_name="torch_examples",
docker_image=[{"image":"huggingface/transformers-examples-torch"}],
# TODO @ArthurZucker remove this once docker is easier to build
install_steps=["uv venv && uv pip install . && uv pip install -r examples/pytorch/_tests_requirements.txt"],
pytest_num_workers=8,
pytest_num_workers=1,
)


examples_tensorflow_job = CircleCIJob(
"examples_tensorflow",
additional_env={"OMP_NUM_THREADS": 8},
cache_name="tensorflow_examples",
docker_image=[{"image":"huggingface/transformers-examples-tf"}],
pytest_num_workers=16,
install_steps=["uv venv && uv pip install . && uv pip install -r examples/tensorflow/_tests_requirements.txt"],
parallelism=8
)

@@ -274,12 +337,12 @@ hub_job = CircleCIJob(
additional_env={"HUGGINGFACE_CO_STAGING": True},
docker_image=[{"image":"huggingface/transformers-torch-light"}],
install_steps=[
'uv venv && uv pip install .',
"uv venv && uv pip install .",
'git config --global user.email "ci@dummy.com"',
'git config --global user.name "ci"',
],
marker="is_staging_test",
pytest_num_workers=2,
pytest_num_workers=1,
)

@@ -287,7 +350,8 @@ onnx_job = CircleCIJob(
"onnx",
docker_image=[{"image":"huggingface/transformers-torch-tf-light"}],
install_steps=[
"uv venv",
"uv venv && uv pip install .",
"uv pip install --upgrade eager pip",
"uv pip install .[torch,tf,testing,sentencepiece,onnxruntime,vision,rjieba]",
],
pytest_options={"k onnx": None},

@@ -297,7 +361,15 @@ onnx_job = CircleCIJob(

exotic_models_job = CircleCIJob(
"exotic_models",
install_steps=["uv venv && uv pip install ."],
docker_image=[{"image":"huggingface/transformers-exotic-models"}],
tests_to_run=[
"tests/models/*layoutlmv*",
"tests/models/*nat",
"tests/models/deta",
"tests/models/udop",
"tests/models/nougat",
],
pytest_num_workers=12,
parallelism=4,
pytest_options={"durations": 100},

@@ -307,8 +379,11 @@ exotic_models_job = CircleCIJob(
repo_utils_job = CircleCIJob(
"repo_utils",
docker_image=[{"image":"huggingface/transformers-consistency"}],
pytest_num_workers=4,
install_steps=["uv venv && uv pip install ."],
parallelism=None,
pytest_num_workers=1,
resource_class="large",
tests_to_run="tests/repo_utils",
)
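
`repo_utils_job` passes `tests_to_run` as a plain string. In `__post_init__`, a string is wrapped in a one-element list. Otherwise the job looks for `test_preparation/<job_name>_test_list.txt`, reads one test path per line, and falls back to an empty list. The sketch below restates that branch as a free function so it can be exercised in isolation. `resolve_tests_to_run` and its `folder` parameter are hypothetical.

```python
import os
from typing import List, Optional, Union


def resolve_tests_to_run(
    tests_to_run: Optional[Union[str, List[str]]],
    job_name: str,
    folder: str = "test_preparation",
) -> List[str]:
    """Hypothetical free-function version of the tests_to_run branch in __post_init__."""
    if isinstance(tests_to_run, str):
        # A single path such as "tests/repo_utils" becomes a one-element list.
        return [tests_to_run]
    # Otherwise the per-job list written during test preparation is used, if present.
    test_file = os.path.join(folder, f"{job_name}_test_list.txt")
    if os.path.exists(test_file):
        with open(test_file) as f:
            # One test path per line.
            return f.read().strip().split("\n")
    return []
```

As the diff reads, a list passed in (for example, `custom_tokenizers_job`'s) also goes through the file lookup instead of being kept as-is. Also, `"".strip().split("\n")` is `[""]`, so an empty list file yields one empty entry rather than none.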

@@ -317,18 +392,28 @@ repo_utils_job = CircleCIJob(
# the bash output redirection.)
py_command = 'from utils.tests_fetcher import get_doctest_files; to_test = get_doctest_files() + ["dummy.py"]; to_test = " ".join(to_test); print(to_test)'
py_command = f"$(python3 -c '{py_command}')"
command = f'echo """{py_command}""" > pr_documentation_tests_temp.txt'
command = f'echo "{py_command}" > pr_documentation_tests_temp.txt'
doc_test_job = CircleCIJob(
"pr_documentation_tests",
docker_image=[{"image":"huggingface/transformers-consistency"}],
additional_env={"TRANSFORMERS_VERBOSITY": "error", "DATASETS_VERBOSITY": "error", "SKIP_CUDA_DOCTEST": "1"},
install_steps=[
# Add an empty file to keep the test step running correctly even no file is selected to be tested.
"uv venv && pip install .",
"touch dummy.py",
command,
"cat pr_documentation_tests_temp.txt",
"tail -n1 pr_documentation_tests_temp.txt | tee pr_documentation_tests_test_list.txt"
{
"name": "Get files to test",
"command": command,
},
{
"name": "Show information in `Get files to test`",
"command":
"cat pr_documentation_tests_temp.txt"
},
{
"name": "Get the last line in `pr_documentation_tests.txt`",
"command":
"tail -n1 pr_documentation_tests_temp.txt | tee pr_documentation_tests.txt"
},
],
tests_to_run="$(cat pr_documentation_tests.txt)", # noqa
pytest_options={"-doctest-modules": None, "doctest-glob": "*.md", "dist": "loadfile", "rvsA": None},

@@ -336,37 +421,121 @@ doc_test_job = CircleCIJob(
pytest_num_workers=1,
)

REGULAR_TESTS = [torch_and_tf_job, torch_and_flax_job, torch_job, tf_job, flax_job, hub_job, onnx_job, tokenization_job, processor_job, generate_job] # fmt: skip
EXAMPLES_TESTS = [examples_torch_job, examples_tensorflow_job]
PIPELINE_TESTS = [pipelines_torch_job, pipelines_tf_job]
REGULAR_TESTS = [
torch_and_tf_job,
torch_and_flax_job,
torch_job,
tf_job,
flax_job,
custom_tokenizers_job,
hub_job,
onnx_job,
exotic_models_job,
tokenization_job
]
EXAMPLES_TESTS = [
examples_torch_job,
examples_tensorflow_job,
]
PIPELINE_TESTS = [
pipelines_torch_job,
pipelines_tf_job,
]
REPO_UTIL_TESTS = [repo_utils_job]
DOC_TESTS = [doc_test_job]
ALL_TESTS = REGULAR_TESTS + EXAMPLES_TESTS + PIPELINE_TESTS + REPO_UTIL_TESTS + DOC_TESTS + [custom_tokenizers_job] + [exotic_models_job] # fmt: skip


def create_circleci_config(folder=None):
if folder is None:
folder = os.getcwd()
# Used in CircleCIJob.to_dict() to expand the test list (for using parallelism)
os.environ["test_preparation_dir"] = folder
jobs = [k for k in ALL_TESTS if os.path.isfile(os.path.join("test_preparation" , f"{k.job_name}_test_list.txt") )]
print("The following jobs will be run ", jobs)
jobs = []
all_test_file = os.path.join(folder, "test_list.txt")
if os.path.exists(all_test_file):
with open(all_test_file) as f:
all_test_list = f.read()
else:
all_test_list = []
if len(all_test_list) > 0:
jobs.extend(PIPELINE_TESTS)

test_file = os.path.join(folder, "filtered_test_list.txt")
if os.path.exists(test_file):
with open(test_file) as f:
test_list = f.read()
else:
test_list = []
if len(test_list) > 0:
jobs.extend(REGULAR_TESTS)

extended_tests_to_run = set(test_list.split())
# Extend the test files for cross test jobs
for job in jobs:
if job.job_name in ["tests_torch_and_tf", "tests_torch_and_flax"]:
for test_path in copy.copy(extended_tests_to_run):
dir_path, fn = os.path.split(test_path)
if fn.startswith("test_modeling_tf_"):
fn = fn.replace("test_modeling_tf_", "test_modeling_")
elif fn.startswith("test_modeling_flax_"):
fn = fn.replace("test_modeling_flax_", "test_modeling_")
else:
if job.job_name == "test_torch_and_tf":
fn = fn.replace("test_modeling_", "test_modeling_tf_")
elif job.job_name == "test_torch_and_flax":
fn = fn.replace("test_modeling_", "test_modeling_flax_")
new_test_file = str(os.path.join(dir_path, fn))
if os.path.isfile(new_test_file):
if new_test_file not in extended_tests_to_run:
extended_tests_to_run.add(new_test_file)
extended_tests_to_run = sorted(extended_tests_to_run)
for job in jobs:
if job.job_name in ["tests_torch_and_tf", "tests_torch_and_flax"]:
job.tests_to_run = extended_tests_to_run
fn = "filtered_test_list_cross_tests.txt"
f_path = os.path.join(folder, fn)
with open(f_path, "w") as fp:
fp.write(" ".join(extended_tests_to_run))

example_file = os.path.join(folder, "examples_test_list.txt")
if os.path.exists(example_file) and os.path.getsize(example_file) > 0:
with open(example_file, "r", encoding="utf-8") as f:
example_tests = f.read()
for job in EXAMPLES_TESTS:
framework = job.name.replace("examples_", "").replace("torch", "pytorch")
if example_tests == "all":
job.tests_to_run = [f"examples/{framework}"]
else:
job.tests_to_run = [f for f in example_tests.split(" ") if f.startswith(f"examples/{framework}")]

if len(job.tests_to_run) > 0:
jobs.append(job)

doctest_file = os.path.join(folder, "doctest_list.txt")
if os.path.exists(doctest_file):
with open(doctest_file) as f:
doctest_list = f.read()
else:
doctest_list = []
if len(doctest_list) > 0:
jobs.extend(DOC_TESTS)

repo_util_file = os.path.join(folder, "test_repo_utils.txt")
if os.path.exists(repo_util_file) and os.path.getsize(repo_util_file) > 0:
jobs.extend(REPO_UTIL_TESTS)

if len(jobs) == 0:
jobs = [EmptyJob()]
print("Full list of job name inputs", {j.job_name + "_test_list":{"type":"string", "default":''} for j in jobs})
config = {
"version": "2.1",
"parameters": {
# Only used to accept the parameters from the trigger
"nightly": {"type": "boolean", "default": False},
"tests_to_run": {"type": "string", "default": ''},
**{j.job_name + "_test_list":{"type":"string", "default":''} for j in jobs},
**{j.job_name + "_parallelism":{"type":"integer", "default":1} for j in jobs},
},
"jobs" : {j.job_name: j.to_dict() for j in jobs},
"workflows": {"version": 2, "run_tests": {"jobs": [j.job_name for j in jobs]}}
config = {"version": "2.1"}
config["parameters"] = {
# Only used to accept the parameters from the trigger
"nightly": {"type": "boolean", "default": False},
"tests_to_run": {"type": "string", "default": test_list},
}
config["jobs"] = {j.job_name: j.to_dict() for j in jobs}
config["workflows"] = {"version": 2, "run_tests": {"jobs": [j.job_name for j in jobs]}}
with open(os.path.join(folder, "generated_config.yml"), "w") as f:
f.write(yaml.dump(config, sort_keys=False, default_flow_style=False).replace("' << pipeline", " << pipeline").replace(">> '", " >>"))
f.write(yaml.dump(config, indent=2, width=1000000, sort_keys=False))


if __name__ == "__main__":

@ -67,4 +67,4 @@ def main():

|

||||

|

||||

|

||||

if __name__ == "__main__":

|

||||

main()

|

||||

main()
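The cross-framework test expansion and the example-test filtering in this hunk can be sketched as two standalone Python helpers. This is a simplified illustration, not the repository's code:

- `cross_framework_test` and `select_example_tests` are hypothetical names.
- The file-existence check is dropped.
- The input is assumed to be a PyTorch modeling test.

The sketch uses the `tests_` job-name prefix from the later membership check. Note that the `if`/`elif` branches in the hunk compare against `test_torch_and_tf` and `test_torch_and_flax`, without the `s`. If the jobs really are named `tests_torch_and_tf` and `tests_torch_and_flax`, those branches would never match.

```python
from __future__ import annotations

import os


def cross_framework_test(test_path: str, job_name: str) -> str:
    """Map a PyTorch modeling test to its TF or Flax counterpart (hypothetical helper).

    Assumes `test_path` is a PyTorch test such as `test_modeling_bert.py`.
    Unlike the CI script, it does not check whether the resulting file exists.
    """
    dir_path, fn = os.path.split(test_path)
    if job_name == "tests_torch_and_tf":
        fn = fn.replace("test_modeling_", "test_modeling_tf_")
    elif job_name == "tests_torch_and_flax":
        fn = fn.replace("test_modeling_", "test_modeling_flax_")
    return os.path.join(dir_path, fn)


def select_example_tests(example_tests: str, job_name: str) -> list[str]:
    """Keep only the example tests for one framework (hypothetical helper).

    `example_tests` is either the literal string "all" or a space-separated
    list of paths, as read from `examples_test_list.txt`.
    """
    # "examples_torch" -> "pytorch", "examples_tensorflow" -> "tensorflow"
    framework = job_name.replace("examples_", "").replace("torch", "pytorch")
    if example_tests == "all":
        return [f"examples/{framework}"]
    return [f for f in example_tests.split(" ") if f.startswith(f"examples/{framework}")]


print(cross_framework_test("tests/models/bert/test_modeling_bert.py", "tests_torch_and_tf"))
print(select_example_tests("examples/pytorch/test_a.py examples/tensorflow/test_b.py", "examples_torch"))
```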


37

.github/workflows/self-push-amd.yml

vendored


@ -64,24 +64,23 @@ jobs:
outputs:
matrix: ${{ steps.set-matrix.outputs.matrix }}
test_map: ${{ steps.set-matrix.outputs.test_map }}
env:
# `CI_BRANCH_PUSH`: The branch name from the push event
# `CI_BRANCH_WORKFLOW_RUN`: The name of the branch on which this workflow is triggered by `workflow_run` event
# `CI_SHA_PUSH`: The commit SHA from the push event
# `CI_SHA_WORKFLOW_RUN`: The commit SHA that triggers this workflow by `workflow_run` event
CI_BRANCH_PUSH: ${{ github.event.ref }}
CI_BRANCH_WORKFLOW_RUN: ${{ github.event.workflow_run.head_branch }}
CI_SHA_PUSH: ${{ github.event.head_commit.id }}
CI_SHA_WORKFLOW_RUN: ${{ github.event.workflow_run.head_sha }}
steps:
# Necessary to get the correct branch name and commit SHA for `workflow_run` event
# We also take into account the `push` event (we might want to test some changes in a branch)
- name: Prepare custom environment variables
shell: bash
# `CI_BRANCH_PUSH`: The branch name from the push event
# `CI_BRANCH_WORKFLOW_RUN`: The name of the branch on which this workflow is triggered by `workflow_run` event
# `CI_BRANCH`: The non-empty branch name from the above two (one and only one of them is empty)
# `CI_SHA_PUSH`: The commit SHA from the push event
# `CI_SHA_WORKFLOW_RUN`: The commit SHA that triggers this workflow by `workflow_run` event
# `CI_SHA`: The non-empty commit SHA from the above two (one and only one of them is empty)
run: |
CI_BRANCH_PUSH=${{ github.event.ref }}
CI_BRANCH_PUSH=${CI_BRANCH_PUSH/'refs/heads/'/''}
CI_BRANCH_WORKFLOW_RUN=${{ github.event.workflow_run.head_branch }}
CI_SHA_PUSH=${{ github.event.head_commit.id }}
CI_SHA_WORKFLOW_RUN=${{ github.event.workflow_run.head_sha }}
echo $CI_BRANCH_PUSH
echo $CI_BRANCH_WORKFLOW_RUN
echo $CI_SHA_PUSH
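The `Prepare custom environment variables` step gathers a branch name and a commit SHA from two event sources. According to its comments, exactly one value of each pair is non-empty. A minimal Python sketch of that selection logic follows, under these assumptions:

- `resolve_ci_ref` is a hypothetical name.
- The event values are passed in as plain strings rather than read from `${{ }}` expressions.
- The "exactly one non-empty" rule is taken from the comments and enforced here as an extra check.

Bash's `${VAR/pattern/replacement}` replaces the first match anywhere in the string. The sketch mirrors this with `str.replace(..., 1)`.

```python
from __future__ import annotations


def resolve_ci_ref(branch_push: str, branch_workflow_run: str,
                   sha_push: str, sha_workflow_run: str) -> tuple[str, str]:
    """Return (CI_BRANCH, CI_SHA) as described in the workflow comments (hypothetical helper)."""
    # `github.event.ref` looks like "refs/heads/main". Bash `${VAR/'refs/heads/'/''}`
    # deletes the first occurrence of that substring, so do the same here.
    branch_push = branch_push.replace("refs/heads/", "", 1)

    # The comments say exactly one value in each pair is non-empty; check that assumption.
    if bool(branch_push) == bool(branch_workflow_run):
        raise ValueError("expected exactly one non-empty branch name")
    if bool(sha_push) == bool(sha_workflow_run):
        raise ValueError("expected exactly one non-empty commit SHA")

    return branch_push or branch_workflow_run, sha_push or sha_workflow_run


# A `push` event fills the *_PUSH values; a `workflow_run` event fills the *_WORKFLOW_RUN ones.
print(resolve_ci_ref("refs/heads/main", "", "abc123", ""))
print(resolve_ci_ref("", "feature-x", "", "def456"))
```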

@ -160,12 +159,6 @@ jobs:
container:
image: huggingface/transformers-pytorch-amd-gpu-push-ci # <--- We test only for PyTorch for now
options: --device /dev/kfd --device /dev/dri --env ROCR_VISIBLE_DEVICES --shm-size "16gb" --ipc host -v /mnt/cache/.cache/huggingface:/mnt/cache/
env:
# For the meaning of these environment variables, see the job `Setup`
CI_BRANCH_PUSH: ${{ github.event.ref }}
CI_BRANCH_WORKFLOW_RUN: ${{ github.event.workflow_run.head_branch }}
CI_SHA_PUSH: ${{ github.event.head_commit.id }}
CI_SHA_WORKFLOW_RUN: ${{ github.event.workflow_run.head_sha }}
steps:
# Necessary to get the correct branch name and commit SHA for `workflow_run` event
# We also take into account the `push` event (we might want to test some changes in a branch)
@ -173,7 +166,11 @@ jobs:
shell: bash
# For the meaning of these environment variables, see the job `Setup`
run: |
CI_BRANCH_PUSH=${{ github.event.ref }}
CI_BRANCH_PUSH=${CI_BRANCH_PUSH/'refs/heads/'/''}
CI_BRANCH_WORKFLOW_RUN=${{ github.event.workflow_run.head_branch }}
CI_SHA_PUSH=${{ github.event.head_commit.id }}
CI_SHA_WORKFLOW_RUN=${{ github.event.workflow_run.head_sha }}
echo $CI_BRANCH_PUSH
echo $CI_BRANCH_WORKFLOW_RUN
echo $CI_SHA_PUSH
@ -259,12 +256,6 @@ jobs:
# run_tests_torch_cuda_extensions_single_gpu,
# run_tests_torch_cuda_extensions_multi_gpu
]
env:
# For the meaning of these environment variables, see the job `Setup`
CI_BRANCH_PUSH: ${{ github.event.ref }}
CI_BRANCH_WORKFLOW_RUN: ${{ github.event.workflow_run.head_branch }}
CI_SHA_PUSH: ${{ github.event.head_commit.id }}
CI_SHA_WORKFLOW_RUN: ${{ github.event.workflow_run.head_sha }}
steps:
- name: Preliminary job status
shell: bash
@ -280,7 +271,11 @@ jobs:
shell: bash
# For the meaning of these environment variables, see the job `Setup`
run: |
CI_BRANCH_PUSH=${{ github.event.ref }}
CI_BRANCH_PUSH=${CI_BRANCH_PUSH/'refs/heads/'/''}
CI_BRANCH_WORKFLOW_RUN=${{ github.event.workflow_run.head_branch }}
CI_SHA_PUSH=${{ github.event.head_commit.id }}
CI_SHA_WORKFLOW_RUN=${{ github.event.workflow_run.head_sha }}
echo $CI_BRANCH_PUSH
echo $CI_BRANCH_WORKFLOW_RUN
echo $CI_SHA_PUSH

67

.github/workflows/self-push.yml

vendored

@ -40,24 +40,23 @@ jobs:
outputs:
matrix: ${{ steps.set-matrix.outputs.matrix }}
test_map: ${{ steps.set-matrix.outputs.test_map }}
env:
# `CI_BRANCH_PUSH`: The branch name from the push event
# `CI_BRANCH_WORKFLOW_RUN`: The name of the branch on which this workflow is triggered by `workflow_run` event
# `CI_SHA_PUSH`: The commit SHA from the push event
# `CI_SHA_WORKFLOW_RUN`: The commit SHA that triggers this workflow by `workflow_run` event
CI_BRANCH_PUSH: ${{ github.event.ref }}
CI_BRANCH_WORKFLOW_RUN: ${{ github.event.workflow_run.head_branch }}
CI_SHA_PUSH: ${{ github.event.head_commit.id }}
CI_SHA_WORKFLOW_RUN: ${{ github.event.workflow_run.head_sha }}
steps:
# Necessary to get the correct branch name and commit SHA for `workflow_run` event
# We also take into account the `push` event (we might want to test some changes in a branch)
- name: Prepare custom environment variables
shell: bash
# `CI_BRANCH_PUSH`: The branch name from the push event
# `CI_BRANCH_WORKFLOW_RUN`: The name of the branch on which this workflow is triggered by `workflow_run` event
# `CI_BRANCH`: The non-empty branch name from the above two (one and only one of them is empty)
# `CI_SHA_PUSH`: The commit SHA from the push event
# `CI_SHA_WORKFLOW_RUN`: The commit SHA that triggers this workflow by `workflow_run` event
# `CI_SHA`: The non-empty commit SHA from the above two (one and only one of them is empty)
run: |
CI_BRANCH_PUSH=${{ github.event.ref }}
CI_BRANCH_PUSH=${CI_BRANCH_PUSH/'refs/heads/'/''}
CI_BRANCH_WORKFLOW_RUN=${{ github.event.workflow_run.head_branch }}
CI_SHA_PUSH=${{ github.event.head_commit.id }}
CI_SHA_WORKFLOW_RUN=${{ github.event.workflow_run.head_sha }}
echo $CI_BRANCH_PUSH
echo $CI_BRANCH_WORKFLOW_RUN
echo $CI_SHA_PUSH
@ -136,12 +135,6 @@ jobs:
container:
image: huggingface/transformers-all-latest-gpu-push-ci
options: --gpus 0 --shm-size "16gb" --ipc host -v /mnt/cache/.cache/huggingface:/mnt/cache/
env:
# For the meaning of these environment variables, see the job `Setup`
CI_BRANCH_PUSH: ${{ github.event.ref }}
CI_BRANCH_WORKFLOW_RUN: ${{ github.event.workflow_run.head_branch }}
CI_SHA_PUSH: ${{ github.event.head_commit.id }}
CI_SHA_WORKFLOW_RUN: ${{ github.event.workflow_run.head_sha }}
steps:
# Necessary to get the correct branch name and commit SHA for `workflow_run` event
# We also take into account the `push` event (we might want to test some changes in a branch)
@ -149,7 +142,11 @@ jobs:
shell: bash
# For the meaning of these environment variables, see the job `Setup`
run: |
CI_BRANCH_PUSH=${{ github.event.ref }}
CI_BRANCH_PUSH=${CI_BRANCH_PUSH/'refs/heads/'/''}
CI_BRANCH_WORKFLOW_RUN=${{ github.event.workflow_run.head_branch }}
CI_SHA_PUSH=${{ github.event.head_commit.id }}
CI_SHA_WORKFLOW_RUN=${{ github.event.workflow_run.head_sha }}
echo $CI_BRANCH_PUSH
echo $CI_BRANCH_WORKFLOW_RUN
echo $CI_SHA_PUSH
@ -231,12 +228,6 @@ jobs:
container:
image: huggingface/transformers-all-latest-gpu-push-ci
options: --gpus all --shm-size "16gb" --ipc host -v /mnt/cache/.cache/huggingface:/mnt/cache/
env:
# For the meaning of these environment variables, see the job `Setup`
CI_BRANCH_PUSH: ${{ github.event.ref }}
CI_BRANCH_WORKFLOW_RUN: ${{ github.event.workflow_run.head_branch }}
CI_SHA_PUSH: ${{ github.event.head_commit.id }}
CI_SHA_WORKFLOW_RUN: ${{ github.event.workflow_run.head_sha }}
steps:
# Necessary to get the correct branch name and commit SHA for `workflow_run` event
# We also take into account the `push` event (we might want to test some changes in a branch)
@ -244,7 +235,11 @@ jobs:
shell: bash
# For the meaning of these environment variables, see the job `Setup`
run: |
CI_BRANCH_PUSH=${{ github.event.ref }}
CI_BRANCH_PUSH=${CI_BRANCH_PUSH/'refs/heads/'/''}
CI_BRANCH_WORKFLOW_RUN=${{ github.event.workflow_run.head_branch }}
CI_SHA_PUSH=${{ github.event.head_commit.id }}
CI_SHA_WORKFLOW_RUN=${{ github.event.workflow_run.head_sha }}
echo $CI_BRANCH_PUSH
echo $CI_BRANCH_WORKFLOW_RUN
echo $CI_SHA_PUSH
@ -326,12 +321,6 @@ jobs:
container:
image: huggingface/transformers-pytorch-deepspeed-latest-gpu-push-ci
options: --gpus 0 --shm-size "16gb" --ipc host -v /mnt/cache/.cache/huggingface:/mnt/cache/
env:
# For the meaning of these environment variables, see the job `Setup`
CI_BRANCH_PUSH: ${{ github.event.ref }}
CI_BRANCH_WORKFLOW_RUN: ${{ github.event.workflow_run.head_branch }}
CI_SHA_PUSH: ${{ github.event.head_commit.id }}
CI_SHA_WORKFLOW_RUN: ${{ github.event.workflow_run.head_sha }}
steps:
# Necessary to get the correct branch name and commit SHA for `workflow_run` event
# We also take into account the `push` event (we might want to test some changes in a branch)
@ -339,7 +328,11 @@ jobs:
shell: bash
# For the meaning of these environment variables, see the job `Setup`
run: |
CI_BRANCH_PUSH=${{ github.event.ref }}
CI_BRANCH_PUSH=${CI_BRANCH_PUSH/'refs/heads/'/''}
CI_BRANCH_WORKFLOW_RUN=${{ github.event.workflow_run.head_branch }}
CI_SHA_PUSH=${{ github.event.head_commit.id }}
CI_SHA_WORKFLOW_RUN=${{ github.event.workflow_run.head_sha }}
echo $CI_BRANCH_PUSH
echo $CI_BRANCH_WORKFLOW_RUN
echo $CI_SHA_PUSH
@ -418,12 +411,6 @@ jobs:
container:
image: huggingface/transformers-pytorch-deepspeed-latest-gpu-push-ci
options: --gpus all --shm-size "16gb" --ipc host -v /mnt/cache/.cache/huggingface:/mnt/cache/
env:
# For the meaning of these environment variables, see the job `Setup`
CI_BRANCH_PUSH: ${{ github.event.ref }}
CI_BRANCH_WORKFLOW_RUN: ${{ github.event.workflow_run.head_branch }}
CI_SHA_PUSH: ${{ github.event.head_commit.id }}
CI_SHA_WORKFLOW_RUN: ${{ github.event.workflow_run.head_sha }}
steps:
# Necessary to get the correct branch name and commit SHA for `workflow_run` event
# We also take into account the `push` event (we might want to test some changes in a branch)
@ -431,7 +418,11 @@ jobs:
shell: bash
# For the meaning of these environment variables, see the job `Setup`
run: |
CI_BRANCH_PUSH=${{ github.event.ref }}
CI_BRANCH_PUSH=${CI_BRANCH_PUSH/'refs/heads/'/''}
CI_BRANCH_WORKFLOW_RUN=${{ github.event.workflow_run.head_branch }}
CI_SHA_PUSH=${{ github.event.head_commit.id }}
CI_SHA_WORKFLOW_RUN=${{ github.event.workflow_run.head_sha }}
echo $CI_BRANCH_PUSH
echo $CI_BRANCH_WORKFLOW_RUN
echo $CI_SHA_PUSH
@ -509,12 +500,6 @@ jobs:
run_tests_torch_cuda_extensions_single_gpu,
run_tests_torch_cuda_extensions_multi_gpu
]
env:
# For the meaning of these environment variables, see the job `Setup`
CI_BRANCH_PUSH: ${{ github.event.ref }}
CI_BRANCH_WORKFLOW_RUN: ${{ github.event.workflow_run.head_branch }}
CI_SHA_PUSH: ${{ github.event.head_commit.id }}
CI_SHA_WORKFLOW_RUN: ${{ github.event.workflow_run.head_sha }}
steps:
- name: Preliminary job status
shell: bash
@ -528,7 +513,11 @@ jobs:
shell: bash
# For the meaning of these environment variables, see the job `Setup`
run: |
CI_BRANCH_PUSH=${{ github.event.ref }}
CI_BRANCH_PUSH=${CI_BRANCH_PUSH/'refs/heads/'/''}
CI_BRANCH_WORKFLOW_RUN=${{ github.event.workflow_run.head_branch }}
CI_SHA_PUSH=${{ github.event.head_commit.id }}
CI_SHA_WORKFLOW_RUN=${{ github.event.workflow_run.head_sha }}
echo $CI_BRANCH_PUSH
echo $CI_BRANCH_WORKFLOW_RUN
echo $CI_SHA_PUSH

2

.github/workflows/self-scheduled.yml

vendored

@ -102,7 +102,7 @@ jobs:
strategy:
fail-fast: false
matrix:
machine_type: [single-gpu, multi-gpu]
machine_type: [single-gpu]
slice_id: ${{ fromJSON(needs.setup.outputs.slice_ids) }}
uses: ./.github/workflows/model_jobs.yml
with:
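GitHub Actions runs one job per combination of the `strategy.matrix` values: a Cartesian product, before any `include`/`exclude` rules are applied. The Python sketch below shows how the two `machine_type` variants in this hunk change the job count. Assumptions:

- `expand_matrix` is a hypothetical helper.
- It ignores `include`/`exclude`.
- The slice IDs are made-up stand-ins for `needs.setup.outputs.slice_ids`, which is only known at run time.

```python
from __future__ import annotations

from itertools import product


def expand_matrix(matrix: dict[str, list]) -> list[dict]:
    """Return one dict per combination of matrix values (no include/exclude handling)."""
    keys = list(matrix)
    return [dict(zip(keys, values)) for values in product(*(matrix[k] for k in keys))]


# Hypothetical slice IDs; the real list comes from the `setup` job's output.
slice_ids = [0, 1, 2]

both = expand_matrix({"machine_type": ["single-gpu", "multi-gpu"], "slice_id": slice_ids})
single_only = expand_matrix({"machine_type": ["single-gpu"], "slice_id": slice_ids})

print(len(both), len(single_only))
print(single_only[0])
```

With `multi-gpu` removed, every slice runs only once, on the single-GPU runner.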

20

.github/workflows/ssh-runner.yml

vendored

@ -1,9 +1,17 @@
name: SSH into our runners

on:
push:
branches:
- ssh_new_cluster
workflow_dispatch:
inputs:
runner_type:
description: 'Type of runner to test (a10 or t4)'
required: true
docker_image:
description: 'Name of the Docker image'
required: true
num_gpus:
description: 'Number of GPUs to use (`single` or `multi`)'
required: true

env:
HF_HUB_READ_TOKEN: ${{ secrets.HF_HUB_READ_TOKEN }}
@ -20,10 +28,9 @@ env:
jobs:
ssh_runner:
name: "SSH"
runs-on:
group: aws-g4dn-2xlarge-cache-test
runs-on: ["${{ github.event.inputs.num_gpus }}-gpu", nvidia-gpu, "${{ github.event.inputs.runner_type }}", ci]
container:
image: huggingface/transformers-all-latest-gpu
image: ${{ github.event.inputs.docker_image }}
options: --gpus all --privileged --ipc host -v /mnt/cache/.cache/huggingface:/mnt/cache/

steps:
@ -54,4 +61,3 @@ jobs:
slackChannel: ${{ secrets.SLACK_CIFEEDBACK_CHANNEL }}
slackToken: ${{ secrets.SLACK_CIFEEDBACK_BOT_TOKEN }}
waitForSSH: true
sshTimeout: 30m
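In the input-driven version of `runs-on`, the runner's label set is built from the `workflow_dispatch` inputs. When `runs-on` is a list of labels, a runner must carry every label to pick up the job. A minimal Python sketch of that label construction and matching follows. Assumptions:

- `runner_labels` and `can_run_on` are hypothetical helpers.
- Rejecting values outside `single`/`multi` and `a10`/`t4` is based only on the input descriptions; the workflow itself does not validate them.

```python
from __future__ import annotations

# Allowed values taken from the input descriptions; the workflow itself does not enforce them.
ALLOWED_NUM_GPUS = {"single", "multi"}
ALLOWED_RUNNER_TYPES = {"a10", "t4"}


def runner_labels(num_gpus: str, runner_type: str) -> list[str]:
    """Build the `runs-on` label list from the dispatch inputs (hypothetical helper)."""
    if num_gpus not in ALLOWED_NUM_GPUS:
        raise ValueError(f"num_gpus must be one of {sorted(ALLOWED_NUM_GPUS)}, got {num_gpus!r}")
    if runner_type not in ALLOWED_RUNNER_TYPES:
        raise ValueError(f"runner_type must be one of {sorted(ALLOWED_RUNNER_TYPES)}, got {runner_type!r}")
    # Same order as the workflow: "<num_gpus>-gpu", nvidia-gpu, <runner_type>, ci
    return [f"{num_gpus}-gpu", "nvidia-gpu", runner_type, "ci"]


def can_run_on(runner: set[str], labels: list[str]) -> bool:
    """A runner is eligible only if it carries every requested label."""
    return set(labels) <= runner


labels = runner_labels("single", "t4")
print(labels)
print(can_run_on({"single-gpu", "nvidia-gpu", "t4", "ci", "linux"}, labels))
```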

@ -36,4 +36,5 @@ Please inspect the code of the tools before passing them to the Agent to protect

## Reporting a Vulnerability

Feel free to submit vulnerability reports to [security@huggingface.co](mailto:security@huggingface.co), where someone from the HF security team will review and recommend next steps. If reporting a vulnerability specific to open source, please note [Huntr](https://huntr.com) is a vulnerability disclosure program for open source software.
🤗 Please feel free to submit vulnerability reports to our private bug bounty program at https://hackerone.com/hugging_face. You'll need to request access to the program by emailing security@huggingface.co.
Note that you'll need to be invited to our program, so send us a quick email at security@huggingface.co if you've found a vulnerability.

@ -24,9 +24,7 @@
- local: model_sharing
title: Share your model
- local: agents
title: Agents 101
- local: agents_advanced
title: Agents, supercharged - Multi-agents, External tools, and more
title: Agents
- local: llm_tutorial
title: Generation with LLMs
- local: conversations
@ -96,8 +94,6 @@
title: Text to speech
- local: tasks/image_text_to_text
title: Image-text-to-text
- local: tasks/video_text_to_text
title: Video-text-to-text
title: Multimodal
- isExpanded: false
sections:
@ -492,8 +488,6 @@
title: Nyströmformer
- local: model_doc/olmo
title: OLMo
- local: model_doc/olmoe
title: OLMoE
- local: model_doc/open-llama
title: Open-Llama
- local: model_doc/opt
@ -836,8 +830,6 @@
title: LLaVA-NeXT
- local: model_doc/llava_next_video
title: LLaVa-NeXT-Video
- local: model_doc/llava_onevision
title: LLaVA-Onevision
- local: model_doc/lxmert
title: LXMERT
- local: model_doc/matcha

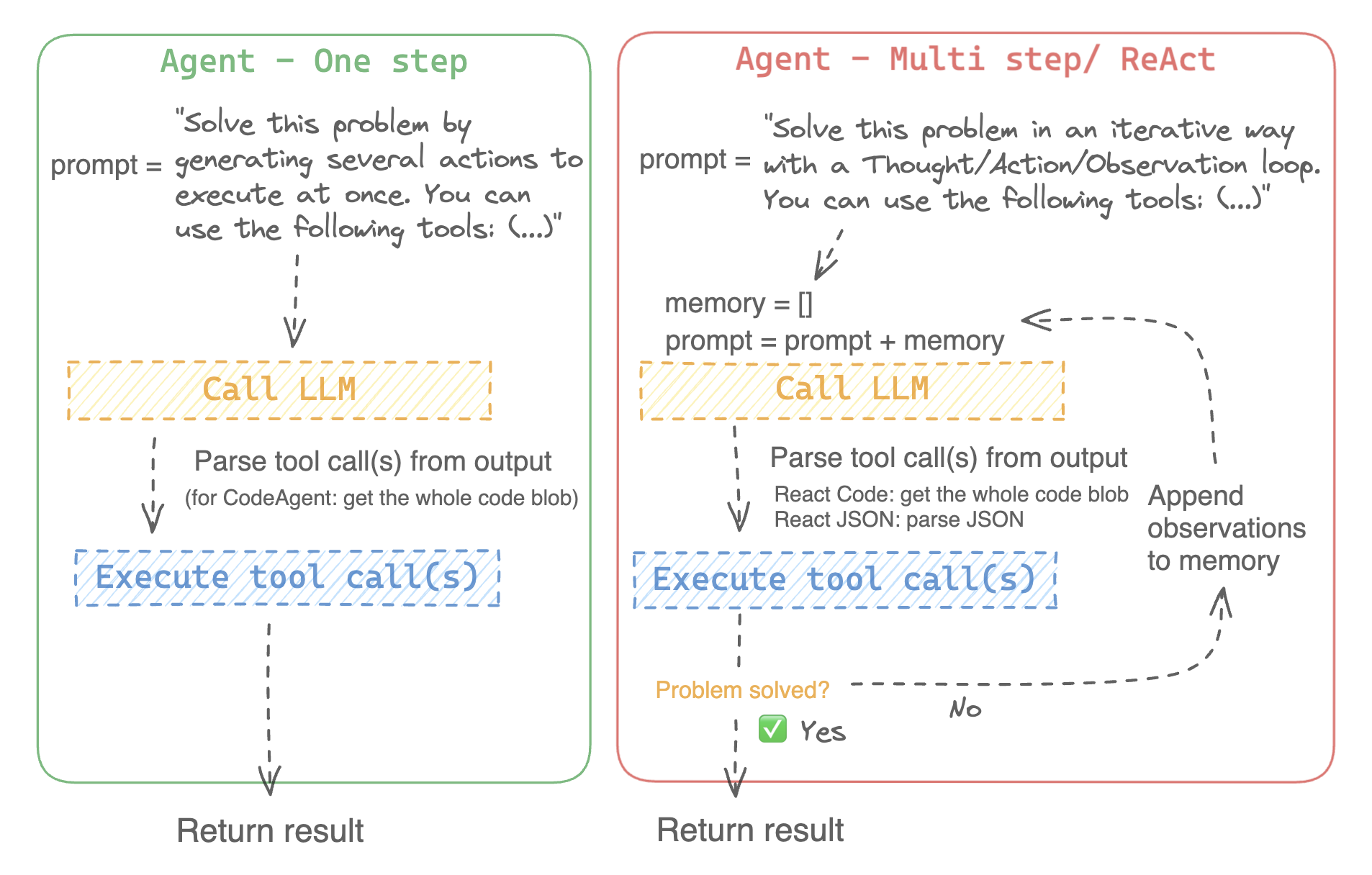

@ -28,8 +28,8 @@ An agent is a system that uses an LLM as its engine, and it has access to functi
These *tools* are functions for performing a task, and they contain all the descriptions the agent needs to use them properly.

The agent can be programmed to:
- devise a series of actions/tools and run them all at once, like the [`CodeAgent`]
- plan and execute actions/tools one by one and wait for the outcome of each action before launching the next one, like the [`ReactJsonAgent`]
- devise a series of actions/tools and run them all at once like the [`CodeAgent`] for example
- plan and execute actions/tools one by one and wait for the outcome of each action before launching the next one like the [`ReactJsonAgent`] for example
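To make the difference between the two modes concrete, here is a toy sketch in plain Python. It does not use the `transformers` agent classes. The following are all hypothetical:

- the two tools in `TOOLS`;
- the `plan_all_at_once` and `react_loop` functions;
- the fixed `choose_next` policy that stands in for the LLM.

The first function executes a complete plan in one go. The second picks each action only after seeing the previous observation.

```python
from __future__ import annotations

from typing import Callable, Optional

# Hypothetical toy tools; real agent tools wrap models or APIs.
TOOLS: dict[str, Callable[[str], str]] = {
    "search": lambda q: f"result for {q!r}",
    "summarize": lambda text: text.upper(),
}


def plan_all_at_once(plan: list[tuple[str, str]]) -> list[str]:
    """Run every (tool, argument) step of a fixed plan in one go (CodeAgent-style)."""
    return [TOOLS[tool](arg) for tool, arg in plan]


def react_loop(task: str,
               choose_next: Callable[[str, list[str]], Optional[tuple[str, str]]],
               max_steps: int = 5) -> list[str]:
    """Pick one action at a time, observing each result before choosing the next (ReAct-style)."""
    observations: list[str] = []
    for _ in range(max_steps):
        action = choose_next(task, observations)  # stands in for an LLM call
        if action is None:  # the policy decides it is done
            break
        tool, arg = action
        observations.append(TOOLS[tool](arg))
    return observations


def choose_next(task: str, observations: list[str]) -> Optional[tuple[str, str]]:
    """Fixed toy policy: search first, then summarize the last observation, then stop."""
    if not observations:
        return ("search", task)
    if len(observations) == 1:
        return ("summarize", observations[-1])
    return None


print(plan_all_at_once([("search", "agents"), ("summarize", "bread")]))
print(react_loop("agents", choose_next))
```

In the ReAct-style loop, the second action's argument is the first action's output, which a plan fixed up front cannot express.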

### Types of agents

@ -46,18 +46,7 @@ We implement two versions of ReactJsonAgent:
- [`ReactCodeAgent`] is a new type of ReactJsonAgent that generates its tool calls as blobs of code, which works really well for LLMs that have strong coding performance.

> [!TIP]
> Read the [Open-source LLMs as LangChain Agents](https://huggingface.co/blog/open-source-llms-as-agents) blog post to learn more about ReAct agents.

<div class="flex justify-center">
<img
class="block dark:hidden"
src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/Agent_ManimCE.gif"
/>
<img
class="hidden dark:block"
src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/Agent_ManimCE.gif"
/>
</div>
> Read the [Open-source LLMs as LangChain Agents](https://huggingface.co/blog/open-source-llms-as-agents) blog post to learn more about the ReAct agent.

@ -137,13 +126,12 @@ Additionally, `llm_engine` can also take a `grammar` argument. In the case where

You will also need a `tools` argument, which accepts a list of `Tool` objects (it can be an empty list). You can also add the default toolbox on top of your `tools` list by setting the optional argument `add_base_tools=True`.

Now you can create an agent, like [`CodeAgent`], and run it. You can also create a [`TransformersEngine`] with a pre-initialized pipeline to run inference on your local machine using `transformers`.
For convenience, since agentic behaviours generally require stronger models such as `Llama-3.1-70B-Instruct` that are harder to run locally for now, we also provide the [`HfApiEngine`] class that initializes a `huggingface_hub.InferenceClient` under the hood.
Now you can create an agent, like [`CodeAgent`], and run it. For convenience, we also provide the [`HfEngine`] class that uses `huggingface_hub.InferenceClient` under the hood.

```python
from transformers import CodeAgent, HfApiEngine
from transformers import CodeAgent, HfEngine

llm_engine = HfApiEngine(model="meta-llama/Meta-Llama-3-70B-Instruct")
llm_engine = HfEngine(model="meta-llama/Meta-Llama-3-70B-Instruct")
agent = CodeAgent(tools=[], llm_engine=llm_engine, add_base_tools=True)

agent.run(
@ -153,7 +141,7 @@ agent.run(
```

This will be handy in case of emergency baguette need!
You can even leave the argument `llm_engine` undefined, and an [`HfApiEngine`] will be created by default.
You can even leave the argument `llm_engine` undefined, and an [`HfEngine`] will be created by default.

```python
from transformers import CodeAgent
@ -294,8 +282,7 @@ Transformers comes with a default toolbox for empowering agents, that you can ad
- **Speech to text**: given an audio recording of a person talking, transcribe the speech into text ([Whisper](./model_doc/whisper))
- **Text to speech**: convert text to speech ([SpeechT5](./model_doc/speecht5))
- **Translation**: translates a given sentence from source language to target language.
- **DuckDuckGo search**: performs a web search using DuckDuckGo.
- **Python code interpreter**: runs the LLM-generated Python code in a secure environment. This tool will only be added to [`ReactJsonAgent`] if you initialize it with `add_base_tools=True`, since code-based agents can already natively execute Python code.
- **Python code interpreter**: runs the LLM-generated Python code in a secure environment. This tool will only be added to [`ReactJsonAgent`] if you use `add_base_tools=True`, since code-based tools can already execute Python code.

You can use a tool manually by loading it with the [`load_tool`] function and then calling it on a task to perform.

@ -455,3 +442,123 @@ To speed up the start, tools are loaded only if called by the agent.
This gets you this image:

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/rivers_and_lakes.png">

### Use gradio-tools

[gradio-tools](https://github.com/freddyaboulton/gradio-tools) is a powerful library that lets you use Hugging Face Spaces as tools. It supports many existing Spaces as well as custom Spaces.

Transformers supports `gradio_tools` with the [`Tool.from_gradio`] method. For example, let's use the [`StableDiffusionPromptGeneratorTool`](https://github.com/freddyaboulton/gradio-tools/blob/main/gradio_tools/tools/prompt_generator.py) from the `gradio-tools` toolkit to improve prompts and generate better images.

Import and instantiate the tool, then pass it to the `Tool.from_gradio` method:

```python
from gradio_tools import StableDiffusionPromptGeneratorTool
from transformers import Tool, load_tool, CodeAgent

gradio_prompt_generator_tool = StableDiffusionPromptGeneratorTool()
prompt_generator_tool = Tool.from_gradio(gradio_prompt_generator_tool)
```

Now you can use it just like any other tool. For example, let's improve the prompt `a rabbit wearing a space suit`.

```python
image_generation_tool = load_tool('huggingface-tools/text-to-image')
agent = CodeAgent(tools=[prompt_generator_tool, image_generation_tool], llm_engine=llm_engine)

agent.run(
"Improve this prompt, then generate an image of it.", prompt='A rabbit wearing a space suit'
)
```

The model adequately leverages the tool:
```text
======== New task ========
Improve this prompt, then generate an image of it.
You have been provided with these initial arguments: {'prompt': 'A rabbit wearing a space suit'}.
==== Agent is executing the code below:
improved_prompt = StableDiffusionPromptGenerator(query=prompt)
while improved_prompt == "QUEUE_FULL":
improved_prompt = StableDiffusionPromptGenerator(query=prompt)
print(f"The improved prompt is {improved_prompt}.")
image = image_generator(prompt=improved_prompt)
====
```

Before finally generating the image:

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/rabbit.png">

> [!WARNING]
> gradio-tools requires *textual* inputs and outputs, even when working with different modalities like image and audio objects. Image and audio inputs and outputs are currently incompatible.

### Use LangChain tools

We love LangChain and think it has a very compelling suite of tools.
To import a tool from LangChain, use the `from_langchain()` method.

Here is how you can use it to recreate the intro's search result using a LangChain web search tool.

```python
from langchain.agents import load_tools
from transformers import Tool, ReactCodeAgent

search_tool = Tool.from_langchain(load_tools(["serpapi"])[0])

agent = ReactCodeAgent(tools=[search_tool])

agent.run("How many more blocks (also denoted as layers) in BERT base encoder than the encoder from the architecture proposed in Attention is All You Need?")
```

## Gradio interface