* 1,100%!

* Clean

* Don't touch DS

* Experiment with dtype allocation

* skip test_load_save_without_tied_weights test

* A little faster

* Include proper upscaling?

* Fixup tests

* Potentially skip?

* Let's see if this fixes git history

* Maintain new dtype

* Fin

* Rm hook idea for now

* New approach, see what breaks

* stage

* Clean

* Stash

* Should be fin now, just need to mark failing models

* Clean up

* Simplify

* Deal with weird models

* Enc/Dec

* Skip w/ reason

* Adjust test

* Fix test

* one more test

* Keep experimenting

* Fix ref

* TO REMOVE: testing feedback CI

* Right push

* Update tests/utils/test_modeling_utils.py

Co-authored-by: Arthur <48595927+ArthurZucker@users.noreply.github.com>

* disable

* Add new func

* Test nits from Amy

* Update src/transformers/modeling_utils.py

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* Adjust comment

* Adjust comment on skip

* make private

* Fin

* Should be a not flag

* Clarify and rename test

---------

Co-authored-by: Marc Sun <marc@huggingface.co>

Co-authored-by: Arthur <48595927+ArthurZucker@users.noreply.github.com>

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* tmp commit

* shorter

* nit

* explicit kwargs

* propagate changes

* mass propagation with a few manual touches (let's see how CI behaves)

* fix cacheless case

* Update src/transformers/generation/utils.py

Co-authored-by: Arthur <48595927+ArthurZucker@users.noreply.github.com>

* make fixup

---------

Co-authored-by: Arthur <48595927+ArthurZucker@users.noreply.github.com>

* Change `Trainer.get_optimizer_cls_and_kwargs` to `self.`

* Make `get_optimizer_cls_and_kwargs` an instance method

* Fixing typo

* Revert `get_optimizer_cls_and_kwargs` to staticmethod

* restore newline to trainer.py eof

* Add warning message for and parameters

* Fix when the warning is raised

* Formatting changes

* Improve testing and remove duplicated warning from _fix_key

* add gather_use_object arguments

* fix name and pass the CI test for Seq2SeqTrainer

* make style

* make it to functools

* fix typo

* add accelerate version:

* adding warning

* Update src/transformers/trainer.py

Co-authored-by: Marc Sun <57196510+SunMarc@users.noreply.github.com>

* make style

* Update src/transformers/training_args.py

* check function move to initial part

* add test for eval_use_gather_object

* fix minor

---------

Co-authored-by: Marc Sun <57196510+SunMarc@users.noreply.github.com>

* fix galore lr display with lr schedulers

* style

* add some tests to check for displayed lrs

* copy-paste err for warmup steps

* standardize the default lr to be only in the optimizer

* trying out my luck with the reads

* cast image features to model.dtype where needed to support FP16 or other precision in pipelines

* Update src/transformers/pipelines/image_feature_extraction.py

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* Use .to instead

* Add FP16 pipeline support for zeroshot audio classification

* Remove unused torch imports

* Add docs on FP16 pipeline

* Remove unused import

* Add FP16 tests to pipeline mixin

* Add fp16 placeholder for mask_generation pipeline test

* Add FP16 tests for all pipelines

* Fix formatting

* Remove torch_dtype arg from is_pipeline_test_to_skip*

* Fix format

* trigger ci

---------

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* Repeating an important warning in the chat template docs

* Update docs/source/en/chat_templating.md

Co-authored-by: Lysandre Debut <hi@lysand.re>

* Reword for clarity

* Reword for clarity

---------

Co-authored-by: Lysandre Debut <hi@lysand.re>

* Add siglip loss function

* Update docs

* Enable training tests

[experimental] enable GC training tests as it has worked for my own data

* Remove test_training* overrides to enable training tests

[run_slow] siglip

* Skip training tests for Siglip text model and ImageClassificationModel

[run_slow] siglip

* Skip GC training tests for SiglipForImageClassification

* Explicitly skip training tests for SiglipVisionModel

Add skip reason for training tests for SiglipTextModel

* Remove copied from to fix CI

* Update CometCallback to allow reusing of the running experiment

* Fixups

* Remove useless TODO

* Add checks for minimum version of the Comet SDK

* Fix documentation and links.

Also simplify how the Comet Experiment name is passed

* Add torch_empty_cache_steps to TrainingArguments

* Fix formatting

* Add torch_empty_cache_steps to docs on single gpu training

* Remove check for torch_empty_cache_steps <= max_steps

* Captalize Tip

* Be device agnostic

* Fix linting

* Fix init for rt-detr heads

* Fixup

* Add separate prior_prob value to config for initialization

* Add bbox init

* Change to 1 / num_labels init

* Adjust weights init test

* Fix style for test

* [fix BUG] pad labels before use it in preprocess_logits_for_metrics

* a more readable fix

labels can't use `gather` before pass to `preprocess_logits_for_metrics`, so must split into 2 if-block

* add a comment

* oh code quality check

* remove incorrect urls pointing to the llava repository

* remove incorrect urls pointing to the llava repository; removing entire comments

* remove incorrect urls pointing to the llava repository; removing entire comments; ran fix-copies

* ran fixup

* add gather_use_object arguments

* fix name and pass the CI test for Seq2SeqTrainer

* make style

* make it to functools

* fix typo

* add accelerate version:

* adding warning

* Update src/transformers/trainer.py

Co-authored-by: Marc Sun <57196510+SunMarc@users.noreply.github.com>

* make style

* Update src/transformers/training_args.py

* check function move to initial part

* add test for eval_use_gather_object

---------

Co-authored-by: Marc Sun <57196510+SunMarc@users.noreply.github.com>

* squash into single commit

* run diff once more

* docstring

* tests

* minor chnages and ready to go

* Update src/transformers/models/llava_next_video/processing_llava_next_video.py

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* Update tests/models/vipllava/test_modeling_vipllava.py

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* [run-slow] llava-next-video

* [run-slow] llava-next-video

* [run-slow] llava_next_video

* fix two tests

* fix slow tests

* remove logit checks due to numeric errors

* run test once more

* [run-slow] llava_next_video

* final try to pass the test

* [run-slow] llava_next_video

* [run-slow] llava_next_video

* [run-slow] llava_next_video

* style

* fix

* style

---------

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

Co-authored-by: ydshieh <ydshieh@users.noreply.github.com>

* fix llama fsdp

* fixup

* adding FSDP tests for CPU offloading

* fixes

* fix tests

* fix tests

* add it for mixtral

* propagate the changes on other models

* Update src/transformers/models/phi/modeling_phi.py

* Delete utils/testing_scripts/fsdp_cpu_offloading.py

Remove script - FSDP + CPU offloading it tested in the test suite

* Delete utils/testing_scripts/dummy_fsdp_config.yml

* Update + add cache_positions docstring

---------

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* starting support for sdpa in `gptneox` models

* small comment on tests

* fix dropout

* documentation and style

* clarify concrete paths for reference

* generalise attn projections and rope application

added head mask check to sdpa mask creation

handle sdpa memory backend bug via own version flag

* update docs and style

* move dtype casting outside of general attn_projection_and_rope function

fix flash_attn_2 stuff

* more generic attn warning if output_attns or head_mask

* simplify head mask check by moving head mask creation to a later point

* remove copied llama artifact

* remove padding_mask from attention function signature

* removing unnecessary comments, only "save" attn implementation once

* [run_slow] gpt_neox

* Add initial implementation of `spectrogram_batch`

* Format the initial implementation

* Add test suite for the `spectrogram_batch`

* Update `spectrogram_batch` to ensure compatibility with test suite

* Update `spectrogram_batch` to include pre and post-processing

* Add `amplitude_to_db_batch` function and associated tests

* Add `power_to_db_batch` function and associated tests

* Reimplement the test suite for `spectrogram_batch`

* Fix errors in `spectrogram_batch`

* Add the function annotation for `spectrogram_batch`

* Address code quality

* Re-add `test_chroma_equivalence` function

* Update src/transformers/audio_utils.py

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* Update src/transformers/audio_utils.py

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

---------

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* PR SPLIT: moving origina changes for adding user defined symbols

* adding gemma test and generalizing gemma converter

* ruff

* update common test

* update serialization test

* deberta v2 tests updates as rust version adds '.' as a user added token, so a space is not added

* removing commented lines

* applying feedback - user only added_tokens to add and check piece.type instead of trainer_spec for user_defined_symbols

* add comment referencing sentencepiece

* Consider inheritance in type checking for tensors

Add an additional check to bypass type assertion when both tensors are

torch.Tensor instances.

* Fix the quality issue

* Update chat template docs

* Minor bug in the version check

* Update docs/source/en/chat_templating.md

Co-authored-by: Joshua Lochner <admin@xenova.com>

* Update docs/source/en/chat_templating.md

Co-authored-by: Joshua Lochner <admin@xenova.com>

* Update docs/source/en/chat_templating.md

Co-authored-by: Joshua Lochner <admin@xenova.com>

* Replace backticks with bolding because the doc builder was trying to parse them

* Replace backticks with bolding because the doc builder was trying to parse them

* Replace backticks with bolding because the doc builder was trying to parse them

* More cleanups to avoid upsetting the doc builder

* Add one more tip at the end

---------

Co-authored-by: Joshua Lochner <admin@xenova.com>

* Fix single letter stop strings

* Change the 0 to a 1 to avoid potential empty vector headaches later

* Restructure for clarity

* Update tests/generation/test_stopping_criteria.py

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* Add the unsqueeze

---------

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* Improve Python interpreter

* Add with and assert statements

* Prevent overwriting existing tools

* Check interpreter errors are well logged in code agent

* Add lazy evaluation for and and or

* Improve variable assignment

* Fix early return statements in functions

* Add small import fix on interpreter tool

* Pass datasets trust_remote_code

* Pass trust_remote_code in more tests

* Add trust_remote_dataset_code arg to some tests

* Revert "Temporarily pin datasets upper version to fix CI"

This reverts commit b7672826cad31e30319487af876e608d8af7d37b.

* Pass trust_remote_code in librispeech_asr_dummy docstrings

* Revert "Pin datasets<2.20.0 for examples"

This reverts commit 833fc17a3e3f0dcb40cff2ffd86c00ad9ecadab9.

* Pass trust_remote_code to all examples

* Revert "Add trust_remote_dataset_code arg to some tests" to research_projects

* Pass trust_remote_code to tests

* Pass trust_remote_code to docstrings

* Fix flax examples tests requirements

* Pass trust_remote_dataset_code arg to tests

* Replace trust_remote_dataset_code with trust_remote_code in one example

* Fix duplicate trust_remote_code

* Replace args.trust_remote_dataset_code with args.trust_remote_code

* Replace trust_remote_dataset_code with trust_remote_code in parser

* Replace trust_remote_dataset_code with trust_remote_code in dataclasses

* Replace trust_remote_dataset_code with trust_remote_code arg

* xpu: support xpu backend from stock pytorch (>=2.4)

Fixes: https://github.com/huggingface/transformers/issues/31237

XPU backend is available in the stock PyTorch starting from

version 2.4, see [1]. This commit extends huggingface transformers

to support XPU from both IPEX and the stock pytorch. IPEX is being

tried first.

See: https://github.com/pytorch/pytorch/issues/114842

Requires: https://github.com/huggingface/accelerate/pull/2825

Signed-off-by: Dmitry Rogozhkin <dmitry.v.rogozhkin@intel.com>

* xpu: enable gpt2 and decision_transformer tests for xpu pytorch backend

Note that running xpu tests requires TRANSFORMERS_TEST_DEVICE_SPEC=spec.py

passed to the test runner:

import torch

DEVICE_NAME = 'xpu'

MANUAL_SEED_FN = torch.xpu.manual_seed

EMPTY_CACHE_FN = torch.xpu.empty_cache

DEVICE_COUNT_FN = torch.xpu.device_count

Signed-off-by: Dmitry Rogozhkin <dmitry.v.rogozhkin@intel.com>

---------

Signed-off-by: Dmitry Rogozhkin <dmitry.v.rogozhkin@intel.com>

* Let's try moving chat templates out of IDEFICS and into the generic ProcessorMixin

* Chat templates should not be mandatory

* Chat templates should not be mandatory

* Not all classes will have default chat templates

* stash commit

* Add chat template docstring

* Clean up docstring

* Add chat templates to LLaVA/LLaVA-next

* Docstring fixup

* Quick IDEFICS2 fixup

* Remove some old references to the Conversation class

* make fixup

* Change JSON serialization to custom json.dumps to prevent escaping of "<", ">", "&", "'"

* caller has control over the order, remove sort_key=True

* Move tojson into a proper function and expose a couple of other args

---------

Co-authored-by: jun.4 <jun.4@kakaobrain.com>

Co-authored-by: Matt <rocketknight1@gmail.com>

* Draft fast image processors

* Draft working fast version

* py3.8 compatible cache

* Enable loading fast image processors through auto

* Tidy up; rescale behaviour based on input type

* Enable tests for fast image processors

* Smarter rescaling

* Don't default to Fast

* Safer imports

* Add necessary Pillow requirement

* Woops

* Add AutoImageProcessor test

* Fix up

* Fix test for imagegpt

* Fix test

* Review comments

* Add warning for TF and JAX input types

* Rearrange

* Return transforms

* NumpyToTensor transformation

* Rebase - include changes from upstream in ImageProcessingMixin

* Safe typing

* Fix up

* convert mean/std to tesnor to rescale

* Don't store transforms in state

* Fix up

* Update src/transformers/image_processing_utils_fast.py

Co-authored-by: Arthur <48595927+ArthurZucker@users.noreply.github.com>

* Update src/transformers/models/auto/image_processing_auto.py

Co-authored-by: Arthur <48595927+ArthurZucker@users.noreply.github.com>

* Update src/transformers/models/auto/image_processing_auto.py

Co-authored-by: Arthur <48595927+ArthurZucker@users.noreply.github.com>

* Update src/transformers/models/auto/image_processing_auto.py

Co-authored-by: Arthur <48595927+ArthurZucker@users.noreply.github.com>

* Warn if fast image processor available

* Update src/transformers/models/vit/image_processing_vit_fast.py

* Transpose incoming numpy images to be in CHW format

* Update mapping names based on packages, auto set fast to None

* Fix up

* Fix

* Add AutoImageProcessor.from_pretrained(checkpoint, use_fast=True) test

* Update src/transformers/models/vit/image_processing_vit_fast.py

Co-authored-by: Pavel Iakubovskii <qubvel@gmail.com>

* Add equivalence and speed tests

* Fix up

---------

Co-authored-by: Arthur <48595927+ArthurZucker@users.noreply.github.com>

Co-authored-by: Pavel Iakubovskii <qubvel@gmail.com>

* First draft, still missing automatic function conversion

* First draft of the automatic schema generator

* Lots of small fixes

* the walrus has betrayed me

* please stop committing your debug breakpoints

* Lots of cleanup and edge cases, looking better now

* Comments and bugfixes for the type hint parser

* More cleanup

* Add tests, update schema generator

* Update tests, proper handling of return values

* Small docstring change

* More doc updates

* More doc updates

* Add json_schema decorator

* Clean up the TODOs and finish the docs

* self.maxDiff = None to see the whole diff for the nested list test

* add import for add_json_schema

* Quick test fix

* Fix something that was bugging me in the chat template docstring

* Less "anyOf" when unnecessary

* Support return types for the templates that need them

* Proper return type tests

* Switch to Google format docstrings

* Update chat templating docs to match new format

* Stop putting the return type in with the other parameters

* Add Tuple support

* No more decorator - we just do it implicitly!

* Add enum support to get_json_schema

* Update docstring

* Add copyright header

* Update src/transformers/tokenization_utils_base.py

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* Update docs/source/en/chat_templating.md

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* Update src/transformers/utils/chat_template_utils.py

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* Update src/transformers/utils/chat_template_utils.py

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* Add copyright header

* make fixup

* Fix indentation

* Reformat chat_template_utils

* Correct return value

* Make regexes module-level

* Support more complex, multi-line arg docstrings

* Update error message for ...

* Update ruff

* Add document type validation

* Refactor docs

* Refactor docs

* Refactor docs

* Clean up Tuple error

* Add an extra test for very complex defs and docstrings and clean everything up for it

* Document enum block

* Quick test fixes

* Stop supporting type hints in docstring to fix bugs and simplify the regex

* Update docs for the regex change

* Clean up enum regex

* Wrap functions in {"type": "function", "function": ...}

* Update src/transformers/utils/chat_template_utils.py

Co-authored-by: Pablo Montalvo <39954772+molbap@users.noreply.github.com>

* Temporary tool calling commit

* Add type hints to chat template utils, partially update docs (incomplete!)

* Code cleanup based on @molbap's suggestion

* Add comments to explain regexes

* Fix up type parsing for unions and lists

* Add custom exception types and adjust tests to look for them

* Update docs with a demo!

* Docs cleanup

* Pass content as string

* Update tool call formatting

* Update docs with new function format

* Update docs

* Update docs with a second tool to show the model choosing correctly

---------

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

Co-authored-by: Pablo Montalvo <39954772+molbap@users.noreply.github.com>

* Rename to test_model_common_attributes

The method name is misleading - it is testing being able to get and set embeddings, not common attributes to all models

* Explicitly skip

* Update TVP model to interpolate pre-trained image pad prompter encodings

* feat: Add 2D positional embeddings interpolation in TvpVisualInputEmbedding

* added required comments

* Update TVP model to interpolate pre-trained image pad prompter encodings

* feat: Add 2D positional embeddings interpolation in TvpVisualInputEmbedding

* added required comments

* docstring and argument fix

* doc fixes and test case fix suggested in review.

* varibale typo fix

* styling and name fixes for padding interpolation flag.

* Remove ConversationalPipeline and Conversation object, as they have been deprecated for some time and are due for removal

* Update not-doctested.txt

* Fix JA and ZH docs

* Fix JA and ZH docs some more

* Fix JA and ZH docs some more

* Implement JSON dump conversion for torch_dtype in TrainingArguments

* Add unit test for converting torch_dtype in TrainingArguments to JSON

* move unit test for converting torch_dtype into TrainerIntegrationTest class

* reformating using ruff

* convert dict_torch_dtype_to_str to private method _dict_torch_dtype_to_str

---------

Co-authored-by: jun.4 <jun.4@kakaobrain.com>

* fix: wav2vec2_with_lm decoding error

Fixed an error where some language models could

not be loaded due to a decoding error, since it

was impossible to select the 'unigram_encoding'

value.

* fix: unexpected keyword argument

Fixed unexpected keyword argument caused by

passing kwargs directly to BeamSearchDecoderCTC.

* style: wav2vec2_with_lm

Changed single quotes to double quotes.

* Add list check for image and question

* Handle passing two lists and update docstring

* Add tests

* Add support for dataset

* Add test for dataset as input

* fixup

* fix unprotected import

* fix unprotected import

* fix import again

* fix param type

* Initial attempt

* Updates: PR suggestions

* Interpolate the relative position bias when interpolate_pos_encoding is True

* Add slow tag for the added tests

* Add in DATA2VEC_VISION_INPUTS_DOCSTRING

* Fix contrastive_search for new cache structure, and improve performance by removing inneficient torch.stack(torch.split(x, top_k, dim=0))

* Fix _contrastive_search for non-standard cache using ellipsis slicing

* Fix all outputs.logits memory leaks for all decoding strategies!

* Fix small error in _contrastive_search()

* Make all necessary change and revert for the new class

* Apply coding style

* Remove pipes in type hints for compatibility

* correct type hint

* apply style

* Use DynamicCache by default and solve conflicts

* Fix rebase issues

* Add `_supports_dynamic_cache_class` in models for models that support DynamicCache but not other caches to make DynamicCache the default for more models

* Create generation config to return legacy format by default, or to choose not to

* style

* Fix case when use_cache is False

* Remove default DynamicCache in assiste_decoding if assistant_model does not support it + fix _seen_tokens when cropping cache

* Update prepare_inputs_for_generation() for case with empty DynamicCache

* Correct return of args in _assisted_decoding

* Remove EfficientDynamicCache as it is no longer needed

* Correct mistake in generation config

* Move cache logic of assisted decoding to AssistedCandidateGenerator.__init__

* change DynamicCache function names from "split" to "batch_split" for readability + apply coding style

* Remove `_supports_dynamic_cache_class` attribute after rebase

* Correct missing line lost in conflict resolution during rebasing

* Add special case for Jamba

* Fix jamba test

* Coding style

* coding style

* Correct missing import in rebasing

* Simplify _validate_model_kwargs based on removal of _supports_dynamic_cache attribute

* Simplify code paths in _contrastive_search

* coding style

* Update docstrings of cache methods

* Update prepare_inputs_for_generation() -> past_key_values are always Cache objects

The StoppingCriteriaList allocates is_done without specifying dtype=torch.bool. On XLA this allocates a float tensor and causes a failure on the following line:

is_done = is_done | criteria(input_ids, scores, **kwargs)

by attempting to OR float with bool.

* Added interpolate pos encoding feature and test to deit

* Added interpolate pos encoding feature and test for deit TF model

* readded accidentally delted test for multi_gpu

* storing only patch_size instead of entire config and removed commented code

* Update modeling_tf_deit.py to remove extra line

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

---------

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* add tokenizer_summary to es/_toctree.yml

* add tokenizer_summary to es/

* fix link to Transformes XL in en/

* translate until Subword tokenization section

* fix GPT link in en/

* fix other GPT link in en/

* fix typo in en/

* translate the doc

* run make fixup

* Remove .md in Transformer XL link

* fix some link issues in es/

* fix typo

* fix the get_size_with_aspect_ratio in max_size situation

* make fix-up

* add more general solution

* consider when max_size is not defined

* fix typo

* fix typo

* simple fix

* fix error

* fix if else error

* fix error of size overwrite

* fix yolos image processing

* fix detr image processing

* make

* add longest related test script

* Update src/transformers/models/yolos/image_processing_yolos.py

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* add more test

* add test script about longest size

* remove deprecated

---------

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

While running the model.prepare_tf_dataset() method,

it raises the error below:

```

TypeError: Cannot convert [array([322., 1.])] to EagerTensor of dtype int64

```

This happens, in "DataCollatorForSeq2Seq" function when we are try

to convert the labels to tensors. While converting the labels to tensors,

the labels can be in the format of list of list or list of ndarrays.

There is no problem converting the list of list lables. There is a problem

when the list of ndarrays are float values(like below).

```

[array([322., 1.])]

```

so the exception raises while trying to convert this label to tensors using

below code.

```

batch["labels"] = tf.constant(batch["labels"], dtype=tf.int64)

```

The labels are always integer values, so this got converted to float

values in the label padding operation below.

```

batch["labels"] = [

call(label)

if padding_side == "right"

else np.concatenate([[self.label_pad_token_id] * (max_label_length - len(label)), label])

for label in labels

]

```

Here we have 2 cases:

1 - Concatenating an array having integer padding token value with labels.

2 - Concatenating an empty array with labels.

----------------------------------------------------------------------------------------

case 1: Concatenating an array having integer padding token value with labels.

WORKS EXPECTED:

----------------------------------------------------------------------------------------

```

label = np.array([233, 1])

max_label_length = 4

label_pad_token_id = -100

np.concatenate([[label_pad_token_id] * (max_label_length - len(label)), label])

o/p:

array([-100, -100, 233, 1])

```

----------------------------------------------------------------------------------------

Case 2: Concatenating an empty array with labels.

GIVES THE ISSUE:

This scenorio can happen when the label has the maximum label length -- No padding needed.

----------------------------------------------------------------------------------------

```

label = np.array([233, 1])

max_label_length = 2

label_pad_token_id = -100

np.concatenate([[label_pad_token_id] * (max_label_length - len(label)), label])

o/p:

array([233., 1.])

```

----------------------------------------------------------------------------------------

Solution:

----------------------------------------------------------------------------------------

We need to concatenate a ndarray of dtype int with labels.

AFTER FIX:

----------

case 1:

```

label = np.array([233, 1])

max_label_length = 4

label_pad_token_id = -100

np.concatenate([np.array([label_pad_token_id] * (max_label_length - len(label)), dtype=np.int64),label])

o/p:

array([-100, -100, 233, 1])

```

case 2:

```

label = np.array([233, 1])

max_label_length = 2

label_pad_token_id = -100

np.concatenate([np.array([label_pad_token_id] * (max_label_length - len(label)), dtype=np.int64),label])

o/p:

array([233, 1])

```

* token healing impl + trie with extensions

* make fixup

* prefix-robust space tokenization

* examples readme and requirements

* make fixup

* allow input prompt and model

* redundant defaults

* Specialized Trie

* make fixup

* updated tests with new inherited Tree

* input ids to auto device_map

* rm unused import

* Update src/transformers/generation/utils.py

Co-authored-by: Arthur <48595927+ArthurZucker@users.noreply.github.com>

* naming convention

* Revert "naming convention"

This reverts commit dd39d9c5b7a969e2d8a8d2a8e54f121b82dc44f0.

* naming convention

* last -hopefully- changes

---------

Co-authored-by: Arthur <48595927+ArthurZucker@users.noreply.github.com>

Corrected a typo in security.md. Changed `use_safetenstors` to `use_safetensors` in the section discussing the usage of safe formats for loading models to prevent arbitrary code execution.

* current working example!

* commit regex and result file

* update

* nit

* push the conversion file

* oups

* roadmap and nits

* attempt diffs for 3 files

* persimmon

* nit

* add diff file that is the same as the modeling_llama.py

* fix rope nits

* updates

* updates with converted versions

* give some breathing space to the code

* delete

* update

* update

* push the actual result

* update regex patterns

* update regex patterns

* fix some issues

* fix some issues

* fix some issues

* updates

* updates

* updates

* updates

* updates

* revert changes done to llama

* updates

* update gemma

* updates

* oups

* current state

* current state

* update

* ouiiii

* nit

* clear diffs

* nit

* fixup

* update

* doc 🚀

* 🔥

* for now use gemma

* deal with comments

* style

* handle funtions

* deal with assigns

* todos

* process inheritage

* keep decorators?

* 🤗

* deal with duplicates

* fixup

* correctly remove duplicate code

* run ruff post script

* ruff deals pretty well with imports, let's leave it to him

* ah maybe not lol

* for now remove all imports from child.

* nit

* conversion of llama

* okay

* convert starcoder2

* synch with main

* update llama diff

* updates

* https://docs.astral.sh/ruff/rules/redefined-while-unused/ fixes the imports, bit needs later version of ruff

* updates

* okay actual state

* non zero exit

* update!

* revert unrelated

* remove other diff files

* updates

* cleanup

* update

* less diff!

* stash

* current updates

* updates

* No need for call

* finished fining deps

* update

* current changes

* current state

* current state

* new status

* nit

* finally

* fixes

* nits

* order is now expected

* use logger info instead of prints

* fixup

* up

* nit

* update

* nits

* update

* correct merge

* update

* update

* update

* add warning

* update caution message

* update

* better merging strategy

* copy class statements :wink

* fixups

* nits

* update

* Apply suggestions from code review

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* nits

* smaller header

* do cleanup some stuff

* even simpler header?

* fixup

* updates

* ruff

* update examples

* nit

* TODO

* state

* OUUUUUUF

* current state

* nits

* final state

* add a readme

* fixup

* remove diff llama

* fix

* nit

* dummy noy funny

* ruff format tests src utils --check

* everless diffs

* less diffs and fix test

* fixes

* naming nit?

* update converter and add supper example

* nits

* updated for function signatures

* update

* update

* add converted dummies

* autoformat

* single target assign fix

* fixup

* fix some imports

* fixes

* don't push them

* `# noqa: F841`

---------

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* Description of quantization_config

Added missing description about quantization_config in replace_with_bnb_linear for better readability.

* Removed trailing spaces

`mask` variable is not defined. probably a writing mistake. it should be `segmentation_map`. `segmentation_map` should be a `1` channel image rather than `RGB`.

[on a different note, the `mask_url` is the same as `raw_image`. could provide a better example.

* Fix has_file in offline mode

* harmonize env variable for offline mode

* Switch to HF_HUB_OFFLINE

* fix test

* revert test_offline to test TRANSFORMERS_OFFLINE

* Add new offline test

* merge conflicts

* docs

* seems like `split_special_tokens` is used here

* split special token

* add new line at end of file

* moving split special token test to common tests

* added assertions

* test

* fixup

* add co-author

* passing rest of args to gptsan_japanese, fixing tests

* removing direct comparison of fast and slow models

* adding test support for UDOP and LayoutXLM

* ruff fix

* readd check if slow tokenizer

* modify test to handle bos tokens

* removing commented function

* trigger build

* applying review feedback - updated docstrings, var names, and simplified tests

* ruff fixes

* Update tests/test_tokenization_common.py

Co-authored-by: Arthur <48595927+ArthurZucker@users.noreply.github.com>

* applying feedback, comments

* shutil temp directory fix

---------

Co-authored-by: Arthur Zucker <arthur.zucker@gmail.com>

Co-authored-by: Ita Zaporozhets <itazaporozhets@Itas-MBP.localdomain>

Co-authored-by: itazap <itazap@users.noreply.github.com>

Co-authored-by: Arthur <48595927+ArthurZucker@users.noreply.github.com>

Co-authored-by: Ita Zaporozhets <itazaporozhets@Itas-MacBook-Pro.local>

* added interpolation for vitmae model in pytorch as well as tf.

* Update modeling_vit_mae.py

irreugalr import fixed

* small changes and proper formatting

* changes suggested in review.

* modified decoder interpolate_func

* arguments and docstring fix

* Apply suggestions from code review

doc fixes

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

---------

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* add test that currently fails

* test passed

* all perceiver passed

* fixup, style, quality, repo-consistency, all passed

* Apply suggestions from code review: default to False + compute sqrt once only

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* fix a minor bracket

* replace dim with self._num_channels

* add arguments to the rest preprocessors

---------

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* add prefix space ignored in llama #29625

* adding test with add_prefix_space=False

* ruff

---------

Co-authored-by: Ita Zaporozhets <itazaporozhets@Itas-MBP.localdomain>

* Add a check that warmup_setps is either 0 or >= 1

Update training_args.py to add a check that warmup_setps is either 0 or >= 1. Otherwise, raise an error.

* Update src/transformers/training_args.py

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

---------

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* [build-ci-image]

* correct branch

* push ci image

* [build-ci-image]

* update scheduled as well

* [push-ci-image]

* [build-ci-image]

* [push-ci-image]

* update deps

* [build-ci-image]

* [build-ci-image]

* [build-ci-image]

* [build-ci-image]

* [build-ci-image]

* [build-ci-image]

* oups [build-ci-image]

* [push-ci-image]

* fix

* [build-ci-image]

* [build-ci-image]

* [build-ci-image]

* [build-ci-image]

* [build-ci-image]

* [build-ci-image]

* [build-ci-image]

* updated

* [build-ci-image] update tag

* [build-ci-image]

* [build-ci-image]

* fix tag

* [build-ci-image]

* [build-ci-image]

* [build-ci-image]

* [build-ci-image]

* github name

* commit_title?

* fetch

* update

* it not found

* dev

* dev

* [push-ci-image]

* dev

* dev

* update

* dev

* dev print dev commit message dev

* dev ? dev

* dev

* dev

* dev

* dev

* [build-ci-image]

* [build-ci-image]

* [push-ci-image]

* revert unwanted

* revert convert as well

* no you are not important

* [build-ci-image]

* Update .circleci/config.yml

* pin tf probability dev

If required padding for a crop larger than input image is odd-numbered,

the padding would be rounded down instead of rounded up, causing the

output dimension to be one smaller than it should be.

* add model_memory_anatomy to es/_toctree.yml

* copy model_memory_anatomy.md to es/

* translate first section

* translate doc

* chage forward activations

* fix sentence and and link to Trainer

* fix Trainer link

* Introduce configured_state

* Include note on tuning

* Allow for users to have defined a state already

* Include tests

* Add note on hpam tune

* Guard a bit better

* Update src/transformers/training_args.py

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* Update src/transformers/training_args.py

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* Finish rebase

* Finish rebase

* Guard carefully

* Fixup test

* Refactor

* Fin refactor

* Comment

* Update wrt feedback

---------

Co-authored-by: amyeroberts <22614925+amyeroberts@users.noreply.github.com>

* fix for custom pipeline configuration

* fix for custom pipelines

* remove extra exception

* added test for custom pipelines extra tag

* format with ruff

* limit extra tag for first time only

* format with ruff

* improve tests for custom pipelines

Fix num_hidden_layers in initialization

Originally, the initialization was using config.num_layers instead of config.num_hidden_layers. This fixes that.

* Add MistralForTokenClassification

* Add tests and docs

* Add token classification for Mixtral and Qwen2

* Save llma for token classification draft

* Add token classification support for Llama, Gemma, Persimmon, StableLm and StarCoder2

* Formatting

* Add token classification support for Qwen2Moe model

* Add dropout layer to each ForTokenClassification model

* Add copied from in tests

* Update src/transformers/models/llama/modeling_llama.py

Co-authored-by: Younes Belkada <49240599+younesbelkada@users.noreply.github.com>

* Propagate suggested changes

* Style

---------

Co-authored-by: Younes Belkada <49240599+younesbelkada@users.noreply.github.com>

* Support arbitrary processor

* fix

* nit

* update

* nit

* nit

* fix and revert

* add a small test

* better check

* fixup

* bug so let's just use class for now

* oups

* .

* Remove deprecated logic and warnings

* Add back some code that seems to be important...

* Let's just add all he nllb stuff back; removing it is a bit more involved

* Remove kwargs

* Remove more kwargs

* Fix llama model forward function with attention=True, same-length encoded sequence.

* Fix style

* propagate fix to modeling_cohere, gemma, dbrx, and olmo (which copy the same sdpa masking logic from llama)

* Fix style

* ignore unnecessary sdpa mask converter when output_attentions=True

* add tests checking sdpa and eager outputs match when output_attentions=True

* Split if statements in two lines

Co-authored-by: Arthur <48595927+ArthurZucker@users.noreply.github.com>

* Fix formatting

* Add fix to new jetmoe model

* Add missing output_attentions argument to jetmoe mask creation

---------

Co-authored-by: Arthur <48595927+ArthurZucker@users.noreply.github.com>

* Add support for mixing languages in a single batch

* Update docstring

* Enable different detected languages in batch

* Do not require input_features

* Test list of languages

* Fix comment

* Make init_tokens length-1 if possible, broadcast at the end

* Test for ValueError with language list of incorrect length

* Slow test for batched multilingual transcription

* fixup

* Apply suggestions from code review

Co-authored-by: Sanchit Gandhi <93869735+sanchit-gandhi@users.noreply.github.com>

* Address review, refactor

* Second attempt to move this line where it was originally

* Split test, fix a bug

---------

Co-authored-by: Sanchit Gandhi <93869735+sanchit-gandhi@users.noreply.github.com>

* Adding model_parallel = False

* Revert "Adding model_parallel = False"

This reverts commit ba1d99976acb598824ce3347dbe7d848daa21e79.

* Trainer: circumvent error for model in which is_parallelizable is True but does not have model_parallel attribute

* Add utility for finding candidate models for deprecation

* Update model init

* Make into configurable script

* Fix path

* Add sorting of base object alphabetically

* Tidy

* Refactor __init__ alpha ordering

* Update script with logging

* fix import

* Fix logger

* Fix logger

* Get config file before moving files

* Take models from CLI

* Split models into lines to make easier to feed to deprecate_models script

* Update

* Use posix path

* Print instead

* Add example in module docstring

* Fix up

* Add clarifying comments; add models to DEPRECATE_MODELS

* Address PR comments

* Don't update relative paths on the same level

* Initial commit

* Just a copy of modeling_idefics.py that will be ported to TF

* - Prepend TF to the name of all classes

- Convert pytorch ops to TF (not all operations are converted yet)

* Add TF imports

* Add autotranslated files

* Add TF classes to model_tf_auto.py

* Add the TF classes in model_doc

* include auto-translated code

* Adopted from auto-translated version

* Add a forgotten super().build

* Add test code for TF version.

* Fix indentation and load pytorch weights for now

* Some fixes. Many tests are still failing but some are passing now.

- I have added TODO's for some of the hacks I made to unblock me

and I will address them soon

- I have the processing_idefics.py hacked in my view to support TF temporarily

* Add ALL_LAYERNORM_LAYERS to match pytorch

* Revert "Add ALL_LAYERNORM_LAYERS to match pytorch"

This reverts commit 7e0a35119b4d7a6284d04d8c543fba1b29e573c9 as it

is not needed in the tf implementation.

* Fix freeze_relevant_params()

* Some more fixes

* Fix test_attention_outputs

* Add tf stuff to processing_idefics.py

processing_idefics.py supports both pytorch and tf now.

test_processor_idefics.py for pytorch is passing, so i didn't break anything

but still some issues with tf. I also need to add tf tests in

test_processor_idefics.py.

* Pass return_tensors to image processing code and fix test

* Pass return_tensors to the image processor __init__

* Fix several test cases

- Make input to some of the forward pass of type `TFModelInputType`

- Decorate main layer forward pass with `@unpack_inputs`

- Decorate main layer with `@keras_serializable`

- Pass `inputs` to TFIdeficsModel

* Some more fixes forgotten in last commit

* Fix processing code and vision_tf.py

* Fix perceiver bug

* Import from

* Auto-add build() methods + style pass

* Fix build() errors due to `None` being passed as shape to some layers

* Change name in TFIdeficsForVisionText2Text to attribute in IdeficsForVisionText2Text

* Fix pytorch weights load for tf2

There were a lot of `name=` missing in weight initialization code.

* Attempt to fix CI

* Add back accidently removed line

* Remove torch-specific stuff from the TF test file

* make fix-copies, make style, remove autotranslated files

* Fixes to imports/docstrings

* Let's try the from future import in desperation

* Fix the core random_attention_mask fn to match the torch/flax behaviour

* Clean random_attention_mask up correctly

* Remove torch-only test

* Fix loss shape, couple of nits

* make style

* Don't test for OOB embeddings because IDEFICS uses those deliberately

* Fix loss computation to handle masking

* Fix test failures when flattening

* Fix some test failures

- Add cross attention gate which was missing and wasn't being passed arround

- Fix overwriting of image_attention_mask due to hack I had for dummy inputs

* Add a proper stateless scaled_dot_product_attention

* make style

* Adding missing attribute from the PyTorch version

* Small cleanups to decoupledlinearlayer in case that helps

* Pass epsilon to LayerNormalization

* Attemp to fix pytorch weight cross-loading for TFIdeficsEmbedding

* Fix a bug in TFIdeficsGatedCrossAttentionLayer

* Patching up build() methods

* Constant self.inv_freq

* Constant self.inv_freq

* First working version

The TF implementation works now, there was a bug in the TFIdeficsDecoupledLinear

where the weights were mis-intialized (in_features,out_features)

when it should be: (out_features, in_features)

I have tested this so far with tiny-random and idefics-9b-instruct

and gives correct output.

I also dumped the final outputs for both pytorch and TF

and they are identical.

* Fix some test failures

* remove print statement

* Fix return_tensors

* Fix CI test failure check_code_quality

* Attempt to fix CI failures by running `make fixup`

The hardcoded IDs in test_modeling_tf_idefics.py are for the integration

test and makes that file unreadable and should probably be moved to a seperate file.

* Attempt to fix tests_pr_documentation_tests

* Fix a test failure in test_image_processing_idefics.py

* Fix test test_pt_tf_model_equivalence

* Fix a few failures

* Tiny fix

* Some minor fixes

* Remove a duplicate test

* Override a few test failures for IDEFICS

- `test_keras_save_load` is passing now

- `test_compile_tf_model` is still failing

* Fix processing_idefics.py after rebase

* Guard import keras with is_tf_available

* fix check code quality

* fix check code quality

* Minor fixes

* Skip test_save_load temporarily

This test passed on my local box but fails on the CI, skipping

for now to see if there are other remaining failures on the CI.

* Run `ruff format tests src utils`

* Fix last failing test, `test_compile_tf_model`

* Add fixes for vision_tf.py

I forgot to add this file in last commit.

* Minor fixes

* Replace "<<<" with "<<" for doc tests

IDEFICS-9B is too big for doctest runner, so don't run it there

* Make code more readable

* Fix bug after code review

I added a layer_norm_eps to IdeficsConfig but I don't even need it

since the vision config has a layer_norm_eps.

* Fix after code review

Use original code tokenizer.convert_tokens_to_ids

* Keep PyTorch as the default return_tensors

* Fixes to modeling_tf after code review

* Fixes from code review

- Remove all references of `TF_IDEFICS_PRETRAINED_MODEL_ARCHIVE_LIST`

- Pass 1e-5 to LayerNormalization in perceiver

* Run ruff

* Undo a change

* Refactor processing code after Matt's suggestion

* Remove TODO's that aren't needed anymore

* For pytorch, Use original pytorch processing code from main

Since this PR is a TF port it shouldn't make any modifications

to pytorch IDEFICS code. This changes undo's the pytorch processing

modifications I made and uses original code from main.

* Update tests/models/idefics/test_modeling_idefics.py

* Update tests/models/idefics/test_modeling_tf_idefics.py

* Add missing imports for is_pt_tf_cross_test

* [DO NOT MERGE]: This is a commit for debugging and will be reverted

The cross test `test_pt_tf_model_equivalence` passes locally but

fails when running on the CI. This commit is to help debug that

and will be reverted.

* Revert "[DO NOT MERGE]: This is a commit for debugging and will be reverted"

This reverts commit 8f0d709ec5bd46685fb0b4259d914ffee794875b.

* [DO NOT MERGE]: This commit is for debugging a CI failure and will be reverted

* [DO NOT MERGE]: This commit is for debugging a CI failure and will be reverted

* Revert "[DO NOT MERGE]: This commit is for debugging a CI failure and will be reverted"

This reverts commit 998cc38b8c3d313bf5e5eb55a7f5b7b881897b89.

* Revert "[DO NOT MERGE]: This commit is for debugging a CI failure and will be reverted"

This reverts commit 1c695ac4219c4ae4d39b330b01744dc27deb7dd4.

* Don't skip test_save_load

IIRC test_save_load was also failing on the CI but not on my local

box, it might be easier to debug that on the CI first than the cross tests

* Debugging commit, will be reverted

* Revert "Debugging commit, will be reverted"

This reverts commit 8eafc8e41e20c4e95a3a90834f06a6e9f445e2d5.

* Override `test_save_load` and push model to save

Maybe this will help me repro this weird bug

* pass my repo_id

* add endpoint

* Pass a temp (write) token just for this CI

* Undo last few commits, still pushing to hub for model debugging

The issue seems to be with save_pretrained(), when I looked at the model saved

from the CI test failure it is basically empty and has no weights.

`self.save_weights(..)` seems to be failing in save_pretrained but needs

more debugging

* Add logging to modeling tf utils, will be reverted just for debugging

* Debugging, will revert

* Revert "Debugging, will revert"

This reverts commit 9d0d3075fb7c82d8cde3a5c76bc8f3876c5c55d3.

* Revert "Add logging to modeling tf utils, will be reverted just for debugging"

This reverts commit 774b6b7b1c17b3ce5d7634ade768f2f686cee617.

* Remove `test_save_load`

The CI failures are gone after my latest rebase, no idea why

but I was still saving the model to my hub on HF and the tf_model.h5

file now has everything.

* Run make fix-copies

* Run ruff format tests src utils

* Debugging commit, will be reverted

* Run ruff, also trigger CI run

* Run ruff again

* Undo debugging commit

---------

Co-authored-by: Matt <rocketknight1@gmail.com>

Co-authored-by: Matt <Rocketknight1@users.noreply.github.com>

* blip with interpolated pos encoding

* feat: Add interpolate_pos_encoding option to other models from `BLIP` family.

* include check for textual generated content in tests

description:Submit a proposal/request for a new transformers feature

labels:["feature"]

labels:["Feature request"]

body:

- type:textarea

id:feature-request

@ -19,7 +19,7 @@ body:

label:Motivation

description:|

Please outline the motivation for the proposal. Is your feature request related to a problem? e.g., I'm always frustrated when [...]. If this is related to another GitHub issue, please link here too.

RUN_SLOW:yes# For gated repositories, we still need to agree to share information on the Hub repo. page in order to get access. # This token is created under the bot `hf-transformers-bot`.

@ -14,7 +14,7 @@ Models uploaded on the Hugging Face Hub come in different formats. We heavily re

models in the [`safetensors`](https://github.com/huggingface/safetensors) format (which is the default prioritized

by the transformers library), as developed specifically to prevent arbitrary code execution on your system.

To avoid loading models from unsafe formats(e.g. [pickle](https://docs.python.org/3/library/pickle.html), you should use the `use_safetenstors` parameter. If doing so, in the event that no .safetensors file is present, transformers will error when loading the model.

To avoid loading models from unsafe formats(e.g. [pickle](https://docs.python.org/3/library/pickle.html), you should use the `use_safetensors` parameter. If doing so, in the event that no .safetensors file is present, transformers will error when loading the model.

@ -596,7 +596,7 @@ Keywords: Data-Centric AI, Data Quality, Noisy Labels, Outlier Detection, Active

## [BentoML](https://github.com/bentoml/BentoML)

[BentoML](https://github.com/bentoml) is the unified framework for for building, shipping, and scaling production-ready AI applications incorporating traditional ML, pre-trained AI models, Generative and Large Language Models.

[BentoML](https://github.com/bentoml) is the unified framework for building, shipping, and scaling production-ready AI applications incorporating traditional ML, pre-trained AI models, Generative and Large Language Models.

All Hugging Face models and pipelines can be seamlessly integrated into BentoML applications, enabling the running of models on the most suitable hardware and independent scaling based on usage.

Keywords: BentoML, Framework, Deployment, AI Applications

# arguments specific to this wrapper for our own customization

parser.add_argument("--ensure_empty",type=bool,default=True,help="If to create a temporary directory.")

parser.add_argument(

"--commit",

type=list_str,

default="",

help="Comma-separated list of branch names and/or commit sha values on which the benchmark will run. If `diff` is specified, it will run on both the current head and the `main` branch.",

)

parser.add_argument("--metrics",type=str,help="The metrics to be included in the summary.")

parser.add_argument("--repo_id",type=str,default=None,help="The repository to which the file will be uploaded.")

parser.add_argument("--path_in_repo",type=str,default=None,help="Relative filepath in the repo.")

parser.add_argument("--token",type=str,default=None,help="A valid user access token (string).")

RUN pip install --no-cache-dir "transformers[sklearn,tf-cpu,testing,sentencepiece,tf-speech,vision]"

RUN pip install --no-cache-dir "git+https://github.com/huggingface/transformers.git@${REF}#egg=transformers[sklearn,tf-cpu,testing,sentencepiece,tf-speech,vision]"

RUN uv pip install --no-cache-dir "protobuf==3.20.3" tensorflow_probability

@ -162,7 +162,7 @@ Transformers verwendet die Shell-Umgebungsvariablen `PYTORCH_TRANSFORMERS_CACHE`

## Offline Modus

Transformers ist in der Lage, in einer Firewall- oder Offline-Umgebung zu laufen, indem es nur lokale Dateien verwendet. Setzen Sie die Umgebungsvariable `TRANSFORMERS_OFFLINE=1`, um dieses Verhalten zu aktivieren.

Transformers ist in der Lage, in einer Firewall- oder Offline-Umgebung zu laufen, indem es nur lokale Dateien verwendet. Setzen Sie die Umgebungsvariable `HF_HUB_OFFLINE=1`, um dieses Verhalten zu aktivieren.

Die `bitsandbytes`-Integration unterstützt Datentypen mit 8bit und 4bit Genauigkeit, was für das Laden großer Modelle nützlich ist, weil es Speicher spart (lesen Sie den `bitsandbytes`-Integrations [guide](./quantization#bitsandbytes-integration), um mehr zu erfahren). Fügen Sie die Parameter `load_in_8bit` oder `load_in_4bit` zu [`~PreTrainedModel.from_pretrained`] hinzu und setzen Sie `device_map="auto"`, um das Modell effektiv auf Ihre Hardware zu verteilen:

@ -185,16 +185,16 @@ pytest -k "test and ada" tests/test_optimization.py

Manchmal müssen Sie `accelerate` Tests für Ihre Modelle ausführen. Dazu fügen Sie einfach `-m accelerate_tests` zu Ihrem Befehl hinzu, wenn Sie diese Tests bei einem `OPT`-Lauf ausführen möchten:

Um zu testen, ob die Dokumentationsbeispiele korrekt sind, sollten Sie überprüfen, ob die `doctests` erfolgreich sind.

Lassen Sie uns als Beispiel den docstring von [WhisperModel.forward](https://github.com/huggingface/transformers/blob/main/src/transformers/models/whisper/modeling_whisper.py#L1017-L1035) verwenden:

Um zu testen, ob die Dokumentationsbeispiele korrekt sind, sollten Sie überprüfen, ob die `doctests` erfolgreich sind.

Lassen Sie uns als Beispiel den docstring von [WhisperModel.forward](https://github.com/huggingface/transformers/blob/main/src/transformers/models/whisper/modeling_whisper.py#L1017-L1035) verwenden:

```python

```python

r"""

Returns:

@ -217,8 +217,8 @@ Example:

```

Führen Sie einfach die folgende Zeile aus, um automatisch jedes docstring-Beispiel in der gewünschten Datei zu testen:

```bash

Führen Sie einfach die folgende Zeile aus, um automatisch jedes docstring-Beispiel in der gewünschten Datei zu testen:

```bash

pytest --doctest-modules <path_to_file_or_dir>

```

Wenn die Datei eine Markdown-Erweiterung hat, sollten Sie das Argument `--doctest-glob="*.md"` hinzufügen.

@ -862,7 +862,7 @@ Code, der fehlerhaft ist, einen schlechten Zustand verursacht, der sich auf ande

- Hier sehen Sie, wie Sie einen ganzen Test bedingungslos überspringen können:

```python no-style

@unittest.skip("this bug needs to be fixed")

@unittest.skip(reason="this bug needs to be fixed")

@ -28,8 +28,8 @@ An agent is a system that uses an LLM as its engine, and it has access to functi

These *tools* are functions for performing a task, and they contain all necessary description for the agent to properly use them.

The agent can be programmed to:

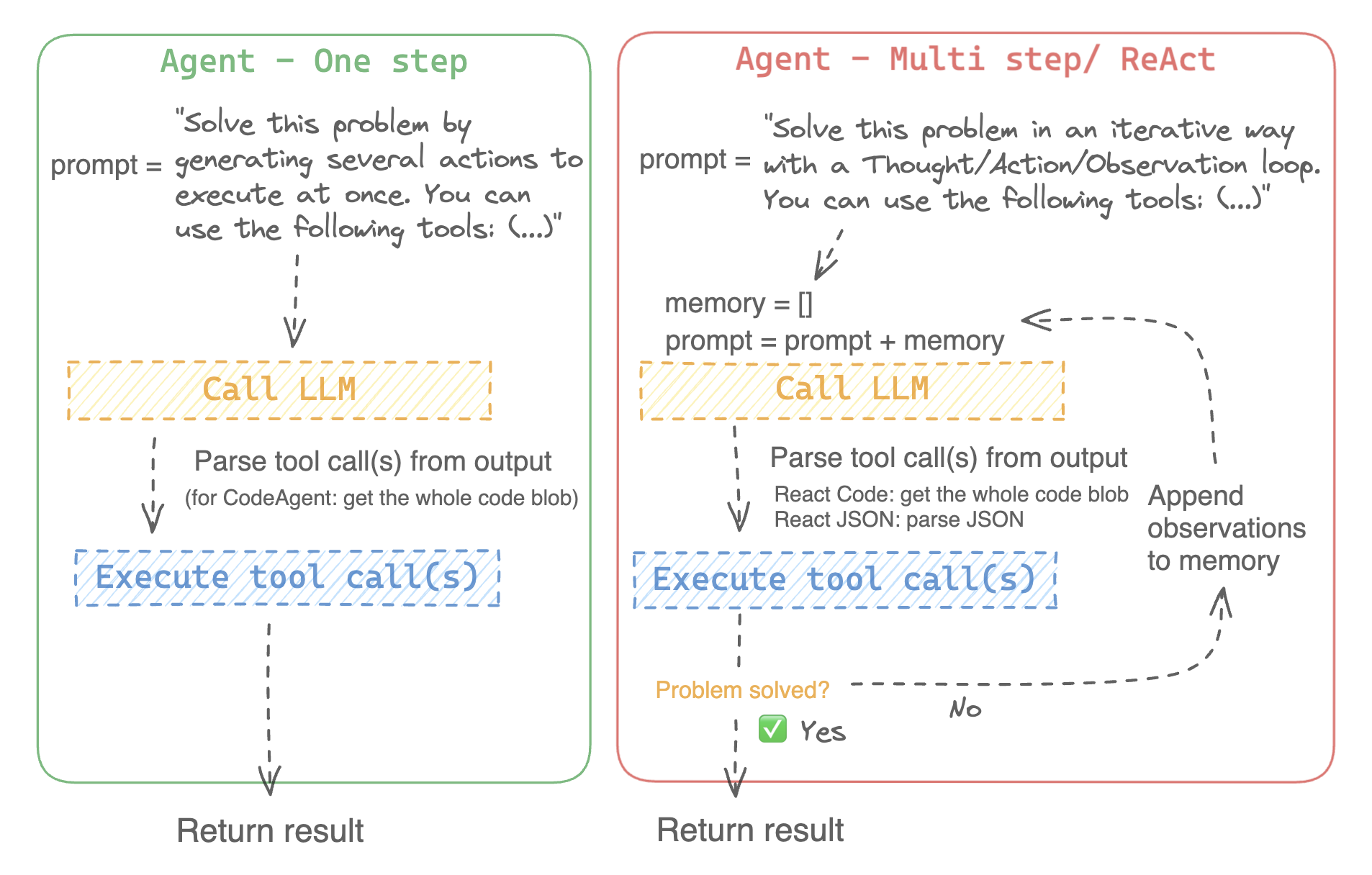

- devise a series of actions/tools and run them all at once like the `CodeAgent` for example

- plan and execute actions/tools one by one and wait for the outcome of each action before launching the next one like the `ReactJsonAgent` for example

- devise a series of actions/tools and run them all at once like the [`CodeAgent`] for example

- plan and execute actions/tools one by one and wait for the outcome of each action before launching the next one like the [`ReactJsonAgent`] for example

### Types of agents

@ -42,15 +42,15 @@ This agent has a planning step, then generates python code to execute all its ac

This is the go-to agent to solve reasoning tasks, since the ReAct framework ([Yao et al., 2022](https://huggingface.co/papers/2210.03629)) makes it really efficient to think on the basis of its previous observations.

We implement two versions of ReactJsonAgent:

- [`~ReactJsonAgent`] generates tool calls as a JSON in its output.

- [`~ReactCodeAgent`] is a new type of ReactJsonAgent that generates its tool calls as blobs of code, which works really well for LLMs that have strong coding performance.

- [`ReactJsonAgent`] generates tool calls as a JSON in its output.

- [`ReactCodeAgent`] is a new type of ReactJsonAgent that generates its tool calls as blobs of code, which works really well for LLMs that have strong coding performance.

> [!TIP]

> Read [Open-source LLMs as LangChain Agents](https://huggingface.co/blog/open-source-llms-as-agents) blog post to learn more the ReAct agent.

For example, here is how a ReAct agent would work its way through the following question.

For example, here is how a ReAct Code agent would work its way through the following question.

```py3

>>>agent.run(

@ -124,7 +124,7 @@ You could use any `llm_engine` method as long as:

You also need a `tools` argument which accepts a list of `Tools`. You can provide an empty list for `tools`, but use the default toolbox with the optional argument `add_base_tools=True`.

Now you can create an agent, like `CodeAgent`, and run it. For convenience, we also provide the `HfEngine` class that uses `huggingface_hub.InferenceClient` under the hood.

Now you can create an agent, like [`CodeAgent`], and run it. For convenience, we also provide the [`HfEngine`] class that uses `huggingface_hub.InferenceClient` under the hood.

```python

fromtransformersimportCodeAgent,HfEngine

@ -139,7 +139,7 @@ agent.run(

```

This will be handy in case of emergency baguette need!

You can even leave the argument `llm_engine` undefined, and an [~HfEngine] will be created by default.

You can even leave the argument `llm_engine` undefined, and an [`HfEngine`] will be created by default.

```python

fromtransformersimportCodeAgent

@ -181,13 +181,27 @@ You can also run an agent consecutively for different tasks: each time the attri

A Python interpreter executes the code on a set of inputs passed along with your tools.

This should be safe because the only functions that can be called are the tools you provided (especially if it's only tools by Hugging Face) and the print function, so you're already limited in what can be executed.

The Python interpreter also doesn't allow any attribute lookup or imports (which shouldn't be needed for passing inputs/outputs to a small set of functions) so all the most obvious attacks shouldn't be an issue.

The Python interpreter also doesn't allow imports by default outside of a safe list, so all the most obvious attacks shouldn't be an issue.

You can still authorize additional imports by passing the authorized modules as a list of strings in argument `additional_authorized_imports` upon initialization of your [`ReactCodeAgent`] or [`CodeAgent`]:

>>>agent.run("Could you get me the title of the page at url 'https://huggingface.co/blog'?")

(...)

'Hugging Face – Blog'

```

The execution will stop at any code trying to perform an illegal operation or if there is a regular Python error with the code generated by the agent.

> [!WARNING]

> The LLM can generate arbitrary code that will then be executed: do not add any unsafe imports!

### The system prompt

An agent, or rather the LLM that drives the agent, generates an output based on the system prompt. The system prompt can be customized and tailored to the intended task. For example, check the system prompt for the `ReactCodeAgent` (below version is slightly simplified).

An agent, or rather the LLM that drives the agent, generates an output based on the system prompt. The system prompt can be customized and tailored to the intended task. For example, check the system prompt for the [`ReactCodeAgent`] (below version is slightly simplified).

```text

You will be given a task to solve as best you can.

> Please make sure to define the `<<tool_descriptions>>` string somewhere in the `template` so the agent is aware

of the available tools.

### Inspecting an agent run

Here are a few useful attributes to inspect what happened after a run:

-`agent.logs` stores the fine-grained logs of the agent. At every step of the agent's run, everything gets stored in a dictionary that then is appended to `agent.logs`.

- Running `agent.write_inner_memory_from_logs()` creates an inner memory of the agent's logs for the LLM to view, as a list of chat messages. This method goes over each step of the log and only stores what it's interested in as a message: for instance, it will save the system prompt and task in separate messages, then for each step it will store the LLM output as a message, and the tool call output as another message. Use this if you want a higher-level view of what has happened - but not every log will be transcripted by this method.

## Tools

A tool is an atomic function to be used by an agent.

You can for instance check the [~PythonInterpreterTool]: it has a name, a description, input descriptions, an output type, and a `__call__` method to perform the action.

You can for instance check the [`PythonInterpreterTool`]: it has a name, a description, input descriptions, an output type, and a `__call__` method to perform the action.

When the agent is initialized, the tool attributes are used to generate a tool description which is baked into the agent's system prompt. This lets the agent know which tools it can use and why.

@ -259,7 +280,7 @@ Transformers comes with a default toolbox for empowering agents, that you can ad

- **Speech to text**: given an audio recording of a person talking, transcribe the speech into text ([Whisper](./model_doc/whisper))

- **Text to speech**: convert text to speech ([SpeechT5](./model_doc/speecht5))

- **Translation**: translates a given sentence from source language to target language.

- **Python code interpreter**: runs your the LLM generated Python code in a secure environment. This tool will only be added to [~ReactJsonAgent] if you use `add_base_tools=True`, since code-based tools can already execute Python code

- **Python code interpreter**: runs your the LLM generated Python code in a secure environment. This tool will only be added to [`ReactJsonAgent`] if you use `add_base_tools=True`, since code-based tools can already execute Python code

You can manually use a tool by calling the [`load_tool`] function and a task to perform.

@ -365,7 +386,7 @@ And the output:

`"The most downloaded model for the 'text-to-video' task is ByteDance/AnimateDiff-Lightning."`

### Manage agent toolbox

### Manage your agent's toolbox

If you have already initialized an agent, it is inconvenient to reinitialize it from scratch with a tool you want to use. With Transformers, you can manage an agent's toolbox by adding or replacing a tool.

config=MaskFormerConfig(backbone="microsoft/resnet50",use_pretrained_backbone=False)# backbone and neck config

config=MaskFormerConfig(backbone="microsoft/resnet-50",use_pretrained_backbone=False)# backbone and neck config

model=MaskFormerForInstanceSegmentation(config)# head

```

@ -366,15 +356,43 @@ model = MaskFormerForInstanceSegmentation(config)

```

</hfoption>

</hfoptions>

</hfoptions id="timm backbone">

[timm](https://hf.co/docs/timm/index) models are loaded with [`TimmBackbone`] and [`TimmBackboneConfig`].

[timm](https://hf.co/docs/timm/index) models are loaded within a model with `use_timm_backbone=True` or with [`TimmBackbone`] and [`TimmBackboneConfig`].

Use `use_timm_backbone=True` and `use_pretrained_backbone=True` to load pretrained timm weights for the backbone.

config=MaskFormerConfig(backbone="resnet50",use_pretrained_backbone=False,use_timm_backbone=True)# backbone and neck config

model=MaskFormerForInstanceSegmentation(config)# head

```

You could also load the backbone config and use it to create a `TimmBackbone` or pass it to the model config. Timm backbones will load pretrained weights by default. Set `use_pretrained_backbone=False` to load randomly initialized weights.

@ -16,11 +16,11 @@ rendered properly in your Markdown viewer.

# DeepSpeed

[DeepSpeed](https://www.deepspeed.ai/) is a PyTorch optimization library that makes distributed training memory-efficient and fast. At it's core is the [Zero Redundancy Optimizer (ZeRO)](https://hf.co/papers/1910.02054) which enables training large models at scale. ZeRO works in several stages:

[DeepSpeed](https://www.deepspeed.ai/) is a PyTorch optimization library that makes distributed training memory-efficient and fast. At its core is the [Zero Redundancy Optimizer (ZeRO)](https://hf.co/papers/1910.02054) which enables training large models at scale. ZeRO works in several stages:

* ZeRO-1, optimizer state partioning across GPUs

* ZeRO-1, optimizer state partitioning across GPUs

* ZeRO-2, gradient partitioning across GPUs

* ZeRO-3, parameteter partitioning across GPUs

* ZeRO-3, parameter partitioning across GPUs

In GPU-limited environments, ZeRO also enables offloading optimizer memory and computation from the GPU to the CPU to fit and train really large models on a single GPU. DeepSpeed is integrated with the Transformers [`Trainer`] class for all ZeRO stages and offloading. All you need to do is provide a config file or you can use a provided template. For inference, Transformers support ZeRO-3 and offloading since it allows loading huge models.

@ -159,7 +159,7 @@ There are three types of configuration parameters:

You could also modify the DeepSpeed configuration and edit [`TrainingArguments`] from it:

1. Create or load a DeepSpeed configuration to used as the main configuration

1. Create or load a DeepSpeed configuration to use as the main configuration

2. Create a [`TrainingArguments`] object based on these DeepSpeed configuration values

Some values, such as `scheduler.params.total_num_steps` are calculated by the [`Trainer`] during training.

@ -191,7 +191,7 @@ ZeRO-1 shards the optimizer states across GPUs, and you can expect a tiny speed

</hfoption>

<hfoptionid="ZeRO-2">

ZeRO-2 shards the optimizer and gradients across GPUs. This stage is primarily used for training since it's features are not relevant to inference. Some important parameters to configure for better performance include:

ZeRO-2 shards the optimizer and gradients across GPUs. This stage is primarily used for training since its features are not relevant to inference. Some important parameters to configure for better performance include:

*`offload_optimizer` should be enabled to reduce GPU memory usage.

*`overlap_comm` when set to `true` trades off increased GPU memory usage to lower allreduce latency. This feature uses 4.5x the `allgather_bucket_size` and `reduce_bucket_size` values. In this example, they're set to `5e8` which means it requires 9GB of GPU memory. If your GPU memory is 8GB or less, you should reduce `overlap_comm` to lower the memory requirements and prevent an out-of-memory (OOM) error.

@ -226,7 +226,7 @@ ZeRO-3 shards the optimizer, gradient, and parameters across GPUs. Unlike ZeRO-2

*`pin_memory: true` can improve throughput, but less memory becomes available for other processes because the pinned memory is reserved for the specific process that requested it and it's typically accessed much faster than normal CPU memory.

*`stage3_max_live_parameters` is the upper limit on how many full parameters you want to keep on the GPU at any given time. Reduce this value if you encounter an OOM error.

*`stage3_max_reuse_distance` is a value for determining when a parameter is used again in the future, and it helps decide whether to throw the parameter away or to keep it. If the parameter is going to be reused (if the value is less than `stage3_max_reuse_distance`), then it is kept to reduce communication overhead. This is super helpful when activation checkpointing is enabled and you want to keep the parameter in the forward recompute until the backward pass. But reduce this value if you encounter an OOM error.

*`stage3_gather_16bit_weights_on_model_save` consolidates fp16 weights when a model is saved. For large models and multiple GPUs, this is an expensive in terms of memory and speed. You should enable it if you're planning on resuming training.

*`stage3_gather_16bit_weights_on_model_save` consolidates fp16 weights when a model is saved. For large models and multiple GPUs, this is expensive in terms of memory and speed. You should enable it if you're planning on resuming training.

*`sub_group_size` controls which parameters are updated during the optimizer step. Parameters are grouped into buckets of `sub_group_size` and each bucket is updated one at a time. When used with NVMe offload, `sub_group_size` determines when model states are moved in and out of CPU memory from during the optimization step. This prevents running out of CPU memory for extremely large models. `sub_group_size` can be left to its default value if you aren't using NVMe offload, but you may want to change it if you:

1. Run into an OOM error during the optimizer step. In this case, reduce `sub_group_size` to reduce memory usage of the temporary buffers.

Certain combinations of the `generate()` parameters, and ultimately `generation_config`, can be used to enable specific

@ -398,3 +484,59 @@ just like in multinomial sampling. However, in assisted decoding, reducing the t

Alternativelly, you can also set the `prompt_lookup_num_tokens` to trigger n-gram based assisted decoding, as opposed

to model based assisted decoding. You can read more about it [here](https://twitter.com/joao_gante/status/1747322413006643259).

### DoLa Decoding

**D**ecoding by C**o**ntrasting **La**yers (DoLa) is a contrastive decoding strategy to improve the factuality and reduce the

hallucinations of LLMs, as described in this paper of ICLR 2024 [DoLa: Decoding by Contrasting Layers Improves Factuality in Large Language Models](https://arxiv.org/abs/2309.03883).

DoLa is achieved by contrasting the differences in logits obtained from final

layers versus earlier layers, thus amplify the factual knowledge localized to particular part of transformer layers.

Do the following two steps to activate DoLa decoding when calling the `model.generate` function:

1. Set the `dola_layers` argument, which can be either a string or a list of integers.

- If set to a string, it can be one of `low`, `high`.

- If set to a list of integers, it should be a list of layer indices between 0 and the total number of layers in the model. The 0-th layer is word embedding, and the 1st layer is the first transformer layer, and so on.

2. Set `repetition_penalty = 1.2` is suggested to reduce repetition in DoLa decoding.

See the following examples for DoLa decoding with the 32-layer LLaMA-7B model.

['\nThe Declaration of Independence was signed on July 4, 1776.\nWhat was the date of the signing of the Declaration of Independence?\nThe Declaration of Independence was signed on July 4,']

# DoLa decoding with contrasting higher part of layers (layers 16,18,...,30)

['\nJuly 4, 1776, when the Continental Congress voted to separate from Great Britain. The 56 delegates to the Continental Congress signed the Declaration on August 2, 1776.']

# DoLa decoding with contrasting specific layers (layers 28 and 30)