mirror of

https://github.com/huggingface/transformers.git

synced 2025-11-04 03:44:37 +08:00

Compare commits

166 Commits

llama4-unh

...

why_no_tri

| Author | SHA1 | Date | |

|---|---|---|---|

| 95068983e8 | |||

| f974214353 | |||

| 438324c9cf | |||

| bb2a44ad4b | |||

| 4acf692ace | |||

| 40cba20e87 | |||

| 346f1eebbd | |||

| 48dd89cf55 | |||

| 58e5e976e0 | |||

| c7d3cc67a1 | |||

| dc06e7cecd | |||

| 3bc44eaaee | |||

| 4f96081aad | |||

| a2ef3cf537 | |||

| 688f4707bf | |||

| 0a83588c51 | |||

| 4005730044 | |||

| a7d2bbaaa8 | |||

| 32eca7197a | |||

| c94c59fc47 | |||

| 5a6de703a7 | |||

| 9a4ce64770 | |||

| dc8227827d | |||

| 2f517200c1 | |||

| 0577cae808 | |||

| b33edf1b9b | |||

| 503541d7ef | |||

| 9ddcf5fce5 | |||

| a91020aed0 | |||

| 8669c016d2 | |||

| e3d3b54638 | |||

| 61436a9323 | |||

| 7752e7487c | |||

| 7dafcd0077 | |||

| 6fd87d1172 | |||

| ed53809ac5 | |||

| d91858c232 | |||

| 4541c2cdef | |||

| a335dc4d6d | |||

| 33f6c5a5c8 | |||

| 5ab7a7c640 | |||

| 3165eb7c28 | |||

| 33c6fdb2cf | |||

| 4cc6b60654 | |||

| 51f544a4d4 | |||

| 4f1dbe8152 | |||

| c08997c52e | |||

| 57da364d8e | |||

| 356b3cd71d | |||

| 0ad3710d47 | |||

| f6c79f767c | |||

| ecaeee66bc | |||

| 6f7ea1cf00 | |||

| d6ac923ad9 | |||

| c8e0e603de | |||

| 4e63a1747c | |||

| 8ab296501a | |||

| 20ceaca228 | |||

| cb39f7dd5b | |||

| d228f50acc | |||

| a5dfb98977 | |||

| a53a63c9c2 | |||

| 4774a39d05 | |||

| e43f168eb3 | |||

| 1efcfa9ca4 | |||

| 86064035f0 | |||

| 7cc9e61a3a | |||

| 4e53840920 | |||

| 1897a02d83 | |||

| 7bff4bdcf6 | |||

| e16775d103 | |||

| 49b9a69a36 | |||

| a5079a2c84 | |||

| e7f5724efd | |||

| 4b8c6d4cf8 | |||

| ac1df5fccd | |||

| 1ef64710d2 | |||

| 47b9f06aa2 | |||

| 78cea3e22c | |||

| 953196a43d | |||

| aaf129cdae | |||

| 69e6ddf27f | |||

| 623d395aff | |||

| 435f88f1db | |||

| 954f31cd81 | |||

| 28eae8b4bd | |||

| bf46e44878 | |||

| 897874748b | |||

| 6a75528cbc | |||

| 6cef03ba66 | |||

| a563999a02 | |||

| 3c39c07939 | |||

| f797e3d98a | |||

| 442d356aa5 | |||

| 7e9b57ce62 | |||

| 54a123f068 | |||

| 931126b929 | |||

| c7064cdba1 | |||

| 371c44d0ef | |||

| 7ff896c0f2 | |||

| 10907e2846 | |||

| 7d76876498 | |||

| dac443414e | |||

| 6daec12d0b | |||

| 0ea1151222 | |||

| 9c0c323e12 | |||

| bde41d69b4 | |||

| 7ecc5b88c0 | |||

| 5ae9b2cac0 | |||

| d9e76656ae | |||

| 1ae8d54b04 | |||

| 10144ff116 | |||

| aa478567f8 | |||

| ae5ce22664 | |||

| 4f139f5a50 | |||

| a2c2fb0108 | |||

| 0ddad2d655 | |||

| fbb2054ed5 | |||

| 6d8b0b3378 | |||

| f5865d32a2 | |||

| e39c732644 | |||

| bc0150bb04 | |||

| 9cda4265d6 | |||

| e032d12e8a | |||

| f834ca2c19 | |||

| c5c648dd74 | |||

| 71b35387fd | |||

| ad340908e4 | |||

| 2527f71a47 | |||

| 7ae0be722e | |||

| e3eda6d188 | |||

| 1e6ff5fd55 | |||

| 6f4058aee3 | |||

| 08e3217baf | |||

| 4d0de5f73a | |||

| c15a7adb28 | |||

| 121f91d36c | |||

| 4321b0648c | |||

| aab0878327 | |||

| 35f0f5b5da | |||

| 530322ccb6 | |||

| 8064cd9b4f | |||

| cdfb018d03 | |||

| 1e6b546ea6 | |||

| 0fc683d1cd | |||

| 2515a5a290 | |||

| 2da82e432d | |||

| 794fde7b1c | |||

| b54c2f4689 | |||

| 754a370bca | |||

| 31a62c2eb8 | |||

| f830105183 | |||

| e2b0224d94 | |||

| 6cc109c354 | |||

| 8bbcdf5409 | |||

| 3a826a45ca | |||

| 5e855095a2 | |||

| 416b5a875d | |||

| f8a16805c5 | |||

| 48e179857c | |||

| 832cb684a0 | |||

| 22065bd645 | |||

| f789f960c8 | |||

| 12bf24d6ae | |||

| e7ad077012 | |||

| 99f9f1042f |

2

.github/ISSUE_TEMPLATE/i18n.md

vendored

@ -23,7 +23,7 @@ Some notes:

|

||||

* Please translate in a gender-neutral way.

|

||||

* Add your translations to the folder called `<languageCode>` inside the [source folder](https://github.com/huggingface/transformers/tree/main/docs/source).

|

||||

* Register your translation in `<languageCode>/_toctree.yml`; please follow the order of the [English version](https://github.com/huggingface/transformers/blob/main/docs/source/en/_toctree.yml).

|

||||

* Once you're finished, open a pull request and tag this issue by including #issue-number in the description, where issue-number is the number of this issue. Please ping @stevhliu and @MKhalusova for review.

|

||||

* Once you're finished, open a pull request and tag this issue by including #issue-number in the description, where issue-number is the number of this issue. Please ping @stevhliu for review.

|

||||

* 🙋 If you'd like others to help you with the translation, you can also post in the 🤗 [forums](https://discuss.huggingface.co/).

|

||||

|

||||

## Get Started section

|

||||

|

||||

18

.github/scripts/assign_reviewers.py

vendored

@ -54,6 +54,21 @@ def get_file_owners(file_path, codeowners_lines):

|

||||

return owners # Remember, can still be empty!

|

||||

return [] # Should never happen, but just in case

|

||||

|

||||

def pr_author_is_in_hf(pr_author, codeowners_lines):

|

||||

# Check if the PR author is in the codeowners file

|

||||

for line in codeowners_lines:

|

||||

line = line.split('#')[0].strip()

|

||||

if not line:

|

||||

continue

|

||||

|

||||

# Split into pattern and owners

|

||||

parts = line.split()

|

||||

owners = [owner.removeprefix("@") for owner in parts[1:]]

|

||||

|

||||

if pr_author in owners:

|

||||

return True

|

||||

return False
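The helper added in this hunk lost its indentation in the diff view. A minimal standalone sketch of the same logic follows; the sample CODEOWNERS lines and user names are hypothetical and only serve to exercise the function. It relies on `str.removeprefix`, which needs Python 3.9 or later.

```python
def pr_author_is_in_hf(pr_author, codeowners_lines):
    """Return True if pr_author is listed as an owner on any CODEOWNERS-style line."""
    for line in codeowners_lines:
        # Drop trailing comments and surrounding whitespace
        line = line.split("#")[0].strip()
        if not line:
            continue

        # First token is the path pattern, the remaining tokens are owners
        parts = line.split()
        owners = [owner.removeprefix("@") for owner in parts[1:]]

        if pr_author in owners:
            return True
    return False


# Hypothetical sample data, not taken from the repository
sample_lines = [
    "# Comment-only line",
    "",
    "/src/transformers/models/ @alice @bob  # model owners",
    "/docs/ @carol",
]

print(pr_author_is_in_hf("bob", sample_lines))
print(pr_author_is_in_hf("mallory", sample_lines))
```

Because comments are stripped before splitting, words that appear only after a `#` are never treated as owners.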

|

||||

|

||||

def main():

|

||||

script_dir = Path(__file__).parent.absolute()

|

||||

with open(script_dir / "codeowners_for_review_action") as f:

|

||||

@ -68,6 +83,9 @@ def main():

|

||||

pr_number = event['pull_request']['number']

|

||||

pr = repo.get_pull(pr_number)

|

||||

pr_author = pr.user.login

|

||||

if pr_author_is_in_hf(pr_author, codeowners_lines):

|

||||

print(f"PR author {pr_author} is in codeowners, skipping review request.")

|

||||

return

|

||||

|

||||

existing_reviews = list(pr.get_reviews())

|

||||

if existing_reviews:

|

||||

|

||||

36

.github/workflows/build-docker-images.yml

vendored

@ -63,14 +63,14 @@ jobs:

|

||||

uses: huggingface/hf-workflows/.github/actions/post-slack@main

|

||||

with:

|

||||

slack_channel: ${{ secrets.CI_SLACK_CHANNEL_DOCKER }}

|

||||

title: 🤗 Results of the transformers-all-latest-gpu-push-ci docker build

|

||||

title: 🤗 Results of the transformers-all-latest-gpu-push-ci docker build

|

||||

status: ${{ job.status }}

|

||||

slack_token: ${{ secrets.SLACK_CIFEEDBACK_BOT_TOKEN }}

|

||||

|

||||

latest-torch-deepspeed-docker:

|

||||

name: "Latest PyTorch + DeepSpeed"

|

||||

runs-on:

|

||||

group: aws-general-8-plus

|

||||

group: aws-g4dn-2xlarge-cache

|

||||

steps:

|

||||

-

|

||||

name: Set up Docker Buildx

|

||||

@ -99,7 +99,7 @@ jobs:

|

||||

uses: huggingface/hf-workflows/.github/actions/post-slack@main

|

||||

with:

|

||||

slack_channel: ${{ secrets.CI_SLACK_CHANNEL_DOCKER}}

|

||||

title: 🤗 Results of the transformers-pytorch-deepspeed-latest-gpu docker build

|

||||

title: 🤗 Results of the transformers-pytorch-deepspeed-latest-gpu docker build

|

||||

status: ${{ job.status }}

|

||||

slack_token: ${{ secrets.SLACK_CIFEEDBACK_BOT_TOKEN }}

|

||||

|

||||

@ -140,7 +140,7 @@ jobs:

|

||||

uses: huggingface/hf-workflows/.github/actions/post-slack@main

|

||||

with:

|

||||

slack_channel: ${{ secrets.CI_SLACK_CHANNEL_DOCKER }}

|

||||

title: 🤗 Results of the transformers-pytorch-deepspeed-latest-gpu-push-ci docker build

|

||||

title: 🤗 Results of the transformers-pytorch-deepspeed-latest-gpu-push-ci docker build

|

||||

status: ${{ job.status }}

|

||||

slack_token: ${{ secrets.SLACK_CIFEEDBACK_BOT_TOKEN }}

|

||||

|

||||

@ -176,7 +176,7 @@ jobs:

|

||||

uses: huggingface/hf-workflows/.github/actions/post-slack@main

|

||||

with:

|

||||

slack_channel: ${{ secrets.CI_SLACK_CHANNEL_DOCKER }}

|

||||

title: 🤗 Results of the huggingface/transformers-doc-builder docker build

|

||||

title: 🤗 Results of the huggingface/transformers-doc-builder docker build

|

||||

status: ${{ job.status }}

|

||||

slack_token: ${{ secrets.SLACK_CIFEEDBACK_BOT_TOKEN }}

|

||||

|

||||

@ -214,7 +214,7 @@ jobs:

|

||||

uses: huggingface/hf-workflows/.github/actions/post-slack@main

|

||||

with:

|

||||

slack_channel: ${{ secrets.CI_SLACK_CHANNEL_DOCKER }}

|

||||

title: 🤗 Results of the huggingface/transformers-pytorch-gpudocker build

|

||||

title: 🤗 Results of the huggingface/transformers-pytorch-gpudocker build

|

||||

status: ${{ job.status }}

|

||||

slack_token: ${{ secrets.SLACK_CIFEEDBACK_BOT_TOKEN }}

|

||||

|

||||

@ -223,19 +223,19 @@ jobs:

|

||||

runs-on:

|

||||

group: aws-general-8-plus

|

||||

steps:

|

||||

-

|

||||

-

|

||||

name: Set up Docker Buildx

|

||||

uses: docker/setup-buildx-action@v3

|

||||

-

|

||||

-

|

||||

name: Check out code

|

||||

uses: actions/checkout@v4

|

||||

-

|

||||

-

|

||||

name: Login to DockerHub

|

||||

uses: docker/login-action@v3

|

||||

with:

|

||||

username: ${{ secrets.DOCKERHUB_USERNAME }}

|

||||

password: ${{ secrets.DOCKERHUB_PASSWORD }}

|

||||

-

|

||||

-

|

||||

name: Build and push

|

||||

uses: docker/build-push-action@v5

|

||||

with:

|

||||

@ -263,7 +263,7 @@ jobs:

|

||||

uses: huggingface/hf-workflows/.github/actions/post-slack@main

|

||||

with:

|

||||

slack_channel: ${{ secrets.CI_SLACK_CHANNEL_DOCKER }}

|

||||

title: 🤗 Results of the huggingface/transformers-pytorch-amd-gpu-push-ci build

|

||||

title: 🤗 Results of the huggingface/transformers-pytorch-amd-gpu-push-ci build

|

||||

status: ${{ job.status }}

|

||||

slack_token: ${{ secrets.SLACK_CIFEEDBACK_BOT_TOKEN }}

|

||||

|

||||

@ -301,7 +301,7 @@ jobs:

|

||||

uses: huggingface/hf-workflows/.github/actions/post-slack@main

|

||||

with:

|

||||

slack_channel: ${{ secrets.CI_SLACK_CHANNEL_DOCKER }}

|

||||

title: 🤗 Results of the huggingface/transformers-tensorflow-gpu build

|

||||

title: 🤗 Results of the huggingface/transformers-tensorflow-gpu build

|

||||

status: ${{ job.status }}

|

||||

slack_token: ${{ secrets.SLACK_CIFEEDBACK_BOT_TOKEN }}

|

||||

|

||||

@ -310,19 +310,19 @@ jobs:

|

||||

runs-on:

|

||||

group: aws-general-8-plus

|

||||

steps:

|

||||

-

|

||||

-

|

||||

name: Set up Docker Buildx

|

||||

uses: docker/setup-buildx-action@v3

|

||||

-

|

||||

-

|

||||

name: Check out code

|

||||

uses: actions/checkout@v4

|

||||

-

|

||||

-

|

||||

name: Login to DockerHub

|

||||

uses: docker/login-action@v3

|

||||

with:

|

||||

username: ${{ secrets.DOCKERHUB_USERNAME }}

|

||||

password: ${{ secrets.DOCKERHUB_PASSWORD }}

|

||||

-

|

||||

-

|

||||

name: Build and push

|

||||

uses: docker/build-push-action@v5

|

||||

with:

|

||||

@ -350,7 +350,7 @@ jobs:

|

||||

uses: huggingface/hf-workflows/.github/actions/post-slack@main

|

||||

with:

|

||||

slack_channel: ${{ secrets.CI_SLACK_CHANNEL_DOCKER }}

|

||||

title: 🤗 Results of the transformers-pytorch-deepspeed-amd-gpu build

|

||||

title: 🤗 Results of the transformers-pytorch-deepspeed-amd-gpu build

|

||||

status: ${{ job.status }}

|

||||

slack_token: ${{ secrets.SLACK_CIFEEDBACK_BOT_TOKEN }}

|

||||

|

||||

@ -388,6 +388,6 @@ jobs:

|

||||

uses: huggingface/hf-workflows/.github/actions/post-slack@main

|

||||

with:

|

||||

slack_channel: ${{ secrets.CI_SLACK_CHANNEL_DOCKER }}

|

||||

title: 🤗 Results of the transformers-quantization-latest-gpu build

|

||||

title: 🤗 Results of the transformers-quantization-latest-gpu build

|

||||

status: ${{ job.status }}

|

||||

slack_token: ${{ secrets.SLACK_CIFEEDBACK_BOT_TOKEN }}

|

||||

|

||||

@ -42,7 +42,7 @@ jobs:

|

||||

nightly-torch-deepspeed-docker:

|

||||

name: "Nightly PyTorch + DeepSpeed"

|

||||

runs-on:

|

||||

group: aws-general-8-plus

|

||||

group: aws-g4dn-2xlarge-cache

|

||||

steps:

|

||||

-

|

||||

name: Set up Docker Buildx

|

||||

|

||||

20

.github/workflows/model_jobs.yml

vendored

@ -18,6 +18,10 @@ on:

|

||||

docker:

|

||||

required: true

|

||||

type: string

|

||||

report_name_prefix:

|

||||

required: false

|

||||

default: run_models_gpu

|

||||

type: string

|

||||

|

||||

env:

|

||||

HF_HOME: /mnt/cache

|

||||

@ -116,23 +120,23 @@ jobs:

|

||||

|

||||

- name: Run all tests on GPU

|

||||

working-directory: /transformers

|

||||

run: python3 -m pytest -rsfE -v --make-reports=${{ env.machine_type }}_run_models_gpu_${{ matrix.folders }}_test_reports tests/${{ matrix.folders }}

|

||||

run: python3 -m pytest -rsfE -v --make-reports=${{ env.machine_type }}_${{ inputs.report_name_prefix }}_${{ matrix.folders }}_test_reports tests/${{ matrix.folders }}

|

||||

|

||||

- name: Failure short reports

|

||||

if: ${{ failure() }}

|

||||

continue-on-error: true

|

||||

run: cat /transformers/reports/${{ env.machine_type }}_run_models_gpu_${{ matrix.folders }}_test_reports/failures_short.txt

|

||||

run: cat /transformers/reports/${{ env.machine_type }}_${{ inputs.report_name_prefix }}_${{ matrix.folders }}_test_reports/failures_short.txt

|

||||

|

||||

- name: Run test

|

||||

shell: bash

|

||||

run: |

|

||||

mkdir -p /transformers/reports/${{ env.machine_type }}_run_models_gpu_${{ matrix.folders }}_test_reports

|

||||

echo "hello" > /transformers/reports/${{ env.machine_type }}_run_models_gpu_${{ matrix.folders }}_test_reports/hello.txt

|

||||

echo "${{ env.machine_type }}_run_models_gpu_${{ matrix.folders }}_test_reports"

|

||||

mkdir -p /transformers/reports/${{ env.machine_type }}_${{ inputs.report_name_prefix }}_${{ matrix.folders }}_test_reports

|

||||

echo "hello" > /transformers/reports/${{ env.machine_type }}_${{ inputs.report_name_prefix }}_${{ matrix.folders }}_test_reports/hello.txt

|

||||

echo "${{ env.machine_type }}_${{ inputs.report_name_prefix }}_${{ matrix.folders }}_test_reports"

|

||||

|

||||

- name: "Test suite reports artifacts: ${{ env.machine_type }}_run_models_gpu_${{ env.matrix_folders }}_test_reports"

|

||||

- name: "Test suite reports artifacts: ${{ env.machine_type }}_${{ inputs.report_name_prefix }}_${{ env.matrix_folders }}_test_reports"

|

||||

if: ${{ always() }}

|

||||

uses: actions/upload-artifact@v4

|

||||

with:

|

||||

name: ${{ env.machine_type }}_run_models_gpu_${{ env.matrix_folders }}_test_reports

|

||||

path: /transformers/reports/${{ env.machine_type }}_run_models_gpu_${{ matrix.folders }}_test_reports

|

||||

name: ${{ env.machine_type }}_${{ inputs.report_name_prefix }}_${{ env.matrix_folders }}_test_reports

|

||||

path: /transformers/reports/${{ env.machine_type }}_${{ inputs.report_name_prefix }}_${{ matrix.folders }}_test_reports

|

||||

|

||||

2

.github/workflows/self-comment-ci.yml

vendored

@ -29,7 +29,7 @@ jobs:

|

||||

runs-on: ubuntu-22.04

|

||||

name: Get PR number

|

||||

# For security: only allow team members to run

|

||||

if: ${{ github.event.issue.state == 'open' && contains(fromJSON('["ydshieh", "ArthurZucker", "zucchini-nlp", "qubvel", "molbap", "gante", "LysandreJik", "Cyrilvallez", "Rocketknight1", "SunMarc", "muellerzr", "eustlb"]'), github.actor) && (startsWith(github.event.comment.body, 'run-slow') || startsWith(github.event.comment.body, 'run slow') || startsWith(github.event.comment.body, 'run_slow')) }}

|

||||

if: ${{ github.event.issue.state == 'open' && contains(fromJSON('["ydshieh", "ArthurZucker", "zucchini-nlp", "qubvel", "molbap", "gante", "LysandreJik", "Cyrilvallez", "Rocketknight1", "SunMarc", "muellerzr", "eustlb", "MekkCyber"]'), github.actor) && (startsWith(github.event.comment.body, 'run-slow') || startsWith(github.event.comment.body, 'run slow') || startsWith(github.event.comment.body, 'run_slow')) }}

|

||||

outputs:

|

||||

PR_NUMBER: ${{ steps.set_pr_number.outputs.PR_NUMBER }}

|

||||

steps:

|

||||

|

||||

13

.github/workflows/self-scheduled-caller.yml

vendored

@ -54,12 +54,23 @@ jobs:

|

||||

ci_event: Daily CI

|

||||

secrets: inherit

|

||||

|

||||

trainer-fsdp-ci:

|

||||

name: Trainer/FSDP CI

|

||||

uses: ./.github/workflows/self-scheduled.yml

|

||||

with:

|

||||

job: run_trainer_and_fsdp_gpu

|

||||

slack_report_channel: "#transformers-ci-daily-training"

|

||||

runner: daily-ci

|

||||

docker: huggingface/transformers-all-latest-gpu

|

||||

ci_event: Daily CI

|

||||

secrets: inherit

|

||||

|

||||

deepspeed-ci:

|

||||

name: DeepSpeed CI

|

||||

uses: ./.github/workflows/self-scheduled.yml

|

||||

with:

|

||||

job: run_torch_cuda_extensions_gpu

|

||||

slack_report_channel: "#transformers-ci-daily-deepspeed"

|

||||

slack_report_channel: "#transformers-ci-daily-training"

|

||||

runner: daily-ci

|

||||

docker: huggingface/transformers-pytorch-deepspeed-latest-gpu

|

||||

ci_event: Daily CI

|

||||

|

||||

35

.github/workflows/self-scheduled.yml

vendored

@ -45,7 +45,7 @@ env:

|

||||

|

||||

jobs:

|

||||

setup:

|

||||

if: contains(fromJSON('["run_models_gpu", "run_quantization_torch_gpu"]'), inputs.job)

|

||||

if: contains(fromJSON('["run_models_gpu", "run_trainer_and_fsdp_gpu", "run_quantization_torch_gpu"]'), inputs.job)

|

||||

name: Setup

|

||||

strategy:

|

||||

matrix:

|

||||

@ -77,12 +77,17 @@ jobs:

|

||||

run: pip freeze

|

||||

|

||||

- id: set-matrix

|

||||

if: ${{ inputs.job == 'run_models_gpu' }}

|

||||

if: contains(fromJSON('["run_models_gpu", "run_trainer_and_fsdp_gpu"]'), inputs.job)

|

||||

name: Identify models to test

|

||||

working-directory: /transformers/tests

|

||||

run: |

|

||||

echo "folder_slices=$(python3 ../utils/split_model_tests.py --num_splits ${{ env.NUM_SLICES }})" >> $GITHUB_OUTPUT

|

||||

echo "slice_ids=$(python3 -c 'd = list(range(${{ env.NUM_SLICES }})); print(d)')" >> $GITHUB_OUTPUT

|

||||

if [ "${{ inputs.job }}" = "run_models_gpu" ]; then

|

||||

echo "folder_slices=$(python3 ../utils/split_model_tests.py --num_splits ${{ env.NUM_SLICES }})" >> $GITHUB_OUTPUT

|

||||

echo "slice_ids=$(python3 -c 'd = list(range(${{ env.NUM_SLICES }})); print(d)')" >> $GITHUB_OUTPUT

|

||||

elif [ "${{ inputs.job }}" = "run_trainer_and_fsdp_gpu" ]; then

|

||||

echo "folder_slices=[['trainer'], ['fsdp']]" >> $GITHUB_OUTPUT

|

||||

echo "slice_ids=[0, 1]" >> $GITHUB_OUTPUT

|

||||

fi
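The branch above picks the test matrix from the job name. A rough Python sketch of that selection is shown here for illustration only: `choose_matrix` is a hypothetical name, the round-robin split is a stand-in for `utils/split_model_tests.py` (whose actual splitting logic is not shown in this diff), and the empty fallback for other jobs is an assumption, since the workflow step simply does not run for them.

```python
def choose_matrix(job, num_slices, model_folders):
    """Return (folder_slices, slice_ids) for a job name, mirroring the shell branch."""
    if job == "run_models_gpu":
        # Stand-in for utils/split_model_tests.py: deal folders round-robin
        folder_slices = [model_folders[i::num_slices] for i in range(num_slices)]
        slice_ids = list(range(num_slices))
    elif job == "run_trainer_and_fsdp_gpu":
        # Fixed two-slice matrix, as written in the workflow
        folder_slices = [["trainer"], ["fsdp"]]
        slice_ids = [0, 1]
    else:
        # Assumption: other jobs get no matrix from this step
        folder_slices, slice_ids = [], []
    return folder_slices, slice_ids


print(choose_matrix("run_trainer_and_fsdp_gpu", 2, []))
print(choose_matrix("run_models_gpu", 2, ["bert", "gpt2", "t5"]))
```

The fixed `slice_ids` of `[0, 1]` line up with the `slice_id: [0, 1]` matrix declared for the `run_trainer_and_fsdp_gpu` job later in the same workflow.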

|

||||

|

||||

- id: set-matrix-quantization

|

||||

if: ${{ inputs.job == 'run_quantization_torch_gpu' }}

|

||||

@ -113,6 +118,25 @@ jobs:

|

||||

docker: ${{ inputs.docker }}

|

||||

secrets: inherit

|

||||

|

||||

run_trainer_and_fsdp_gpu:

|

||||

if: ${{ inputs.job == 'run_trainer_and_fsdp_gpu' }}

|

||||

name: " "

|

||||

needs: setup

|

||||

strategy:

|

||||

fail-fast: false

|

||||

matrix:

|

||||

machine_type: [aws-g4dn-2xlarge-cache, aws-g4dn-12xlarge-cache]

|

||||

slice_id: [0, 1]

|

||||

uses: ./.github/workflows/model_jobs.yml

|

||||

with:

|

||||

folder_slices: ${{ needs.setup.outputs.folder_slices }}

|

||||

machine_type: ${{ matrix.machine_type }}

|

||||

slice_id: ${{ matrix.slice_id }}

|

||||

runner: ${{ inputs.runner }}

|

||||

docker: ${{ inputs.docker }}

|

||||

report_name_prefix: run_trainer_and_fsdp_gpu

|

||||

secrets: inherit

|

||||

|

||||

run_pipelines_torch_gpu:

|

||||

if: ${{ inputs.job == 'run_pipelines_torch_gpu' }}

|

||||

name: PyTorch pipelines

|

||||

@ -382,7 +406,7 @@ jobs:

|

||||

run: pip freeze

|

||||

|

||||

- name: Set `machine_type` for report and artifact names

|

||||

working-directory: /transformers

|

||||

working-directory: ${{ inputs.working-directory-prefix }}/transformers

|

||||

shell: bash

|

||||

run: |

|

||||

echo "${{ matrix.machine_type }}"

|

||||

@ -541,6 +565,7 @@ jobs:

|

||||

needs: [

|

||||

setup,

|

||||

run_models_gpu,

|

||||

run_trainer_and_fsdp_gpu,

|

||||

run_pipelines_torch_gpu,

|

||||

run_pipelines_tf_gpu,

|

||||

run_examples_gpu,

|

||||

|

||||

@ -26,7 +26,7 @@ There are two main venues to receive support: [the forums](https://discuss.huggi

|

||||

|

||||

[The user forums](https://discuss.huggingface.co/) are supported by the wide community of the library users and backed up by developers when needed.

|

||||

|

||||

If you have a difficulty with deploying this library or some questions, or you'd like to discuss a new feature, please first consider discussing those things at the forums. Only when you feel your subject matter has been crystalized and you still need support from the library developers do proceed to file an [issue](https://github.com/huggingface/transformers/issues).

|

||||

If you have a difficulty with deploying this library or some questions, or you'd like to discuss a new feature, please first consider discussing those things at the forums. Only when you feel your subject matter has been crystallized and you still need support from the library developers do proceed to file an [issue](https://github.com/huggingface/transformers/issues).

|

||||

|

||||

In particular, all "Please explain" questions or objectively very user-specific feature requests belong to the forums. Here are some examples of such questions:

|

||||

|

||||

|

||||

@ -70,7 +70,7 @@ Explore the [Hub](https://huggingface.com/) today to find a model and use Transf

|

||||

|

||||

## Installation

|

||||

|

||||

Transformers works with Python 3.9+ [PyTorch](https://pytorch.org/get-started/locally/) 2.0+, [TensorFlow](https://www.tensorflow.org/install/pip) 2.6+, and [Flax](https://flax.readthedocs.io/en/latest/) 0.4.1+.

|

||||

Transformers works with Python 3.9+ [PyTorch](https://pytorch.org/get-started/locally/) 2.1+, [TensorFlow](https://www.tensorflow.org/install/pip) 2.6+, and [Flax](https://flax.readthedocs.io/en/latest/) 0.4.1+.

|

||||

|

||||

Create and activate a virtual environment with [venv](https://docs.python.org/3/library/venv.html) or [uv](https://docs.astral.sh/uv/), a fast Rust-based Python package and project manager.

|

||||

|

||||

|

||||

@ -27,13 +27,6 @@ These models require the `trust_remote_code=True` parameter to be set when using

|

||||

the content of the modeling files when using this argument. We recommend setting a revision in order to ensure you

|

||||

protect yourself from updates on the repository.

|

||||

|

||||

#### Tools

|

||||

|

||||

Through the `Agent` framework, remote tools can be downloaded to be used by the Agent. You're to specify these tools

|

||||

yourself, but please keep in mind that their code will be run on your machine if the Agent chooses to run them.

|

||||

|

||||

Please inspect the code of the tools before passing them to the Agent to protect your runtime and local setup.

|

||||

|

||||

## Reporting a Vulnerability

|

||||

|

||||

Feel free to submit vulnerability reports to [security@huggingface.co](mailto:security@huggingface.co), where someone from the HF security team will review and recommend next steps. If reporting a vulnerability specific to open source, please note [Huntr](https://huntr.com) is a vulnerability disclosure program for open source software.

|

||||

|

||||

@ -66,7 +66,6 @@ NOT_DEVICE_TESTS = {

|

||||

"ModelTester::test_pipeline_",

|

||||

"/repo_utils/",

|

||||

"/utils/",

|

||||

"/agents/",

|

||||

}

|

||||

|

||||

# allow having multiple repository checkouts and not needing to remember to rerun

|

||||

@ -83,7 +82,6 @@ def pytest_configure(config):

|

||||

config.addinivalue_line("markers", "is_pipeline_test: mark test to run only when pipelines are tested")

|

||||

config.addinivalue_line("markers", "is_staging_test: mark test to run only in the staging environment")

|

||||

config.addinivalue_line("markers", "accelerate_tests: mark test that require accelerate")

|

||||

config.addinivalue_line("markers", "agent_tests: mark the agent tests that are run on their specific schedule")

|

||||

config.addinivalue_line("markers", "not_device_test: mark the tests always running on cpu")

|

||||

|

||||

|

||||

|

||||

@ -14,6 +14,8 @@ ARG PYTORCH='2.6.0'

|

||||

ARG INTEL_TORCH_EXT='2.3.0'

|

||||

# Example: `cu102`, `cu113`, etc.

|

||||

ARG CUDA='cu121'

|

||||

# Disable kernel mapping for now until all tests pass

|

||||

ENV DISABLE_KERNEL_MAPPING=1

|

||||

|

||||

RUN apt update

|

||||

RUN apt install -y git libsndfile1-dev tesseract-ocr espeak-ng python3 python3-pip ffmpeg git-lfs

|

||||

|

||||

@ -1,12 +1,12 @@

|

||||

# https://docs.nvidia.com/deeplearning/frameworks/pytorch-release-notes/rel-23-11.html#rel-23-11

|

||||

FROM nvcr.io/nvidia/pytorch:23.11-py3

|

||||

# https://docs.nvidia.com/deeplearning/frameworks/pytorch-release-notes/rel-24-08.html

|

||||

FROM nvcr.io/nvidia/pytorch:24.08-py3

|

||||

LABEL maintainer="Hugging Face"

|

||||

|

||||

ARG DEBIAN_FRONTEND=noninteractive

|

||||

|

||||

ARG PYTORCH='2.2.0'

|

||||

ARG PYTORCH='2.6.0'

|

||||

# Example: `cu102`, `cu113`, etc.

|

||||

ARG CUDA='cu121'

|

||||

ARG CUDA='cu126'

|

||||

|

||||

RUN apt -y update

|

||||

RUN apt install -y libaio-dev

|

||||

@ -15,7 +15,8 @@ RUN python3 -m pip install --no-cache-dir --upgrade pip

|

||||

ARG REF=main

|

||||

RUN git clone https://github.com/huggingface/transformers && cd transformers && git checkout $REF

|

||||

|

||||

RUN python3 -m pip install --no-cache-dir ./transformers[deepspeed-testing]

|

||||

# `datasets` requires pandas, pandas has some modules compiled with numpy=1.x causing errors

|

||||

RUN python3 -m pip install --no-cache-dir './transformers[deepspeed-testing]' 'pandas<2' 'numpy<2'

|

||||

|

||||

# Install latest release PyTorch

|

||||

# (PyTorch must be installed before pre-compiling any DeepSpeed c++/cuda ops.)

|

||||

|

||||

@ -1,11 +1,11 @@

|

||||

# https://docs.nvidia.com/deeplearning/frameworks/pytorch-release-notes/rel-23-11.html#rel-23-11

|

||||

FROM nvcr.io/nvidia/pytorch:23.11-py3

|

||||

FROM nvcr.io/nvidia/pytorch:24.08-py3

|

||||

LABEL maintainer="Hugging Face"

|

||||

|

||||

ARG DEBIAN_FRONTEND=noninteractive

|

||||

|

||||

# Example: `cu102`, `cu113`, etc.

|

||||

ARG CUDA='cu121'

|

||||

ARG CUDA='cu126'

|

||||

|

||||

RUN apt -y update

|

||||

RUN apt install -y libaio-dev

|

||||

@ -21,7 +21,8 @@ RUN python3 -m pip uninstall -y torch torchvision torchaudio

|

||||

# (https://www.deepspeed.ai/tutorials/advanced-install/#pre-install-deepspeed-ops)

|

||||

RUN python3 -m pip install --no-cache-dir -U --pre torch torchvision torchaudio --extra-index-url https://download.pytorch.org/whl/nightly/$CUDA

|

||||

|

||||

RUN python3 -m pip install --no-cache-dir ./transformers[deepspeed-testing]

|

||||

# `datasets` requires pandas, pandas has some modules compiled with numpy=1.x causing errors

|

||||

RUN python3 -m pip install --no-cache-dir './transformers[deepspeed-testing]' 'pandas<2' 'numpy<2'

|

||||

|

||||

RUN python3 -m pip install --no-cache-dir git+https://github.com/huggingface/accelerate@main#egg=accelerate

|

||||

|

||||

|

||||

@ -12,6 +12,8 @@ SHELL ["sh", "-lc"]

|

||||

ARG PYTORCH='2.6.0'

|

||||

# Example: `cu102`, `cu113`, etc.

|

||||

ARG CUDA='cu121'

|

||||

# Disable kernel mapping for quantization tests

|

||||

ENV DISABLE_KERNEL_MAPPING=1

|

||||

|

||||

RUN apt update

|

||||

RUN apt install -y git libsndfile1-dev tesseract-ocr espeak-ng python3 python3-pip ffmpeg

|

||||

|

||||

@ -23,8 +23,6 @@

|

||||

  title: Load and train custom models with 🤗 PEFT
- local: model_sharing
  title: Share your model
- local: agents
  title: Agents
- local: llm_tutorial
  title: Generation with LLMs
- local: conversations

|

||||

@ -252,8 +250,6 @@

|

||||

  title: Conceptual frameworks
# - sections:
# - sections:
# - local: main_classes/agent
#   title: Agents and tools
# - local: model_doc/auto
#   title: Dynamically generated classes
# - local: main_classes/backbones

|

||||

|

||||

@ -1,539 +0,0 @@

|

||||

# الوكلاء والأدوات

|

||||

|

||||

[[open-in-colab]]

|

||||

|

||||

### ما هو الوكيل؟

|

||||

|

||||

يمكن للنظم اللغوية الكبيرة (LLMs) التي تم تدريبها على أداء [نمذجة اللغة السببية](./tasks/language_modeling.) التعامل مع مجموعة واسعة من المهام، ولكنها غالبًا ما تواجه صعوبات في المهام الأساسية مثل المنطق والحساب والبحث. وعندما يتم استدعاؤها في مجالات لا تؤدي فيها أداءً جيدًا، فإنها غالبًا ما تفشل في توليد الإجابة التي نتوقعها منها.

|

||||

|

||||

يتمثل أحد النهج للتغلب على هذا القصور في إنشاء "وكيل".

|

||||

|

||||

الوكيل هو نظام يستخدم LLM كمحرك له، ولديه حق الوصول إلى وظائف تسمى "أدوات".

|

||||

|

||||

هذه "الأدوات" هي وظائف لأداء مهمة، وتحتوي على جميع الأوصاف اللازمة للوكيل لاستخدامها بشكل صحيح.

|

||||

|

||||

يمكن برمجة الوكيل للقيام بما يلي:

|

||||

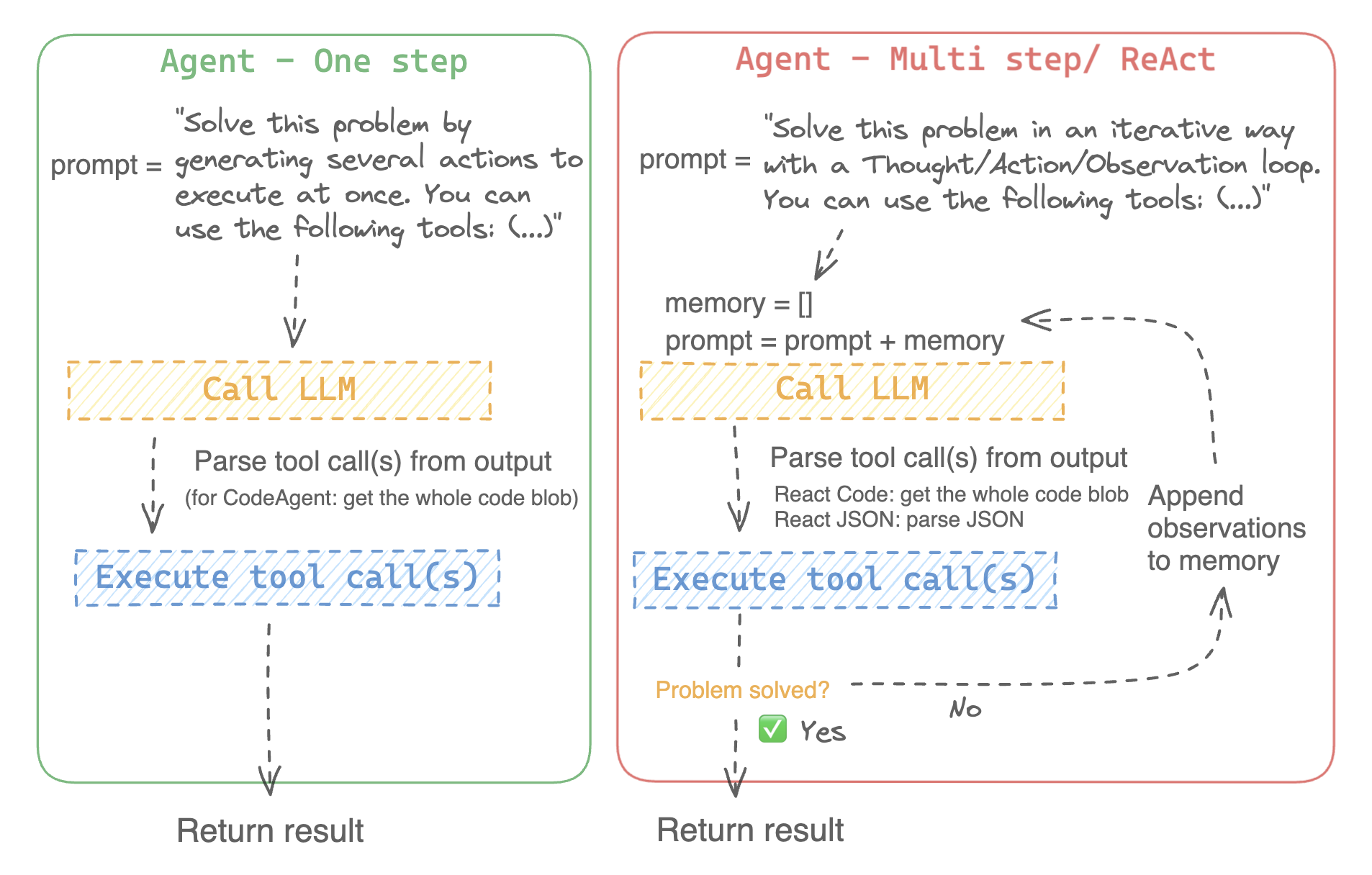

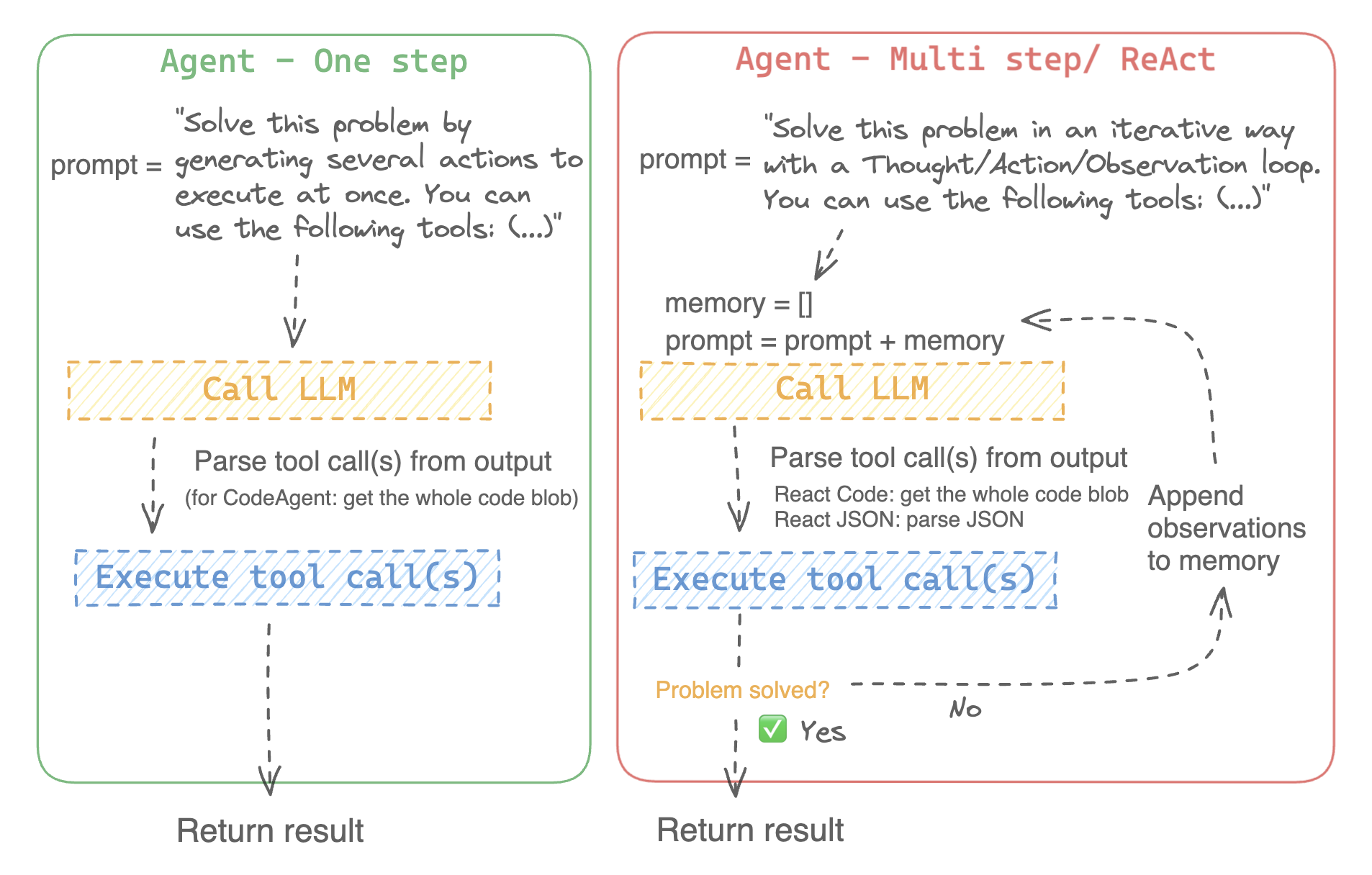

- وضع سلسلة من الإجراءات/الأدوات وتشغيلها جميعًا في نفس الوقت مثل [`CodeAgent`] على سبيل المثال

|

||||

- التخطيط للاجراءات/الأدوات وتنفيذها واحدة تلو الأخرى والانتظار حتى انتهاء كل إجراء قبل إطلاق التالي مثل [`ReactJsonAgent`] على سبيل المثال

|

||||

|

||||

### Types of agents

#### Code agent

This agent follows well-defined steps: first, it plans a series of actions it wants to execute, then it generates Python code to execute all the actions at once. It natively handles different input and output types for the tools it uses, so it is the recommended choice for multimodal tasks.

#### React agents

This is the go-to agent for solving reasoning tasks, since the ReAct framework ([Yao et al., 2022](https://huggingface.co/papers/2210.03629)) makes it really efficient to reason on the basis of its previous observations.

We implement two versions of ReactJsonAgent:

- [`ReactJsonAgent`] generates tool calls as JSON in its output.
- [`ReactCodeAgent`] is a new type of ReactJsonAgent that generates its tool calls as snippets of code, which works really well with LLMs that have strong coding performance.

> [!TIP]
> Read the [Open-source LLMs as LangChain Agents](https://huggingface.co/blog/open-source-llms-as-agents) blog post to learn more about the ReAct agent.

For example, here is how a ReAct Code agent works its way through the following question.

|

||||

|

||||

```py3

|

||||

>>> agent.run(

|

||||

... "How many more blocks (also denoted as layers) in BERT base encoder than the encoder from the architecture proposed in Attention is All You Need?",

|

||||

... )

|

||||

=====New task=====

|

||||

How many more blocks (also denoted as layers) in BERT base encoder than the encoder from the architecture proposed in Attention is All You Need?

|

||||

====Agent is executing the code below:

|

||||

bert_blocks = search(query="number of blocks in BERT base encoder")

|

||||

print("BERT blocks:", bert_blocks)

|

||||

====

|

||||

Print outputs:

|

||||

BERT blocks: twelve encoder blocks

|

||||

|

||||

====Agent is executing the code below:

|

||||

attention_layer = search(query="number of layers in Attention is All You Need")

|

||||

print("Attention layers:", attention_layer)

|

||||

====

|

||||

Print outputs:

|

||||

Attention layers: Encoder: The encoder is composed of a stack of N = 6 identical layers. Each layer has two sub-layers. The first is a multi-head self-attention mechanism, and the second is a simple, position- 2 Page 3 Figure 1: The Transformer - model architecture.

|

||||

|

||||

====Agent is executing the code below:

|

||||

bert_blocks = 12

|

||||

attention_layers = 6

|

||||

diff = bert_blocks - attention_layers

|

||||

print("Difference in blocks:", diff)

|

||||

final_answer(diff)

|

||||

====

|

||||

|

||||

Print outputs:

|

||||

Difference in blocks: 6

|

||||

|

||||

Final answer: 6

|

||||

```

### How can I build an agent?

To initialize an agent, you need these arguments:

- A large language model (LLM) that powers the agent. The agent is not the LLM itself; it is a program that uses an LLM as its engine.
- A system prompt: the instructions given to the LLM to generate its output.
- A toolbox from which the agent picks the tools to execute.
- A parser that extracts from the LLM output which tools to call and how to use them.

When the agent system is initialized, the tool attributes are used to generate a tool description, which is then baked into the agent's `system_prompt` to let it know which tools it can use and why.

To start, please install the `agents` extras to get all default dependencies.

```bash
pip install transformers[agents]
```

Build your LLM engine by defining an `llm_engine` method that accepts a list of [messages](./chat_templating) and returns text. This callable also needs to accept a `stop` argument (named `stop_sequences` in the example) that indicates when to stop generating.

```python
from huggingface_hub import login, InferenceClient

login("<YOUR_HUGGINGFACEHUB_API_TOKEN>")

client = InferenceClient(model="meta-llama/Meta-Llama-3-70B-Instruct")

def llm_engine(messages, stop_sequences=["Task"]) -> str:
    # Ask the hosted model for a completion and stop at any of the given sequences
    response = client.chat_completion(messages, stop=stop_sequences, max_tokens=1000)
    answer = response.choices[0].message.content
    return answer
```

You can use any `llm_engine` method as long as:
1. it follows the [messages](./chat_templating.md) format for its input (`List[Dict[str, str]]`) and returns a `str`.
2. it stops generating output at the sequences passed in the `stop` argument.
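
The two requirements above can be illustrated with a small stand-in engine. This is only a sketch: `truncate_at_stop` and `echo_llm_engine` are hypothetical names that are not part of Transformers, and the "model" simply echoes the last message, so the only point is the input/output contract and the stop-sequence handling.

```python
from typing import Dict, List, Optional


def truncate_at_stop(text: str, stop_sequences: List[str]) -> str:
    """Cut `text` at the earliest occurrence of any stop sequence."""
    cut = len(text)
    for stop in stop_sequences:
        if not stop:
            continue  # ignore empty stop strings, which would match at index 0
        index = text.find(stop)
        if index != -1:
            cut = min(cut, index)
    return text[:cut]


def echo_llm_engine(
    messages: List[Dict[str, str]], stop_sequences: Optional[List[str]] = None
) -> str:
    """Hypothetical engine: echoes the last message instead of calling a model."""
    raw_output = "Thought: " + messages[-1]["content"]
    return truncate_at_stop(raw_output, stop_sequences or [])


reply = echo_llm_engine(
    [{"role": "user", "content": "Find the answer. Observation: done"}],
    stop_sequences=["Observation:"],
)
print(repr(reply))
```

A real engine would replace the echo with a call to a model, but it would keep the same signature: a list of role/content dictionaries in, a plain string out.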

You also need a `tools` argument, which accepts a list of `Tools`. You can provide an empty list for `tools` and still add the default toolbox with the optional argument `add_base_tools=True`.

Now you can create an agent, like [`CodeAgent`], and run it. For convenience, we also provide the [`HfEngine`] class, which uses `huggingface_hub.InferenceClient` under the hood.

```python
from transformers import CodeAgent, HfEngine

llm_engine = HfEngine(model="meta-llama/Meta-Llama-3-70B-Instruct")
agent = CodeAgent(tools=[], llm_engine=llm_engine, add_base_tools=True)

agent.run(
    "Could you translate this sentence from French, say it out loud and return the audio.",
    sentence="Où est la boulangerie la plus proche?",
)
```

This comes in handy when you need something quickly! You can even leave the `llm_engine` argument undefined, and an [`HfEngine`] will be created by default.

```python
from transformers import CodeAgent

agent = CodeAgent(tools=[], add_base_tools=True)

agent.run(
    "Could you translate this sentence from French, say it out loud and give me the audio.",
    sentence="Où est la boulangerie la plus proche?",
)
```

Note that we used an additional `sentence` argument: you can pass text as additional arguments to the model.

You can also use this to indicate the path to local or remote files for the model to use:

```py
from transformers import ReactCodeAgent

agent = ReactCodeAgent(tools=[], llm_engine=llm_engine, add_base_tools=True)

agent.run("Why does Mike not know many people in New York?", audio="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/recording.mp3")
```

The system prompt and output parser were defined automatically, but you can easily inspect them through the `system_prompt_template` attribute of your agent.

```python
print(agent.system_prompt_template)
```

It is important to explain as clearly as possible the task you want to perform.
Every [`~Agent.run`] operation is independent, and since an agent is powered by an LLM, minor variations in your prompt might yield completely different results.
You can also run an agent consecutively for different tasks: each time, the attributes `agent.task` and `agent.logs` are re-initialized.

#### Code execution

A Python interpreter executes the code on a set of inputs passed along with your tools.
This should be safe because the only functions that can be called are the tools you provided (especially if they are only tools from Hugging Face) and the print function, so you are already limited in what can be executed.

By default, the Python interpreter also does not allow calls to functions outside a safe list, so the most obvious attacks should not be an issue.
You can also authorize additional imports by passing the authorized modules as a list of strings in the `additional_authorized_imports` argument when initializing your [`ReactCodeAgent`] or [`CodeAgent`]:

```py
>>> from transformers import ReactCodeAgent

>>> agent = ReactCodeAgent(tools=[], additional_authorized_imports=['requests', 'bs4'])
>>> agent.run("Could you get me the title of the page at url 'https://huggingface.co/blog'?")

(...)
'Hugging Face – Blog'
```

Execution stops at any code that attempts an illegal operation, or if the code generated by the agent raises a regular Python error.
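
To make the idea of an import allow-list concrete, here is a minimal sketch built on the standard `ast` module. It is not the interpreter that Transformers ships; the function name `find_unauthorized_imports` and the sample code string are assumptions made for illustration only.

```python
import ast
from typing import List


def find_unauthorized_imports(code: str, authorized: List[str]) -> List[str]:
    """Return the imported module names whose top-level package is not allowed."""
    allowed = set(authorized)
    blocked = []
    for node in ast.walk(ast.parse(code)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom):
            names = [node.module or ""]  # relative imports have no module name
        else:
            continue
        for name in names:
            # Compare only the top-level package, e.g. "os" for "os.path"
            if name.split(".")[0] not in allowed:
                blocked.append(name)
    return blocked


generated = "import requests\nimport os\nfrom bs4 import BeautifulSoup\n"
print(find_unauthorized_imports(generated, ["requests", "bs4"]))  # prints ['os']
```

A check like this only inspects import statements. A real sandbox also has to restrict function calls and attribute access, which is why the text above describes a safe list of callable functions as well.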

> [!WARNING]
> The LLM can generate arbitrary code that will then be executed: do not allow any unsafe functions to be called!

### System prompt

An agent, or rather the LLM that drives the agent, generates an output based on the system prompt. The system prompt can be customized and tailored to the intended task. For example, check the system prompt for the [`ReactCodeAgent`] (the version below is slightly simplified).

```text
You will be given a task to solve as best you can.
You have access to the following tools:
<<tool_descriptions>>

To solve the task, you must plan forward to proceed in a series of steps, in a cycle of 'Thought:', 'Code:', and 'Observation:' sequences.

At each step, in the 'Thought:' sequence, you should first explain your reasoning towards solving the task, then the tools that you want to use.
Then in the 'Code:' sequence, you should write the code in simple Python. The code sequence must end with '/End code' sequence.
During each intermediate step, you can use 'print()' to save whatever important information you will then need.
These print outputs will then be available in the 'Observation:' field, for using this information as input for the next step.

In the end you have to return a final answer using the `final_answer` tool.

Here are a few examples using notional tools:
---
{examples}

Above example were using notional tools that might not exist for you. You only have access to those tools:
<<tool_names>>
You also can perform computations in the python code you generate.

Always provide a 'Thought:' and a 'Code:\n```py' sequence ending with '```<end_code>' sequence. You MUST provide at least the 'Code:' sequence to move forward.

Remember to not perform too many operations in a single code block! You should split the task into intermediate code blocks.
Print results at the end of each step to save the intermediate results. Then use final_answer() to return the final result.

Remember to make sure that variables you use are all defined.

Now Begin!
```

The system prompt includes:
- An *introduction* that explains how the agent should behave and which tools it should use.
- A description of all the tools, marked by the `<<tool_descriptions>>` token, which is dynamically replaced at runtime with the tools defined or chosen by the user.
    - The tool description comes from the tool attributes `name`, `description`, `inputs` and `output_type`, and a simple `jinja2` template that you can refine.
- The expected output format.
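
The placeholder substitution described above can be sketched with plain string replacement. This is an illustration only: the library renders tool descriptions with a `jinja2` template, whereas the helper names `describe_tool` and `render_system_prompt`, the shortened template, and the description layout below are all assumptions.

```python
from typing import Dict, List

# Shortened, hypothetical template; the real system prompt is much longer
PROMPT_TEMPLATE = (
    "You have access to the following tools:\n"
    "<<tool_descriptions>>\n\n"
    "Now Begin!"
)


def describe_tool(tool: Dict[str, str]) -> str:
    """Build a one-line description from the tool attributes."""
    return (
        f"- {tool['name']}: {tool['description']} "
        f"Takes inputs: {tool['inputs']}. Returns: {tool['output_type']}"
    )


def render_system_prompt(template: str, tools: List[Dict[str, str]]) -> str:
    """Replace the placeholder with the descriptions of the given tools."""
    if "<<tool_descriptions>>" not in template:
        raise ValueError("The template must contain '<<tool_descriptions>>'.")
    descriptions = "\n".join(describe_tool(tool) for tool in tools)
    return template.replace("<<tool_descriptions>>", descriptions)


tools = [
    {
        "name": "model_download_counter",
        "description": "Returns the most downloaded model for a task.",
        "inputs": "task (text)",
        "output_type": "text",
    }
]
prompt = render_system_prompt(PROMPT_TEMPLATE, tools)
print(prompt)
```

The explicit check for the placeholder mirrors why a custom prompt needs to keep the `<<tool_descriptions>>` token: without it, the agent never learns which tools exist.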

You can improve the system prompt, for example, by adding an explanation of the output format.

For maximum flexibility, you can overwrite the whole system prompt template by passing your custom prompt to the `system_prompt` parameter.

```python
from transformers import ReactJsonAgent
from transformers.agents import PythonInterpreterTool

agent = ReactJsonAgent(tools=[PythonInterpreterTool()], system_prompt="{your_custom_prompt}")
```

> [!WARNING]
> Please make sure to define the `<<tool_descriptions>>` string somewhere in your custom prompt template, so that the agent is aware of the available tools.

### Inspecting an agent run

Here are a few useful attributes to inspect what happened after a run:
- `agent.logs` stores the fine-grained logs of the agent. At every step of the agent's run, everything is stored in a dictionary that is then appended to `agent.logs`.
- Running `agent.write_inner_memory_from_logs()` creates an inner memory of the agent's logs for the LLM to view, as a list of chat messages. This method goes over each step of the log and only stores what it is interested in as a message: for instance, it saves the system prompt and task in separate messages, then for each step it stores the LLM output as one message and the tool call output as another. Use this if you want a higher-level view of what happened, but keep in mind that not every log is transcribed by this method.
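
The conversion from step logs to chat messages can be pictured with the sketch below. It does not reproduce the library's implementation: the function `logs_to_messages` and the log keys (`system_prompt`, `task`, `llm_output`, `observation`) are hypothetical and chosen only to mirror the description above.

```python
from typing import Dict, List


def logs_to_messages(logs: List[Dict[str, str]]) -> List[Dict[str, str]]:
    """Keep only the parts of each step log that are useful as chat messages."""
    messages = []
    for step in logs:
        if "system_prompt" in step:
            messages.append({"role": "system", "content": step["system_prompt"]})
        if "task" in step:
            messages.append({"role": "user", "content": step["task"]})
        if "llm_output" in step:
            messages.append({"role": "assistant", "content": step["llm_output"]})
        if "observation" in step:
            messages.append({"role": "user", "content": "Observation: " + step["observation"]})
        # Any other key (timings, raw errors, ...) is deliberately left out
    return messages


example_logs = [
    {"system_prompt": "You will be given a task.", "task": "Compute 12 - 6."},
    {"llm_output": "Code: print(12 - 6)", "observation": "6", "duration": "0.4s"},
]
messages = logs_to_messages(example_logs)
for message in messages:
    print(message["role"], "|", message["content"])
```

The `duration` entry is dropped on purpose, which is the sense in which the message view is a summary rather than a full copy of the logs.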

## Tools

A tool is an atomic function that an agent uses to carry out a specific task.

You can, for instance, check the [`PythonInterpreterTool`]: it has a name, a description, input descriptions, an output type, and a `__call__` method that performs the action.

When the agent is initialized, the tool attributes are used to generate a tool description that is baked into the agent's system prompt. This lets the agent know which tools it can use and why.

### Default toolbox

Transformers comes with a default toolbox for empowering agents, which you can add to your agent upon initialization with the argument `add_base_tools=True`:

- **Document question answering**: answers a question about a document (such as a PDF) in image format ([Donut](./model_doc/donut))
- **Image question answering**: answers a question about an image ([VILT](./model_doc/vilt))
- **Speech to text**: transcribes speech to text ([Whisper](./model_doc/whisper))
- **Text to speech**: converts text to speech ([SpeechT5](./model_doc/speecht5))
- **Translation**: translates a given sentence from a source language to a target language.
- **Python code interpreter**: runs LLM-generated Python code in a secure environment. This tool is only added to [`ReactJsonAgent`] if you use `add_base_tools=True`, since code-based agents can already execute Python code natively.

You can use a tool manually by calling the [`load_tool`] function and giving it a task to perform.

```python
from transformers import load_tool

tool = load_tool("text-to-speech")
audio = tool("This is a text to speech tool")
```

### Create a new tool

You can create your own tool for use cases not covered by the default tools from Hugging Face.
For example, let's create a tool that returns the most downloaded model for a given task from the Hub.

You'll start with the code below.

```python
from huggingface_hub import list_models

task = "text-classification"

model = next(iter(list_models(filter=task, sort="downloads", direction=-1)))
print(model.id)
```

This code can be converted into a class that inherits from the [`Tool`] superclass.

The custom tool needs:

- A `name` attribute, which is the name of the tool itself. The name usually describes what the tool does. Since the code returns the most downloaded model for a task, let's name it `model_download_counter`.
- A `description` attribute, which is used to populate the agent's system prompt.
- An `inputs` attribute, which is a dictionary that maps each input name to a dictionary with the keys `"type"` and `"description"`. It contains information that helps the Python interpreter make educated choices about the input.
- An `output_type` attribute, which specifies the output type.
- A `forward` method, which contains the code to be executed to get the final result.

```python
from transformers import Tool
from huggingface_hub import list_models

class HFModelDownloadsTool(Tool):
    name = "model_download_counter"
    description = (
        "This is a tool that returns the most downloaded model of a given task on the Hugging Face Hub. "
        "It returns the name of the checkpoint."
    )

    inputs = {
        "task": {
            "type": "text",
            "description": "the task category (such as text-classification, depth-estimation, etc)",
        }
    }
    output_type = "text"

    def forward(self, task: str):
        model = next(iter(list_models(filter=task, sort="downloads", direction=-1)))
        return model.id
```

Now that the custom `HFModelDownloadsTool` class is ready, you can save it to a file named `model_downloads.py` and import it for use.

```python
from model_downloads import HFModelDownloadsTool

tool = HFModelDownloadsTool()
```

You can also share your custom tool to the Hub by calling [`~Tool.push_to_hub`] on the tool. Make sure you have created a repository for it on the Hub and are using a token with write access.

```python
tool.push_to_hub("{your_username}/hf-model-downloads")
```

Load the tool with the [`load_tool`] function and pass it to the `tools` parameter of your agent.

```python
from transformers import load_tool, CodeAgent

model_download_tool = load_tool("m-ric/hf-model-downloads")
agent = CodeAgent(tools=[model_download_tool], llm_engine=llm_engine)
agent.run(
    "Can you give me the name of the model that has the most downloads in the 'text-to-video' task on the Hugging Face Hub?"
)
```

You get the following:

```text
======== New task ========
Can you give me the name of the model that has the most downloads in the 'text-to-video' task on the Hugging Face Hub?
==== Agent is executing the code below:
most_downloaded_model = model_download_counter(task="text-to-video")
print(f"The most downloaded model for the 'text-to-video' task is {most_downloaded_model}.")
====
```

And the output:

`"The most downloaded model for the 'text-to-video' task is ByteDance/AnimateDiff-Lightning."`

### Manage your agent's toolbox

If you have already initialized an agent, it is inconvenient to reinitialize it from scratch just to add a new tool. With Transformers, you can manage an agent's toolbox by adding or replacing a tool.

Let's add the `model_download_tool` to an existing agent initialized with the default toolbox.

```python
from transformers import CodeAgent

agent = CodeAgent(tools=[], llm_engine=llm_engine, add_base_tools=True)
agent.toolbox.add_tool(model_download_tool)
```

Now we can leverage both the new tool and the previous text-to-speech tool:

```python
agent.run(
    "Can you read out loud the name of the model that has the most downloads in the 'text-to-video' task on the Hugging Face Hub and return the audio?"
)
```

| **Audio** |
|------------------------------------------------------------------------------------------------------------------------------------------------------|
| <audio controls><source src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/damo.wav" type="audio/wav"/> |

> [!WARNING]
> Be careful when adding tools to an agent that already works well, because it can bias tool selection towards your tool or lead the agent to pick a tool other than the one already defined.

Use the `agent.toolbox.update_tool()` method to replace an existing tool in the agent's toolbox.
This is useful if your new tool is a one-to-one replacement of the existing tool, because the agent already knows how to perform that specific task.
Just make sure the new tool follows the same API as the replaced tool, or adapt the system prompt template so that all examples using the replaced tool are updated.

### Use a collection of tools

You can leverage tool collections by using the `ToolCollection` object, with the slug of the collection you want to use.
Then pass them as a list to initialize your agent, and start using them!

```py
from transformers import ToolCollection, ReactCodeAgent

image_tool_collection = ToolCollection(collection_slug="huggingface-tools/diffusion-tools-6630bb19a942c2306a2cdb6f")
agent = ReactCodeAgent(tools=[*image_tool_collection.tools], add_base_tools=True)

agent.run("Please draw me a picture of rivers and lakes.")
```

To speed up the start, tools are loaded only if they are called by the agent.

This gets you this image:

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/rivers_and_lakes.png" />

### Use gradio-tools

[gradio-tools](https://github.com/freddyaboulton/gradio-tools) is a powerful library that allows using Hugging Face Spaces as tools. It supports many existing Spaces as well as custom Spaces.

Transformers supports `gradio_tools` with the [`Tool.from_gradio`] method. For example, let's use the [`StableDiffusionPromptGeneratorTool`](https://github.com/freddyaboulton/gradio-tools/blob/main/gradio_tools/tools/prompt_generator.py) from the `gradio-tools` toolkit to improve prompts and generate better images.

Import and instantiate the tool, then pass it to the `Tool.from_gradio` method:

```python
from gradio_tools import StableDiffusionPromptGeneratorTool
from transformers import Tool, load_tool, CodeAgent

gradio_prompt_generator_tool = StableDiffusionPromptGeneratorTool()
prompt_generator_tool = Tool.from_gradio(gradio_prompt_generator_tool)
```

Now you can use it just like any other tool. For example, let's improve the prompt `a rabbit wearing a space suit`.

```python
image_generation_tool = load_tool('huggingface-tools/text-to-image')
agent = CodeAgent(tools=[prompt_generator_tool, image_generation_tool], llm_engine=llm_engine)

agent.run(
    "Improve this prompt, then generate an image of it.", prompt='A rabbit wearing a space suit'
)
```

The model adequately leverages the tool:

```text
======== New task ========
Improve this prompt, then generate an image of it.
You have been provided with these initial arguments: {'prompt': 'A rabbit wearing a space suit'}.
==== Agent is executing the code below:
improved_prompt = StableDiffusionPromptGenerator(query=prompt)
while improved_prompt == "QUEUE_FULL":
    improved_prompt = StableDiffusionPromptGenerator(query=prompt)
print(f"The improved prompt is {improved_prompt}.")
image = image_generator(prompt=improved_prompt)
====
```

Before finally generating the image:

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/rabbit_spacesuit_flux.webp" />

> [!WARNING]
> gradio-tools requires *textual* inputs and outputs, even when working with other modalities such as image and audio objects. Image and audio inputs and outputs are currently incompatible.

### Use LangChain tools

We love LangChain and think it has a very compelling suite of tools.
To import a tool from LangChain, use the `from_langchain()` method.

Here is how you can use it to recreate the search result from the introduction using a LangChain web search tool.

```python
from langchain.agents import load_tools
from transformers import Tool, ReactCodeAgent

search_tool = Tool.from_langchain(load_tools(["serpapi"])[0])

agent = ReactCodeAgent(tools=[search_tool])

agent.run("How many more blocks (also denoted as layers) in BERT base encoder than the encoder from the architecture proposed in Attention is All You Need?")
```

## Gradio interface

You can leverage `gradio.Chatbot` to display your agent's thoughts using `stream_to_gradio`. Here is an example:

```py
import gradio as gr
from transformers import (
    load_tool,
    ReactCodeAgent,
    HfEngine,
    stream_to_gradio,
)

# Import tool from Hub
image_generation_tool = load_tool("m-ric/text-to-image")

llm_engine = HfEngine("meta-llama/Meta-Llama-3-70B-Instruct")

# Initialize the agent with the image generation tool
agent = ReactCodeAgent(tools=[image_generation_tool], llm_engine=llm_engine)


def interact_with_agent(task):
    messages = []
    messages.append(gr.ChatMessage(role="user", content=task))
    yield messages
    for msg in stream_to_gradio(agent, task):
        messages.append(msg)
        yield messages + [
            gr.ChatMessage(role="assistant", content="⏳ Task not finished yet!")
        ]
    yield messages


with gr.Blocks() as demo:
    text_input = gr.Textbox(lines=1, label="Chat Message", value="Make me a picture of the Statue of Liberty.")
    submit = gr.Button("Run illustrator agent!")
    chatbot = gr.Chatbot(
        label="Agent",
        type="messages",
        avatar_images=(
            None,
            "https://em-content.zobj.net/source/twitter/53/robot-face_1f916.png",
        ),
    )
    submit.click(interact_with_agent, [text_input], [chatbot])

if __name__ == "__main__":
    demo.launch()
```

@ -77,7 +77,7 @@ model = AutoModelForCausalLM.from_pretrained(model_id, gguf_file=filename)

Now you have access to the full, unquantized version of the model in the PyTorch ecosystem, where you can combine it with a plethora of other tools.

To convert back to a `gguf` file, we recommend using the [`convert-hf-to-gguf.py`](https://github.com/ggerganov/llama.cpp/blob/master/convert-hf-to-gguf.py) file from llama.cpp.
To convert back to a `gguf` file, we recommend using the [`convert-hf-to-gguf.py`](https://github.com/ggerganov/llama.cpp/blob/master/convert_hf_to_gguf.py) file from llama.cpp.

Here is how you would complete the script above to save the model and export it back to `gguf`:

@ -674,29 +674,7 @@ use_cpu: false

```

</hfoption>
<hfoption id="Tensor Parallelism with PyTorch 2">

```yml
compute_environment: LOCAL_MACHINE
tp_config:
  tp_size: 4
distributed_type: TP
downcast_bf16: 'no'
machine_rank: 0
main_training_function: main
mixed_precision: 'no'
num_machines: 1
num_processes: 4
rdzv_backend: static
same_network: true
tpu_env: []
tpu_use_cluster: false
tpu_use_sudo: false
use_cpu: false

```

</hfoption>
</hfoptions>
The [`accelerate launch`](https://huggingface.co/docs/accelerate/package_reference/cli#accelerate-launch) command is the recommended way to launch your training script on a distributed system with Accelerate and [`Trainer`], using the parameters specified in `config_file.yaml`. This file is saved to the Accelerate cache folder and is loaded automatically when you run `accelerate launch`.

@ -23,8 +23,6 @@

    title: Laden und Trainieren von Adaptern mit 🤗 PEFT
  - local: model_sharing
    title: Ein Modell teilen
  - local: transformers_agents
    title: Agents
  - local: llm_tutorial
    title: Generation with LLMs
  title: Tutorials

@ -39,4 +37,4 @@

    title: Testen
  - local: pr_checks
    title: Überprüfung einer Pull Request
  title: Contribute
  title: Contribute

@ -1,323 +0,0 @@

<!--Copyright 2023 The HuggingFace Team. All rights reserved.

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with
the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on
an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the
specific language governing permissions and limitations under the License.

⚠️ Note that this file is in Markdown but contains specific syntax for our doc-builder (similar to MDX) that may not be
rendered properly in your Markdown viewer.

-->

# Transformers Agents

<Tip warning={true}>

Transformers Agents is an experimental API that is subject to change at any time. Results returned by the agents
can vary, as the APIs or the underlying models are prone to change.

</Tip>

Transformers version v4.29.0 builds on the concept of *tools* and *agents*. You can play with it in
[this Colab](https://colab.research.google.com/drive/1c7MHD-T1forUPGcC_jlwsIptOzpG3hSj).

In short, it provides a natural language API on top of Transformers: we define a set of curated tools and design an
agent to interpret natural language and to use these tools. It is extensible by design; we curated some relevant tools,
but we will show you how the system can easily be extended to use any tool developed by the community.

Let's start with a few examples of what can be achieved with this new API. It is particularly powerful when it comes
to multimodal tasks, so let's take it for a spin to generate images and read text out loud.

```py
agent.run("Caption the following image", image=image)
```

| **Input**                                                                                                                   | **Output**                        |
|-----------------------------------------------------------------------------------------------------------------------------|-----------------------------------|
| <img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/beaver.png" width=200> | A beaver is swimming in the water |

---