### Description

This PR fixes a bug with getting module attributes during `torch.onnx.export` when `export_modules_as_functions` is used. With this fix, we can compare the LLaMA-2 models produced by the TorchScript exporter and the [Dynamo exporter](https://github.com/pytorch/pytorch/issues/104903).

### Context

When exporting LLaMA-2 from Hugging Face with `export_modules_as_functions`, the export fails because the `Embedding` object does not have the `freeze` attribute:

```
  File "/home/kvaishnavi/.local/lib/python3.8/site-packages/transformers/models/llama/modeling_llama.py", line 662, in forward
    inputs_embeds = self.embed_tokens(input_ids)
  File "/home/kvaishnavi/.local/lib/python3.8/site-packages/torch/nn/modules/module.py", line 1519, in _wrapped_call_impl
    return self._call_impl(*args, **kwargs)
  File "/home/kvaishnavi/.local/lib/python3.8/site-packages/torch/nn/modules/module.py", line 1558, in _call_impl
    args_result = hook(self, args)
  File "/home/kvaishnavi/.local/lib/python3.8/site-packages/torch/onnx/utils.py", line 1394, in _track_module_attributes_forward_pre_hook
    setattr(module, attr_name, _get_module_attributes(module))
  File "/home/kvaishnavi/.local/lib/python3.8/site-packages/torch/onnx/utils.py", line 1474, in _get_module_attributes
    return {k: getattr(module, k) for k in annotations}
  File "/home/kvaishnavi/.local/lib/python3.8/site-packages/torch/onnx/utils.py", line 1474, in <dictcomp>
    return {k: getattr(module, k) for k in annotations}
  File "/home/kvaishnavi/.local/lib/python3.8/site-packages/torch/nn/modules/module.py", line 1696, in __getattr__
    raise AttributeError(f"'{type(self).__name__}' object has no attribute '{name}'")
AttributeError: 'Embedding' object has no attribute 'freeze'
```

To work around this issue, we skip adding a key to the dictionary when the module does not have the corresponding attribute.
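A minimal sketch of the skip-missing-attributes behavior, written without torch so it runs standalone. `ModuleBase`, `Embedding`, and `get_module_attributes` are hypothetical stand-ins for `torch.nn.Module`, `torch.nn.Embedding`, and the exporter's `_get_module_attributes`; this is not the exporter's actual code.

```python
import typing


class ModuleBase:
    """Hypothetical stand-in for torch.nn.Module so the sketch runs without torch."""

    training: bool

    def __init__(self) -> None:
        self.training = True


class Embedding(ModuleBase):
    # Mirrors the failure: `freeze` is annotated on the class but never
    # assigned on the instance, so getattr(module, "freeze") raises.
    num_embeddings: int
    freeze: bool

    def __init__(self, num_embeddings: int) -> None:
        super().__init__()
        self.num_embeddings = num_embeddings


def get_module_attributes(module: ModuleBase) -> dict:
    """Hypothetical helper: collect annotated attributes the instance actually has."""
    annotations = typing.get_type_hints(type(module))
    # Drop annotations inherited from the base class (as done for nn.Module).
    for name in typing.get_type_hints(ModuleBase):
        annotations.pop(name, None)
    attrs = {}
    for name in annotations:
        if hasattr(module, name):  # skip instead of raising AttributeError
            attrs[name] = getattr(module, name)
    return attrs


print(get_module_attributes(Embedding(32000)))  # {'num_embeddings': 32000}
```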

Pull Request resolved: https://github.com/pytorch/pytorch/pull/109759

Approved by: https://github.com/BowenBao

Cap the opset version at 17 for `torch.onnx.export` and suggest that users use the Dynamo exporter. Warn users instead of failing hard, because users should still be able to create custom symbolic functions for opset > 17.

Also updates the default opset version by running `tools/onnx/update_default_opset_version.py`.
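The warn-instead-of-fail check can be sketched as follows. `check_opset_version` and `MAX_TORCHSCRIPT_OPSET` are hypothetical names, and the warning text is illustrative rather than the exporter's real message.

```python
import warnings

# Hypothetical constant: the cap described in this PR.
MAX_TORCHSCRIPT_OPSET = 17


def check_opset_version(opset_version: int) -> int:
    """Hypothetical check: warn, rather than raise, above the supported cap."""
    if opset_version > MAX_TORCHSCRIPT_OPSET:
        warnings.warn(
            f"Opset version {opset_version} is above the maximum supported "
            f"version {MAX_TORCHSCRIPT_OPSET}. Consider using the Dynamo exporter. "
            "Export continues so that custom symbolic functions can still be used.",
            UserWarning,
        )
    # The requested version is kept either way; nothing is clamped.
    return opset_version
```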

Fixes #107801, fixes #107446

Pull Request resolved: https://github.com/pytorch/pytorch/pull/107829

Approved by: https://github.com/BowenBao

### Proposal

When `keep_initializers_as_inputs` is True, it is quite possible that parameter values are supplied at runtime through the inputs that correspond to the initializers.

Hence we should disable the initializer de-duplication optimization when `keep_initializers_as_inputs=True`.
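To see why merging is unsafe here, consider a minimal sketch that treats each initializer as raw bytes and maps every name to the name it would be merged into. `deduplicate_initializers` is a hypothetical helper, and comparing raw bytes is an assumption standing in for whatever tensor comparison the exporter performs.

```python
from typing import Dict


def deduplicate_initializers(
    initializers: Dict[str, bytes], keep_initializers_as_inputs: bool
) -> Dict[str, str]:
    """Hypothetical helper: map each initializer name to the name it is merged into.

    When initializers are also graph inputs, a user may feed a different value
    for each name at runtime, so merging identical ones would silently tie
    independent parameters together. The optimization is therefore skipped.
    """
    if keep_initializers_as_inputs:
        return {name: name for name in initializers}  # no merging
    canonical: Dict[bytes, str] = {}
    mapping: Dict[str, str] = {}
    for name, data in initializers.items():
        # The first initializer seen with given contents becomes the canonical one.
        mapping[name] = canonical.setdefault(data, name)
    return mapping
```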

- [x] Update doc related to `keep_initializers_as_inputs`.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/96320

Approved by: https://github.com/abock, https://github.com/thiagocrepaldi

Fixes #88286, fixes #97160

Repro:

```python
import torch
import io
from torch.utils.checkpoint import checkpoint


class A(torch.nn.Module):
    # A supported module.
    def __init__(self):
        super(A, self).__init__()
        self.l1 = torch.nn.Linear(2, 2)

    def forward(self, x):
        return self.l1(x)


class B(torch.nn.Module):
    # This module is not exportable to ONNX because it
    # uses gradient-checkpointing. However, its two sub-modules
    # are exportable, so ORTModule should be used to compute them.
    def __init__(self):
        super(B, self).__init__()
        self.l1 = torch.nn.Linear(2, 2)
        self.a = A()

    def forward(self, x):
        def custom():
            def custom_forward(x_):
                return self.a(x_)

            return custom_forward

        z = self.l1(checkpoint(custom(), x))
        return z


torch.onnx.export(
    B(),
    (torch.randn(2, 2),),
    io.BytesIO(),
    autograd_inlining=True
)
```

`torch.onnx.export(autograd_inlining=True)` should reproduce the user error, since this is the original execution path.

```
Traceback (most recent call last):
  File "repro88286.py", line 36, in <module>
    torch.onnx.export(
  File "<@beartype(torch.onnx.utils.export) at 0x7f0f011faee0>", line 385, in export
  File "/opt/pytorch/torch/onnx/utils.py", line 511, in export
    _export(
  File "/opt/pytorch/torch/onnx/utils.py", line 1576, in _export
    graph, params_dict, torch_out = _model_to_graph(
  File "<@beartype(torch.onnx.utils._model_to_graph) at 0x7f0f01187dc0>", line 11, in _model_to_graph
  File "/opt/pytorch/torch/onnx/utils.py", line 1130, in _model_to_graph
    graph, params, torch_out, module = _create_jit_graph(model, args)
  File "/opt/pytorch/torch/onnx/utils.py", line 1006, in _create_jit_graph
    graph, torch_out = _trace_and_get_graph_from_model(model, args)
  File "/opt/pytorch/torch/onnx/utils.py", line 910, in _trace_and_get_graph_from_model
    trace_graph, torch_out, inputs_states = torch.jit._get_trace_graph(
  File "/opt/pytorch/torch/jit/_trace.py", line 1269, in _get_trace_graph
    outs = ONNXTracedModule(f, strict, _force_outplace, return_inputs, _return_inputs_states)(*args, **kwargs)
  File "/opt/pytorch/torch/nn/modules/module.py", line 1502, in _wrapped_call_impl
    return self._call_impl(*args, **kwargs)
  File "/opt/pytorch/torch/nn/modules/module.py", line 1511, in _call_impl
    return forward_call(*args, **kwargs)
  File "/opt/pytorch/torch/jit/_trace.py", line 128, in forward
    graph, out = torch._C._create_graph_by_tracing(
  File "/opt/pytorch/torch/jit/_trace.py", line 119, in wrapper
    outs.append(self.inner(*trace_inputs))
  File "/opt/pytorch/torch/nn/modules/module.py", line 1502, in _wrapped_call_impl
    return self._call_impl(*args, **kwargs)
  File "/opt/pytorch/torch/nn/modules/module.py", line 1511, in _call_impl
    return forward_call(*args, **kwargs)
  File "/opt/pytorch/torch/nn/modules/module.py", line 1492, in _slow_forward
    result = self.forward(*input, **kwargs)
  File "repro88286.py", line 32, in forward
    z = self.l1(checkpoint(custom(), x))
  File "/opt/pytorch/torch/utils/checkpoint.py", line 412, in checkpoint
    return CheckpointFunction.apply(function, preserve, *args)
  File "/opt/pytorch/torch/autograd/function.py", line 506, in apply
    return super().apply(*args, **kwargs)  # type: ignore[misc]
RuntimeError: _Map_base::at
```

With `autograd_inlining=False`, the export still fails, with a different error, because autograd inlining is not enabled:

```
Traceback (most recent call last):
  File "repro88286.py", line 36, in <module>
    torch.onnx.export(
  File "<@beartype(torch.onnx.utils.export) at 0x7f6088b32ee0>", line 385, in export
  File "/opt/pytorch/torch/onnx/utils.py", line 511, in export
    _export(
  File "/opt/pytorch/torch/onnx/utils.py", line 1615, in _export
    ) = graph._export_onnx(  # type: ignore[attr-defined]
RuntimeError: ONNX export failed: Couldn't export Python operator CheckpointFunction
```

To allow `CheckpointFunction` into the ONNX graph, the `operator_export_type=torch.onnx.OperatorExportTypes.ONNX_FALLTHROUGH` flag can be passed to `torch.onnx.export`, which leads to the following ONNX graph:

```
Exported graph: graph(%prim::PythonOp_0 : Float(2, 2, strides=[2, 1], requires_grad=0, device=cpu),
      %l1.weight : Float(2, 2, strides=[2, 1], requires_grad=1, device=cpu),
      %l1.bias : Float(2, strides=[1], requires_grad=1, device=cpu)):
  %/PythonOp_output_0 : Float(2, 2, strides=[2, 1], requires_grad=0, device=cpu) = ^CheckpointFunction[inplace=0, module="torch.utils.checkpoint", onnx_name="/PythonOp"](<function B.forward.<locals>.custom.<locals>.custom_forward at 0x7fdf9182f670>, True)(%prim::PythonOp_0), scope: __main__.B:: # /opt/pytorch/torch/autograd/function.py:506:0
  %6 : Float(2, 2, strides=[2, 1], requires_grad=1, device=cpu) = onnx::Gemm[alpha=1., beta=1., transB=1, onnx_name="/l1/Gemm"](%/PythonOp_output_0, %l1.weight, %l1.bias), scope: __main__.B::/torch.nn.modules.linear.Linear::l1 # /opt/pytorch/torch/nn/modules/linear.py:114:0
  return (%6)
```

Pull Request resolved: https://github.com/pytorch/pytorch/pull/104067

Approved by: https://github.com/BowenBao, https://github.com/kit1980

Merges multiple `startswith` and `endswith` calls into a single call that takes a tuple. Not only are these calls more readable, but they are also more efficient, as each string is iterated through only once.
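For illustration, the two forms below are equivalent; the prefixes and suffixes are example values, not the ones touched by this PR.

```python
name = "onnx::Gemm"

# Before: one call per prefix.
is_builtin_before = name.startswith("onnx::") or name.startswith("prim::")

# After: a single call that takes a tuple of prefixes.
is_builtin_after = name.startswith(("onnx::", "prim::"))

# endswith accepts a tuple in the same way.
is_model_file = "model.onnx".endswith((".onnx", ".pb"))

print(is_builtin_before, is_builtin_after, is_model_file)  # True True True
```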

Pull Request resolved: https://github.com/pytorch/pytorch/pull/96754

Approved by: https://github.com/ezyang

PyTorch and ONNX have always handled `None` in outputs differently. In internal tests, we flattened the torch outputs and checked whether the remaining outputs matched. However, this no longer works for scripting since the Optional node was introduced, because some `None`s are now kept.

#83184 forces script modules to keep all `None`s from PyTorch, but the ONNX model keeps only the ones produced by an Optional node and deletes the meaningless ones.

This PR uses the Optional node to keep those meaningless `None`s in the output as well, so that when script module results are compared, PyTorch and ONNX produce the same number of `None`s.
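The mismatch can be illustrated with plain Python values standing in for tensors. `flatten` and `outputs_match` are hypothetical test helpers, not the ones in the PyTorch test suite.

```python
from typing import Any, List, Sequence


def flatten(outputs: Any, keep_none: bool) -> List[Any]:
    """Hypothetical helper: flatten nested tuples/lists, optionally dropping None."""
    if isinstance(outputs, (list, tuple)):
        flat: List[Any] = []
        for item in outputs:
            flat.extend(flatten(item, keep_none))
        return flat
    if outputs is None and not keep_none:
        return []
    return [outputs]


def outputs_match(torch_outputs: Any, onnx_outputs: Sequence[Any]) -> bool:
    # Script modules keep every None, so the ONNX side must keep them too
    # for a positional, element-by-element comparison to line up.
    expected = flatten(torch_outputs, keep_none=True)
    return len(expected) == len(onnx_outputs) and all(
        e == o for e, o in zip(expected, onnx_outputs)
    )


torch_out = (1, None, (2, None))
print(outputs_match(torch_out, [1, 2]))              # False: Nones were dropped
print(outputs_match(torch_out, [1, None, 2, None]))  # True: Nones kept via Optional
```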

Pull Request resolved: https://github.com/pytorch/pytorch/pull/84789

Approved by: https://github.com/BowenBao

Fixes #82589

Why:

1. **full_check** in the `onnx::checker::check_model` function turns on **strict_mode** in `onnx::shape_inference::InferShapes()`, which I believe was the intention of this part of the code.

2. **strict_mode** catches failed shape and type inference (a model that is invalid from ONNX's perspective). ONNX Runtime cannot run these invalid models, because it relies on ONNX shape and type inference to optimize the ONNX graph. Why not set it to True by default? Some existing users run ONNX models on other platforms, such as Caffe2, which do not require a valid ONNX model.

3. This PR doesn't change the original behavior of `check_onnx_proto`, but adds a warning for models that cannot pass strict shape and type inference, stating that these models would fail on ONNX Runtime.
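A sketch of the check-then-warn flow, assuming a checker callable shaped like `onnx.checker.check_model(model, full_check=...)`. `check_onnx_proto` here is a hypothetical Python analogue; the real function is implemented in C++ and its warning text differs.

```python
import warnings
from typing import Any, Callable


def check_onnx_proto(model: Any, check_model: Callable[..., None]) -> None:
    """Hypothetical analogue: keep the old check, warn on strict-mode failures."""
    # Unchanged behavior: a failure of the basic check still raises.
    check_model(model)
    try:
        # full_check=True turns on strict shape and type inference.
        check_model(model, full_check=True)
    except Exception as err:  # the ONNX checker can raise several exception types
        warnings.warn(
            "The exported model failed strict ONNX shape and type inference "
            f"and would fail on ONNX Runtime: {err}"
        )
```

In real code the callable would be `onnx.checker.check_model`; passing it in keeps the sketch runnable without `onnx` installed.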

Pull Request resolved: https://github.com/pytorch/pytorch/pull/83186

Approved by: https://github.com/justinchuby, https://github.com/thiagocrepaldi, https://github.com/jcwchen, https://github.com/BowenBao

Extend `register_custom_op` to support onnx-script local functions. The FunctionProto from onnx-script is represented by a custom op and inserted into the ModelProto for op execution.

NOTE: I experimented with the >2GB case using a simple model with large initializers:

```python
import torch


class Net(torch.nn.Module):
    def __init__(self, B, C):
        super().__init__()
        self.layer_norm = torch.nn.LayerNorm((B, C), eps=1e-3)

    def forward(self, x):
        return self.layer_norm(x)


N, B, C = 3, 25000, 25000
model = Net(B, C)
x = torch.randn(N, B, C)
torch.onnx.export(model, x, "large_model.onnx", opset_version=12)
```

It turns out that `model_bytes` never exceeds 2GB after the `_export_onnx` pybind C++ function returns: that function splits initializers into external files and serializes the model before returning the bytes, and a protobuf cannot be larger than 2GB under any circumstances.
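A quick back-of-the-envelope check of why this model crosses the limit, assuming float32 parameters and `LayerNorm`'s default `elementwise_affine=True` (a weight and a bias, each of shape `(B, C)`):

```python
# Sizes from the Net(B, C) example above.
B, C = 25000, 25000
bytes_per_float32 = 4

# Assumes LayerNorm((B, C)) has a weight and a bias, each of shape (B, C).
per_tensor = B * C * bytes_per_float32  # 2_500_000_000 bytes
total = 2 * per_tensor                  # 5_000_000_000 bytes

protobuf_limit = 2**31  # 2 GiB cap on a serialized protobuf message

# Each parameter alone is already over the cap, so the initializers must be
# stored as external data before the model bytes can be serialized.
print(per_tensor > protobuf_limit, total > protobuf_limit)  # True True
```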

The test cases can be found in the next PR, #86907.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/86906

Approved by: https://github.com/justinchuby, https://github.com/BowenBao

Follow-up for #87735

Once again, because `BUILD_CAFFE2=0` is not tested for the ONNX exporter, one scenario slipped through: a model that can be exported without ATen fallback when `operator_export_type=ONNX_ATEN_FALLBACK` and `BUILD_CAFFE2=0`.

A new unit test has been added, but it won't prevent regressions unless `BUILD_CAFFE2=0` is exercised on CI again.

Fixes #87313

Pull Request resolved: https://github.com/pytorch/pytorch/pull/88504

Approved by: https://github.com/justinchuby, https://github.com/BowenBao

Update `register_custom_op_symbolic`'s behavior to _only register the symbolic function at a single version_. This is more aligned with the semantics of the API signature.

As a result of this change, opset 7 and opset 8 implementations are now used as fallbacks when `opset_version >= 9`. Previously, ops internally registered to opset < 9 were not discoverable when the export target version was >= 9. Updated the test to reflect this change.

The implication of this change is that users need to register a symbolic function at the exact version when they want to override an existing symbolic. They are not impacted if (1) no implementation exists for the op, or (2) they already register at the exact export version.
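The registration and fallback semantics can be sketched with a small class. `SymbolicFunctionGroup` is a hypothetical, simplified model of the idea (register at one version, dispatch to the nearest registered version at or below the target); the real registry in `torch.onnx._internal.registration` may apply additional rules.

```python
import bisect
from typing import Callable, Dict, Optional


class SymbolicFunctionGroup:
    """Hypothetical, simplified per-op registry keyed by opset version."""

    def __init__(self) -> None:
        self._functions: Dict[int, Callable] = {}

    def register(self, opset: int, func: Callable) -> None:
        # Register at exactly one version; other versions are untouched.
        self._functions[opset] = func

    def get(self, target_opset: int) -> Optional[Callable]:
        # Fall back to the closest registered version <= target_opset.
        versions = sorted(self._functions)
        index = bisect.bisect_right(versions, target_opset)
        if index == 0:
            return None
        return self._functions[versions[index - 1]]


group = SymbolicFunctionGroup()
group.register(7, lambda: "builtin@7")
group.register(13, lambda: "builtin@13")
print(group.get(9)())   # builtin@7: the opset 7 implementation is the fallback
group.register(9, lambda: "custom@9")
print(group.get(11)())  # custom@9
print(group.get(13)())  # builtin@13: overriding 13 requires registering at 13
```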

Pull Request resolved: https://github.com/pytorch/pytorch/pull/85636

Approved by: https://github.com/BowenBao

Update `unconvertible_ops` to create a list of unconvertible ops using the updated registry.

- Use fewer passes in the JIT process to avoid errors during conversion in the ONNX fallback mode

- Actually check the registry to find implemented ops

- Fix type hints for `_create_jit_graph` and `_jit_pass_onnx_remove_inplace_ops_for_onnx`
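A sketch of the registry check, where plain strings stand in for the `node.kind()` values of a traced graph and a set stands in for the registry. `find_unconvertible_ops` is hypothetical, and treating every `onnx::` and `prim::` op as convertible is a simplifying assumption.

```python
from typing import Iterable, List, Set


def find_unconvertible_ops(node_kinds: Iterable[str], registered: Set[str]) -> List[str]:
    """Hypothetical helper: list op kinds with no registered symbolic function."""
    unconvertible: List[str] = []
    for kind in node_kinds:
        # Assumption: ops already in these namespaces need no symbolic function.
        if kind.startswith(("onnx::", "prim::")):
            continue
        # Report each missing op once, in first-seen order.
        if kind not in registered and kind not in unconvertible:
            unconvertible.append(kind)
    return unconvertible


kinds = ["aten::linear", "onnx::Gemm", "prim::Constant", "aten::fancy_op", "aten::fancy_op"]
print(find_unconvertible_ops(kinds, {"aten::linear"}))  # ['aten::fancy_op']
```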

Pull Request resolved: https://github.com/pytorch/pytorch/pull/85595

Approved by: https://github.com/BowenBao

`_set_opset_version` and `_set_operator_export_type` were previously deprecated. This PR decorates them with the deprecation decorator so that warnings are emitted.

- Remove usage of `_set_opset_version` and `_set_operator_export_type` in favor of setting the global variables directly inside torch.onnx internals

- Update the default of `GLOBALS.operator_export_type` so that it is not None, to tighten types

- Remove usage of `_set_onnx_shape_inference`

Pull Request resolved: https://github.com/pytorch/pytorch/pull/85165

Approved by: https://github.com/BowenBao, https://github.com/AllenTiTaiWang

This PR creates the `GraphContext` class, which relays all graph methods to `_C.Graph` and implements the `g.op` method. The GraphContext object is passed to symbolic functions in place of `_C.Graph` for compatibility with existing symbolic functions.

This way, (1) we can type-annotate all `g` arguments because the method is defined, (2) we can use additional context information in symbolic functions, and (3) there is no more monkey patching of `_C.Graph`.

Also

- Fix return type of `_jit_pass_fixup_onnx_controlflow_node`

- Create `torchscript.py` to house torch.Graph related functions

- Change `GraphContext.op` to create nodes in the Block instead of the Graph

- Create `add_op_with_blocks` to handle scenarios where we need to directly manipulate sub-blocks. Update loop and if symbolic functions to use this function.
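The relay pattern can be sketched with a dataclass whose `__getattr__` forwards unknown attributes to the wrapped graph. `FakeGraph` and `FakeBlock` are hypothetical stand-ins for `_C.Graph` and `_C.Block`, and `op` builds a string instead of a real node.

```python
import dataclasses
from typing import Any, Dict, List


class FakeGraph:
    """Hypothetical stand-in for torch._C.Graph."""

    def inputs(self) -> List[str]:
        return ["x"]


class FakeBlock:
    """Hypothetical stand-in for torch._C.Block; records created nodes."""

    def __init__(self) -> None:
        self.nodes: List[str] = []


@dataclasses.dataclass
class GraphContext:
    graph: FakeGraph
    block: FakeBlock
    opset: int
    params_dict: Dict[str, Any] = dataclasses.field(default_factory=dict)

    def __getattr__(self, name: str) -> Any:
        # Called only for names not found on GraphContext itself; relay them
        # to the wrapped graph instead of monkey-patching the graph class.
        if name == "graph":  # avoid infinite recursion before __init__ runs
            raise AttributeError(name)
        return getattr(self.graph, name)

    def op(self, opname: str, *inputs: str) -> str:
        # Create the node in the current block rather than the graph.
        node = f"onnx::{opname}({', '.join(inputs)})"
        self.block.nodes.append(node)
        return node


def relu_symbolic(g: GraphContext, x: str) -> str:
    # Symbolic functions receive a GraphContext where they used to get _C.Graph.
    return g.op("Relu", x)


ctx = GraphContext(graph=FakeGraph(), block=FakeBlock(), opset=13)
print(ctx.inputs())             # ['x'] (relayed to the graph)
print(relu_symbolic(ctx, "x"))  # onnx::Relu(x)
```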

## Discussion

Should we put all the context inside `SymbolicContext` and make it an attribute in the `GraphContext` class? This way we only define two attributes `GraphContext.graph` and `GraphContext.context`. Currently all context attributes are directly defined in the class.

### Decision

Keep GraphContext flat and note that it will change in the future.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/84728

Approved by: https://github.com/AllenTiTaiWang, https://github.com/BowenBao

## Summary

This change introduces a new registry for symbolic functions in ONNX. The `SymbolicRegistry` class in `torch.onnx._internal.registration` replaces the dictionary and various functions defined in `torch.onnx.symbolic_registry`.

The new registry

- Has faster lookup by storing only functions in the opset version they are defined in

- Is easier to manage and interact with due to its class design

- Builds the foundation for the more flexible registration process detailed in #83787

Implementation changes

- **Breaking**: Remove `torch.onnx.symbolic_registry`

- `register_custom_op_symbolic` and `unregister_custom_op_symbolic` in utils maintain their api for compatibility

- Update `_onnx_supported_ops.py` for doc generation to include quantized ops.

- Update code to register python ops in `torch/csrc/jit/passes/onnx.cpp`

## Profiling results

Execution time is reduced by 0.1 seconds, and time spent in `_run_symbolic_function` is reduced by 34%. Tested on the AlexNet example in the public docs.

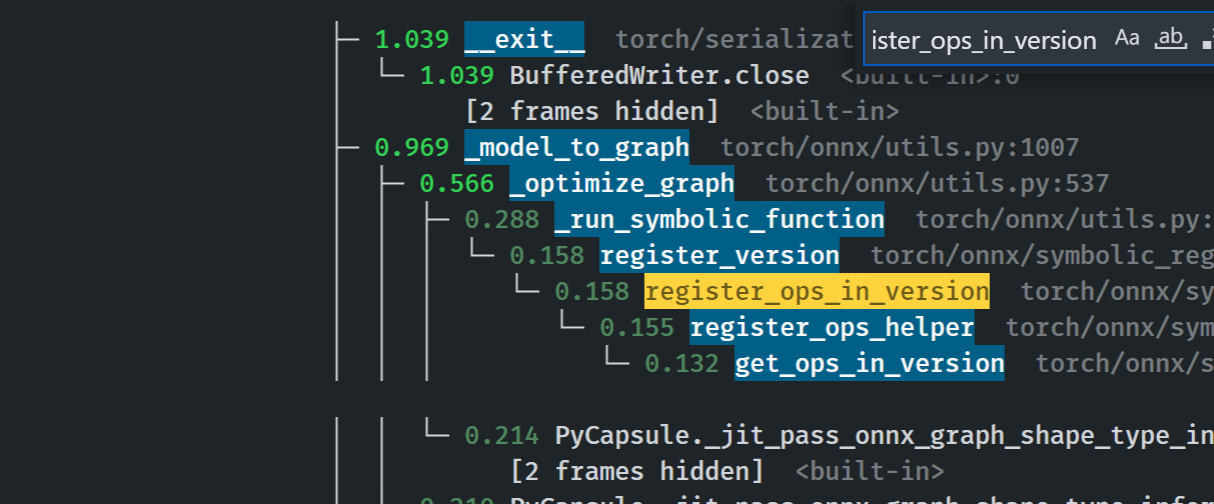

### After

```
└─ 1.641 export <@beartype(torch.onnx.utils.export) at 0x7f19be17f790>:1
   └─ 1.641 export torch/onnx/utils.py:185
      └─ 1.640 _export torch/onnx/utils.py:1331
         ├─ 0.889 _model_to_graph torch/onnx/utils.py:1005
         │  ├─ 0.478 _optimize_graph torch/onnx/utils.py:535
         │  │  ├─ 0.214 PyCapsule._jit_pass_onnx_graph_shape_type_inference <built-in>:0
         │  │  │     [2 frames hidden] <built-in>
         │  │  ├─ 0.190 _run_symbolic_function torch/onnx/utils.py:1670
         │  │  │  └─ 0.145 Constant torch/onnx/symbolic_opset9.py:5782
         │  │  │     └─ 0.139 _graph_op torch/onnx/_patch_torch.py:18
         │  │  │        └─ 0.134 PyCapsule._jit_pass_onnx_node_shape_type_inference <built-in>:0
         │  │  │              [2 frames hidden] <built-in>
         │  │  └─ 0.033 [self]
```

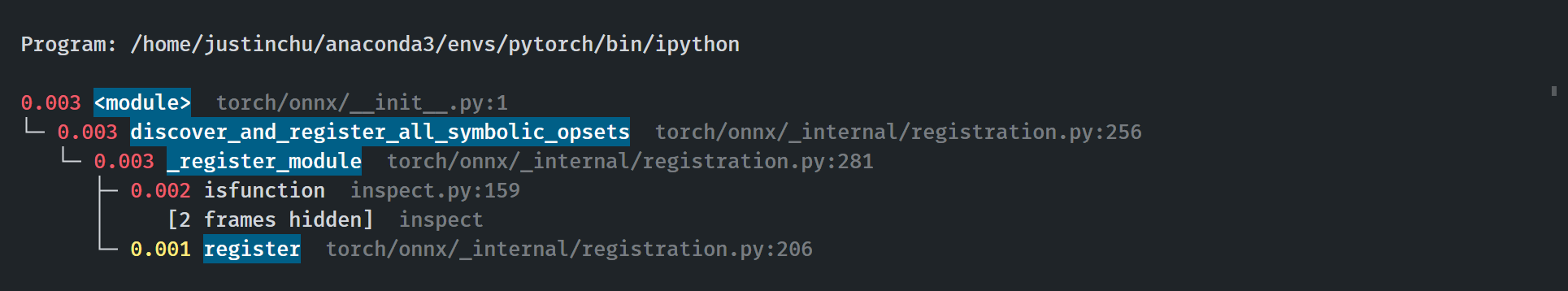

### Before

### Start up time

The startup process takes 0.03 seconds. Calls to `inspect` will be eliminated when we switch to using decorators for registration in #84448.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/84382

Approved by: https://github.com/AllenTiTaiWang, https://github.com/BowenBao

The default value of `params_dict` in `_optimize_graph`, which is `None`, throws the following error:

> _C._jit_pass_onnx_unpack_quantized_weights(

> TypeError: _jit_pass_onnx_unpack_quantized_weights(): incompatible function arguments. The following argument types are supported:

> 1. (arg0: torch::jit::Graph, arg1: Dict[str, IValue], arg2: bool) -> Dict[str, IValue]

Replacing it with an empty dict fixes the issue (and makes more sense).
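A minimal sketch of the same idea. `unpack_quantized_weights` and `optimize_graph` are hypothetical stand-ins for the binding and `_optimize_graph`. It uses the common Python idiom of a `None` default normalized to a fresh dict, which avoids sharing one mutable default between calls; this may differ from how the PR spells the fix.

```python
from typing import Any, Dict, Optional


def unpack_quantized_weights(params_dict: Dict[str, Any]) -> Dict[str, Any]:
    """Hypothetical stand-in for the pybind binding: like it, it rejects None."""
    if not isinstance(params_dict, dict):
        raise TypeError("incompatible function arguments")
    return params_dict


def optimize_graph(params_dict: Optional[Dict[str, Any]] = None) -> Dict[str, Any]:
    # Normalize None to a fresh empty dict before calling into the binding.
    if params_dict is None:
        params_dict = {}
    return unpack_quantized_weights(params_dict)
```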

Pull Request resolved: https://github.com/pytorch/pytorch/pull/83996

Approved by: https://github.com/BowenBao

Enable runtime type checking for all torch.onnx public APIs, symbolic functions, and most helpers (minus two that do not have a checkable type: `_.JitType` does not exist) by adding the beartype decorator. Fix type annotations to make unit tests green.

Profile, exporting `torchvision.models.alexnet(pretrained=True)`:

```
with runtime type checking: 21.314 / 10 passes
without runtime type checking: 20.797 / 10 passes
+ 2.48%
```
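The PR relies on beartype. The decorator below is a hand-rolled stand-in, not beartype, that shows the same idea: validate arguments against their annotations on every call, which is presumably the kind of per-call work behind the overhead measured above. `runtime_typecheck` and `export` are hypothetical.

```python
import functools
import inspect
import typing
from typing import Any, Callable


def runtime_typecheck(func: Callable) -> Callable:
    """Hypothetical stand-in for beartype: checks plain-class annotations only."""
    hints = typing.get_type_hints(func)
    signature = inspect.signature(func)

    @functools.wraps(func)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        bound = signature.bind(*args, **kwargs)
        for name, value in bound.arguments.items():
            expected = hints.get(name)
            # Skip unannotated parameters and non-class hints such as Optional[int].
            if isinstance(expected, type) and not isinstance(value, expected):
                raise TypeError(
                    f"{func.__name__}(): argument '{name}' expected "
                    f"{expected.__name__}, got {type(value).__name__}"
                )
        return func(*args, **kwargs)

    return wrapper


@runtime_typecheck
def export(opset_version: int, verbose: bool = False) -> int:
    return opset_version


print(export(13))  # 13
# export("13") raises TypeError: argument 'opset_version' expected int, got str
```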

Pull Request resolved: https://github.com/pytorch/pytorch/pull/84091

Approved by: https://github.com/BowenBao, https://github.com/thiagocrepaldi

This PR provides a temporary fix for #84092 in the exporter to keep more cases from falling into this bug.

A long-term fix will be provided later.

A simple repro with `torch.onnx.export` is still under investigation: `torch.jit.trace()` is not the API we call inside `torch.onnx.export`, which may explain the difference. Therefore, only a test case is provided here.

A specific test one can use:

```python
import torch
import onnxruntime
from onnxruntime.training.ortmodule import DebugOptions, LogLevel
from onnxruntime.training.ortmodule import ORTModule


class MyModule(torch.nn.Module):
    def __init__(self):
        super().__init__()
        self.cv1 = torch.nn.Conv2d(3, 3, 5, 2, 1)

    def forward(self, x):
        x = self.cv1(x)
        return x


x = torch.randn(10, 3, 20, 20) * 2
m = MyModule().eval()
x = x.cuda()
m = m.cuda()
debug_options = DebugOptions(log_level=LogLevel.VERBOSE, save_onnx=True, onnx_prefix="ViT-B")
m = ORTModule(m, debug_options=debug_options)

with torch.cuda.amp.autocast(dtype=torch.float16, cache_enabled=True):
    loss = m(x)
```

and make the following assertion in ORTModule fail:

17ccd6fa02/orttraining/orttraining/python/training/ortmodule/_io.py (L578-L581)

Without the fix, the user will see the weight and bias of the Conv node become constants.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/84219

Approved by: https://github.com/BowenBao, https://github.com/thiagocrepaldi

Introduce `_jit_pass_onnx_assign_node_and_value_names` to parse and assign scoped names to nodes and values in the exported ONNX graph.

Module layer information is obtained from the `ONNXScopeName` captured in each node's `scope` attribute. For nodes, the processed ONNX node name is stored in the `onnx_name` attribute. For values, the processed ONNX output name is stored as the `debugName`.
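The naming scheme can be sketched from the names that appear in the exported graph earlier in this log (`/l1/Gemm`, `/PythonOp_output_0`). `assign_node_name` and `assign_output_name` are hypothetical helpers; the real pass is implemented in C++ and may handle more cases, such as duplicate names.

```python
from typing import List


def assign_node_name(scope: List[str], op_type: str) -> str:
    """Hypothetical helper: join the module scope path and the op type."""
    return "/" + "/".join(scope + [op_type])


def assign_output_name(node_name: str, index: int) -> str:
    """Hypothetical helper: name the index-th output after its producing node."""
    return f"{node_name}_output_{index}"


print(assign_node_name(["l1"], "Gemm"))                          # /l1/Gemm
print(assign_output_name(assign_node_name([], "PythonOp"), 0))  # /PythonOp_output_0
```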

Pull Request resolved: https://github.com/pytorch/pytorch/pull/82040

Approved by: https://github.com/AllenTiTaiWang, https://github.com/justinchuby, https://github.com/abock