mirror of

https://github.com/pytorch/pytorch.git

synced 2025-10-20 12:54:11 +08:00

main

12 Commits

| Author | SHA1 | Message | Date | |

|---|---|---|---|---|

| 26740f853e |

Remove unnecessary use of ctx.resolve_tools. (#120493)

In this case, it's simpler to use ctx.actions.run(executable = ...), which already ensures that the runfiles associated with the executable are present. (It's also possible to use ctx.actions.run_shell(tools = ...) with a custom command line, but it's unclear to me that indirecting through the shell is needed here.) Pull Request resolved: https://github.com/pytorch/pytorch/pull/120493 Approved by: https://github.com/ezyang |

|||

| ced5c89b6f |

add explicit vectorization for Half dtype on CPU (#96076)

This patch is part of the half-float performance optimization on CPU:

* add a specialization for dtype `Half` in `Vectorized<>` under both AVX2 and AVX512.
* add a specialization for dtype `Half` in the functional utils, e.g. `vec::map_reduce<>()`, which uses float32 as the accumulate type.

Also add a helper struct `vec_hold_type<scalar_t>`: `Vectorized<Half>::value_type` points to its underlying storage type, `uint16_t`, which leads to errors if a kernel uses `Vec::value_type`.

Half uses the same logic as BFloat16 in `Vectorized<>`: each half vector is mapped to 2x float vectors for computation.

Note that this patch modifies the cmake files by adding **-mf16c** to the AVX2 build. According to https://gcc.gnu.org/onlinedocs/gcc/x86-Options.html, all the hardware platforms that support **avx2** already have **f16c**.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/96076

Approved by: https://github.com/malfet

|
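The message above says `vec::map_reduce<>()` uses float32 as the accumulate type for `Half`. The following is a minimal sketch of why that matters, not the ATen implementation: it emulates half and float32 rounding with Python's `struct` module, and the helper names (`round_to_half`, `sum_with_half_accumulator`, and so on) are made up for illustration.

```python
import struct


def round_to_half(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def round_to_float32(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 single-precision value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def sum_with_half_accumulator(values):
    """Reduce while keeping the running sum in half precision."""
    acc = 0.0
    for v in values:
        acc = round_to_half(acc + round_to_half(v))
    return acc


def sum_with_float32_accumulator(values):
    """Reduce half inputs in float32, rounding to half only at the end."""
    acc = 0.0
    for v in values:
        acc = round_to_float32(acc + round_to_half(v))
    return round_to_half(acc)


ones = [1.0] * 4096
half_total = sum_with_half_accumulator(ones)
float_total = sum_with_float32_accumulator(ones)
print(half_total, float_total)  # prints "2048.0 4096.0"
```

With a half accumulator the running sum stalls at 2048, because 2049 is not representable in half precision and rounds back down. Accumulating in float32 and converting once at the end avoids that loss.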

|||

| c6c207f620 |

use ufunc_defs.bzl in BUILD.bazel

Pull Request resolved: https://github.com/pytorch/pytorch/pull/77747 This allows us to remove some duplication in aten.bzl. Differential Revision: [D36481025](https://our.internmc.facebook.com/intern/diff/D36481025/) **NOTE FOR REVIEWERS**: This PR has internal Facebook specific changes or comments, please review them on [Phabricator](https://our.internmc.facebook.com/intern/diff/D36481025/)! Approved by: https://github.com/seemethere |
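The ufunc codegen commit further down this log (ce7910ba81) describes `ufunc_defs.bzl` as a set of functions that compute the generated cpu, cpu kernel and cuda file names from the ufunc header list, each taking a `genpattern` string with a `{}` placeholder. A rough Python sketch of that idea follows; the function names, the header path and the pattern strings are assumptions for illustration, not the contents of `ufunc_defs.bzl`.

```python
import posixpath

# Hypothetical header list; the log says the canonical list is
# aten_ufunc_headers in build_variables.bzl.
EXAMPLE_UFUNC_HEADERS = ["aten/src/ATen/native/ufunc/add.h"]


def ufunc_names(headers):
    """Derive the ufunc name ("add") from each header path (".../add.h")."""
    return [posixpath.splitext(posixpath.basename(h))[0] for h in headers]


def generated_sources(headers, file_template, genpattern="{}"):
    """Build one generated file name per ufunc, then wrap it in genpattern."""
    return [
        genpattern.format(file_template.format(name))
        for name in ufunc_names(headers)
    ]


def cpu_sources(headers, genpattern="{}"):
    return generated_sources(headers, "UfuncCPU_{}.cpp", genpattern)


def cpu_kernel_sources(headers, genpattern="{}"):
    return generated_sources(headers, "UfuncCPUKernel_{}.cpp", genpattern)


def cuda_sources(headers, genpattern="{}"):
    return generated_sources(headers, "UfuncCUDA_{}.cu", genpattern)


# The genpattern reformats plain file names into whatever the build rule needs.
print(cpu_sources(EXAMPLE_UFUNC_HEADERS))
print(cuda_sources(EXAMPLE_UFUNC_HEADERS, genpattern=":generated[{}]"))
```

Keeping the name computation in one place is what lets several build files share it instead of each repeating the file lists.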

|||

| a0a23c6ef8 |

[bazel] make it possible to build the whole world, update CI (#78870)

Fixes https://github.com/pytorch/pytorch/issues/77509

This PR supersedes https://github.com/pytorch/pytorch/pull/77510. It allows both `bazel query //...` and `bazel build --config=gpu //...` to work.

Concretely the changes are:

1. Add the "GenerateAten" mnemonic. This is a convenience: anybody who uses [Remote Execution](https://bazel.build/docs/remote-execution) can add a

   ```
   build:rbe --strategy=GenerateAten=sandboxed,local
   ```

   line to `~/.bazelrc` and build this action locally (it doesn't have hermetic dependencies at the moment).
2. Replaced a few `http_archive` repos with the proper existing submodules to avoid code drift.
3. Updated `pybind11_bazel` and added `python_version="3"` to `python_configure`. This prevents hard-to-debug errors caused by an attempt to build with python2 on systems where it is the default python (Ubuntu 18.04, for example).
4. Added `unused_` repos; their purpose is to hide the unwanted submodules of submodules that often have bazel targets in them.
5. Updated CI to build `//...`. This is a great step forward in preventing regressions in targets not only in the top-level BUILD.bazel file, but in other folders too.
6. Switched the default bazel build to use gpu support.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/78870

Approved by: https://github.com/ezyang

|

|||

| 501d0729cb |

move build_variables.bzl and ufunc_defs.bzl from pytorch-root/tools/ to the root

Pull Request resolved: https://github.com/pytorch/pytorch/pull/78542 This makes importing easier in different build systems that have different absolute names for the pytorch-root. Differential Revision: [D36782582](https://our.internmc.facebook.com/intern/diff/D36782582/) **NOTE FOR REVIEWERS**: This PR has internal Facebook specific changes or comments, please review them on [Phabricator](https://our.internmc.facebook.com/intern/diff/D36782582/)! Approved by: https://github.com/malfet |

|||

| ce7910ba81 |

ufunc codegen (#65851)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/65851

Design doc: https://docs.google.com/document/d/12rtlHnPUpaJ-I52Iob3L0WA3rKRr_OY7fXqeCvn2MVY/edit

First read the design doc to understand the user syntax. In this PR, we have converted add to use ufunc codegen; most of the cpp changes are deleting the preexisting implementations of add, and ufunc/add.h are the new implementations in the ufunc format.

The bulk of this PR is in the new codegen machinery. Here's the order to read the files:

* `tools/codegen/model.py`
  * Some self-explanatory utility classes: `ScalarType`, `DTYPE_CLASSES`
  * New classes for representing ufunc entries in `native_functions.yaml`: `UfuncKey` and `UfuncInnerLoop`, as well as parsing logic for these entries. UfuncKey has some unusual entries (e.g., CPUScalar) that don't show up in the documentation (more on these below).
  * A predicate `is_ufunc_dispatch_key` for testing which dispatch keys should get automatically generated when an operator opts into ufuncs (CPU and CUDA, for now!)
* `tools/codegen/api/types.py`
  * More self-explanatory utility stuff: ScalarTypeToCppMapping mapping ScalarType to CppTypes; Binding.rename for changing the name of a binding (used when we assign constructor variables to member variables inside CUDA functors)
  * New VectorizedCType, representing `at::vec::Vectorized<T>`. This is used inside vectorized CPU codegen.
  * New `scalar_t` and `opmath_t` BaseCppTypes, representing template parameters that we work with when doing codegen inside ufunc kernel loops (e.g., where you previously had Tensor, now you have `scalar_t`)
  * `StructuredImplSignature` represents a `TORCH_IMPL_FUNC` definition, and straightforwardly follows from preexisting `tools.codegen.api.structured`
* `tools/codegen/translate.py` - Yes, we use translate a LOT in this PR. I improved some of the documentation; the only substantive changes are adding two new conversions: given a `scalar_t` or a `const Scalar&`, make it convertible to an `opmath_t`
* `tools/codegen/api/ufunc.py`
  * OK, now we're at the meaty stuff. This file represents the calling conventions of three important concepts in ufunc codegen, which we'll describe shortly. All of these APIs are relatively simple, since there aren't any complicated types by the time you get to kernels.
  * stubs are the DispatchStub trampolines that CPU kernels use to get to their vectorized versions. They drop all Tensor arguments (as they are in TensorIterator) but otherwise match the structured calling convention
  * ufuncs are the inner loop template functions that you wrote in ufunc/add.h which do the actual computation in question. Here, all the Tensors and Scalars have been converted into the computation type (`opmath_t` in CUDA, `scalar_t` in CPU)
  * ufunctors are a CUDA-only concept representing functors that take some of their arguments on a host-side constructor, and the rest in the device-side apply. Once again, Tensors and Scalars are converted into the computation type, `opmath_t`, but for clarity all the functions take `scalar_t` as argument (as this is the type that is most salient at the call site). Because the constructor and apply are code generated separately, `ufunctor_arguments` returns a teeny struct `UfunctorBindings`
* `tools/codegen/dest/ufunc.py` - the workhorse. This gets its own section below.
* `tools/codegen/gen.py` - just calling out to the new dest.ufunc implementation to generate UfuncCPU_add.cpp, UfuncCPUKernel_add.cpp and UfuncCUDA_add.cu files per ufunc operator. Each of these files does what you expect (small file that registers kernel and calls stub; CPU implementation; CUDA implementation). There is a new file manager for UfuncCPUKernel files as these need to get replicated by cmake for vectorization. One little trick to avoid recompilation is we directly replicate code generated forward declarations in these files, to reduce the number of headers we depend on (this is codegen, we're just doing the preprocessor's job!)
* I'll talk about build system adjustments below.

OK, let's talk about tools/codegen/dest/ufunc.py. This file can be roughly understood in two halves: one for CPU code generation, and the other for CUDA code generation.

**CPU codegen.** Here's roughly what we want to generate:

```
// in UfuncCPU_add.cpp
using add_fn = void (*)(TensorIteratorBase&, const at::Scalar&);
DECLARE_DISPATCH(add_fn, add_stub);
DEFINE_DISPATCH(add_stub);

TORCH_IMPL_FUNC(ufunc_add_CPU)
(const at::Tensor& self, const at::Tensor& other, const at::Scalar& alpha, const at::Tensor& out) {
  add_stub(device_type(), *this, alpha);
}

// in UfuncCPUKernel_add.cpp
void add_kernel(TensorIteratorBase& iter, const at::Scalar& alpha) {
  at::ScalarType st = iter.common_dtype();
  RECORD_KERNEL_FUNCTION_DTYPE("add_stub", st);
  switch (st) {
    AT_PRIVATE_CASE_TYPE("add_stub", at::ScalarType::Bool, bool, [&]() {
      auto _s_alpha = alpha.to<scalar_t>();
      cpu_kernel(iter, [=](scalar_t self, scalar_t other) {
        return ufunc::add(self, other, _s_alpha);
      });
    })
    AT_PRIVATE_CASE_TYPE(
        "add_stub", at::ScalarType::ComplexFloat, c10::complex<float>, [&]() {
          auto _s_alpha = alpha.to<scalar_t>();
          auto _v_alpha = at::vec::Vectorized<scalar_t>(_s_alpha);
          cpu_kernel_vec(
              iter,
              [=](scalar_t self, scalar_t other) {
                return ufunc::add(self, other, _s_alpha);
              },
              [=](at::vec::Vectorized<scalar_t> self, at::vec::Vectorized<scalar_t> other) {
                return ufunc::add(self, other, _v_alpha);
              });
        })
    ...
```

The most interesting change about the generated code is what previously was an `AT_DISPATCH` macro invocation is now an unrolled loop. This makes it easier to vary behavior per-dtype (you can see in this example that the entry for bool and float differ) without having to add extra conditionals on top.

Otherwise, to generate this code, we have to hop through several successive API changes:

* In TORCH_IMPL_FUNC(ufunc_add_CPU), go from StructuredImplSignature to StubSignature (call the stub). This is normal argument massaging in the classic translate style.
* In add_kernel, go from StubSignature to UfuncSignature. This is nontrivial, because we must do various conversions outside of the inner kernel loop. These conversions are done by hand, setting up the context appropriately, and then the final ufunc call is done using translate. (BTW, I introduce a new convention here, call on a Signature, for code generating a C++ call, and I think we should try to use this convention elsewhere)

The other piece of nontrivial logic is the reindexing by dtype. This reindexing exists because the native_functions.yaml format is indexed by UfuncKey:

```
Generic: add (AllAndComplex, BFloat16, Half)
ScalarOnly: add (Bool)
```

but when we do code generation, we case on dtype first, and then we generate a `cpu_kernel` or `cpu_kernel_vec` call. We also don't care about CUDA code generation (which Generic hits). To do this, we lower these keys into two low level keys, CPUScalar and CPUVector, which represent the CPU scalar and CPU vectorized ufuncs, respectively (Generic maps to CPUScalar and CPUVector, while ScalarOnly maps to CPUScalar only). Reindexing then gives us:

```
AllAndComplex:
    CPUScalar: add
    CPUVector: add
Bool:
    CPUScalar: add
...
```

which is a good format for code generation, but too wordy to force native_functions.yaml authors to write. Note that when reindexing, it is possible for there to be a conflicting definition for the same dtype; we just define a precedence order and have one override the other, so that it is easy to specialize on a particular dtype if necessary. Also note that because CPUScalar/CPUVector are part of UfuncKey, technically you can manually specify them in native_functions.yaml, although I don't expect this functionality to be used.

**CUDA codegen.** CUDA code generation has many of the same ideas as CPU codegen, but it needs to know about functors, and stubs are handled slightly differently. Here is what we want to generate:

```
template <typename scalar_t>
struct CUDAFunctorOnSelf_add {
  using opmath_t = at::opmath_type<scalar_t>;
  opmath_t other_;
  opmath_t alpha_;
  CUDAFunctorOnSelf_add(opmath_t other, opmath_t alpha) : other_(other), alpha_(alpha) {}
  __device__ scalar_t operator()(scalar_t self) {
    return ufunc::add(static_cast<opmath_t>(self), other_, alpha_);
  }
};

... two more functors ...

void add_kernel(TensorIteratorBase& iter, const at::Scalar & alpha) {
  TensorIteratorBase& iter = *this;
  at::ScalarType st = iter.common_dtype();
  RECORD_KERNEL_FUNCTION_DTYPE("ufunc_add_CUDA", st);
  switch (st) {
    AT_PRIVATE_CASE_TYPE("ufunc_add_CUDA", at::ScalarType::Bool, bool, [&]() {
      using opmath_t = at::opmath_type<scalar_t>;
      if (false) {
      } else if (iter.is_cpu_scalar(1)) {
        CUDAFunctorOnOther_add<scalar_t> ufunctor(
            iter.scalar_value<opmath_t>(1), (alpha).to<opmath_t>());
        iter.remove_operand(1);
        gpu_kernel(iter, ufunctor);
      } else if (iter.is_cpu_scalar(2)) {
        CUDAFunctorOnSelf_add<scalar_t> ufunctor(
            iter.scalar_value<opmath_t>(2), (alpha).to<opmath_t>());
        iter.remove_operand(2);
        gpu_kernel(iter, ufunctor);
      } else {
        gpu_kernel(iter, CUDAFunctor_add<scalar_t>((alpha).to<opmath_t>()));
      }
    })
    ...

REGISTER_DISPATCH(add_stub, &add_kernel);

TORCH_IMPL_FUNC(ufunc_add_CUDA)
(const at::Tensor& self, const at::Tensor& other, const at::Scalar& alpha, const at::Tensor& out) {
  add_kernel(*this, alpha);
}
```

The functor business is the bulk of the complexity. Like CPU, we decompose CUDA implementation into three low-level keys: CUDAFunctor (normal, all CUDA kernels will have this), and CUDAFunctorOnSelf/CUDAFunctorOnOther (these are to support Tensor-Scalar specializations when the Scalar lives on CPU). Both Generic and ScalarOnly provide ufuncs for CUDAFunctor, but for us to also lift these into Tensor-Scalar specializations, the operator itself must be eligible for Tensor-Scalar specialization. At the moment, this is hardcoded to be all binary operators, but in the future we can use tags in native_functions.yaml to disambiguate (or perhaps expand codegen to handle n-ary operators).

The reindexing process not only reassociates ufuncs by dtype, but it also works out if Tensor-Scalar specializations are needed and codegens the ufunctors necessary for the level of specialization here (`compute_ufunc_cuda_functors`). Generating the actual kernel (`compute_ufunc_cuda_dtype_body`) just consists of, for each specialization, constructing the functor and then passing it off to `gpu_kernel`. Most of the hard work is in functor generation, where we take care to make sure `operator()` has the correct input and output types (which `gpu_kernel` uses to arrange for memory accesses to the actual CUDA tensor; if you get these types wrong, your kernel will still work, it will just run very slowly!)

There is one big subtlety with CUDA codegen: this won't work:

```
Generic: add (AllAndComplex, BFloat16, Half)
ScalarOnly: add_bool (Bool)
```

This is because, even though there are separate Generic/ScalarOnly entries, we only generate a single functor to cover ALL dtypes in this case, and the functor has the ufunc name hardcoded into it. You'll get an error if you try to do this; to fix it, just make sure the ufunc is named the same consistently throughout. In the code, you see this because after testing for the short circuit case (when a user provided the functor themselves), we squash all the generic entries together and assert their ufunc names are the same. Hypothetically, if we generated a separate functor per dtype, we could support differently named ufuncs but... why would you do that to yourself. (One piece of nastiness is that the native_functions.yaml syntax doesn't stop you from shooting yourself in the foot.)

A brief word about CUDA stubs: technically, they are not necessary, as there is no CPU/CPUKernel style split for CUDA kernels (so, if you look, structured impl actually calls add_kernel directly). However, there is some code that still makes use of CUDA stubs (in particular, I use the stub to conveniently reimplement sub in terms of add), so we still register it. This might be worth frying some more at a later point in time.

**Build system changes.** If you are at FB, you should review these changes in fbcode, as there are several changes in files that are not exported to ShipIt.

The build system changes in this patch are substantively complicated by the fact that I have to implement these changes five times:

* OSS cmake build
* OSS Bazel build
* FB fbcode Buck build
* FB xplat Buck build (selective build)
* FB ovrsource Buck build

Due to technical limitations in the xplat Buck build related to selective build, it is required that you list every ufunc header manually (this is done in tools/build_variables.bzl).

The OSS cmake changes are entirely in cmake/Codegen.cmake; there is a new set of files cpu_vec_generated (corresponding to UfuncCPUKernel files) which is wired up in the same way as other files. These files are different because they need to get compiled multiple times under different vectorization settings. I adjust the codegen, slightly refactoring the inner loop into its own function so I can use different base path calculation depending on if the file is traditional (in the native/cpu folder) or generated (new stuff from this diff).

The Bazel/Buck changes are organized around tools/build_variables.bzl, which contains the canonical list of ufunc headers (aten_ufunc_headers), and tools/ufunc_defs.bzl (added to ShipIt export list in D34465699) which defines a number of functions that compute the generated cpu, cpu kernel and cuda files based on the headers list. For convenience, these functions take a genpattern (a string with a {} for interpolation) which can be used to easily reformat the list of formats in target form, which is commonly needed in the build systems.

The split between build_variables.bzl and ufunc_defs.bzl is required because build_variables.bzl is executed by a conventional Python interpreter as part of the OSS cmake, but we require Skylark features to implement the functions in ufunc_defs.bzl (I did some quick Googling but didn't find a lightweight way to run the Skylark interpreter in open source.)

With these new file lists, the rest of the build changes are mostly inserting references to these files wherever necessary; in particular, cpu kernel files have to be worked into the multiple vectorization build flow (intern_build_aten_ops in OSS Bazel). Most of the subtlety relates to selective build. Selective build requires operator files to be copied per overall selective build; as dhruvbird explains to me, glob expansion happens during the action graph phase, but the selective build handling of TEMPLATE_SOURCE_LIST is referencing the target graph. In other words, we can't use a glob to generate deps for another rule, because we need to copy files from wherever (including generated files) to a staging folder so the rules can pick them up.

It can be somewhat confusing to understand which bzl files are associated with which build. Here are the relevant mappings for files I edited:

* Used by everyone - tools/build_variables.bzl, tools/ufunc_defs.bzl
* OSS Bazel - aten.bzl, BUILD.bazel
* FB fbcode Buck - TARGETS
* FB xplat Buck - BUCK, pt_defs.bzl, pt_template_srcs.bzl
* FB ovrsource Buck - ovrsource_defs.bzl, pt_defs.bzl

Note that pt_defs.bzl is used by both xplat and ovrsource. This leads to the "tiresome" handling for enabled backends, as selective build is CPU only, but ovrsource is CPU and CUDA.

BTW, while I was at it, I beefed up fb/build_arvr.sh to also do a CUDA ovrsource build, which was not triggered previously.

Signed-off-by: Edward Z. Yang <ezyang@fb.com>

Test Plan: Imported from OSS

Reviewed By: albanD

Differential Revision: D31306586

Pulled By: ezyang

fbshipit-source-id: 210258ce83f578f79cf91b77bfaeac34945a00c6 (cherry picked from commit d65157b0b894b6701ee062f05a5f57790a06c91c)

|
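The CPU reindexing step in the ufunc codegen message above (from `UfuncKey`-indexed entries to a per-dtype table of `CPUScalar`/`CPUVector` ufuncs) can be sketched roughly as follows. This is an illustration under stated assumptions, not the code in `tools/codegen/dest/ufunc.py`: the function name `reindex_by_dtype` is invented, dtype groups are kept as opaque strings as in the message's example, and "later entries override earlier ones" is an assumed precedence order, since the message only says that some precedence order exists.

```python
# Lowering of user-facing keys to low-level CPU keys, as stated in the message:
# Generic maps to CPUScalar and CPUVector, ScalarOnly maps to CPUScalar only.
CPU_LOWERING = {
    "Generic": ("CPUScalar", "CPUVector"),
    "ScalarOnly": ("CPUScalar",),
}


def reindex_by_dtype(entries):
    """Turn [(ufunc_key, ufunc_name, dtypes), ...] into {dtype: {low_key: name}}.

    Assumption: entries are listed in increasing precedence, so a later entry
    overrides an earlier one for the same dtype and low-level key.
    """
    table = {}
    for ufunc_key, ufunc_name, dtypes in entries:
        for dtype in dtypes:
            slot = table.setdefault(dtype, {})
            for low_key in CPU_LOWERING[ufunc_key]:
                slot[low_key] = ufunc_name
    return table


# The native_functions.yaml example from the message, written as tuples.
entries = [
    ("Generic", "add", ["AllAndComplex", "BFloat16", "Half"]),
    ("ScalarOnly", "add", ["Bool"]),
]
table = reindex_by_dtype(entries)
for dtype, low_keys in table.items():
    print(dtype, low_keys)
```

A code generator can then walk this table once per dtype and emit a `cpu_kernel` call when only `CPUScalar` is present, or a `cpu_kernel_vec` call when `CPUVector` is present as well.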

|||

| 7a12b5063e |

[AutoAccept][Codemod][FBSourceBuckFormatLinter] Daily arc lint --take BUCKFORMAT

Reviewed By: zertosh Differential Revision: D33119794 fbshipit-source-id: ca327caf34560c0bba32511e57d5dc18b71bdfe1 |

|||

| 4829dcea09 |

Codegen: Generate seperate headers per operator (#68247)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/68247

This splits `Functions.h`, `Operators.h`, `NativeFunctions.h` and `NativeMetaFunctions.h` into separate headers per operator base name. With `at::sum` as an example, we can include:

```cpp
<ATen/core/sum.h>         // Like Functions.h
<ATen/core/sum_ops.h>     // Like Operators.h
<ATen/core/sum_native.h>  // Like NativeFunctions.h
<ATen/core/sum_meta.h>    // Like NativeMetaFunctions.h
```

The umbrella headers are still being generated, but all they do is include from the `ATen/ops` folder.

Further, `TensorBody.h` now only includes the operators that have method variants, which means files that only include `Tensor.h` don't need to be rebuilt when you modify function-only operators. Currently there are about 680 operators that don't have method variants, so this is potentially a significant win for incremental builds.

Test Plan: Imported from OSS

Reviewed By: mrshenli

Differential Revision: D32596272

Pulled By: albanD

fbshipit-source-id: 447671b2b6adc1364f66ed9717c896dae25fa272

|
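The naming scheme in the message above (one header per operator base name, with a suffix for each umbrella header it replaces) can be written down as a small lookup. This is only a sketch of the convention as the message describes it: `per_operator_headers` is a hypothetical helper, and the folder is left as a parameter because the message's include example is written with `ATen/core` while its prose names the `ATen/ops` folder.

```python
# Suffix appended to the operator base name for each umbrella header,
# taken from the at::sum example in the commit message.
UMBRELLA_TO_SUFFIX = {
    "Functions.h": "",
    "Operators.h": "_ops",
    "NativeFunctions.h": "_native",
    "NativeMetaFunctions.h": "_meta",
}


def per_operator_headers(base_name, folder):
    """Map each umbrella header to the per-operator header that replaces it."""
    return {
        umbrella: f"{folder}/{base_name}{suffix}.h"
        for umbrella, suffix in UMBRELLA_TO_SUFFIX.items()
    }


headers = per_operator_headers("sum", folder="ATen/ops")
for umbrella, path in headers.items():
    print(f"{umbrella:24} -> <{path}>")
```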

|||

| 9e53c823b8 |

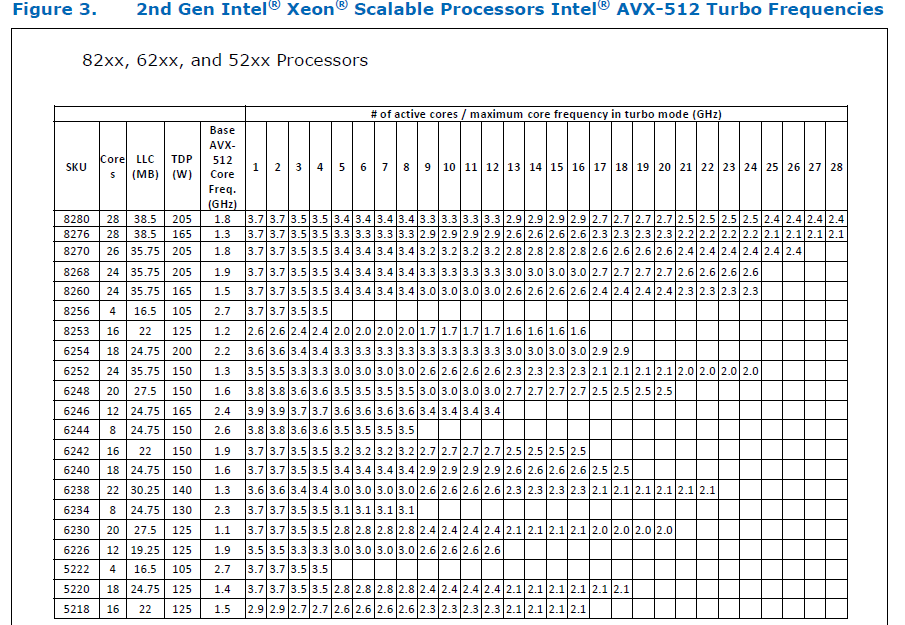

Add AVX512 support in ATen & remove AVX support (#61903)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/61903

### Remaining Tasks

- [ ] Collate results of benchmarks on two Intel Xeon machines (with & without CUDA, to check if CPU throttling causes issues with GPUs) - make graphs, including Roofline model plots (Intel Advisor can't make them with libgomp, though, but with Intel OpenMP).

### Summary

1. This draft PR produces binaries with 3 types of ATen kernels - default, AVX2, AVX512. Using the environment variable `ATEN_AVX512_256=TRUE` also results in 3 types of kernels, but the compiler can use 32 ymm registers for AVX2, instead of the default 16. ATen kernels for `CPU_CAPABILITY_AVX` have been removed.
2. `nansum` is not using the AVX512 kernel right now, as it has poorer accuracy for Float16 than does AVX2 or DEFAULT, whose respective accuracies aren't very good either (#59415). It was more convenient to disable AVX512 dispatch for all dtypes of `nansum` for now.
3. On Windows, ATen Quantized AVX512 kernels are not being used, as quantization tests are flaky. If `--continue-through-failure` is used, then `test_compare_model_outputs_functional_static` fails. But if this test is skipped, `test_compare_model_outputs_conv_static` fails. If both these tests are skipped, then a third one fails. These are hard to debug right now due to not having access to a Windows machine with AVX512 support, so it was more convenient to disable AVX512 dispatch of all ATen Quantized kernels on Windows for now.
4. One test is currently being skipped - [`test_lstm` in `quantization.bc`](https://github.com/pytorch/pytorch/issues/59098) - It fails only on Cascade Lake machines, irrespective of the `ATEN_CPU_CAPABILITY` used, because FBGEMM uses `AVX512_VNNI` on machines that support it. The value of `reduce_range` should be used as `False` on such machines.

The list of the changes is at https://gist.github.com/imaginary-person/4b4fda660534f0493bf9573d511a878d.

Credits to ezyang for proposing `AVX512_256` - these use AVX2 intrinsics but benefit from 32 registers, instead of the 16 ymm registers that AVX2 uses.

Credits to limo1996 for the initial proposal, and for optimizing `hsub_pd` & `hadd_pd`, which didn't have direct AVX512 equivalents, and are being used in some kernels. He also refactored `vec/functional.h` to remove duplicated code.

Credits to quickwritereader for helping fix 4 failing complex multiplication & division tests.

### Testing

1. `vec_test_all_types` was modified to test basic AVX512 support, as tests already existed for AVX2. Only one test had to be modified, as it was hardcoded for AVX2.
2. `pytorch_linux_bionic_py3_8_gcc9_coverage_test1` & `pytorch_linux_bionic_py3_8_gcc9_coverage_test2` are now using `linux.2xlarge` instances, as they support AVX512. They were used for testing AVX512 kernels, as AVX512 kernels are being used by default in both of the CI checks. Windows CI checks had already been using machines with AVX512 support.

### Would the downclocking caused by AVX512 pose an issue?

I think it's important to note that AVX2 causes downclocking as well, and the additional downclocking caused by AVX512 may not hamper performance on some Skylake machines & beyond, because of the double vector-size. I think that [this post with verifiable references is a must-read](https://community.intel.com/t5/Software-Tuning-Performance/Unexpected-power-vs-cores-profile-for-MKL-kernels-on-modern-Xeon/m-p/1133869/highlight/true#M6450). Also, AVX512 would _probably not_ hurt performance on a high-end machine, [but measurements are recommended](https://lemire.me/blog/2018/09/07/avx-512-when-and-how-to-use-these-new-instructions/). In case it does, `ATEN_AVX512_256=TRUE` can be used for building PyTorch, as AVX2 can then use 32 ymm registers instead of the default 16. [FBGEMM uses `AVX512_256` only on Xeon D processors](https://github.com/pytorch/FBGEMM/pull/209), which are said to have poor AVX512 performance.

This [official data](https://www.intel.com/content/dam/www/public/us/en/documents/specification-updates/xeon-scalable-spec-update.pdf) is for the Intel Skylake family, and the first link helps understand its significance. Cascade Lake & Ice Lake SP Xeon processors are said to be even better when it comes to AVX512 performance.

Here is the corresponding data for [Cascade Lake](https://cdrdv2.intel.com/v1/dl/getContent/338848).

The corresponding data isn't publicly available for Intel Xeon SP 3rd gen (Ice Lake SP), but [Intel mentioned that the 3rd gen has frequency improvements pertaining to AVX512](https://newsroom.intel.com/wp-content/uploads/sites/11/2021/04/3rd-Gen-Intel-Xeon-Scalable-Platform-Press-Presentation-281884.pdf). Ice Lake SP machines also have 48 KB L1D caches, so that's another reason for AVX512 performance to be better on them.

### Is PyTorch always faster with AVX512?

No, but then PyTorch is not always faster with AVX2 either. Please refer to #60202. The benefit from vectorization is apparent with small tensors that fit in caches or in kernels that are more compute heavy. For instance, AVX512 or AVX2 would yield no benefit for adding two 64 MB tensors, but adding two 1 MB tensors would do well with AVX2, and even more so with AVX512.

It seems that memory-bound computations, such as adding two 64 MB tensors, can be slow with vectorization (depending upon the number of threads used), as the effects of downclocking can then be observed.

Original pull request: https://github.com/pytorch/pytorch/pull/56992

Reviewed By: soulitzer

Differential Revision: D29266289

Pulled By: ezyang

fbshipit-source-id: 2d5e8d1c2307252f22423bbc14f136c67c3e6184

|

|||

| 684589e8e0 |

[codemod][fbcode][1/n] Apply buildifier

Test Plan: Manual inspection. Sandcastle. Reviewed By: karlodwyer, zsol Differential Revision: D27702434 fbshipit-source-id: ee7498331c51daf44a29f2de452e3b02488b9af3 |

|||

| ebcacd5e87 |

[Bazel] Build ATen_CPU_AVX2 lib with AVX2 arch flags enabled (#37381)

Summary:

Make sleef dependency public so that `ATen_CPU_{capability}` libs can depend on it

Pull Request resolved: https://github.com/pytorch/pytorch/pull/37381

Test Plan: CI

Differential Revision: D21273443

Pulled By: malfet

fbshipit-source-id: 7f756c7f3c605e51cf0c27ea37f687913cd48708

|

|||

| 447bcd341d |

Bazel build of pytorch with gating CI (#36011)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/36011 Differential Revision: D20873430 Pulled By: malfet fbshipit-source-id: 8ffffd10ca0ff8bdab578a70a9b2b777aed985d0 |