mirror of

https://github.com/huggingface/peft.git

synced 2025-10-20 23:43:47 +08:00

Compare commits

294 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

| 0649947396 | |||

| b5acf5d6be | |||

| 748f7968f3 | |||

| 47b3712898 | |||

| 2558dd872d | |||

| 6f41990da4 | |||

| d8fec400c7 | |||

| 32f3878870 | |||

| cb08d095a5 | |||

| 86d086ec37 | |||

| 02ae6bcb37 | |||

| 77b7238b90 | |||

| 3edcebf713 | |||

| e0cb15e2ee | |||

| 3ec55f4ac4 | |||

| 608a90ded9 | |||

| e19f7bf424 | |||

| 250b7eb85f | |||

| f5f7b67d60 | |||

| 7a22b7daf0 | |||

| e7b47ac01d | |||

| 8bc3c0861d | |||

| 383e1fab0e | |||

| d0fa70aeb6 | |||

| b1d6c77108 | |||

| f0d3c6b892 | |||

| 3d9529d190 | |||

| 835181460c | |||

| 3d6520e2eb | |||

| 5a4b9cade6 | |||

| 144b7345c2 | |||

| bdb856786e | |||

| ed865e2812 | |||

| 56773b9a92 | |||

| c8974c5880 | |||

| 7671926243 | |||

| 9f0cfc9919 | |||

| 811169939f | |||

| b0f1bb468c | |||

| 31c884e934 | |||

| 88875f1cf5 | |||

| 0d283ae0e6 | |||

| e07095a654 | |||

| 5b60ec0204 | |||

| dfac641c63 | |||

| 26726bf1dd | |||

| 16ec3f995a | |||

| ca6bbb594f | |||

| 2e821c1dc8 | |||

| 8452d71e14 | |||

| 02b5aeddf9 | |||

| c4c826c0c0 | |||

| d582b68c7f | |||

| 4537317961 | |||

| ffb8512396 | |||

| 78daa4cf76 | |||

| 65b75a6798 | |||

| a62b337940 | |||

| 8221246f2f | |||

| 8e979fc732 | |||

| a86b29a217 | |||

| 8dd45b75d7 | |||

| 91e4b0879d | |||

| a18734d87a | |||

| 6008f272a5 | |||

| a9425d1409 | |||

| 3b63996964 | |||

| 3eb6bbacee | |||

| 5471c9a1be | |||

| d28fffb917 | |||

| 6dca6d2292 | |||

| a1fe368bfc | |||

| 234bbabd9b | |||

| e3840c249e | |||

| 7e84dec20b | |||

| e7e95c004b | |||

| e597388305 | |||

| 7662f342e0 | |||

| b58b13b528 | |||

| 98f4db2c79 | |||

| c43cc5028e | |||

| 84abf5a5ab | |||

| 34f3fba2b3 | |||

| 9119b780eb | |||

| 7e5335d093 | |||

| 096fe53737 | |||

| 90aa2c1e05 | |||

| 01732176e0 | |||

| aa2ca83ca7 | |||

| 1b3b7b5b2a | |||

| bc9426f10b | |||

| 3967fcc8ea | |||

| 23213cad8d | |||

| 2efc36ccdf | |||

| cc27cfd478 | |||

| b74c2f644d | |||

| 470b66c639 | |||

| f81147268e | |||

| 37dd675f91 | |||

| 7b7e4b2194 | |||

| 043d5c0bd6 | |||

| 8a0dce2fb9 | |||

| ede3c7df22 | |||

| cf467d8aa0 | |||

| 47c4d9578c | |||

| 65513e5db4 | |||

| f5a95930c2 | |||

| 963e3128ed | |||

| 8db74d42c4 | |||

| a564779b67 | |||

| cde8f1af2b | |||

| 25dec602f3 | |||

| 83de1af281 | |||

| 5f2084698b | |||

| e95dc1360b | |||

| 234774345b | |||

| 7d0c0a33d3 | |||

| 7716dd86e9 | |||

| 60ec4d8502 | |||

| 6e953810af | |||

| a1c472f08f | |||

| 055e4dbe1e | |||

| c1a83fd692 | |||

| 7da7f85188 | |||

| eba459553c | |||

| 9bb83ed1a5 | |||

| b5492db514 | |||

| ddf90a8b2f | |||

| 97f3ed577e | |||

| 17273aa4bf | |||

| fc78a2491e | |||

| 497bbeafbd | |||

| 21d8d467dc | |||

| e805a3173a | |||

| 9350ab8a9d | |||

| 912ad41e96 | |||

| ce925d844a | |||

| d1be2696fd | |||

| 4da2876a10 | |||

| a30e006bb2 | |||

| fff24008eb | |||

| dffde4537f | |||

| 9d943672c0 | |||

| 1a7f3e3478 | |||

| 68b90a14d7 | |||

| 75e4ef3536 | |||

| 5e4aa7eb92 | |||

| 5eb5b492af | |||

| a2d96d097a | |||

| 30889ef260 | |||

| 67918efb49 | |||

| 189a9a666d | |||

| bfc102c0c0 | |||

| 1c1c7fdaa6 | |||

| 4a15595822 | |||

| bb2471d926 | |||

| 54ca31153d | |||

| ebbff4023a | |||

| 62237dc9b1 | |||

| eaa5eef28e | |||

| bf54136a79 | |||

| a43ec59762 | |||

| 0089ebd272 | |||

| fe01d6de85 | |||

| f9b673ea37 | |||

| dc28a61e82 | |||

| 71585d611f | |||

| c6bcf91ca1 | |||

| 4354a7d496 | |||

| f36f50acb4 | |||

| 777c0b6ad7 | |||

| 6451cbd70c | |||

| 7d28536b18 | |||

| eb2c12d99a | |||

| c6b28a22b8 | |||

| e96eef9ea1 | |||

| 54ee2fb1af | |||

| cbd783b4df | |||

| 26504a0119 | |||

| 4186c9b104 | |||

| 8665e2b571 | |||

| cbf346d962 | |||

| 2a0fb71f4f | |||

| c4cf9e7d3b | |||

| cf04d0353f | |||

| 4023da904f | |||

| 6fe1aac65d | |||

| 799420aef1 | |||

| 993836ff90 | |||

| 1c9679ac71 | |||

| e745ffd7d0 | |||

| 029dcd5a1c | |||

| 482a2a6d9a | |||

| 119de1715c | |||

| a0a46c06db | |||

| 3708793ba9 | |||

| 46a84bd395 | |||

| bd544bb2ce | |||

| 55c37e9c0b | |||

| 997e6ec5ab | |||

| ddb114af0a | |||

| 4b02148af2 | |||

| 0f1e9091cc | |||

| 88e2e75cc3 | |||

| c9df262d69 | |||

| 67a08009ff | |||

| 971dd6e815 | |||

| ee6f6dcee7 | |||

| 21c304f6f6 | |||

| e73967edea | |||

| b08e6faf2b | |||

| 5c13ea3b12 | |||

| 00b820061e | |||

| 504d3c8329 | |||

| fc9f4b3176 | |||

| 895513c465 | |||

| c893394808 | |||

| 86562eec49 | |||

| b467e3de5c | |||

| 2ab005f3ab | |||

| b482391b80 | |||

| d56df7fc64 | |||

| a87ff4c744 | |||

| 2665f80a17 | |||

| 9fd788bedb | |||

| 2336780f9e | |||

| c22a8e5d47 | |||

| 1a7433b136 | |||

| 70d559d029 | |||

| bffbbbf76a | |||

| 9c70468a3c | |||

| f7cf460f7c | |||

| 1b1091c158 | |||

| c456d55216 | |||

| e05b2670c5 | |||

| 5ed46e4f04 | |||

| 5bad88ba04 | |||

| 6a57472665 | |||

| da17ac0f48 | |||

| 2674f5ea66 | |||

| 2b901ee572 | |||

| 8298f1a366 | |||

| f0fb9516d8 | |||

| 04c411010b | |||

| da29ae62d4 | |||

| 64c8d1da85 | |||

| e586f96740 | |||

| e35d46de19 | |||

| b4faffea8a | |||

| 19145bba8a | |||

| c0dd27bc97 | |||

| fb607d00ad | |||

| a634f6a13e | |||

| dd4771b2f4 | |||

| 043238578f | |||

| b4ac2d840b | |||

| 0ae52fece1 | |||

| 8351331d78 | |||

| f1ecfa6ae6 | |||

| b5a8a294ed | |||

| 9cdaed2769 | |||

| 18a0910113 | |||

| 99e1a55f54 | |||

| 21df968fd1 | |||

| 5a3a5acff2 | |||

| 70302d7b4f | |||

| 3ff90626b6 | |||

| 1877329093 | |||

| 98429b8184 | |||

| d350a00ece | |||

| ad756173f1 | |||

| 94877b5008 | |||

| f020404ee6 | |||

| 79298c7c24 | |||

| b25ce8a0cd | |||

| 5d84484079 | |||

| 49ddefa834 | |||

| 3af469eeea | |||

| 5e7e5ad836 | |||

| 9d8287f3e3 | |||

| 2efd02769b | |||

| 669dd4edeb | |||

| b5641cc744 | |||

| c5d94855cd | |||

| face67dfeb | |||

| d9094cebea | |||

| 493ae58beb | |||

| ed4ce9fc94 | |||

| 4c48970cb0 | |||

| 46e03602ed | |||

| 45343a4ccc | |||

| 276c91b143 | |||

| cfe35a7878 | |||

| d47d23aa0e |

21

.github/ISSUE_TEMPLATE/bug-report.yml

vendored


@@ -15,17 +15,17 @@ body:

attributes:

label: Who can help?

description: |

Your issue will be replied to more quickly if you can figure out the right person to tag with @

Your issue will be replied to more quickly if you can figure out the right person to tag with @.

If you know how to use git blame, that is the easiest way, otherwise, here is a rough guide of **who to tag**.

All issues are read by one of the core maintainers, so if you don't know who to tag, just leave this blank and

a core maintainer will ping the right person.

Please tag fewer than 3 people.

Library: @pacman100 @younesbelkada @sayakpaul

Documentation: @stevhliu and @MKhalusova

Library: @pacman100 @younesbelkada @benjaminbossan @sayakpaul

Documentation: @stevhliu

placeholder: "@Username ..."

@@ -55,12 +55,11 @@ body:

label: Reproduction

description: |

Please provide a code sample that reproduces the problem you ran into. It can be a Colab link or just a code snippet.

Please provide the simplest reproducer as possible so that we can quickly fix the issue.

Please provide the simplest reproducer as possible so that we can quickly fix the issue. When you paste

the error message, please include the full traceback.

placeholder: |

Reproducer:

Reproducer:

- type: textarea

id: expected-behavior

143

.github/workflows/build_docker_images.yml

vendored


@@ -10,6 +10,9 @@ concurrency:

group: docker-image-builds

cancel-in-progress: false

env:

CI_SLACK_CHANNEL: ${{ secrets.CI_DOCKER_CHANNEL }}

jobs:

latest-cpu:

name: "Latest Peft CPU [dev]"

@@ -42,6 +45,15 @@ jobs:

push: true

tags: huggingface/peft-cpu

- name: Post to Slack

if: always()

uses: huggingface/hf-workflows/.github/actions/post-slack@main

with:

slack_channel: "C06LKJB31RU"

title: 🤗 Results of the PEFT-CPU docker build

status: ${{ job.status }}

slack_token: ${{ secrets.SLACK_CIFEEDBACK_BOT_TOKEN }}

latest-cuda:

name: "Latest Peft GPU [dev]"

runs-on: ubuntu-latest

@@ -72,3 +84,134 @@ jobs:

context: ./docker/peft-gpu

push: true

tags: huggingface/peft-gpu

- name: Post to Slack

if: always()

uses: huggingface/hf-workflows/.github/actions/post-slack@main

with:

slack_channel: "C06LKJB31RU"

title: 🤗 Results of the PEFT-GPU docker build

status: ${{ job.status }}

slack_token: ${{ secrets.SLACK_CIFEEDBACK_BOT_TOKEN }}

latest-cuda-bnb-source:

name: "Latest Peft GPU + bnb source [dev]"

runs-on: ubuntu-latest

steps:

- name: Cleanup disk

run: |

sudo ls -l /usr/local/lib/

sudo ls -l /usr/share/

sudo du -sh /usr/local/lib/

sudo du -sh /usr/share/

sudo rm -rf /usr/local/lib/android

sudo rm -rf /usr/share/dotnet

sudo du -sh /usr/local/lib/

sudo du -sh /usr/share/

- name: Set up Docker Buildx

uses: docker/setup-buildx-action@v1

- name: Check out code

uses: actions/checkout@v3

- name: Login to DockerHub

uses: docker/login-action@v1

with:

username: ${{ secrets.DOCKERHUB_USERNAME }}

password: ${{ secrets.DOCKERHUB_PASSWORD }}

- name: Build and Push GPU

uses: docker/build-push-action@v4

with:

context: ./docker/peft-gpu-bnb-source

push: true

tags: huggingface/peft-gpu-bnb-source

- name: Post to Slack

if: always()

uses: huggingface/hf-workflows/.github/actions/post-slack@main

with:

slack_channel: "C06LKJB31RU"

title: 🤗 Results of the PEFT-GPU (bnb source / HF latest) docker build

status: ${{ job.status }}

slack_token: ${{ secrets.SLACK_CIFEEDBACK_BOT_TOKEN }}

latest-cuda-bnb-source-latest:

name: "Latest Peft GPU + bnb source [accelerate / peft / transformers latest]"

runs-on: ubuntu-latest

steps:

- name: Cleanup disk

run: |

sudo ls -l /usr/local/lib/

sudo ls -l /usr/share/

sudo du -sh /usr/local/lib/

sudo du -sh /usr/share/

sudo rm -rf /usr/local/lib/android

sudo rm -rf /usr/share/dotnet

sudo du -sh /usr/local/lib/

sudo du -sh /usr/share/

- name: Set up Docker Buildx

uses: docker/setup-buildx-action@v1

- name: Check out code

uses: actions/checkout@v3

- name: Login to DockerHub

uses: docker/login-action@v1

with:

username: ${{ secrets.DOCKERHUB_USERNAME }}

password: ${{ secrets.DOCKERHUB_PASSWORD }}

- name: Build and Push GPU

uses: docker/build-push-action@v4

with:

context: ./docker/peft-gpu-bnb-latest

push: true

tags: huggingface/peft-gpu-bnb-latest

- name: Post to Slack

if: always()

uses: huggingface/hf-workflows/.github/actions/post-slack@main

with:

slack_channel: "C06LKJB31RU"

title: 🤗 Results of the PEFT-GPU (bnb source / HF source) docker build

status: ${{ job.status }}

slack_token: ${{ secrets.SLACK_CIFEEDBACK_BOT_TOKEN }}

latest-cuda-bnb-source-multi:

name: "Latest Peft GPU + bnb (multi-backend) source [accelerate / peft / transformers source]"

runs-on: ubuntu-latest

steps:

- name: Cleanup disk

run: |

sudo ls -l /usr/local/lib/

sudo ls -l /usr/share/

sudo du -sh /usr/local/lib/

sudo du -sh /usr/share/

sudo rm -rf /usr/local/lib/android

sudo rm -rf /usr/share/dotnet

sudo du -sh /usr/local/lib/

sudo du -sh /usr/share/

- name: Set up Docker Buildx

uses: docker/setup-buildx-action@v1

- name: Check out code

uses: actions/checkout@v3

- name: Login to DockerHub

uses: docker/login-action@v1

with:

username: ${{ secrets.DOCKERHUB_USERNAME }}

password: ${{ secrets.DOCKERHUB_PASSWORD }}

- name: Build and Push GPU

uses: docker/build-push-action@v4

with:

context: ./docker/peft-gpu-bnb-multi-source

push: true

tags: huggingface/peft-gpu-bnb-multi-source

- name: Post to Slack

if: always()

uses: huggingface/hf-workflows/.github/actions/post-slack@main

with:

slack_channel: "C06LKJB31RU"

title: 🤗 Results of the PEFT-GPU (bnb source multi-backend / HF latest) docker build

status: ${{ job.status }}

slack_token: ${{ secrets.SLACK_CIFEEDBACK_BOT_TOKEN }}

3

.github/workflows/build_documentation.yml

vendored


@@ -14,6 +14,7 @@ jobs:

commit_sha: ${{ github.sha }}

package: peft

notebook_folder: peft_docs

custom_container: huggingface/transformers-doc-builder

secrets:

token: ${{ secrets.HUGGINGFACE_PUSH }}

hf_token: ${{ secrets.HF_DOC_BUILD_PUSH }}

hf_token: ${{ secrets.HF_DOC_BUILD_PUSH }}

1

.github/workflows/build_pr_documentation.yml

vendored


@@ -14,3 +14,4 @@ jobs:

commit_sha: ${{ github.event.pull_request.head.sha }}

pr_number: ${{ github.event.number }}

package: peft

custom_container: huggingface/transformers-doc-builder

14

.github/workflows/delete_doc_comment.yml

vendored


@@ -1,14 +0,0 @@

name: Delete doc comment

on:

workflow_run:

workflows: ["Delete doc comment trigger"]

types:

- completed

jobs:

delete:

uses: huggingface/doc-builder/.github/workflows/delete_doc_comment.yml@main

secrets:

comment_bot_token: ${{ secrets.COMMENT_BOT_TOKEN }}

12

.github/workflows/delete_doc_comment_trigger.yml

vendored


@@ -1,12 +0,0 @@

name: Delete doc comment trigger

on:

pull_request:

types: [ closed ]

jobs:

delete:

uses: huggingface/doc-builder/.github/workflows/delete_doc_comment_trigger.yml@main

with:

pr_number: ${{ github.event.number }}

133

.github/workflows/nightly-bnb.yml

vendored

Normal file


@@ -0,0 +1,133 @@

name: BNB from source self-hosted runner with slow tests (scheduled)

on:

workflow_dispatch:

schedule:

- cron: "0 2 * * *"

env:

RUN_SLOW: "yes"

IS_GITHUB_CI: "1"

# To be able to run tests on CUDA 12.2

NVIDIA_DISABLE_REQUIRE: "1"

SLACK_API_TOKEN: ${{ secrets.SLACK_API_TOKEN }}

jobs:

run_all_tests_single_gpu:

strategy:

fail-fast: false

matrix:

docker-image-name: ["huggingface/peft-gpu-bnb-source:latest", "huggingface/peft-gpu-bnb-latest:latest", "huggingface/peft-gpu-bnb-multi-source:latest"]

runs-on: [self-hosted, single-gpu, nvidia-gpu, t4, ci]

env:

CUDA_VISIBLE_DEVICES: "0"

TEST_TYPE: "single_gpu_${{ matrix.docker-image-name }}"

container:

image: ${{ matrix.docker-image-name }}

options: --gpus all --shm-size "16gb" --ipc host -v /mnt/cache/.cache/huggingface:/mnt/cache/

defaults:

run:

shell: bash

steps:

- uses: actions/checkout@v3

- name: Pip install

run: |

source activate peft

pip install -e . --no-deps

pip install pytest-reportlog pytest-cov parameterized datasets scipy einops

pip install "pytest>=7.2.0,<8.0.0" # see: https://github.com/huggingface/transformers/blob/ce4fff0be7f6464d713f7ac3e0bbaafbc6959ae5/setup.py#L148C6-L148C26

mkdir transformers-clone && git clone https://github.com/huggingface/transformers.git transformers-clone # rename to transformers clone to avoid modules conflict

if [ "${{ matrix.docker-image-name }}" == "huggingface/peft-gpu-bnb-latest:latest" ]; then

cd transformers-clone

transformers_version=$(pip show transformers | grep '^Version:' | cut -d ' ' -f2 | sed 's/\.dev0//')

echo "Checking out tag for Transformers version: v$transformers_version"

git fetch --tags

git checkout tags/v$transformers_version

cd ..

fi

- name: Run examples on single GPU

if: always()

run: |

source activate peft

make tests_examples_single_gpu_bnb

- name: Run core tests on single GPU

if: always()

run: |

source activate peft

make tests_core_single_gpu_bnb

- name: Run transformers tests on single GPU

if: always()

run: |

source activate peft

make transformers_tests

- name: Generate Report

if: always()

run: |

pip install slack_sdk tabulate

python scripts/log_reports.py --slack_channel_name bnb-daily-ci-collab >> $GITHUB_STEP_SUMMARY

run_all_tests_multi_gpu:

strategy:

fail-fast: false

matrix:

docker-image-name: ["huggingface/peft-gpu-bnb-source:latest", "huggingface/peft-gpu-bnb-latest:latest", "huggingface/peft-gpu-bnb-multi-source:latest"]

runs-on: [self-hosted, multi-gpu, nvidia-gpu, t4, ci]

env:

CUDA_VISIBLE_DEVICES: "0,1"

TEST_TYPE: "multi_gpu_${{ matrix.docker-image-name }}"

container:

image: ${{ matrix.docker-image-name }}

options: --gpus all --shm-size "16gb" --ipc host -v /mnt/cache/.cache/huggingface:/mnt/cache/

defaults:

run:

shell: bash

steps:

- uses: actions/checkout@v3

- name: Pip install

run: |

source activate peft

pip install -e . --no-deps

pip install pytest-reportlog pytest-cov parameterized datasets scipy einops

pip install "pytest>=7.2.0,<8.0.0" # see: https://github.com/huggingface/transformers/blob/ce4fff0be7f6464d713f7ac3e0bbaafbc6959ae5/setup.py#L148C6-L148C26

mkdir transformers-clone && git clone https://github.com/huggingface/transformers.git transformers-clone

if [ "${{ matrix.docker-image-name }}" == "huggingface/peft-gpu-bnb-latest:latest" ]; then

cd transformers-clone

transformers_version=$(pip show transformers | grep '^Version:' | cut -d ' ' -f2 | sed 's/\.dev0//')

echo "Checking out tag for Transformers version: v$transformers_version"

git fetch --tags

git checkout tags/v$transformers_version

cd ..

fi

- name: Run core GPU tests on multi-gpu

if: always()

run: |

source activate peft

- name: Run examples on multi GPU

if: always()

run: |

source activate peft

make tests_examples_multi_gpu_bnb

- name: Run core tests on multi GPU

if: always()

run: |

source activate peft

make tests_core_multi_gpu_bnb

- name: Run transformers tests on multi GPU

if: always()

run: |

source activate peft

make transformers_tests

- name: Generate Report

if: always()

run: |

pip install slack_sdk tabulate

python scripts/log_reports.py --slack_channel_name bnb-daily-ci-collab >> $GITHUB_STEP_SUMMARY
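
The `Pip install` step in the workflow above derives a git tag from the installed Transformers version with a `grep | cut | sed` pipeline. As a rough illustration of that string handling only, here is a minimal Python sketch. It is not part of the workflow; the helper names `parse_pip_show` and `tag_for_version` and the sample version string are hypothetical.

```python
def parse_pip_show(output: str) -> str:
    """Return the value of the 'Version:' line from `pip show`-style output.

    Mirrors `grep '^Version:' | cut -d ' ' -f2`: take the second
    space-separated field of the line that starts with 'Version:'.
    """
    for line in output.splitlines():
        if line.startswith("Version:"):
            return line.split(" ")[1]
    raise ValueError("no 'Version:' line found")


def tag_for_version(version: str) -> str:
    """Build the tag name that the script passes to `git checkout tags/...`.

    Mirrors `sed 's/\\.dev0//'` (remove the first '.dev0') and the 'v' prefix.
    """
    release = version.replace(".dev0", "", 1)
    return f"v{release}"


# Hypothetical sample of `pip show transformers` output, for illustration only.
sample_output = "Name: transformers\nVersion: 4.38.0.dev0\nSummary: example\n"
tag = tag_for_version(parse_pip_show(sample_output))
print(tag)
```

A version without a `.dev0` suffix passes through unchanged apart from the `v` prefix, which matches what the `sed` expression does in the shell script.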

25

.github/workflows/nightly.yml

vendored


@@ -15,7 +15,9 @@ env:

jobs:

run_all_tests_single_gpu:

runs-on: [self-hosted, docker-gpu, multi-gpu]

strategy:

fail-fast: false

runs-on: [self-hosted, single-gpu, nvidia-gpu, t4, ci]

env:

CUDA_VISIBLE_DEVICES: "0"

TEST_TYPE: "single_gpu"

@@ -24,14 +26,12 @@ jobs:

options: --gpus all --shm-size "16gb" -e NVIDIA_DISABLE_REQUIRE=true

defaults:

run:

working-directory: peft/

shell: bash

steps:

- name: Update clone & pip install

- uses: actions/checkout@v3

- name: Pip install

run: |

source activate peft

git config --global --add safe.directory '*'

git fetch && git checkout ${{ github.sha }}

pip install -e . --no-deps

pip install pytest-reportlog

@@ -49,6 +49,11 @@ jobs:

run: |

source activate peft

make tests_core_single_gpu

- name: Run regression tests on single GPU

run: |

source activate peft

make tests_regression

- name: Generate Report

if: always()

@@ -57,7 +62,9 @@ jobs:

python scripts/log_reports.py >> $GITHUB_STEP_SUMMARY

run_all_tests_multi_gpu:

runs-on: [self-hosted, docker-gpu, multi-gpu]

strategy:

fail-fast: false

runs-on: [self-hosted, multi-gpu, nvidia-gpu, t4, ci]

env:

CUDA_VISIBLE_DEVICES: "0,1"

TEST_TYPE: "multi_gpu"

@@ -66,14 +73,12 @@ jobs:

options: --gpus all --shm-size "16gb" -e NVIDIA_DISABLE_REQUIRE=true

defaults:

run:

working-directory: peft/

shell: bash

steps:

- name: Update clone

- uses: actions/checkout@v3

- name: Pip install

run: |

source activate peft

git config --global --add safe.directory '*'

git fetch && git checkout ${{ github.sha }}

pip install -e . --no-deps

pip install pytest-reportlog

59

.github/workflows/test-docker-build.yml

vendored

Normal file


@@ -0,0 +1,59 @@

name: Test Docker images (on PR)

on:

pull_request:

paths:

# Run only when DockerFile files are modified

- "docker/**"

jobs:

get_changed_files:

name: "Build all modified docker images"

runs-on: ubuntu-latest

outputs:

matrix: ${{ steps.set-matrix.outputs.matrix }}

steps:

- name: Check out code

uses: actions/checkout@v3

- name: Get changed files

id: changed-files

uses: tj-actions/changed-files@1c8e6069583811afb28f97afeaf8e7da80c6be5c #v42

with:

files: docker/**

json: "true"

- name: Run step if only the files listed above change

if: steps.changed-files.outputs.any_changed == 'true'

id: set-matrix

env:

ALL_CHANGED_FILES: ${{ steps.changed-files.outputs.all_changed_files }}

run: |

echo "matrix=${{ steps.changed-files.outputs.all_changed_files}}" >> $GITHUB_OUTPUT

build_modified_files:

needs: get_changed_files

name: Build Docker images on modified files

runs-on: ubuntu-latest

if: ${{ needs.get_changed_files.outputs.matrix }} != ''

strategy:

fail-fast: false

matrix:

docker-file: ${{ fromJson(needs.get_changed_files.outputs.matrix) }}

steps:

- name: Cleanup disk

run: |

sudo ls -l /usr/local/lib/

sudo ls -l /usr/share/

sudo du -sh /usr/local/lib/

sudo du -sh /usr/share/

sudo rm -rf /usr/local/lib/android

sudo rm -rf /usr/share/dotnet

sudo du -sh /usr/local/lib/

sudo du -sh /usr/share/

- name: Set up Docker Buildx

uses: docker/setup-buildx-action@v1

- name: Check out code

uses: actions/checkout@v3

- name: Build Docker image

uses: docker/build-push-action@v4

with:

file: ${{ matrix.docker-file }}

context: .

push: False
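
The workflow above passes the changed-file list from one job to the next as a JSON string and expands it with `fromJson` into a one-dimensional matrix keyed by `docker-file`, so each changed file gets its own build job. The Python sketch below mimics that expansion for illustration. The function name `expand_matrix` and the example paths are hypothetical, and it assumes the first job's output is a JSON array of path strings.

```python
import json


def expand_matrix(changed_files_json: str) -> list[dict[str, str]]:
    """Turn a JSON array of paths into one matrix entry per path.

    Each entry corresponds to one job in which `matrix.docker-file`
    would hold the given path.
    """
    paths = json.loads(changed_files_json)
    return [{"docker-file": path} for path in paths]


# Hypothetical example input, not taken from a real workflow run.
example_output = '["docker/peft-cpu/Dockerfile", "docker/peft-gpu/Dockerfile"]'
jobs = expand_matrix(example_output)
for job in jobs:
    print(job["docker-file"])
```

An empty JSON array yields no entries, which is the case the job-level `if:` in the workflow is meant to guard against.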

28

.github/workflows/tests-main.yml

vendored

Normal file


@@ -0,0 +1,28 @@

name: tests on transformers main

on:

push:

branches: [main]

paths-ignore:

- 'docs/**'

jobs:

tests:

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v3

- name: Set up Python 3.11

uses: actions/setup-python@v4

with:

python-version: 3.11

cache: "pip"

cache-dependency-path: "setup.py"

- name: Install dependencies

run: |

python -m pip install --upgrade pip

# cpu version of pytorch

pip install -U git+https://github.com/huggingface/transformers.git

pip install -e .[test]

- name: Test with pytest

run: |

make test

8

.github/workflows/tests.yml

vendored


@@ -3,7 +3,11 @@ name: tests

on:

push:

branches: [main]

paths-ignore:

- 'docs/**'

pull_request:

paths-ignore:

- 'docs/**'

jobs:

check_code_quality:

@@ -28,8 +32,8 @@ jobs:

needs: check_code_quality

strategy:

matrix:

python-version: ["3.8", "3.9", "3.10"]

os: ["ubuntu-latest", "macos-latest", "windows-latest"]

python-version: ["3.8", "3.9", "3.10", "3.11"]

os: ["ubuntu-latest", "macos-12", "windows-latest"]

runs-on: ${{ matrix.os }}

steps:

- uses: actions/checkout@v3

2

.gitignore

vendored


@@ -138,4 +138,4 @@ dmypy.json

.DS_Store

# More test things

wandb

wandb

13

.pre-commit-config.yaml

Normal file


@@ -0,0 +1,13 @@

repos:

- repo: https://github.com/astral-sh/ruff-pre-commit

rev: v0.2.1

hooks:

- id: ruff

args:

- --fix

- id: ruff-format

- repo: https://github.com/pre-commit/pre-commit-hooks

rev: v4.5.0

hooks:

- id: check-merge-conflict

- id: check-yaml

25

Makefile


@@ -1,19 +1,19 @@

.PHONY: quality style test docs

check_dirs := src tests examples docs

check_dirs := src tests examples docs scripts docker

# Check that source code meets quality standards

# this target runs checks on all files

quality:

black --check $(check_dirs)

ruff $(check_dirs)

ruff format --check $(check_dirs)

doc-builder style src/peft tests docs/source --max_len 119 --check_only

# Format source code automatically and check is there are any problems left that need manual fixing

style:

black $(check_dirs)

ruff $(check_dirs) --fix

ruff format $(check_dirs)

doc-builder style src/peft tests docs/source --max_len 119

test:

@@ -34,3 +34,22 @@ tests_core_single_gpu:

tests_common_gpu:

python -m pytest tests/test_decoder_models.py $(if $(IS_GITHUB_CI),--report-log "common_decoder.log",)

python -m pytest tests/test_encoder_decoder_models.py $(if $(IS_GITHUB_CI),--report-log "common_encoder_decoder.log",)

tests_examples_multi_gpu_bnb:

python -m pytest -m "multi_gpu_tests and bitsandbytes" tests/test_gpu_examples.py $(if $(IS_GITHUB_CI),--report-log "multi_gpu_examples.log",)

tests_examples_single_gpu_bnb:

python -m pytest -m "single_gpu_tests and bitsandbytes" tests/test_gpu_examples.py $(if $(IS_GITHUB_CI),--report-log "single_gpu_examples.log",)

tests_core_multi_gpu_bnb:

python -m pytest -m "multi_gpu_tests and bitsandbytes" tests/test_common_gpu.py $(if $(IS_GITHUB_CI),--report-log "core_multi_gpu.log",)

tests_core_single_gpu_bnb:

python -m pytest -m "single_gpu_tests and bitsandbytes" tests/test_common_gpu.py $(if $(IS_GITHUB_CI),--report-log "core_single_gpu.log",)

# For testing transformers tests for bnb runners

transformers_tests:

RUN_SLOW=1 python -m pytest transformers-clone/tests/quantization/bnb $(if $(IS_GITHUB_CI),--report-log "transformers_tests.log",)

tests_regression:

python -m pytest -s --regression tests/regression/ $(if $(IS_GITHUB_CI),--report-log "regression_tests.log",)
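
The new Makefile targets above append `--report-log "<name>.log"` only when `IS_GITHUB_CI` is set, using GNU Make's `$(if condition,then,else)`, which treats any non-empty expansion as true. The following Python sketch reproduces that command assembly for illustration. The function `pytest_command` is hypothetical and is not something the repository provides.

```python
import os
from typing import Mapping, Optional


def pytest_command(
    test_path: str,
    log_name: str,
    markers: Optional[str] = None,
    env: Optional[Mapping[str, str]] = None,
) -> list[str]:
    """Assemble the pytest invocation the way the Makefile targets do."""
    env = os.environ if env is None else env
    cmd = ["python", "-m", "pytest"]
    if markers:
        cmd += ["-m", markers]
    cmd.append(test_path)
    # Like $(if $(IS_GITHUB_CI),...): any non-empty value enables the flag.
    if env.get("IS_GITHUB_CI", ""):
        cmd += ["--report-log", log_name]
    return cmd


ci_cmd = pytest_command(
    "tests/test_common_gpu.py",
    "core_single_gpu.log",
    markers="single_gpu_tests and bitsandbytes",
    env={"IS_GITHUB_CI": "1"},
)
local_cmd = pytest_command(
    "tests/test_common_gpu.py",
    "core_single_gpu.log",
    markers="single_gpu_tests and bitsandbytes",
    env={},
)
print(" ".join(ci_cmd))
print(" ".join(local_cmd))
```

Because the check is only for a non-empty value, setting `IS_GITHUB_CI` to `0` would still enable the report log, both in this sketch and in the Make expression.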

398

README.md


@@ -19,23 +19,24 @@ limitations under the License.

<p>State-of-the-art Parameter-Efficient Fine-Tuning (PEFT) methods</p>

</h3>

Parameter-Efficient Fine-Tuning (PEFT) methods enable efficient adaptation of pre-trained language models (PLMs) to various downstream applications without fine-tuning all the model's parameters. Fine-tuning large-scale PLMs is often prohibitively costly. In this regard, PEFT methods only fine-tune a small number of (extra) model parameters, thereby greatly decreasing the computational and storage costs. Recent State-of-the-Art PEFT techniques achieve performance comparable to that of full fine-tuning.

Fine-tuning large pretrained models is often prohibitively costly due to their scale. Parameter-Efficient Fine-Tuning (PEFT) methods enable efficient adaptation of large pretrained models to various downstream applications by only fine-tuning a small number of (extra) model parameters instead of all the model's parameters. This significantly decreases the computational and storage costs. Recent state-of-the-art PEFT techniques achieve performance comparable to fully fine-tuned models.

Seamlessly integrated with 🤗 Accelerate for large scale models leveraging DeepSpeed and Big Model Inference.

PEFT is integrated with Transformers for easy model training and inference, Diffusers for conveniently managing different adapters, and Accelerate for distributed training and inference for really big models.

Supported methods:

> [!TIP]

> Visit the [PEFT](https://huggingface.co/PEFT) organization to read about the PEFT methods implemented in the library and to see notebooks demonstrating how to apply these methods to a variety of downstream tasks. Click the "Watch repos" button on the organization page to be notified of newly implemented methods and notebooks!

1. LoRA: [LORA: LOW-RANK ADAPTATION OF LARGE LANGUAGE MODELS](https://arxiv.org/abs/2106.09685)

2. Prefix Tuning: [Prefix-Tuning: Optimizing Continuous Prompts for Generation](https://aclanthology.org/2021.acl-long.353/), [P-Tuning v2: Prompt Tuning Can Be Comparable to Fine-tuning Universally Across Scales and Tasks](https://arxiv.org/pdf/2110.07602.pdf)

3. P-Tuning: [GPT Understands, Too](https://arxiv.org/abs/2103.10385)

4. Prompt Tuning: [The Power of Scale for Parameter-Efficient Prompt Tuning](https://arxiv.org/abs/2104.08691)

5. AdaLoRA: [Adaptive Budget Allocation for Parameter-Efficient Fine-Tuning](https://arxiv.org/abs/2303.10512)

6. $(IA)^3$: [Few-Shot Parameter-Efficient Fine-Tuning is Better and Cheaper than In-Context Learning](https://arxiv.org/abs/2205.05638)

7. MultiTask Prompt Tuning: [Multitask Prompt Tuning Enables Parameter-Efficient Transfer Learning](https://arxiv.org/abs/2303.02861)

8. LoHa: [FedPara: Low-Rank Hadamard Product for Communication-Efficient Federated Learning](https://arxiv.org/abs/2108.06098)

9. LoKr: [KronA: Parameter Efficient Tuning with Kronecker Adapter](https://arxiv.org/abs/2212.10650) based on [Navigating Text-To-Image Customization:From LyCORIS Fine-Tuning to Model Evaluation](https://arxiv.org/abs/2309.14859) implementation

Check the PEFT Adapters API Reference section for a list of supported PEFT methods, and read the [Adapters](https://huggingface.co/docs/peft/en/conceptual_guides/adapter), [Soft prompts](https://huggingface.co/docs/peft/en/conceptual_guides/prompting), and [IA3](https://huggingface.co/docs/peft/en/conceptual_guides/ia3) conceptual guides to learn more about how these methods work.

|

||||

|

||||

## Getting started

|

||||

## Quickstart

|

||||

|

||||

Install PEFT from pip:

|

||||

|

||||

```bash

|

||||

pip install peft

|

||||

```

|

||||

|

||||

Prepare a model for training with a PEFT method such as LoRA by wrapping the base model and PEFT configuration with `get_peft_model`. For the bigscience/mt0-large model, you're only training 0.19% of the parameters!

|

||||

|

||||

```python

|

||||

from transformers import AutoModelForSeq2SeqLM

|

||||

@ -50,17 +51,35 @@ peft_config = LoraConfig(

|

||||

model = AutoModelForSeq2SeqLM.from_pretrained(model_name_or_path)

|

||||

model = get_peft_model(model, peft_config)

|

||||

model.print_trainable_parameters()

|

||||

# output: trainable params: 2359296 || all params: 1231940608 || trainable%: 0.19151053100118282

|

||||

"trainable params: 2359296 || all params: 1231940608 || trainable%: 0.19151053100118282"

|

||||

```
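The trainable-parameter count printed above can be reproduced by hand. The sketch below rests on assumptions that the README does not state: that mt0-large has a hidden size of 1024 with 24 encoder and 24 decoder blocks, that LoRA uses rank `r=8`, and that it targets the query and value projections of every attention layer. Under those assumptions the totals match the printed figures.

```python
# Assumed (not stated in the README): mt0-large dimensions and LoRA settings.
hidden_size = 1024         # assumed input/output width of each q/v projection
rank = 8                   # assumed LoRA rank r
encoder_blocks = 24        # assumed; one self-attention layer per block
decoder_blocks = 24        # assumed; self-attention plus cross-attention per block
targets_per_attention = 2  # assumed targets: query and value projections

attention_layers = encoder_blocks + 2 * decoder_blocks
adapted_projections = attention_layers * targets_per_attention

# LoRA adds two matrices per adapted projection: A (rank x in) and B (out x rank).
params_per_projection = rank * hidden_size + hidden_size * rank
trainable_params = adapted_projections * params_per_projection

all_params = 1231940608    # "all params" figure from the printed output
trainable_percent = 100 * trainable_params / all_params

print(f"trainable params: {trainable_params}")
print(f"trainable%: {trainable_percent:.4f}")
```

The count scales linearly with the rank and with the number of adapted projections, so doubling `r` or targeting more modules doubles or further increases the trainable fraction accordingly.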

|

||||

|

||||

## Use Cases

|

||||

To load a PEFT model for inference:

|

||||

|

||||

### Get comparable performance to full finetuning by adapting LLMs to downstream tasks using consumer hardware

|

||||

```py

|

||||

from peft import AutoPeftModelForCausalLM

|

||||

from transformers import AutoTokenizer

|

||||

import torch

|

||||

|

||||

GPU memory required for adapting LLMs on the few-shot dataset [`ought/raft/twitter_complaints`](https://huggingface.co/datasets/ought/raft/viewer/twitter_complaints). Here, settings considered

|

||||

are full finetuning, PEFT-LoRA using plain PyTorch and PEFT-LoRA using DeepSpeed with CPU Offloading.

|

||||

model = AutoPeftModelForCausalLM.from_pretrained("ybelkada/opt-350m-lora").to("cuda")

|

||||

tokenizer = AutoTokenizer.from_pretrained("facebook/opt-350m")

|

||||

|

||||

Hardware: Single A100 80GB GPU with CPU RAM above 64GB

|

||||

model.eval()

|

||||

inputs = tokenizer("Preheat the oven to 350 degrees and place the cookie dough", return_tensors="pt")

|

||||

|

||||

outputs = model.generate(input_ids=inputs["input_ids"].to("cuda"), max_new_tokens=50)

|

||||

print(tokenizer.batch_decode(outputs, skip_special_tokens=True)[0])

|

||||

|

||||

"Preheat the oven to 350 degrees and place the cookie dough in the center of the oven. In a large bowl, combine the flour, baking powder, baking soda, salt, and cinnamon. In a separate bowl, combine the egg yolks, sugar, and vanilla."

|

||||

```

|

||||

|

||||

## Why you should use PEFT

|

||||

|

||||

There are many benefits of using PEFT but the main one is the huge savings in compute and storage, making PEFT applicable to many different use cases.

|

||||

|

||||

### High performance on consumer hardware

|

||||

|

||||

Consider the memory requirements for training the following models on the [ought/raft/twitter_complaints](https://huggingface.co/datasets/ought/raft/viewer/twitter_complaints) dataset with an A100 80GB GPU with more than 64GB of CPU RAM.

|

||||

|

||||

| Model | Full Finetuning | PEFT-LoRA PyTorch | PEFT-LoRA DeepSpeed with CPU Offloading |

|

||||

| --------- | ---- | ---- | ---- |

|

||||

@ -68,9 +87,7 @@ Hardware: Single A100 80GB GPU with CPU RAM above 64GB

|

||||

| bigscience/mt0-xxl (12B params) | OOM GPU | 56GB GPU / 3GB CPU | 22GB GPU / 52GB CPU |

|

||||

| bigscience/bloomz-7b1 (7B params) | OOM GPU | 32GB GPU / 3.8GB CPU | 18.1GB GPU / 35GB CPU |

|

||||

|

||||

Performance of PEFT-LoRA tuned [`bigscience/T0_3B`](https://huggingface.co/bigscience/T0_3B) on [`ought/raft/twitter_complaints`](https://huggingface.co/datasets/ought/raft/viewer/twitter_complaints) leaderboard.

|

||||

A point to note is that we didn't try to squeeze performance by playing around with input instruction templates, LoRA hyperparams and other training related hyperparams. Also, we didn't use the larger 13B [mt0-xxl](https://huggingface.co/bigscience/mt0-xxl) model.

|

||||

So, we are already seeing comparable performance to SoTA with parameter efficient tuning. Also, the final additional checkpoint size is just `19MB` in comparison to `11GB` size of the backbone [`bigscience/T0_3B`](https://huggingface.co/bigscience/T0_3B) model, but one still has to load the original full size model.

|

||||

With LoRA you can finetune a 12B parameter model that would otherwise have run out of memory on the 80GB GPU, and comfortably fit and train a 3B parameter model. The 3B parameter model's performance is comparable to that of a fully finetuned model at a fraction of the GPU memory.

|

||||

|

||||

| Submission Name | Accuracy |

|

||||

| --------- | ---- |

|

||||

@ -78,331 +95,58 @@ So, we are already seeing comparable performance to SoTA with parameter efficien

|

||||

| Flan-T5 | 0.892 |

|

||||

| lora-t0-3b | 0.863 |

|

||||

|

||||

**Therefore, we can see that performance comparable to SoTA is achievable by PEFT methods with consumer hardware such as 16GB and 24GB GPUs.**

|

||||

> [!TIP]

|

||||

> The bigscience/T0_3B model performance isn't optimized in the table above. You can squeeze even more performance out of it by playing around with the input instruction templates, LoRA hyperparameters, and other training related hyperparameters. The final checkpoint size of this model is just 19MB compared to 11GB of the full bigscience/T0_3B model. Learn more about the advantages of finetuning with PEFT in this [blog post](https://www.philschmid.de/fine-tune-flan-t5-peft).

|

||||

|

||||

An insightful blogpost explaining the advantages of using PEFT for fine-tuning FlanT5-XXL: [https://www.philschmid.de/fine-tune-flan-t5-peft](https://www.philschmid.de/fine-tune-flan-t5-peft)

|

||||

### Quantization

|

||||

|

||||

### Parameter Efficient Tuning of Diffusion Models

|

||||

Quantization is another method for reducing the memory requirements of a model by representing the data in a lower precision. It can be combined with PEFT methods to make it even easier to train and load LLMs for inference.

|

||||

|

||||

GPU memory required by different settings during training is given below. The final checkpoint size is `8.8 MB`.

|

||||

* Learn how to finetune [meta-llama/Llama-2-7b-hf](https://huggingface.co/meta-llama/Llama-2-7b-hf) with QLoRA and the [TRL](https://huggingface.co/docs/trl/index) library on a 16GB GPU in the [Finetune LLMs on your own consumer hardware using tools from PyTorch and Hugging Face ecosystem](https://pytorch.org/blog/finetune-llms/) blog post.

|

||||

* Learn how to finetune a [openai/whisper-large-v2](https://huggingface.co/openai/whisper-large-v2) model for multilingual automatic speech recognition with LoRA and 8-bit quantization in this [notebook](https://colab.research.google.com/drive/1DOkD_5OUjFa0r5Ik3SgywJLJtEo2qLxO?usp=sharing) (see this [notebook](https://colab.research.google.com/drive/1vhF8yueFqha3Y3CpTHN6q9EVcII9EYzs?usp=sharing) instead for an example of streaming a dataset).
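As a rough illustration of why lower precision helps, the sketch below estimates the memory needed to hold a model's weights at different bit widths. The 7B parameter count is a hypothetical example, and the estimate deliberately ignores activations, gradients, optimizer state, and any per-block overhead a real quantization scheme adds.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory needed to hold the weights alone, in GB (10**9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


num_params = 7e9  # hypothetical 7B-parameter model

# Compare common storage precisions for the same set of weights.
for label, bits in [("fp32", 32), ("fp16/bf16", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label:>9}: {weight_memory_gb(num_params, bits):.1f} GB")
```

Under these simplifications, each halving of the bit width halves the weight memory, which is what leaves room for the small set of trainable adapter parameters on a smaller GPU.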

|

||||

|

||||

Hardware: Single A100 80GB GPU with CPU RAM above 64GB

|

||||

### Save compute and storage

|

||||

|

||||

PEFT can help you save storage by avoiding full finetuning of a model for each downstream task or dataset. In many cases, you're only finetuning a very small fraction of a model's parameters and each checkpoint is only a few MBs in size (instead of GBs). These smaller PEFT adapters demonstrate performance comparable to a fully finetuned model. If you have many datasets, you can save a lot of storage with a PEFT model and not have to worry about catastrophic forgetting or overfitting the backbone or base model.
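To make the storage argument concrete, here is a small back-of-the-envelope sketch. It uses the 19MB adapter and 11GB base-model sizes quoted for bigscience/T0_3B in this README as defaults; the helper name `storage_gb` and the task count are hypothetical, and the same sizes are assumed for every task.

```python
def storage_gb(num_tasks: int, base_gb: float = 11.0, adapter_mb: float = 19.0):
    """Return (full finetuning, PEFT) storage in GB for `num_tasks` tasks."""
    # Full finetuning keeps one complete copy of the model per task.
    full = num_tasks * base_gb
    # PEFT keeps a single shared base model plus one small adapter per task.
    peft = base_gb + num_tasks * adapter_mb / 1000
    return full, peft


full, peft = storage_gb(num_tasks=10)
print(f"full finetuning: {full:.2f} GB, PEFT: {peft:.2f} GB")
```

The PEFT total is dominated by the single base model, so each additional task costs only one more adapter checkpoint.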

|

||||

|

||||

## PEFT integrations

|

||||

|

||||

PEFT is widely supported across the Hugging Face ecosystem because of the massive efficiency it brings to training and inference.

|

||||

|

||||

### Diffusers

|

||||

|

||||

The iterative diffusion process consumes a lot of memory which can make it difficult to train. PEFT can help reduce the memory requirements and reduce the storage size of the final model checkpoint. For example, consider the memory required for training a Stable Diffusion model with LoRA on an A100 80GB GPU with more than 64GB of CPU RAM. The final model checkpoint size is only 8.8MB!

|

||||

|

||||

| Model | Full Finetuning | PEFT-LoRA | PEFT-LoRA with Gradient Checkpointing |

|

||||

| --------- | ---- | ---- | ---- |

|

||||

| CompVis/stable-diffusion-v1-4 | 27.5GB GPU / 3.97GB CPU | 15.5GB GPU / 3.84GB CPU | 8.12GB GPU / 3.77GB CPU |

|

||||

|

||||

> [!TIP]

|

||||

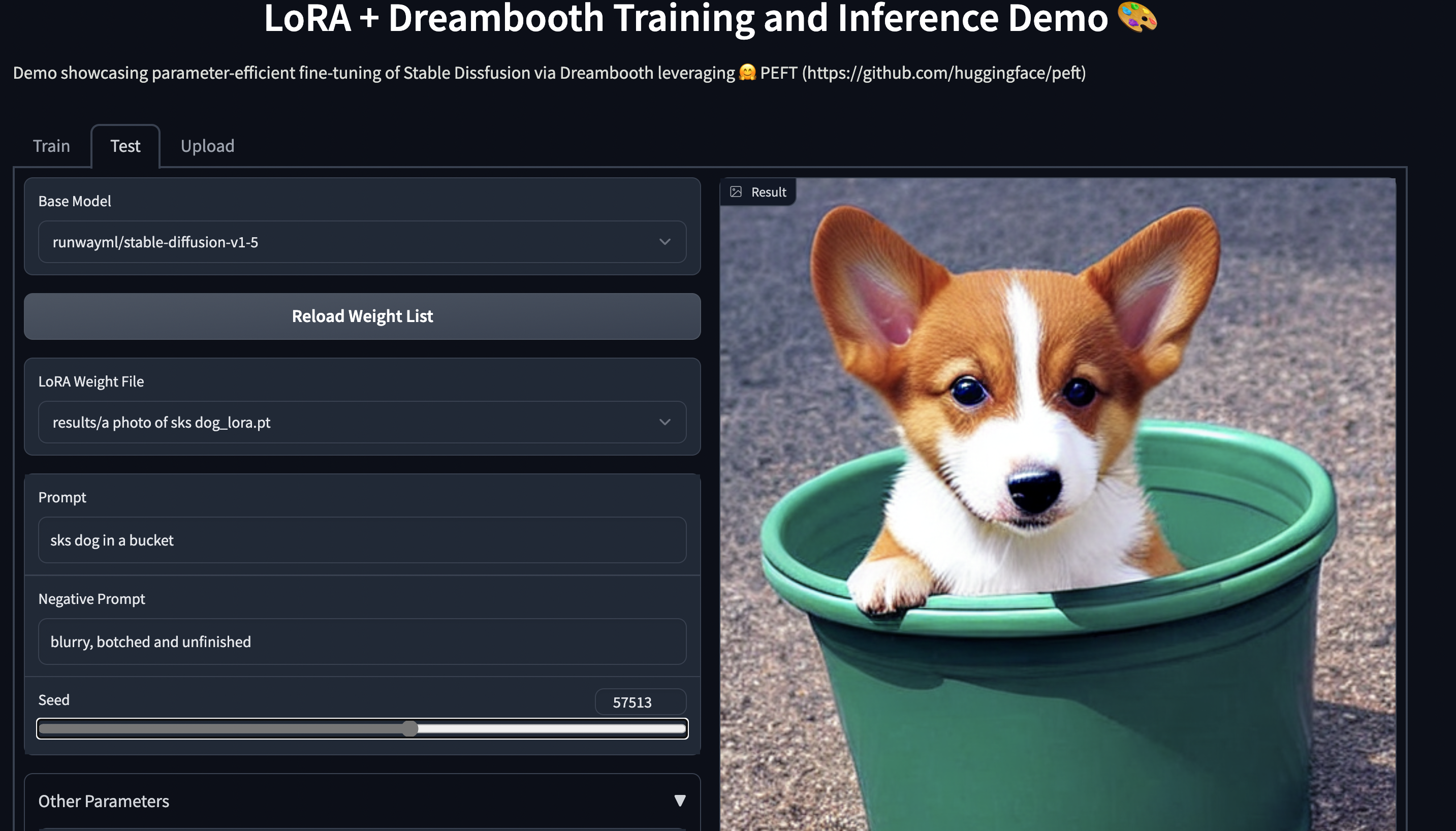

> Take a look at the [examples/lora_dreambooth/train_dreambooth.py](examples/lora_dreambooth/train_dreambooth.py) training script to try training your own Stable Diffusion model with LoRA, and play around with the [smangrul/peft-lora-sd-dreambooth](https://huggingface.co/spaces/smangrul/peft-lora-sd-dreambooth) Space which is running on a T4 instance. Learn more about the PEFT integration in Diffusers in this [tutorial](https://huggingface.co/docs/peft/main/en/tutorial/peft_integrations#diffusers).

|

||||

|

||||

**Training**

|

||||

An example of using LoRA for parameter efficient dreambooth training is given in [`examples/lora_dreambooth/train_dreambooth.py`](examples/lora_dreambooth/train_dreambooth.py)

|

||||

### Accelerate

|

||||

|

||||

```bash

|

||||

export MODEL_NAME="CompVis/stable-diffusion-v1-4" #"stabilityai/stable-diffusion-2-1"

|

||||

export INSTANCE_DIR="path-to-instance-images"

|

||||

export CLASS_DIR="path-to-class-images"

|

||||

export OUTPUT_DIR="path-to-save-model"

|

||||

[Accelerate](https://huggingface.co/docs/accelerate/index) is a library for distributed training and inference on various training setups and hardware (GPUs, TPUs, Apple Silicon, etc.). PEFT models work with Accelerate out of the box, making it really convenient to train really large models or use them for inference on consumer hardware with limited resources.

|

||||

|

||||

accelerate launch train_dreambooth.py \

|

||||

--pretrained_model_name_or_path=$MODEL_NAME \

|

||||

--instance_data_dir=$INSTANCE_DIR \

|

||||

--class_data_dir=$CLASS_DIR \

|

||||

--output_dir=$OUTPUT_DIR \

|

||||

--train_text_encoder \

|

||||

--with_prior_preservation --prior_loss_weight=1.0 \

|

||||

--instance_prompt="a photo of sks dog" \

|

||||

--class_prompt="a photo of dog" \

|

||||

--resolution=512 \

|

||||

--train_batch_size=1 \

|

||||

--lr_scheduler="constant" \

|

||||

--lr_warmup_steps=0 \

|

||||

--num_class_images=200 \

|

||||

--use_lora \

|

||||

--lora_r 16 \

|

||||

--lora_alpha 27 \

|

||||

--lora_text_encoder_r 16 \

|

||||

--lora_text_encoder_alpha 17 \

|

||||

--learning_rate=1e-4 \

|

||||

--gradient_accumulation_steps=1 \

|

||||

--gradient_checkpointing \

|

||||

--max_train_steps=800

|

||||

```

|

||||

### TRL

|

||||

|

||||

Try out the 🤗 Gradio Space which should run seamlessly on a T4 instance:

|

||||

[smangrul/peft-lora-sd-dreambooth](https://huggingface.co/spaces/smangrul/peft-lora-sd-dreambooth).

|

||||

PEFT can also be applied to training LLMs with RLHF components such as the ranker and policy. Get started by reading:

|

||||

|

||||

|

||||

* [Fine-tune a Mistral-7b model with Direct Preference Optimization](https://towardsdatascience.com/fine-tune-a-mistral-7b-model-with-direct-preference-optimization-708042745aac) with PEFT and the [TRL](https://huggingface.co/docs/trl/index) library to learn more about the Direct Preference Optimization (DPO) method and how to apply it to a LLM.

|

||||

* [Fine-tuning 20B LLMs with RLHF on a 24GB consumer GPU](https://huggingface.co/blog/trl-peft) with PEFT and the [TRL](https://huggingface.co/docs/trl/index) library, and then try out the [gpt2-sentiment_peft.ipynb](https://github.com/huggingface/trl/blob/main/examples/notebooks/gpt2-sentiment.ipynb) notebook to optimize GPT2 to generate positive movie reviews.

|

||||

* [StackLLaMA: A hands-on guide to train LLaMA with RLHF](https://huggingface.co/blog/stackllama) with PEFT, and then try out the [stack_llama/scripts](https://github.com/huggingface/trl/tree/main/examples/research_projects/stack_llama/scripts) for supervised finetuning, reward modeling, and RL finetuning.

|

||||

|

||||

**NEW** ✨ Multi Adapter support and combining multiple LoRA adapters in a weighted combination

|

||||

|

||||

## Model support

|

||||

|

||||

**NEW** ✨ Dreambooth training for Stable Diffusion using LoHa and LoKr adapters [`examples/stable_diffusion/train_dreambooth.py`](examples/stable_diffusion/train_dreambooth.py)

|

||||

Use this [Space](https://stevhliu-peft-methods.hf.space) or check out the [docs](https://huggingface.co/docs/peft/main/en/index) to find which models officially support a PEFT method out of the box. Even if you don't see a model listed below, you can manually configure the model config to enable PEFT for a model. Read the [New transformers architecture](https://huggingface.co/docs/peft/main/en/developer_guides/custom_models#new-transformers-architectures) guide to learn how.

|

||||

|

||||

### Parameter Efficient Tuning of LLMs for RLHF components such as Ranker and Policy

|

||||

- Here is an example in [trl](https://github.com/lvwerra/trl) library using PEFT+INT8 for tuning policy model: [gpt2-sentiment_peft.py](https://github.com/lvwerra/trl/blob/main/examples/sentiment/scripts/gpt2-sentiment_peft.py) and corresponding [Blog](https://huggingface.co/blog/trl-peft)

|

||||

- Example using PEFT for Instruction finetuning, reward model and policy : [stack_llama](https://github.com/lvwerra/trl/tree/main/examples/research_projects/stack_llama/scripts) and corresponding [Blog](https://huggingface.co/blog/stackllama)

|

||||

## Contribute

|

||||

|

||||

### INT8 training of large models in Colab using PEFT LoRA and bits_and_bytes

|

||||

|

||||

- Here is a demo on how to fine-tune [OPT-6.7b](https://huggingface.co/facebook/opt-6.7b) (14GB in fp16) in Google Colab: [Open in Colab](https://colab.research.google.com/drive/1jCkpikz0J2o20FBQmYmAGdiKmJGOMo-o?usp=sharing)

|

||||

|

||||

- Here is a demo on how to fine-tune [whisper-large](https://huggingface.co/openai/whisper-large-v2) (1.5B params) (14GB in fp16) in Google Colab: [Open in Colab](https://colab.research.google.com/drive/1DOkD_5OUjFa0r5Ik3SgywJLJtEo2qLxO?usp=sharing) and [Open in Colab](https://colab.research.google.com/drive/1vhF8yueFqha3Y3CpTHN6q9EVcII9EYzs?usp=sharing)

|

||||

|

||||

### Save compute and storage even for medium and small models

|

||||

|

||||

Save storage by avoiding full finetuning of models on each of the downstream tasks/datasets,

|

||||

With PEFT methods, users only need to store tiny checkpoints in the order of `MBs` all the while retaining

|

||||

performance comparable to full finetuning.

|

||||

|

||||

An example of using LoRA for the task of adapting `LayoutLMForTokenClassification` on `FUNSD` dataset is given in `~examples/token_classification/PEFT_LoRA_LayoutLMForTokenClassification_on_FUNSD.py`. We can observe that with only `0.62 %` of parameters being trainable, we achieve performance (F1 0.777) comparable to full finetuning (F1 0.786) (without any hyperparam tuning runs for extracting more performance), and the checkpoint of this is only `2.8MB`. Now, if there are `N` such datasets, just have these PEFT models one for each dataset and save a lot of storage without having to worry about the problem of catastrophic forgetting or overfitting of backbone/base model.

|

||||

|

||||

Another example is fine-tuning [`roberta-large`](https://huggingface.co/roberta-large) on [`MRPC` GLUE](https://huggingface.co/datasets/glue/viewer/mrpc) dataset using different PEFT methods. The notebooks are given in `~examples/sequence_classification`.

|

||||

|

||||

|

||||

## PEFT + 🤗 Accelerate

|

||||

|

||||

PEFT models work with 🤗 Accelerate out of the box. Use 🤗 Accelerate for distributed training on various hardware such as GPUs and Apple Silicon devices.

|

||||

Use 🤗 Accelerate for inference on consumer hardware with limited resources.

|

||||

|

||||

### Example of PEFT model training using 🤗 Accelerate's DeepSpeed integration

|

||||

|

||||

DeepSpeed version required `v0.8.0`. An example is provided in `~examples/conditional_generation/peft_lora_seq2seq_accelerate_ds_zero3_offload.py`.

|

||||

a. First, run `accelerate config --config_file ds_zero3_cpu.yaml` and answer the questionnaire.

|

||||

Below are the contents of the config file.

|

||||

```yaml

|

||||

compute_environment: LOCAL_MACHINE

|

||||

deepspeed_config:

|

||||

gradient_accumulation_steps: 1

|

||||

gradient_clipping: 1.0

|

||||

offload_optimizer_device: cpu

|

||||

offload_param_device: cpu

|

||||

zero3_init_flag: true

|

||||

zero3_save_16bit_model: true

|

||||

zero_stage: 3

|

||||

distributed_type: DEEPSPEED

|

||||

downcast_bf16: 'no'

|

||||

dynamo_backend: 'NO'

|

||||

fsdp_config: {}

|

||||

machine_rank: 0

|

||||

main_training_function: main

|

||||

megatron_lm_config: {}

|

||||

mixed_precision: 'no'

|

||||

num_machines: 1

|

||||

num_processes: 1

|

||||

rdzv_backend: static

|

||||

same_network: true

|

||||

use_cpu: false

|

||||

```

|

||||

b. Run the command below to launch the example script.

|

||||

```bash

|

||||

accelerate launch --config_file ds_zero3_cpu.yaml examples/peft_lora_seq2seq_accelerate_ds_zero3_offload.py

|

||||

```

|

||||

|

||||

c. output logs:

|

||||

```bash

|

||||

GPU Memory before entering the train : 1916

|

||||

GPU Memory consumed at the end of the train (end-begin): 66

|

||||

GPU Peak Memory consumed during the train (max-begin): 7488

|

||||

GPU Total Peak Memory consumed during the train (max): 9404

|

||||

CPU Memory before entering the train : 19411

|

||||

CPU Memory consumed at the end of the train (end-begin): 0

|

||||

CPU Peak Memory consumed during the train (max-begin): 0

|

||||

CPU Total Peak Memory consumed during the train (max): 19411

|

||||

epoch=4: train_ppl=tensor(1.0705, device='cuda:0') train_epoch_loss=tensor(0.0681, device='cuda:0')

|

||||

100%|████████████████████████████████████████████████████████████████████████████████████████████| 7/7 [00:27<00:00, 3.92s/it]

|

||||

GPU Memory before entering the eval : 1982

|

||||

GPU Memory consumed at the end of the eval (end-begin): -66

|

||||

GPU Peak Memory consumed during the eval (max-begin): 672

|

||||

GPU Total Peak Memory consumed during the eval (max): 2654

|

||||

CPU Memory before entering the eval : 19411

|

||||

CPU Memory consumed at the end of the eval (end-begin): 0

|

||||

CPU Peak Memory consumed during the eval (max-begin): 0

|

||||

CPU Total Peak Memory consumed during the eval (max): 19411

|

||||

accuracy=100.0

|

||||

eval_preds[:10]=['no complaint', 'no complaint', 'complaint', 'complaint', 'no complaint', 'no complaint', 'no complaint', 'complaint', 'complaint', 'no complaint']

|

||||

dataset['train'][label_column][:10]=['no complaint', 'no complaint', 'complaint', 'complaint', 'no complaint', 'no complaint', 'no complaint', 'complaint', 'complaint', 'no complaint']

|

||||

```
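The figures in the log above are internally consistent, which a short check makes visible. This sketch assumes that the script reports perplexity as the exponential of the mean epoch loss and that each "Total Peak" value is the starting memory plus the peak increase; the variable names are illustrative, and only the numbers are taken from the log.

```python
import math

# Values copied from the log above.
train_epoch_loss = 0.0681
train_begin, train_peak_delta = 1916, 7488
eval_begin, eval_peak_delta = 1982, 672

# Assumed relation: perplexity is exp(mean cross-entropy loss).
train_ppl = math.exp(train_epoch_loss)

# Assumed relation: total peak = memory before the phase + peak increase.
train_total_peak = train_begin + train_peak_delta
eval_total_peak = eval_begin + eval_peak_delta

print(f"train_ppl={train_ppl:.4f}")
print(f"train total peak={train_total_peak}, eval total peak={eval_total_peak}")
```

Both relations reproduce the logged perplexity and the logged GPU totals for training and evaluation.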

|

||||

|

||||

### Example of PEFT model inference using 🤗 Accelerate's Big Model Inferencing capabilities

|

||||

An example is provided in `~examples/causal_language_modeling/peft_lora_clm_accelerate_big_model_inference.ipynb`.

|

||||

|

||||

|

||||

## Models support matrix

|

||||

|

||||

### Causal Language Modeling

|

||||

| Model | LoRA | Prefix Tuning | P-Tuning | Prompt Tuning | IA3 |

|

||||

|--------------| ---- | ---- | ---- | ---- | ---- |

|

||||

| GPT-2 | ✅ | ✅ | ✅ | ✅ | ✅ |

|

||||

| Bloom | ✅ | ✅ | ✅ | ✅ | ✅ |

|

||||

| OPT | ✅ | ✅ | ✅ | ✅ | ✅ |

|

||||

| GPT-Neo | ✅ | ✅ | ✅ | ✅ | ✅ |

|

||||

| GPT-J | ✅ | ✅ | ✅ | ✅ | ✅ |

|

||||

| GPT-NeoX-20B | ✅ | ✅ | ✅ | ✅ | ✅ |

|

||||

| LLaMA | ✅ | ✅ | ✅ | ✅ | ✅ |

|

||||

| ChatGLM | ✅ | ✅ | ✅ | ✅ | ✅ |

|

||||

|

||||

### Conditional Generation

|

||||

| Model | LoRA | Prefix Tuning | P-Tuning | Prompt Tuning | IA3 |

|

||||

| --------- | ---- | ---- | ---- | ---- | ---- |

|

||||

| T5 | ✅ | ✅ | ✅ | ✅ | ✅ |

|

||||

| BART | ✅ | ✅ | ✅ | ✅ | ✅ |

|

||||

|

||||

### Sequence Classification

|

||||

| Model | LoRA | Prefix Tuning | P-Tuning | Prompt Tuning | IA3 |

|

||||

| --------- | ---- | ---- | ---- | ---- | ---- |

|

||||

| BERT | ✅ | ✅ | ✅ | ✅ | ✅ |

|

||||

| RoBERTa | ✅ | ✅ | ✅ | ✅ | ✅ |

|

||||

| GPT-2 | ✅ | ✅ | ✅ | ✅ | |

|

||||

| Bloom | ✅ | ✅ | ✅ | ✅ | |

|

||||

| OPT | ✅ | ✅ | ✅ | ✅ | |

|

||||

| GPT-Neo | ✅ | ✅ | ✅ | ✅ | |

|

||||

| GPT-J | ✅ | ✅ | ✅ | ✅ | |

|

||||

| Deberta | ✅ | | ✅ | ✅ | |

|

||||

| Deberta-v2 | ✅ | | ✅ | ✅ | |

|

||||

|

||||

### Token Classification

|

||||

| Model | LoRA | Prefix Tuning | P-Tuning | Prompt Tuning | IA3 |

|

||||

| --------- | ---- | ---- | ---- | ---- | ---- |

|

||||

| BERT | ✅ | ✅ | | | |

|

||||

| RoBERTa | ✅ | ✅ | | | |

|

||||

| GPT-2 | ✅ | ✅ | | | |

|

||||

| Bloom | ✅ | ✅ | | | |

|

||||

| OPT | ✅ | ✅ | | | |

|

||||

| GPT-Neo | ✅ | ✅ | | | |

|

||||

| GPT-J | ✅ | ✅ | | | |

|

||||

| Deberta | ✅ | | | | |

|

||||

| Deberta-v2 | ✅ | | | | |

|

||||

|

||||

### Text-to-Image Generation

|

||||

|

||||

| Model | LoRA | LoHa | LoKr | Prefix Tuning | P-Tuning | Prompt Tuning | IA3 |

|

||||

| --------- | ---- | ---- | ---- | ---- | ---- | ---- | ---- |

|

||||

| Stable Diffusion | ✅ | ✅ | ✅ | | | | |

|

||||

|

||||

|

||||

### Image Classification

|

||||

|

||||

| Model | LoRA | Prefix Tuning | P-Tuning | Prompt Tuning | IA3 |

|

||||

| --------- | ---- | ---- | ---- | ---- | ---- |

|

||||

| ViT | ✅ | | | | |

|

||||

| Swin | ✅ | | | | |

|

||||

|

||||

### Image to text (Multi-modal models)

|

||||

|

||||

| Model | LoRA | Prefix Tuning | P-Tuning | Prompt Tuning | IA3 |

|

||||

| --------- | ---- | ---- | ---- | ---- | ---- |

|

||||

| Blip-2 | ✅ | | | | |

|

||||

|

||||

___Note that we have tested LoRA for [ViT](https://huggingface.co/docs/transformers/model_doc/vit) and [Swin](https://huggingface.co/docs/transformers/model_doc/swin) for fine-tuning on image classification. However, it should be possible to use LoRA for any compatible model [provided](https://huggingface.co/models?pipeline_tag=image-classification&sort=downloads&search=vit) by 🤗 Transformers. Check out the respective

|

||||

examples to learn more. If you run into problems, please open an issue.___

|

||||

|

||||

The same principle applies to our [segmentation models](https://huggingface.co/models?pipeline_tag=image-segmentation&sort=downloads) as well.

|

||||

|

||||

### Semantic Segmentation

|

||||

|

||||

| Model | LoRA | Prefix Tuning | P-Tuning | Prompt Tuning | IA3 |

|

||||

| --------- | ---- | ---- | ---- | ---- | ---- |

|

||||

| SegFormer | ✅ | | | | |

|

||||

|

||||

|

||||

## Caveats:

|

||||

|

||||

1. Below is an example of using PyTorch FSDP for training. However, it doesn't lead to

|

||||

any GPU memory savings. Please refer to the issue [[FSDP] FSDP with CPU offload consumes 1.65X more GPU memory when training models with most of the params frozen](https://github.com/pytorch/pytorch/issues/91165).

|

||||

|

||||

```python

|

||||

from peft.utils.other import fsdp_auto_wrap_policy

|

||||

|

||||

...

|

||||

|

||||

if os.environ.get("ACCELERATE_USE_FSDP", None) is not None:

|

||||

accelerator.state.fsdp_plugin.auto_wrap_policy = fsdp_auto_wrap_policy(model)

|

||||

|

||||

model = accelerator.prepare(model)

|

||||

```

|

||||

|

||||

Example of parameter efficient tuning with [`mt0-xxl`](https://huggingface.co/bigscience/mt0-xxl) base model using 🤗 Accelerate is provided in `~examples/conditional_generation/peft_lora_seq2seq_accelerate_fsdp.py`.

|

||||

a. First, run `accelerate config --config_file fsdp_config.yaml` and answer the questionnaire.

|

||||

Below are the contents of the config file.

|

||||

```yaml

|

||||

command_file: null

|

||||

commands: null

|

||||

compute_environment: LOCAL_MACHINE

|

||||

deepspeed_config: {}

|

||||

distributed_type: FSDP

|

||||

downcast_bf16: 'no'

|

||||

dynamo_backend: 'NO'

|

||||

fsdp_config:

|

||||

fsdp_auto_wrap_policy: TRANSFORMER_BASED_WRAP

|

||||

fsdp_backward_prefetch_policy: BACKWARD_PRE

|

||||

fsdp_offload_params: true

|

||||

fsdp_sharding_strategy: 1

|

||||

fsdp_state_dict_type: FULL_STATE_DICT

|

||||

fsdp_transformer_layer_cls_to_wrap: T5Block

|

||||

gpu_ids: null

|

||||

machine_rank: 0

|

||||

main_process_ip: null

|

||||

main_process_port: null

|

||||

main_training_function: main

|

||||

megatron_lm_config: {}

|

||||

mixed_precision: 'no'

|

||||

num_machines: 1

|

||||

num_processes: 2

|

||||

rdzv_backend: static

|

||||

same_network: true

|

||||

tpu_name: null

|

||||

tpu_zone: null

|

||||

use_cpu: false

|

||||

```

|

||||

b. Run the command below to launch the example script.

|

||||

```bash

|

||||

accelerate launch --config_file fsdp_config.yaml examples/peft_lora_seq2seq_accelerate_fsdp.py

|

||||

```

|

||||

|

||||

2. When using ZeRO3 with `zero3_init_flag=True`, if you find that GPU memory increases with training steps, you might need to update DeepSpeed to a version that includes [deepspeed commit 42858a9891422abc](https://github.com/microsoft/DeepSpeed/commit/42858a9891422abcecaa12c1bd432d28d33eb0d4). The related issue is [[BUG] Peft Training with Zero.Init() and Zero3 will increase GPU memory every forward step](https://github.com/microsoft/DeepSpeed/issues/3002).

|

||||

|

||||

## 🤗 PEFT as a utility library

|

||||

|

||||

Inject trainable adapters into any `torch` model using the `inject_adapter_in_model` method. Note that the method makes no further changes to the model.

|

||||

|

||||

```python

|

||||

import torch

|

||||

from peft import inject_adapter_in_model, LoraConfig

|

||||

|

||||

class DummyModel(torch.nn.Module):

|

||||

def __init__(self):

|

||||

super().__init__()

|

||||

self.embedding = torch.nn.Embedding(10, 10)

|

||||

self.linear = torch.nn.Linear(10, 10)

|

||||

self.lm_head = torch.nn.Linear(10, 10)

|

||||

|

||||

def forward(self, input_ids):

|

||||

x = self.embedding(input_ids)

|

||||

x = self.linear(x)